Introduction

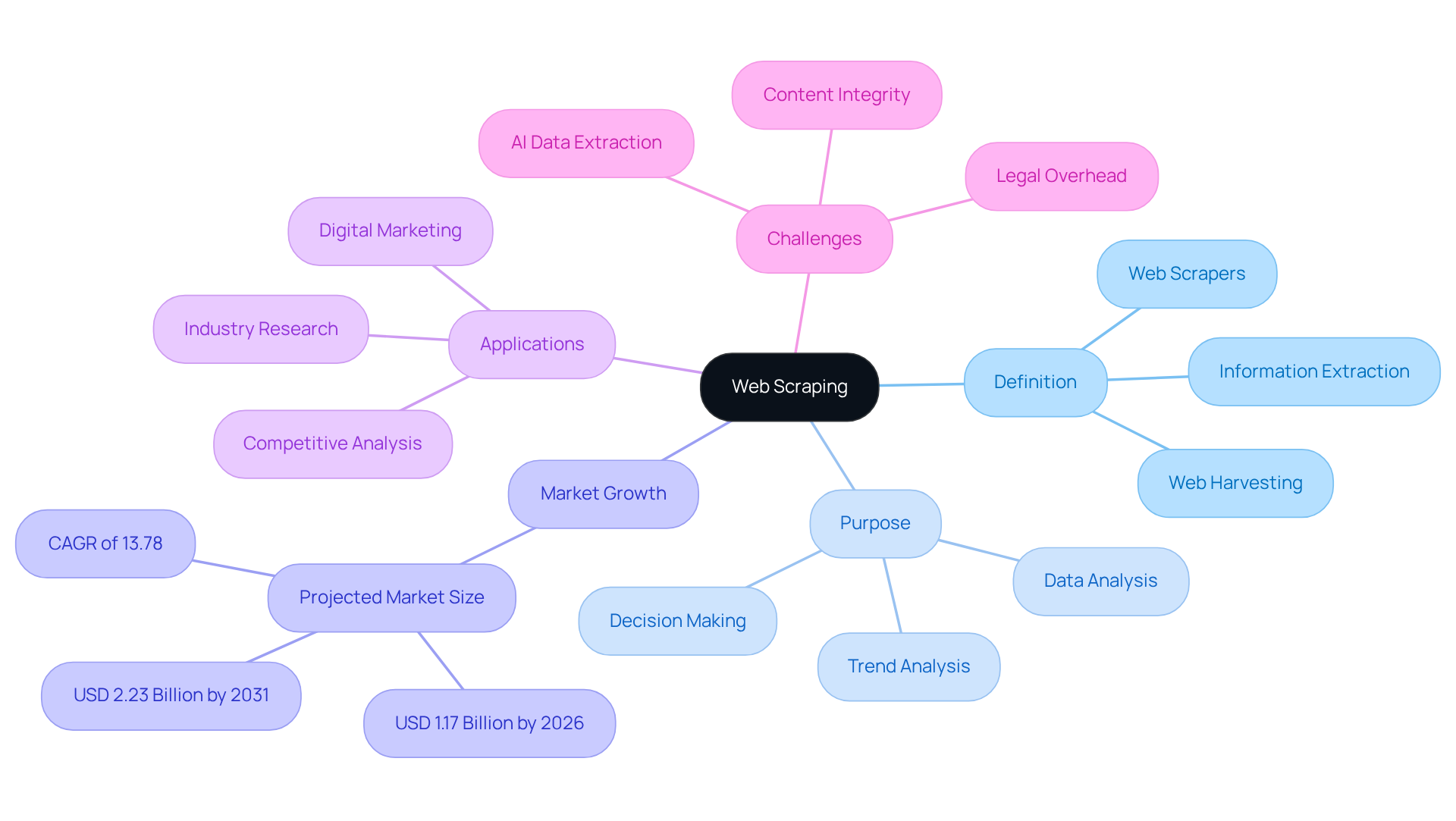

Web scraping has emerged as a significant force in the digital landscape, enabling the automated extraction of valuable data from websites. This powerful technique streamlines information gathering and empowers businesses and researchers to derive actionable insights from the vast ocean of online content.

However, as the demand for data-driven decision-making grows, complexities surrounding the ethical and legal implications of web scraping also increase. Key challenges arise when balancing the pursuit of information with the necessity of adhering to privacy laws and copyright regulations.

- Ethical Considerations: Understanding the moral implications of data extraction.

- Legal Compliance: Navigating privacy laws and copyright regulations.

- Data Integrity: Ensuring the accuracy and reliability of extracted data.

Define Web Scraping and Its Purpose

Web scraping, also known as web harvesting or web information extraction, is the automated process of collecting information from websites using specialised software tools called web scrapers. A web scraper navigates web pages, extracts the relevant information, and converts it into structured formats such as spreadsheets or databases.
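The navigate, extract, and convert cycle described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the product-listing markup (`<li class="product">` items with `name` and `price` spans) is hypothetical, the fetching step is skipped by parsing an inline HTML string, and the standard library's `html.parser` and `csv` modules stand in for dedicated tools such as Beautiful Soup.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical page content; a real scraper would first fetch this over HTTP.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Desk lamp</span><span class="price">24.99</span></li>
  <li class="product"><span class="name">Office chair</span><span class="price">149.00</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Walks the markup and collects one {'name', 'price'} record per product item."""

    def __init__(self):
        super().__init__()
        self.records = []
        self._current = None  # record for the currently open <li class="product">
        self._field = None    # "name" or "price" while inside the matching <span>

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self._current = {}
        elif tag == "span" and self._current is not None and css_class in ("name", "price"):
            self._field = css_class

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current is not None:
            self.records.append(self._current)
            self._current = None

    def handle_data(self, data):
        # Only keep text that sits inside a field we care about.
        if self._field is not None:
            self._current[self._field] = data.strip()


def to_csv(records):
    """Serialise the extracted records as CSV text, ready for a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


parser = ProductParser()
parser.feed(SAMPLE_HTML)
parser.close()
print(to_csv(parser.records))
```

The event-driven parser keeps only a little state (which item and which field are open), so it scales to long pages; the CSV step is what turns loose page text into a structured dataset.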

The primary purpose of web scraping is to efficiently gather substantial amounts of information, enabling companies and researchers to analyse trends, conduct industry research, and make informed decisions based on real-time insights. The web data extraction market is projected to reach USD 1.17 billion in 2026, growing at a CAGR of 13.78% to USD 2.23 billion by 2031. This growth underscores the increasing relevance of web scraping.
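The market figures above can be checked against the standard compound annual growth rate formula, CAGR = (end value / start value)^(1 / years) - 1. The sketch below uses only the numbers quoted in the text (USD 1.17 billion in 2026, USD 2.23 billion in 2031, so five compounding years):

```python
# Figures quoted in the text (USD billions); 2026 -> 2031 spans five years.
start_value = 1.17
end_value = 2.23
years = 2031 - 2026

# Compound annual growth rate implied by the two endpoints.
implied_cagr = (end_value / start_value) ** (1 / years) - 1

# Forward projection using the quoted 13.78% rate.
projected_2031 = start_value * (1 + 0.1378) ** years

print(f"implied CAGR: {implied_cagr:.2%}")             # about 13.77%, i.e. 13.78% up to rounding
print(f"projected 2031 value: {projected_2031:.2f}")   # about 2.23
```

The small gap between 13.77% and 13.78% comes from the endpoints being rounded to two decimal places.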

By transforming unstructured web content into organised datasets, web scraping significantly enhances the efficiency and accuracy of analysis, making it an essential tool across various sectors. Data analysts assert that understanding what a web scraper does can lead to improved business decision-making, as it provides critical insights that drive strategic initiatives and competitive advantage.

Moreover, the use of enterprise-class private proxy servers, such as those offered by Appstractor, ensures secure and reliable information gathering. This is crucial for maintaining information integrity and adaptability in data mining. Appstractor provides various proxy solutions, including rotating proxies for self-serve IPs and full-service options for turnkey information delivery, catering to the diverse needs of digital marketing professionals.
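Rotating proxies spread requests across several IP addresses. The idea can be sketched with Python's `urllib.request`. This is a minimal sketch and not Appstractor's API: the proxy hostnames are hypothetical placeholders, and rotation is a simple client-side round-robin (some providers instead expose a single gateway endpoint that rotates IPs on their side).

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints; a provider would supply real hosts and credentials.
PROXIES = [
    "http://proxy-a.example:8080",
    "http://proxy-b.example:8080",
    "http://proxy-c.example:8080",
]


class RotatingProxyOpener:
    """Hands out a urllib opener routed through the next proxy in round-robin order."""

    def __init__(self, proxies):
        self._cycle = itertools.cycle(proxies)

    def next_opener(self):
        proxy = next(self._cycle)
        # Route both plain HTTP and HTTPS (via CONNECT) traffic through the chosen proxy.
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return proxy, urllib.request.build_opener(handler)


rotator = RotatingProxyOpener(PROXIES)
proxy, opener = rotator.next_opener()
# response = opener.open("https://example.com/", timeout=10)  # real request, not run here
```

Building a fresh opener per request keeps each request tied to exactly one proxy, which makes it easy to log or retire a misbehaving endpoint.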

The emergence of AI data extraction poses challenges for publishers, necessitating robust web collection strategies to maintain competitive positioning.

Explore Techniques and Methods of Web Scraping

Web scraping techniques can be broadly classified into several methods, each suited to particular extraction tasks. The most common techniques include:

- HTML Parsing: This method involves retrieving the HTML content of a web page and using libraries like Beautiful Soup or lxml in Python to parse the HTML and extract the required information. In 2026, advances in AI-driven extraction are allowing for quicker setup and reduced maintenance. Some tools, particularly in the real estate sector, provide listing change alerts that help users stay updated with minimal manual intervention.

- DOM Parsing: Similar to HTML parsing, this technique focuses on navigating the Document Object Model (DOM) of a web page to locate and extract specific elements. As websites become more dynamic, working with the DOM becomes increasingly important for information extraction, and tools that adapt to layout changes help keep extraction dependable as web environments evolve.

- API Access: Numerous websites provide APIs that enable structured information access. Using APIs is often more reliable than conventional scraping techniques, as they deliver information in a predefined format, reducing the risk of errors caused by web page modifications. For instance, integrating APIs can simplify information collection, making it easier for digital marketing specialists to access real-time insights. Appstractor supports seamless API integration, enhancing the overall information management experience.

- Regular Expressions: This technique uses patterns to recognise and retrieve specific data points from text, making it particularly effective for extracting details from unstructured content. Combined with other techniques, regular expressions can improve the precision of information extraction, especially where content is not consistently formatted.

- Headless Browsers: Tools such as Puppeteer or Selenium simulate user interactions with web pages, allowing scrapers to gather information from dynamic websites that rely on JavaScript. In 2026, a shift towards real (non-headless) browsers is being driven by the need for better reliability and success rates in scraping tasks, as contemporary anti-bot systems become more advanced. A proxy network featuring a global self-repairing IP pool can help maintain continuous uptime and efficient information extraction.
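To illustrate how DOM-style navigation and regular expressions can work together, here is a minimal Python sketch. It assumes the page is well-formed XHTML so that the standard library's `xml.etree.ElementTree` can build an element tree; ElementTree is a lightweight tree rather than a full W3C DOM, and real-world HTML is often malformed, which is why tolerant parsers such as lxml or Beautiful Soup are normally used. The markup, the `listing` class name, and the price format are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical, well-formed XHTML snippet; real pages usually need a tolerant HTML parser.
PAGE = """
<html><body>
  <div class="listing">
    <h2>2-bed flat, city centre</h2>
    <p>Now only $1,250 per month (was $1,400)</p>
  </div>
  <div class="listing">
    <h2>Studio near station</h2>
    <p>Rent: $875 per month, bills included</p>
  </div>
</body></html>
"""

# Matches amounts such as "$1,250" or "$875" and captures the digits and commas.
PRICE_PATTERN = re.compile(r"\$([\d,]+)")


def extract_listings(xhtml):
    """Navigate the element tree to each listing, then use a regex to pull out its price."""
    root = ET.fromstring(xhtml.strip())
    results = []
    # Tree navigation: every <div class="listing"> anywhere below the root.
    for div in root.iterfind(".//div[@class='listing']"):
        title = div.findtext("h2", default="").strip()
        description = div.findtext("p", default="")
        # Regex step: the first price mentioned in the free-form text.
        match = PRICE_PATTERN.search(description)
        price = int(match.group(1).replace(",", "")) if match else None
        results.append({"title": title, "price": price})
    return results


for listing in extract_listings(PAGE):
    print(listing)
```

The tree query narrows the search to the right elements, and the regex handles the inconsistent wording inside them; taking only the first match is an assumption that the current price is mentioned before any older one.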

As the web scraping landscape evolves, newer tooling is allowing teams to adapt to changing web environments more effectively. Furthermore, managed services are gaining momentum as organisations seek to ease the burden of maintaining collection infrastructure while ensuring adherence to privacy standards and optimising their collection strategies. Appstractor's commitment to GDPR compliance and compensation benchmarking further enhances its appeal to companies seeking trustworthy and ethical data collection solutions.

Highlight Applications and Use Cases of Web Scraping

Web scraping has emerged as a vital tool across various industries, with significant applications including:

- E-commerce: Businesses use web scraping to monitor competitor prices and product listings and to analyse customer reviews. This capability allows them to adjust strategies in real time, ensuring competitiveness in a rapidly evolving market. Reliable proxies enhance this process by providing uninterrupted access to the data, enabling businesses to respond swiftly to industry changes.

- Industry Analysis: Companies gather web data to track consumer preferences, industry trends, and competitor actions. This information informs promotional strategies and product development, allowing firms to align their offerings with consumer demands. By 2026, approximately 55% of businesses are expected to employ web scraping for research purposes, underscoring its growing importance. As Scott Vahey, Director of Technology at Ficstar, notes, "Companies today are laser-focused on monitoring tariffs and prices amid inflation, while also leveraging AI to enhance information quality."

- Real Estate: Real estate agents aggregate property listings to analyse market trends and provide clients with comprehensive insights. Reliable proxies facilitate this by ensuring agents can access accurate and timely information, thereby enhancing their ability to identify opportunities and make informed recommendations.

- Travel and Hospitality: Travel agencies scrape flight and hotel prices to offer competitive rates while analysing seasonal demand patterns. This data-driven approach supports pricing decisions and helps improve customer satisfaction, backed by the speed and reliability of Trusted Proxies.

- Social Media Monitoring: Brands employ web extraction to track mentions, sentiment, and engagement metrics across social media platforms. This data is crucial for managing online reputation and refining marketing strategies based on consumer feedback. Trusted Proxies ensure that brands can gather this information without interruption.

- Lead Generation: Businesses scrape contact information from various sources to build prospect lists. This enhances outreach efforts, allowing for more personalised and effective communication with potential customers, facilitated by the use of Trusted Proxies.
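Several of these use cases, from e-commerce price tracking to travel fare monitoring and listing alerts, come down to comparing successive scrapes of the same pages. A minimal Python sketch, assuming each scrape has already been reduced to a mapping from a hypothetical item identifier to a price:

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class PriceChange:
    item_id: str
    old_price: Optional[float]  # None: item is new in the latest scrape
    new_price: Optional[float]  # None: item has disappeared from the latest scrape


def diff_snapshots(previous: Dict[str, float], current: Dict[str, float]) -> List[PriceChange]:
    """Report items whose price changed, appeared, or disappeared between two scrapes."""
    changes = []
    for item_id in sorted(previous.keys() | current.keys()):
        old = previous.get(item_id)
        new = current.get(item_id)
        if old != new:
            changes.append(PriceChange(item_id, old, new))
    return changes


# Hypothetical snapshots from two consecutive scraping runs.
yesterday = {"sku-101": 24.99, "sku-102": 149.00, "sku-103": 9.50}
today = {"sku-101": 22.49, "sku-102": 149.00, "sku-104": 35.00}

for change in diff_snapshots(yesterday, today):
    print(change)
```

Exact float comparison is adequate when prices are copied verbatim from the page; if prices are computed before comparison, `decimal.Decimal` avoids rounding surprises.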

These applications show how web scraping serves as a tool for data-driven decision-making, enabling organisations to maintain a competitive edge in their respective markets. Furthermore, numerous users have reported that using Trusted Proxies significantly enhances their web data extraction capabilities. For instance, a CEO from eData Web Development remarked that using Trusted Proxies enabled deep monitoring and ranking insights, contributing to larger SEO projects. Similarly, a freelance SEO consultant noted that their reports ran four times faster and were more accurate thanks to external proxies, highlighting the essential role of reliable proxy services in web data extraction.

Discuss Legal and Ethical Considerations in Web Scraping

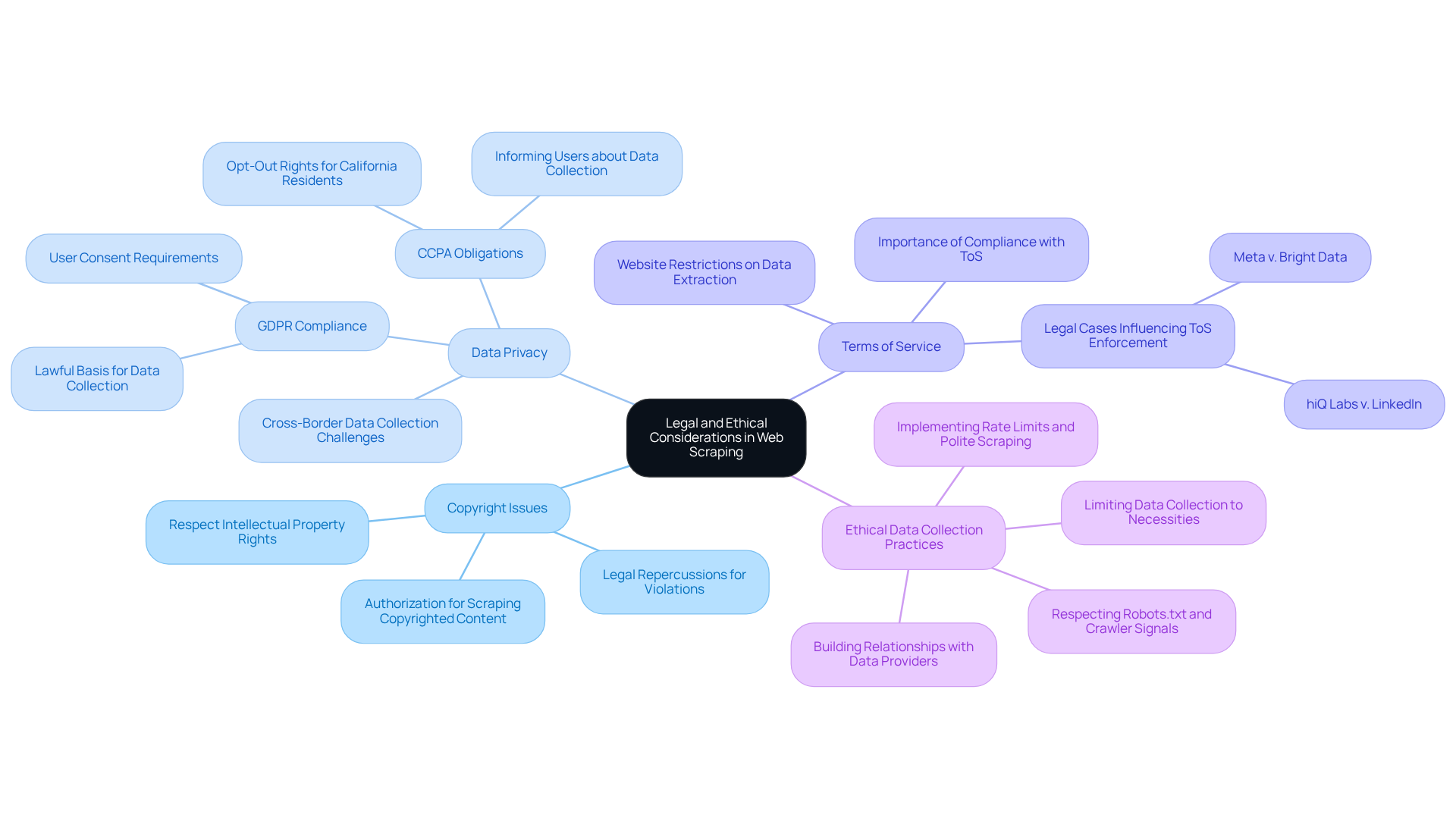

Navigating the legal and ethical landscape of [web data extraction](https://appstractor.com) is crucial for businesses aiming to utilise this powerful tool effectively. Key considerations include:

- Copyright and Intellectual Property: Scraping copyrighted content without permission can lead to significant legal repercussions. Respecting intellectual property rights and adhering to the terms of service of the websites being scraped is essential to avoid potential lawsuits.

- Data Privacy: The collection of personal data without explicit consent can violate privacy laws, such as the General Data Protection Regulation (GDPR) in Europe and the California Consumer Privacy Act (CCPA) in the U.S. Scrapers must ensure compliance with these regulations to protect individuals' privacy rights and avoid hefty fines.

- Terms of Service: A considerable portion of websites explicitly restrict data extraction in their terms of service. Ignoring these terms can lead to legal action or a permanent ban from the site. Recent court cases, such as hiQ Labs v. LinkedIn and Meta v. Bright Data, highlight the evolving nature of these legal frameworks and the importance of understanding them.

- Ethical Scraping Practices: Adopting ethical scraping practices is vital for responsible web data extraction. This involves rate-limiting requests to avoid server overload, honouring robots.txt files, and being open about information collection practices. Ethical extraction not only reduces legal risks but also promotes better relationships with information providers, enhancing the quality and reliability of the information gathered.
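Honouring robots.txt and rate-limiting requests can both be sketched with Python's standard library. This is a minimal sketch under stated assumptions: the robots.txt content, user-agent string, and URLs are hypothetical, the rules are parsed from a string rather than fetched, and the one-second fallback delay is an assumed default that gives way to a `Crawl-delay` directive when the site declares one.

```python
import time
import urllib.robotparser

# Hypothetical robots.txt; in practice, use RobotFileParser.set_url(...) and .read().
ROBOTS_TXT = """
User-agent: *
Disallow: /private/
Crawl-delay: 2
""".splitlines()

USER_AGENT = "ExampleResearchBot/1.0"  # hypothetical identifier
DEFAULT_DELAY = 1.0                    # assumed fallback delay in seconds


class PoliteScheduler:
    """Checks robots.txt rules and spaces requests at least `delay` seconds apart."""

    def __init__(self, robots_lines, user_agent, sleep=time.sleep, clock=time.monotonic):
        self.rules = urllib.robotparser.RobotFileParser()
        self.rules.parse(robots_lines)
        self.user_agent = user_agent
        declared = self.rules.crawl_delay(user_agent)
        self.delay = float(declared) if declared is not None else DEFAULT_DELAY
        self._sleep = sleep    # injectable so the pacing logic can be tested
        self._clock = clock
        self._last_request = None

    def allowed(self, url):
        return self.rules.can_fetch(self.user_agent, url)

    def wait_turn(self):
        """Block until enough time has passed since the previous request."""
        now = self._clock()
        if self._last_request is not None:
            remaining = self.delay - (now - self._last_request)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last_request = now


scheduler = PoliteScheduler(ROBOTS_TXT, USER_AGENT)
for url in ["https://example.com/products", "https://example.com/private/report"]:
    if scheduler.allowed(url):
        scheduler.wait_turn()  # first call returns immediately
        print("fetch", url)    # a real scraper would issue the HTTP request here
    else:
        print("skip (disallowed by robots.txt)", url)
```

Injecting `sleep` and `clock` keeps the pacing logic testable without real waiting; a production crawler would also track delays per host rather than globally.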

By adhering to these legal and ethical considerations, businesses can leverage web scraping responsibly, maximising the benefits of data extraction while minimising associated risks.

Conclusion

In conclusion, web scraping stands as a vital tool for automating the collection of information from diverse online sources, effectively converting unstructured data into structured formats that facilitate analysis. By grasping the functionality of web scrapers, organisations can leverage this technology to extract insights that inform strategic decisions and bolster their competitive edge in the marketplace.

This exploration has underscored essential elements of web scraping, including its definition, techniques, applications across various industries, and the significant legal and ethical considerations that accompany its implementation. From e-commerce to real estate, the capacity to efficiently gather and analyse data empowers businesses to swiftly adapt to market fluctuations and consumer preferences. Furthermore, the incorporation of advanced tools and methodologies, such as API access and AI-driven extraction, exemplifies the dynamic nature of web scraping.

As the significance of data-driven decision-making continues to escalate, it is imperative for organisations to embrace web scraping with a sense of responsibility. Prioritising ethical practices and legal compliance is crucial to maximising the advantages of this technology while mitigating associated risks. By doing so, organisations not only protect their operations but also enhance the integrity of the data they collect, thereby paving the way for informed strategies and sustainable growth in an increasingly competitive digital environment.

Frequently Asked Questions

What is web scraping?

Web scraping, also known as web harvesting or web information extraction, is the automated process of collecting information from websites using specialised software tools called web scrapers.

What does a web scraper do?

A web scraper navigates web pages, extracts relevant information, and converts it into structured formats such as spreadsheets or databases.

What is the primary purpose of web scraping?

The primary purpose of web scraping is to efficiently gather substantial amounts of information, enabling companies and researchers to analyse trends, conduct industry research, and make informed decisions based on real-time insights.

What is the projected growth of the web data extraction market?

The web data extraction market is projected to reach USD 1.17 billion in 2026, growing at a CAGR of 13.78% to USD 2.23 billion by 2031.

How does web scraping enhance analysis efficiency?

By transforming unstructured web content into organised datasets, web scraping significantly enhances the efficiency and accuracy of analysis, making it an essential tool across various sectors.

How can web scraping improve business decision-making?

Understanding what a web scraper does can lead to improved business decision-making by providing critical insights that drive strategic initiatives and competitive advantage.

What role do proxy servers play in web scraping?

Enterprise-class private proxy servers, such as those offered by Appstractor, ensure secure and reliable information gathering, which is crucial for maintaining information integrity and adaptability in data mining.

What types of proxy solutions does Appstractor provide?

Appstractor provides various proxy solutions, including rotating proxies for self-serve IPs and full-service options for turnkey information delivery, catering to the diverse needs of digital marketing professionals.

What challenges does AI data extraction pose for publishers?

The emergence of AI data extraction poses challenges for publishers, necessitating robust web collection strategies to maintain competitive positioning.

List of Sources

- Define Web Scraping and Its Purpose

- In Graphic Detail: AI licensing deals, protection measures aren’t slowing web scraping (https://digiday.com/media/in-graphic-detail-ai-licensing-deals-protection-measures-arent-slowing-web-scraping)

- Google's AI scraping of news could get scrutiny in Brazil, CADE chief says | MLex | Specialist news and analysis on legal risk and regulation (https://mlex.com/mlex/articles/2413380/google-s-ai-scraping-of-news-could-get-scrutiny-in-brazil-cade-chief-says)

- News companies are doubling down to fight against AI Web scrapers (https://inma.org/blogs/Product-and-Tech/post.cfm/news-companies-are-doubling-down-to-fight-against-ai-web-scrapers)

- The battle between news publishers and AI bots is heating up | The Current (https://thecurrent.com/marketing-strategy-battle-between-news-publishers-ai-bots-is-heating-up)

- Web Scraping Market Size, Growth Report, Share & Trends 2026 - 2031 (https://mordorintelligence.com/industry-reports/web-scraping-market)

- Explore Techniques and Methods of Web Scraping

- How AI Is Changing Web Scraping in 2026 (https://kadoa.com/blog/how-ai-is-changing-web-scraping-2026)

- Web Scraping Trends for 2025 and 2026 (https://ficstar.medium.com/web-scraping-trends-for-2025-and-2026-0568d38b2b05)

- State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- Web Scraping Roadmap in 2026: Insights from 30M Requests (https://aimultiple.com/web-scraping)

- Web Scraping Roadmap: Steps, Tools & Best Practices (2026) (https://brightdata.com/blog/web-data/web-scraping-roadmap)

- Highlight Applications and Use Cases of Web Scraping

- Web Scraping Trends for 2025 and 2026 (https://ficstar.medium.com/web-scraping-trends-for-2025-and-2026-0568d38b2b05)

- How to Use Web Scraping for Sales Leads Generation 2026 (https://zyndoo.com/blog/blog-5/how-to-use-web-scraping-for-sales-leads-generation-2026-21)

- Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)

- The Best Web Scraping Companies For Competitive Data in 2026 (https://ficstar.medium.com/the-best-web-scraping-companies-for-competitive-data-in-2026-1f0ef031b3d0)

- How Businesses Use Web Scraping to Spot Early Trends Before the Market Reacts (https://linkedin.com/pulse/how-businesses-use-web-scraping-spot-early-trends-before-market-afdrc)

- Discuss Legal and Ethical Considerations in Web Scraping

- Importance and Best Practices of Ethical Web Scraping (https://secureitworld.com/article/ethical-web-scraping-best-practices-and-legal-considerations)

- Is Web Scraping Legal? GDPR, CCPA & CFAA Frameworks Explained (https://tendem.ai/blog/is-web-scraping-legal-compliance-overview)

- Is Web Scraping Legal? Laws & Best Practices Guide for 2026 (https://scraperapi.com/web-scraping/is-web-scraping-legal)

- Ethical Web Scraping: Principles and Practices (https://datacamp.com/blog/ethical-web-scraping)