Introduction

Web data extraction has emerged as a crucial asset for businesses aiming to leverage the extensive information available online. By mastering the nuances of web scraping, organisations can convert raw data into actionable insights that inform strategic decision-making. However, the realm of web data extraction presents several challenges, including:

- The selection of appropriate tools

- The assurance of data quality

To effectively navigate these complexities and optimise their data extraction efforts, businesses can implement targeted strategies.

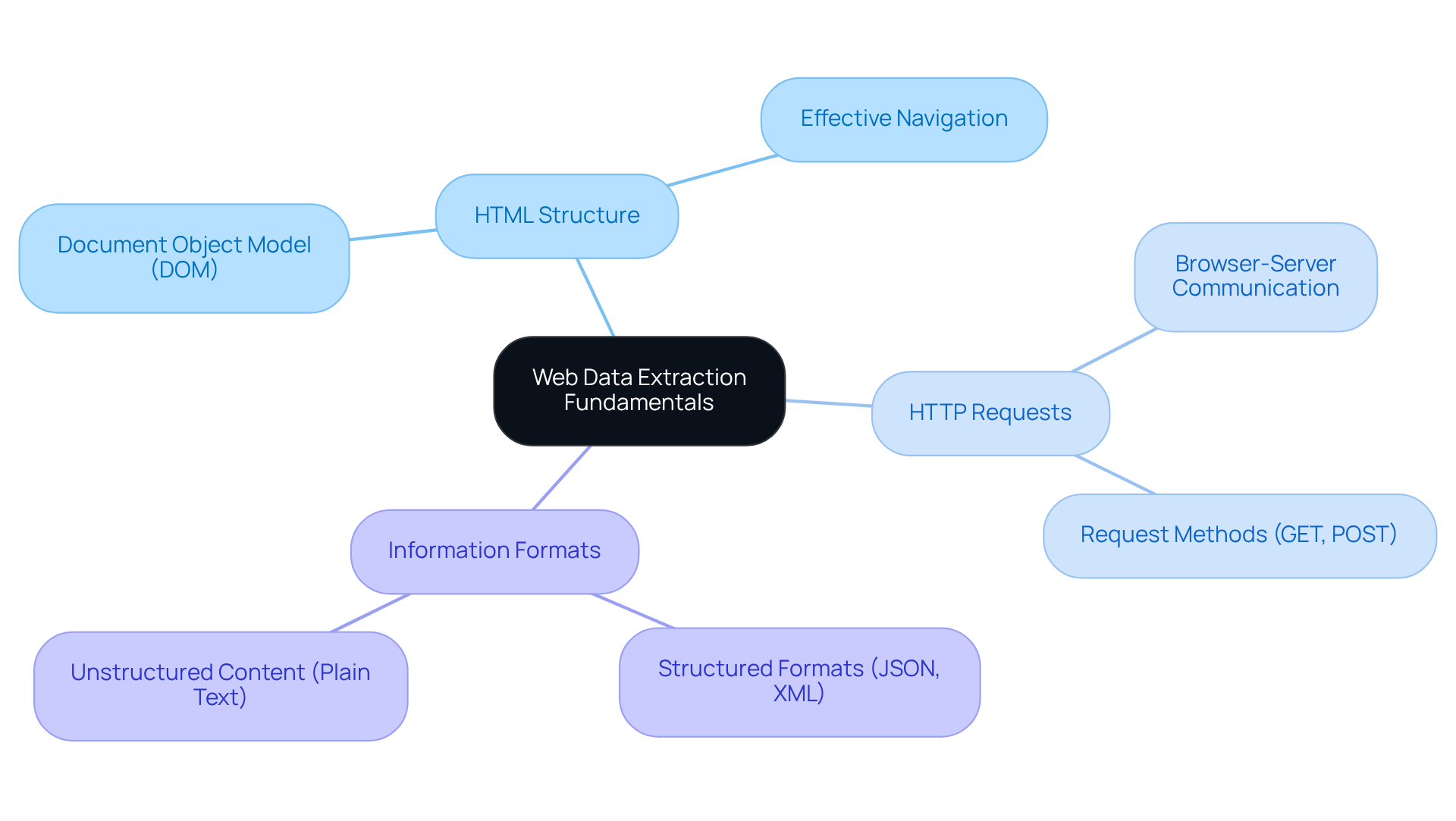

Understand Web Data Extraction Fundamentals

Web data extraction, often referred to as web scraping, is the automated process of collecting information from websites. A web data extractor fetches web pages and extracts specific data, which can then be organised into a usable format, such as a database or spreadsheet.

Key concepts in web scraping include:

- HTML Structure: A solid understanding of the Document Object Model (DOM) is crucial, as it enables effective navigation and data extraction from web pages.

- HTTP Requests: Grasping how web browsers communicate with servers through HTTP requests is vital for successful information extraction.

- Information Formats: Recognising the difference between structured formats (like JSON or XML) and unstructured content (such as plain text) is essential for selecting the right retrieval methods.

By mastering these fundamentals, businesses can use a web data extractor effectively and turn the retrieved information into insights that inform their decision-making processes.
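To make the difference between HTML and structured formats concrete, here is a minimal sketch using only the Python standard library. The sample markup, the `price` class name, and the JSON shape are assumptions chosen for illustration; a real page will differ.

```python
import json
from html.parser import HTMLParser


class PriceParser(HTMLParser):
    """Collects the text of elements whose class attribute is exactly 'price'."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs from the opening tag.
        if ("class", "price") in attrs:
            self._in_price = True

    def handle_endtag(self, tag):
        # Simplification: any closing tag ends the current price element.
        self._in_price = False

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.prices.append(data.strip())


def prices_from_html(html):
    """Unstructured route: walk the markup and pick out the target elements."""
    parser = PriceParser()
    parser.feed(html)
    return parser.prices


def prices_from_json(payload):
    """Structured route: the format already names the field we want."""
    return [item["price"] for item in json.loads(payload)["items"]]


# Hypothetical sample inputs carrying the same information in two formats.
SAMPLE_HTML = (
    '<ul><li><span class="price">9.99</span></li>'
    '<li><span class="price">12.50</span></li></ul>'
)
SAMPLE_JSON = '{"items": [{"price": "9.99"}, {"price": "12.50"}]}'

print(prices_from_html(SAMPLE_HTML))
print(prices_from_json(SAMPLE_JSON))
```

Both functions return the same values, but the JSON version needs no knowledge of page layout, which is why structured sources are usually easier to maintain.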

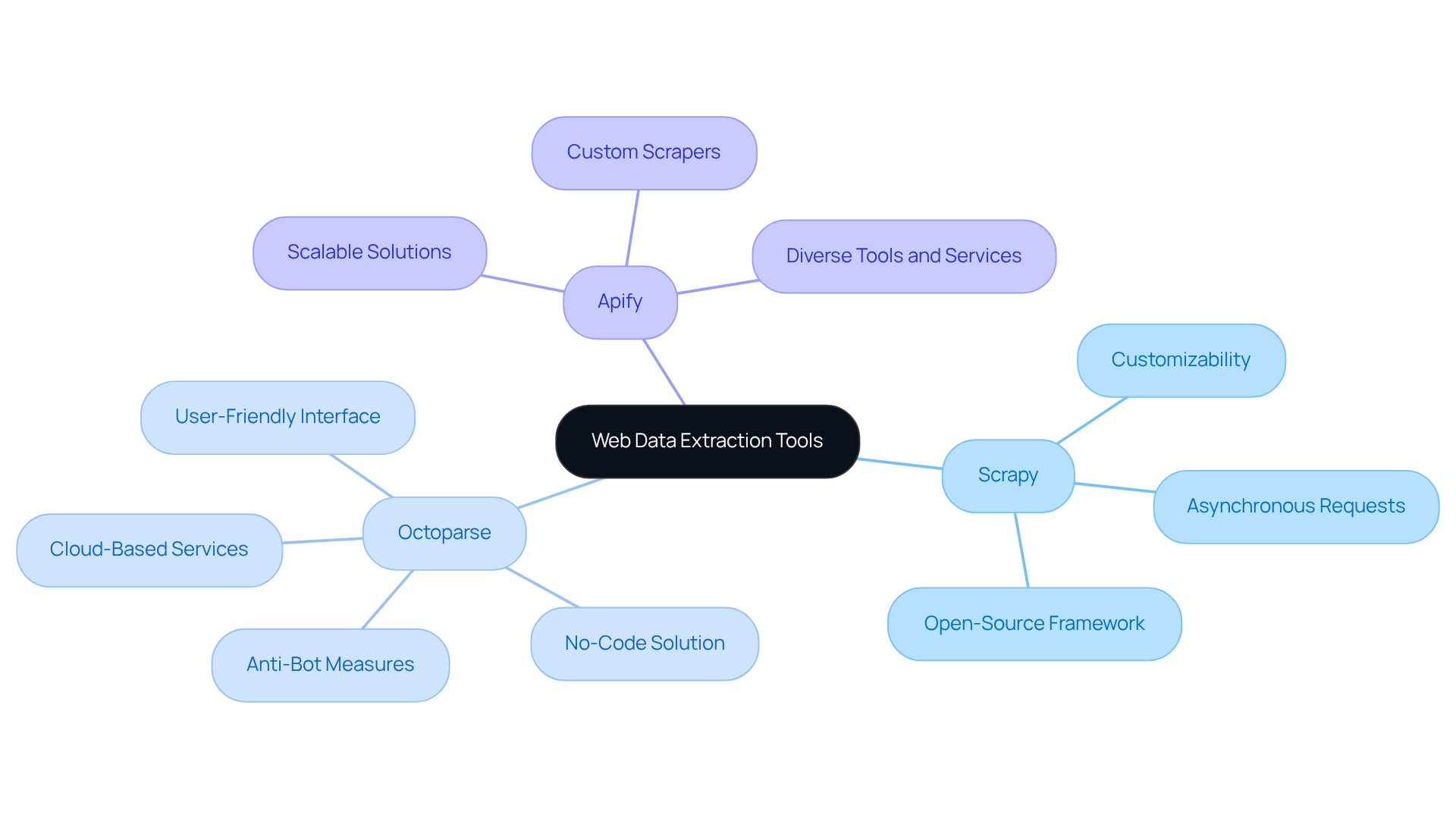

Choose the Right Web Data Extraction Tools

Choosing the appropriate web data extractor is essential for enhancing scraping efforts in the current digital landscape. Here are three prominent options:

- Scrapy: This open-source framework for Python is designed for the rapid development of web scrapers. Its high customisability and support for asynchronous requests make it a favourite among developers, particularly for complex scraping tasks.

- Octoparse: Known for its user-friendly, no-code interface, Octoparse simplifies the scraping process, making it accessible to non-technical users. It supports features such as infinite scrolling and can circumvent anti-bot measures, which is crucial for retrieving information from contemporary websites.

- Apify: As a cloud-based platform, Apify provides a diverse range of scraping tools and services, making it ideal for large-scale information extraction projects. Its flexibility enables users to develop custom scrapers or utilise pre-built solutions, addressing various information needs.

When selecting a tool, consider factors such as scalability and the specific formats required for your projects. Evaluating several tools can help determine the most suitable option for your needs, ensuring efficient and effective information retrieval. User satisfaction ratings for these tools in 2026 indicate a growing preference for solutions that balance functionality with accessibility.

Advanced AWS Cloud Management features can also support your information retrieval efforts. For instance, AWS's cost management tools can help you manage your budget efficiently while maintaining security and compliance in your information handling processes.

As Hiba Fathima notes, "Data quality determines AI quality," underscoring the importance of selecting the right tool for optimal results.

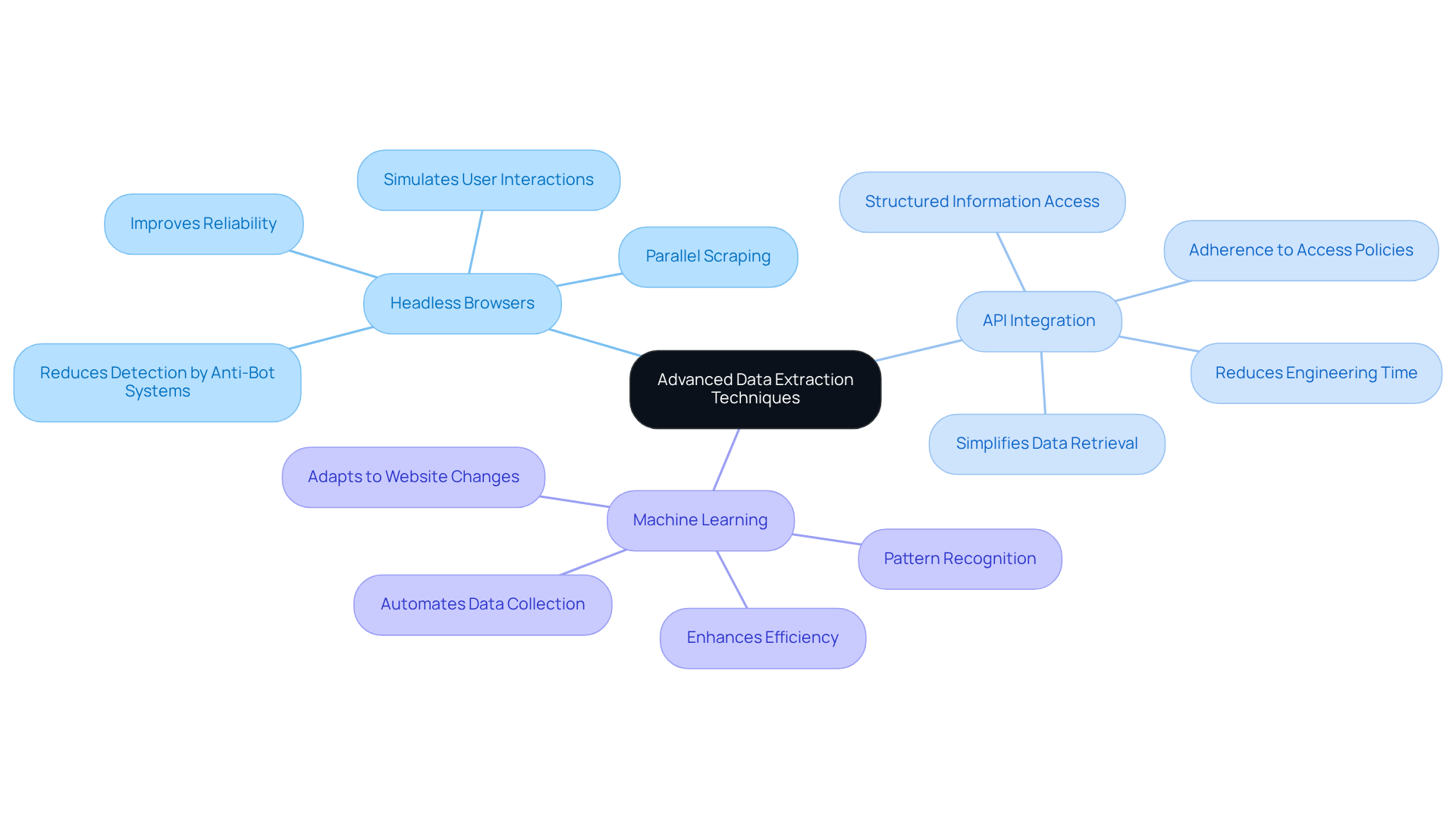

Implement Advanced Data Extraction Techniques

To maximise the effectiveness of web data extraction, consider implementing the following advanced techniques:

- Headless Browsers: Tools such as Puppeteer and Selenium drive a browser without a graphical user interface and simulate user interactions with web pages, enabling the extraction of data from dynamic sites that rely heavily on JavaScript. Because the page is rendered as a real browser would render it, this method improves reliability and can be less detectable by anti-bot systems than plain HTTP clients. Running without a graphical interface also lowers resource use, which makes it practical to run multiple scrapers in parallel and improves operational efficiency.

- API Integration: Using APIs provided by websites for structured information access is often more reliable than scraping HTML. In 2026, the trend towards API use for information retrieval is expected to grow, as it simplifies the process and supports adherence to access policies. Reportedly, 40% of engineering time is spent fixing scrapers, which underlines the need for dependable information gathering techniques. Appstractor provides API integration alongside its rotating proxy servers, enabling businesses to access organised information effectively.

- Machine Learning: Machine learning algorithms can greatly improve retrieval by recognising patterns in information and automating the collection of unstructured information. This approach not only improves efficiency but also adapts to changes in website layouts, reducing the need for constant maintenance. As Karan Sharma aptly puts it, "The real question is no longer 'Can you scrape it?' The real question is 'Can you scrape it responsibly, in real time, and without crossing a line?'" With Appstractor, businesses can use a web data extractor for automated web information retrieval to enhance their management strategies.

These techniques enhance the extraction method, making it more efficient and less prone to errors, ultimately supporting businesses in their data-driven strategies.
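As an illustration of the API integration technique, the following is a minimal sketch using only the Python standard library. The endpoint URL, the `products` key, and the `name` and `price` fields are hypothetical; substitute whatever the target API actually documents.

```python
import json
import urllib.request

# Hypothetical endpoint, used here purely for illustration.
API_URL = "https://example.com/api/products"


def http_get(url, timeout=10):
    """Fetch a URL and return the response body as text."""
    request = urllib.request.Request(url, headers={"Accept": "application/json"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")


def fetch_products(url=API_URL, get=http_get):
    """Return (name, price) pairs from a JSON API response.

    Assumes a payload shaped like {"products": [{"name": ..., "price": ...}]}.
    The `get` parameter lets callers swap in a different transport or a stub.
    """
    payload = json.loads(get(url))
    return [(p["name"], float(p["price"])) for p in payload.get("products", [])]


def fake_get(url):
    """Offline stand-in for http_get that returns a canned response."""
    return '{"products": [{"name": "Widget", "price": "9.99"}]}'


print(fetch_products(get=fake_get))
```

Passing the transport in as a parameter keeps the parsing logic testable without network access, and the same function works unchanged once `http_get` points at a real endpoint.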

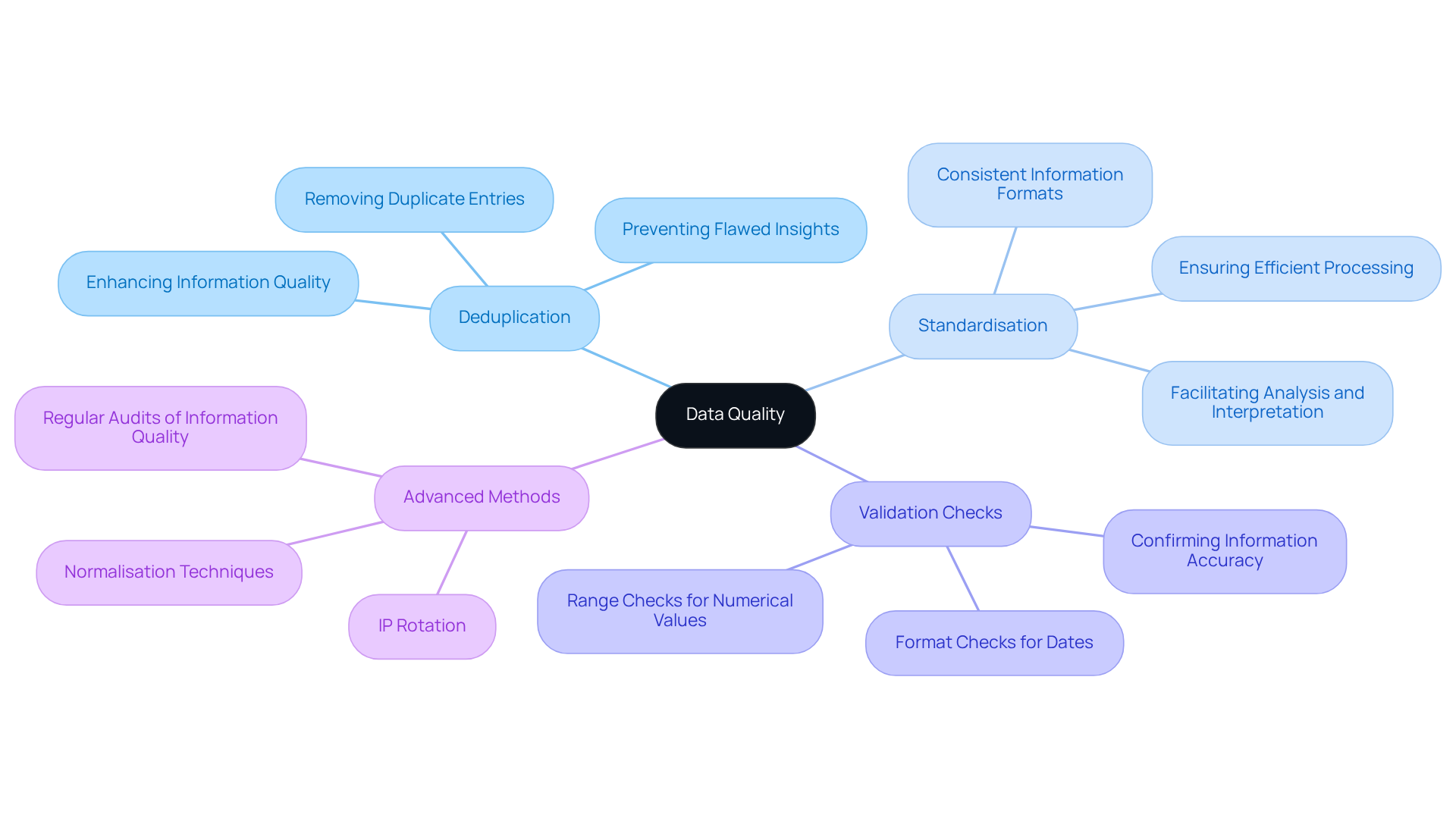

Ensure Data Quality Through Cleaning and Validation

To uphold the integrity of your extracted data, implementing robust cleaning and validation techniques is essential:

- Deduplication: Removing duplicate entries is crucial for maintaining a clean and accurate dataset. This process enhances information quality and prevents flawed insights from redundant details.

- Standardisation: Consistent information formats across your dataset facilitate easier analysis and interpretation. Standardisation ensures that all entries conform to expected formats, which is vital for efficient information processing.

- Validation Checks: Implementing validation checks is necessary to confirm that the information meets predefined criteria, including range checks for numerical values and format checks for dates, ensuring accuracy and reliability.
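The three steps above can be sketched in a few lines of Python. The field names (`name`, `price`, `date`), the price range, and the `YYYY-MM-DD` date format are assumptions for illustration, not requirements of any particular tool.

```python
from datetime import datetime


def standardise(record):
    """Normalise one raw record: trimmed lowercase name, float price, trimmed date."""
    return {
        "name": record["name"].strip().lower(),
        "price": float(str(record["price"]).replace(",", "").strip()),
        "date": record["date"].strip(),
    }


def is_valid(record, min_price=0.0, max_price=10_000.0):
    """Range check on the price and format check (YYYY-MM-DD) on the date."""
    if not (min_price <= record["price"] <= max_price):
        return False
    try:
        datetime.strptime(record["date"], "%Y-%m-%d")
    except ValueError:
        return False
    return True


def clean(records):
    """Standardise, validate, and deduplicate records, keeping first occurrences."""
    seen = set()
    cleaned = []
    for raw in records:
        try:
            record = standardise(raw)
        except (KeyError, ValueError, AttributeError):
            continue  # skip rows that cannot be standardised
        key = (record["name"], record["date"])
        if key in seen or not is_valid(record):
            continue
        seen.add(key)
        cleaned.append(record)
    return cleaned


# Hypothetical scraped rows: one good, one duplicate, two invalid.
rows = [
    {"name": " Widget ", "price": "1,299.00", "date": "2024-05-01"},
    {"name": "widget", "price": "1299", "date": "2024-05-01"},
    {"name": "Gadget", "price": "-5", "date": "2024-05-01"},
    {"name": "Gizmo", "price": "20", "date": "01/05/2024"},
]
print(clean(rows))
```

Standardising before deduplicating matters here: the first two rows only match as duplicates once whitespace and letter case have been normalised.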

In addition to these practices, Appstractor employs methods such as IP rotation and normalisation to further enhance quality. The integrated IP rotation function helps information retrieval remain smooth and secure, while normalisation techniques standardise entries prior to delivery.

Regular audits of your information quality processes are crucial. They help identify potential issues early, thus preserving the dependability of your extraction efforts. As organisations increasingly recognise the significance of information quality, incorporating these practices can greatly improve operational efficiency and decision-making capabilities. Moreover, the trend towards real-time information validation, adopted by 42% of e-commerce platforms worldwide in 2024, illustrates the industry's shift towards immediate quality measures. As the Tendem Team states, "Quality assurance is not optional; it is what makes scraped information usable."

Additionally, authentication methods such as user:pass or IP-whitelist can further secure your data extraction processes.
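The two authentication methods mentioned above, user:pass and IP-whitelist, differ mainly in whether credentials travel with the request. The sketch below shows one way to configure each with Python's standard library. The proxy host, port, and credentials are placeholders, and this is not a description of any specific provider's setup.

```python
import urllib.request
from urllib.parse import quote


def build_proxy_url(host, port, user=None, password=None):
    """Return a proxy URL; credentials are embedded only for user:pass auth."""
    if user and password:
        # Percent-encode credentials so characters like '@' or ':' stay unambiguous.
        return f"http://{quote(user, safe='')}:{quote(password, safe='')}@{host}:{port}"
    # IP-whitelist auth: the provider recognises the caller's IP, so no credentials.
    return f"http://{host}:{port}"


def make_opener(proxy_url):
    """Build an opener that routes HTTP and HTTPS traffic through the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


# Placeholder values for illustration only.
with_credentials = build_proxy_url("proxy.example.com", 8080, "alice", "p@ss")
whitelisted = build_proxy_url("proxy.example.com", 8080)
opener = make_opener(with_credentials)

print(with_credentials)
print(whitelisted)
```

With user:pass the secret must be stored and rotated by the client, whereas IP-whitelisting keeps credentials out of configuration files but only works from fixed, known addresses.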

Conclusion

Mastering web data extraction is crucial for businesses that want to leverage online information effectively. By grasping the fundamentals, selecting appropriate tools, implementing advanced techniques, and ensuring data quality, organisations can significantly enhance their data-driven decision-making processes.

Key insights include:

- Understanding HTML structure and HTTP requests.

- Choosing suitable web data extractors such as Scrapy, Octoparse, and Apify.

- Employing techniques like headless browsers and machine learning for more efficient data collection.

Additionally, maintaining high data quality through rigorous cleaning and validation practices is essential for ensuring the reliability of the extracted information.

As the landscape of web data extraction evolves, adopting these best practices will streamline information retrieval and empower businesses to make informed decisions. Embracing these strategies will enhance operational efficiency and ultimately drive success in an increasingly data-centric environment.

Frequently Asked Questions

What is web data extraction?

Web data extraction, also known as web scraping, is the automated process of collecting information from websites using a web data extractor.

What role does a web data extractor play in this process?

A web data extractor fetches web pages and extracts specific data, which can then be organised into a usable format, such as a database or spreadsheet.

Why is understanding HTML structure important for web scraping?

A solid understanding of the Document Object Model (DOM) is crucial for effective navigation and data extraction from web pages.

What are HTTP requests and why are they important in web data extraction?

HTTP requests are how web browsers communicate with servers, and grasping this concept is vital for successful information extraction.

What is the difference between structured and unstructured information formats?

Structured formats, like JSON or XML, have a defined organisation, while unstructured content, such as plain text, lacks a specific format. Recognising this difference is essential for selecting the right retrieval methods.

How can businesses benefit from mastering web data extraction fundamentals?

By mastering these fundamentals, businesses can use a web data extractor effectively and turn the retrieved information into insights that inform their decision-making processes.

List of Sources

- Understand Web Data Extraction Fundamentals

- How AI Is Changing Web Scraping in 2026 (https://kadoa.com/blog/how-ai-is-changing-web-scraping-2026)

- State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- 2026 Web Scraping Industry Report - PDF (https://zyte.com/whitepaper-ebook/2026-web-scraping-industry-report)

- Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)

- The Future of Web Scraping: Trends, Predictions, and Technologies for 2026-2030 | Use Apify (https://use-apify.com/blog/future-web-scraping-trends-2026-2030)

- Choose the Right Web Data Extraction Tools

- Apify vs Octoparse 2026: No-Code Scraping & MCP Comparison | Use Apify (https://use-apify.com/docs/apify-vs-the-world/apify-vs-octoparse)

- The Best Web Scraping Companies For Competitive Data in 2026 (https://ficstar.medium.com/the-best-web-scraping-companies-for-competitive-data-in-2026-1f0ef031b3d0)

- Best Data Extraction Tools of 2026: Top 11+ Solutions (https://brightdata.com/blog/web-data/best-data-extraction-tools)

- Best Web Extraction Tools for AI in 2026 (https://firecrawl.dev/blog/best-web-extraction-tools)

- Best Web Scraping Tools in 2026: I Tested 30+ Tools, and These Are the Only Ones Worth Using (https://dev.to/nitinfab/best-web-scraping-tools-in-2026-i-tested-30-tools-and-these-are-the-only-ones-worth-using-11l3)

- Implement Advanced Data Extraction Techniques

- Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)

- How AI Is Changing Web Scraping in 2026 (https://kadoa.com/blog/how-ai-is-changing-web-scraping-2026)

- State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- Why Serious Scrapers Use Headless Browsers (https://medium.com/@promptcloud20/why-serious-scrapers-use-headless-browsers-ac5692601510)

- Best headless browsers for web scraping in 2026 (https://zyte.com/learn/best-headless-browsers-for-web-scraping)

- Ensure Data Quality Through Cleaning and Validation

- Extracting article & news data: The importance of data quality - Zyte #1 Web Scraping Service (https://zyte.com/blog/news-api-blog-importance-of-article-quality)

- Why Data Validation Techniques in Web Scraping Crucial (https://actowizsolutions.com/why-data-validation-techniques-in-web-scraping-crucial.php)

- What Is Data Cleaning? Complete Guide to Data Quality (2026) (https://articsledge.com/post/data-cleaning)

- Data Quality Validation for Web Scraping Pipelines | UK Guide (https://ukdataservices.co.uk/blog/articles/data-quality-validation-pipelines)

- Data Quality Checklist for Web Scraping: 15-Point Framework (https://tendem.ai/blog/data-quality-checklist-web-scraping)