Introduction

Database scraping has emerged as a transformative tool for digital marketers, providing a means to harness extensive data from the web. By mastering the extraction of valuable insights, marketers can secure a competitive advantage through enhanced competitor analysis, targeted market research, and efficient lead generation.

However, as this practice becomes increasingly vital, several questions arise:

- How can marketers ensure they are scraping both ethically and effectively?

- What tools and strategies will enable them to navigate the complexities of this powerful technique?

Exploring these aspects reveals essential steps for leveraging database scraping to elevate marketing strategies and drive success.

Understand Database Scraping and Its Role in Digital Marketing

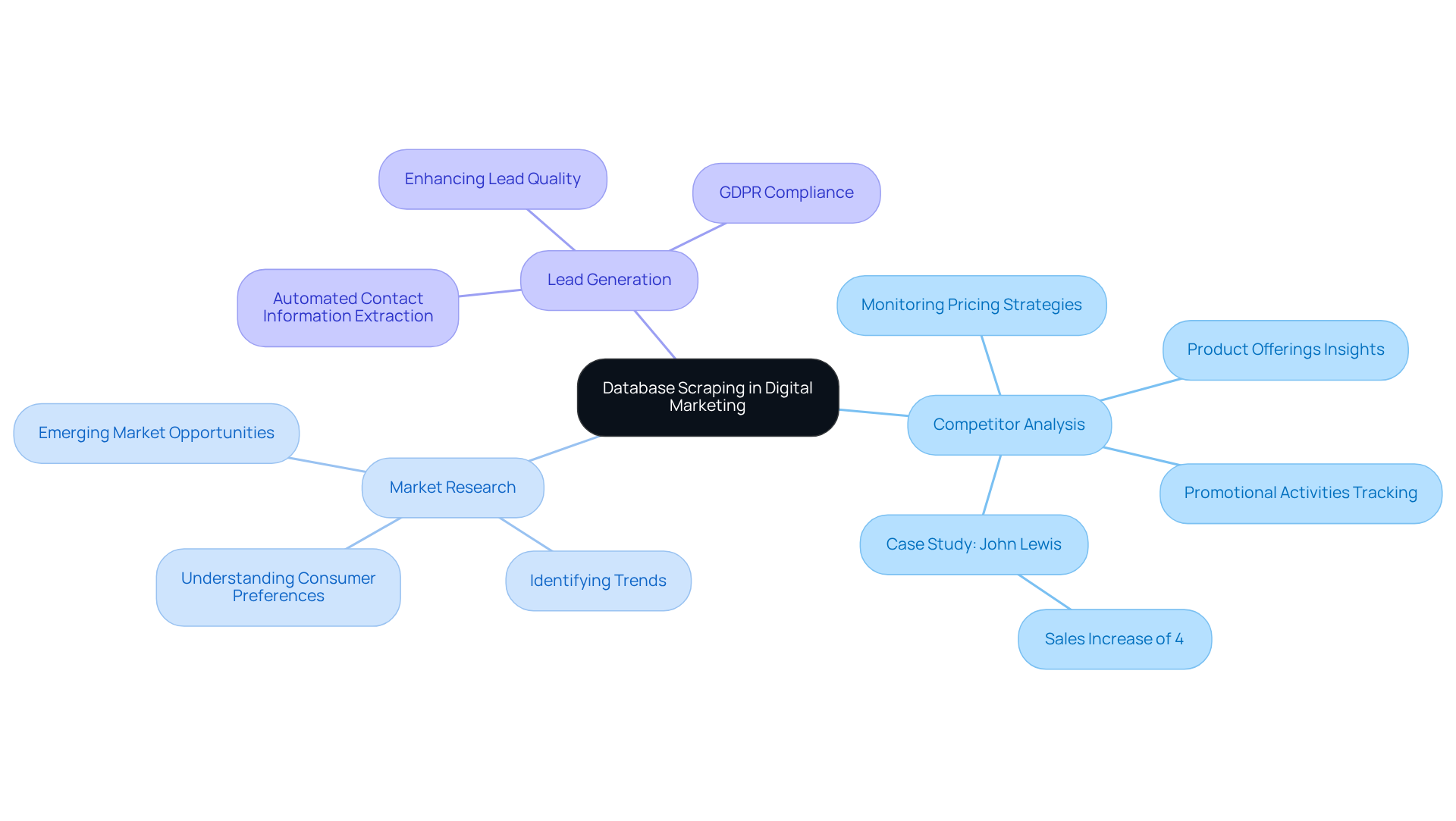

Database scraping refers to the automated retrieval of information from various online sources, including websites and databases, to gather valuable insights. This technique plays a pivotal role in digital marketing across several key areas:

- Competitor Analysis: Scraping competitor websites allows marketers to monitor pricing strategies, product offerings, and promotional activities, and to adjust their own strategies accordingly. It also facilitates price and competitive assortment monitoring, providing essential insights. For instance, UK retailer John Lewis achieved a 4% sales increase through effective competitor price monitoring, demonstrating the tangible benefits of this approach.

- Market Research: Scraping data from diverse sources helps marketers identify trends, consumer preferences, and emerging market opportunities. Appstractor's solutions support comprehensive market analysis, aiding in the crafting of targeted campaigns that resonate with audiences, ultimately driving engagement and conversions.

- Lead Generation: Automated extraction efficiently collects contact information and other pertinent details, streamlining the process. With Appstractor's solutions, marketers can enhance lead quality while ensuring GDPR compliance, allowing them to focus on high-potential prospects.

Understanding these applications is crucial for marketers aiming to use information extraction effectively in their campaigns. Furthermore, as the worldwide web harvesting software industry is projected to reach $143.99 billion by 2032, the significance of data extraction in digital marketing will only grow, making it essential for companies to embrace these resources to remain competitive.
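As an illustration of the competitor analysis use case, the following minimal sketch compares a company's own prices with prices that have already been scraped from a competitor. The product names, figures, and the `find_undercut_products` helper are hypothetical and exist only for this example; how the competitor prices are collected is out of scope here.

```python
from dataclasses import dataclass


@dataclass
class PriceGap:
    product: str
    own_price: float
    competitor_price: float

    @property
    def difference(self) -> float:
        # Positive when the competitor is cheaper than we are.
        return round(self.own_price - self.competitor_price, 2)


def find_undercut_products(own_prices, competitor_prices):
    """Return products that the competitor sells for less than we do."""
    gaps = []
    for product, own_price in own_prices.items():
        competitor_price = competitor_prices.get(product)
        if competitor_price is not None and competitor_price < own_price:
            gaps.append(PriceGap(product, own_price, competitor_price))
    # Largest gap first, so the most urgent items are reviewed first.
    return sorted(gaps, key=lambda gap: gap.difference, reverse=True)


# Hypothetical figures for illustration only.
own = {"kettle": 34.99, "toaster": 29.99, "blender": 59.00}
scraped = {"kettle": 32.50, "toaster": 31.00, "blender": 49.00}

for gap in find_undercut_products(own, scraped):
    print(f"{gap.product}: competitor is {gap.difference:.2f} cheaper")
```

A report like this is only a starting point: whether to match a competitor's price remains a business decision.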

Set Up Your Database Scraping Tools and Environment

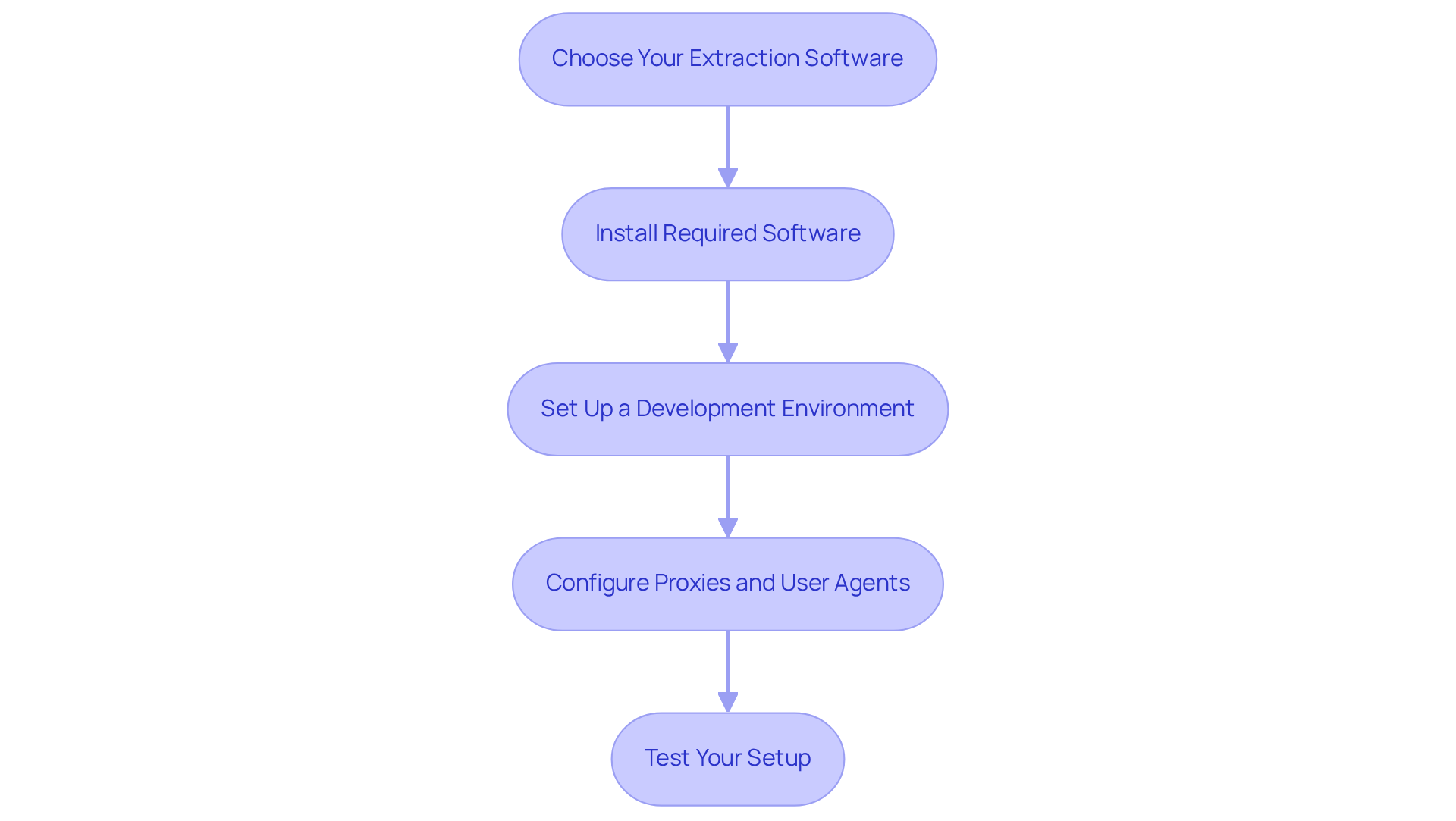

To effectively scrape databases, follow these essential steps to set up your tools and environment:

- Choose Your Scraping Tool: Select an extraction application that aligns with your needs. Common options include:

  - Beautiful Soup: A Python library favoured by 43.5% of users for parsing HTML and XML documents.

  - Scrapy: An open-source framework recognised for its scalability and efficiency in scraping projects.

  - Octoparse: A user-friendly, no-code web scraping solution ideal for those who prefer a visual interface.

- Install Required Software: Depending on your selected application, install the necessary software. For Python-based tools, ensure you have Python installed along with essential libraries such as Requests and Pandas.

- Set Up a Development Environment: Utilise an Integrated Development Environment (IDE) such as PyCharm or Visual Studio Code to write and test your extraction scripts effectively.

- Configure Proxies and User Agents: To reduce the risk of IP bans, set up proxies and user agents within your scraper. This practice helps mimic human browsing behaviour, reducing the likelihood of being blocked. Appstractor provides proxies that can be configured swiftly, offering dependable information extraction with a global IP pool and outstanding support. Notably, 39.1% of users utilise proxies for location-specific data gathering, emphasising their importance in data collection strategies.

- Test Your Setup: Execute a simple extraction script to verify that everything is functioning correctly. Watch for errors and resolve issues as needed to ensure a smooth data extraction process.

The web scraping market is expected to reach between $2.2B and $3.5B by 2026, underlining the increasing importance of data extraction in digital marketing. With Appstractor's solutions, you can automate data collection seamlessly, ensuring compliance with GDPR while gaining valuable insights. Depending on the service tier, setup ranges from go-live within 24 hours to Hybrid audits that take 1-4 days and Full Service projects that kick off in 5-7 business days.
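The configuration and test steps above can be sketched as a small smoke test. This is a minimal example under stated assumptions: it uses only Python's standard library so that it runs without extra installs, the proxy URL, user agent string, `product-name` class, and sample HTML are all hypothetical placeholders, and no request is actually sent. The same checks can be written with Requests and Beautiful Soup once those are installed.

```python
from html.parser import HTMLParser
from urllib.request import ProxyHandler, Request, build_opener

USER_AGENT = "Mozilla/5.0 (compatible; example-marketing-bot/0.1)"  # hypothetical
PROXY_URL = "http://proxy.example.com:8080"  # hypothetical placeholder


def build_proxied_opener(proxy_url):
    """Create a urllib opener that routes HTTP and HTTPS traffic via a proxy."""
    return build_opener(ProxyHandler({"http": proxy_url, "https": proxy_url}))


def build_request(url, user_agent=USER_AGENT):
    """Attach an explicit User-Agent header to an outgoing request."""
    return Request(url, headers={"User-Agent": user_agent})


class ProductParser(HTMLParser):
    """Collect the text of every element with class="product-name"."""

    def __init__(self):
        super().__init__()
        self._capture = False
        self.names = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        self._capture = "product-name" in classes

    def handle_data(self, data):
        if self._capture and data.strip():
            self.names.append(data.strip())

    def handle_endtag(self, tag):
        self._capture = False


def extract_product_names(html):
    parser = ProductParser()
    parser.feed(html)
    return parser.names


# Inline sample page, so the parsing logic can be verified offline.
SAMPLE_HTML = """
<ul>
  <li><span class="product-name">Kettle</span> <span class="price">34.99</span></li>
  <li><span class="product-name">Toaster</span> <span class="price">29.99</span></li>
</ul>
"""

request = build_request("https://example.com/products")
opener = build_proxied_opener(PROXY_URL)  # opener.open(request) would send it
print(request.get_header("User-agent"))
print(extract_product_names(SAMPLE_HTML))
```

Testing the parser against a saved sample page first separates parsing bugs from network or proxy problems, which makes later troubleshooting easier.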

Follow Best Practices for Ethical Database Scraping

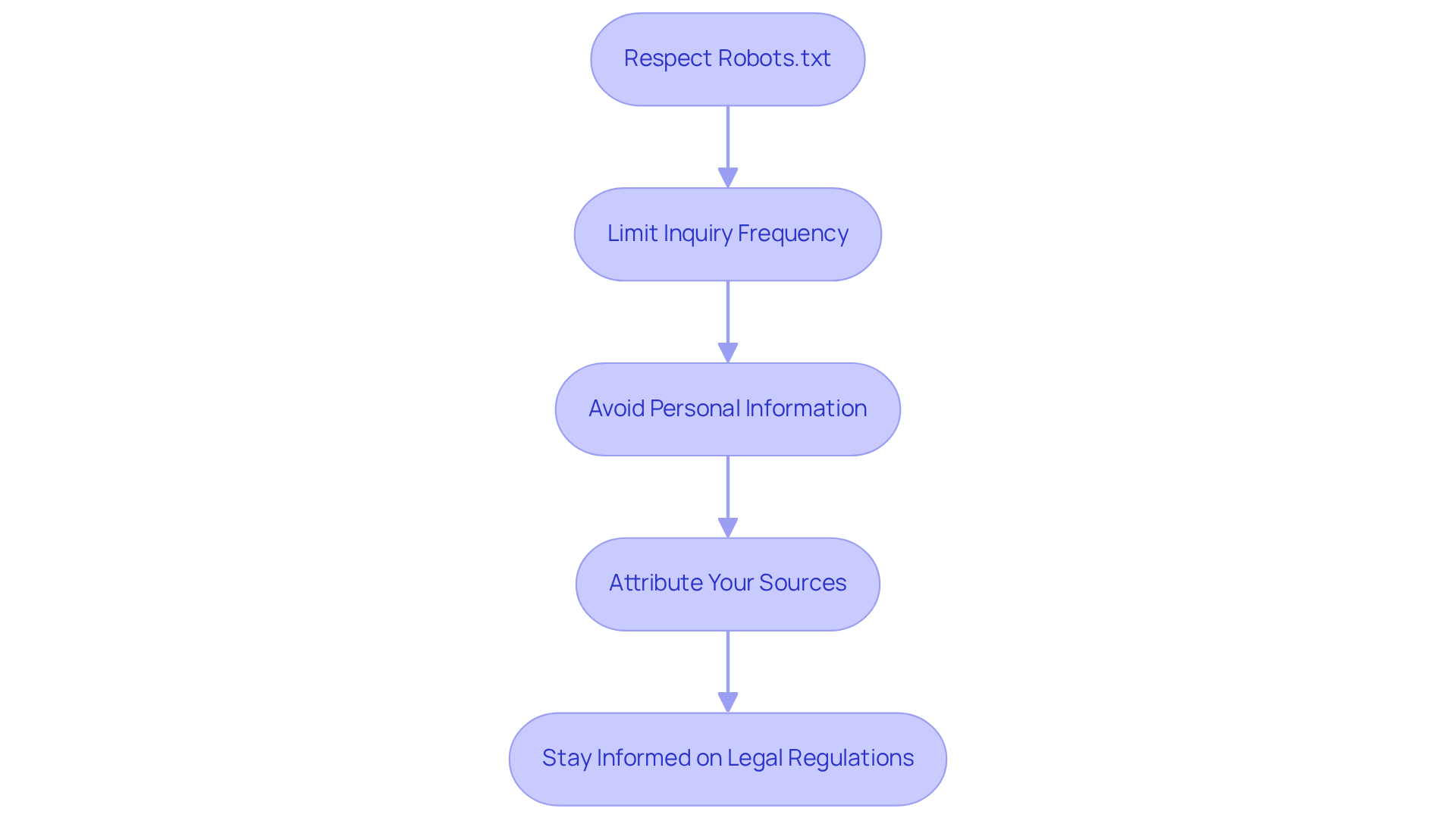

To ensure ethical database scraping, adhere to the following best practices:

- Respect Robots.txt: Always check the website's robots.txt file to understand which pages may be scraped. This file provides essential guidelines on how web crawlers should interact with the site. Ignoring these directives can lead to legal issues, as many websites explicitly prohibit scraping in their terms of service. As Bryan Becker from Human Security notes, "Robots.txt has no enforcement mechanism. It’s a sign that says ‘please do not come in if you’re one of these things’ and there’s nothing there to stop you."

- Limit Request Rates: Avoid overwhelming servers by limiting the frequency of your requests. Introducing pauses between requests not only resembles human browsing patterns but also helps sustain server performance. For instance, a popular sports website experienced 13 million crawler visits from AI companies, resulting in only 600 actual site visits, highlighting the strain excessive requests can place on server resources.

- Avoid Personal Information: Do not scrape personal or sensitive details unless you have explicit permission. Concentrate on publicly accessible information to remain compliant with GDPR. The GDPR requires a lawful basis, such as legitimate interests, for collecting personal information; establishing one helps prevent potential legal issues. Violations of the GDPR can lead to significant legal risks, which 44.0% of retail and e-commerce firms express concern about.

- Attribute Sources: When utilising collected information, give credit to the original source. This practice fosters goodwill and transparency, which can enhance your reputation and relationships within the industry.

- Stay Informed on Regulations: Keep up to date with the legal landscape surrounding information collection, including GDPR and other relevant laws, to ensure compliance. A Data Protection Impact Assessment (DPIA) should be carried out to evaluate the necessity and proportionality of processing personal information, ensuring that your extraction activities adhere to privacy principles and do not violate access controls. This includes evaluating risks to data subjects and implementing appropriate safeguards.
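The robots.txt and request-rate practices above can be sketched in code. This is a minimal sketch, assuming Python's standard `urllib.robotparser` module; the crawler name, sample robots.txt content, and `Throttle` class are hypothetical, and the demo replaces the real sleep with a no-op so that it finishes instantly.

```python
import time
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-marketing-bot"  # hypothetical crawler name

# Hypothetical robots.txt content; a live scraper would download the real file.
SAMPLE_ROBOTS_TXT = """
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""


def load_rules(robots_txt):
    """Parse robots.txt text into a rule set that can be queried."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules


class Throttle:
    """Enforce a minimum pause between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last_request = None

    def wait(self):
        now = self._clock()
        if self._last_request is not None:
            remaining = self.min_interval - (now - self._last_request)
            if remaining > 0:
                self._sleep(remaining)
        self._last_request = self._clock()


rules = load_rules(SAMPLE_ROBOTS_TXT)
delay = rules.crawl_delay(USER_AGENT) or 1  # fall back to 1 second if unset
# No-op sleep for the demo only; drop the sleep argument to pause for real.
throttle = Throttle(min_interval=delay, sleep=lambda seconds: None)

for url in ["https://example.com/products", "https://example.com/private/users"]:
    if rules.can_fetch(USER_AGENT, url):
        throttle.wait()
        print("allowed:", url)
    else:
        print("skipped:", url)
```

Passing the clock and sleep functions in as parameters is a design choice that keeps the throttle testable without real waiting. Note that a permissive robots.txt does not override a site's terms of service or data protection law.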

Troubleshoot Common Database Scraping Issues

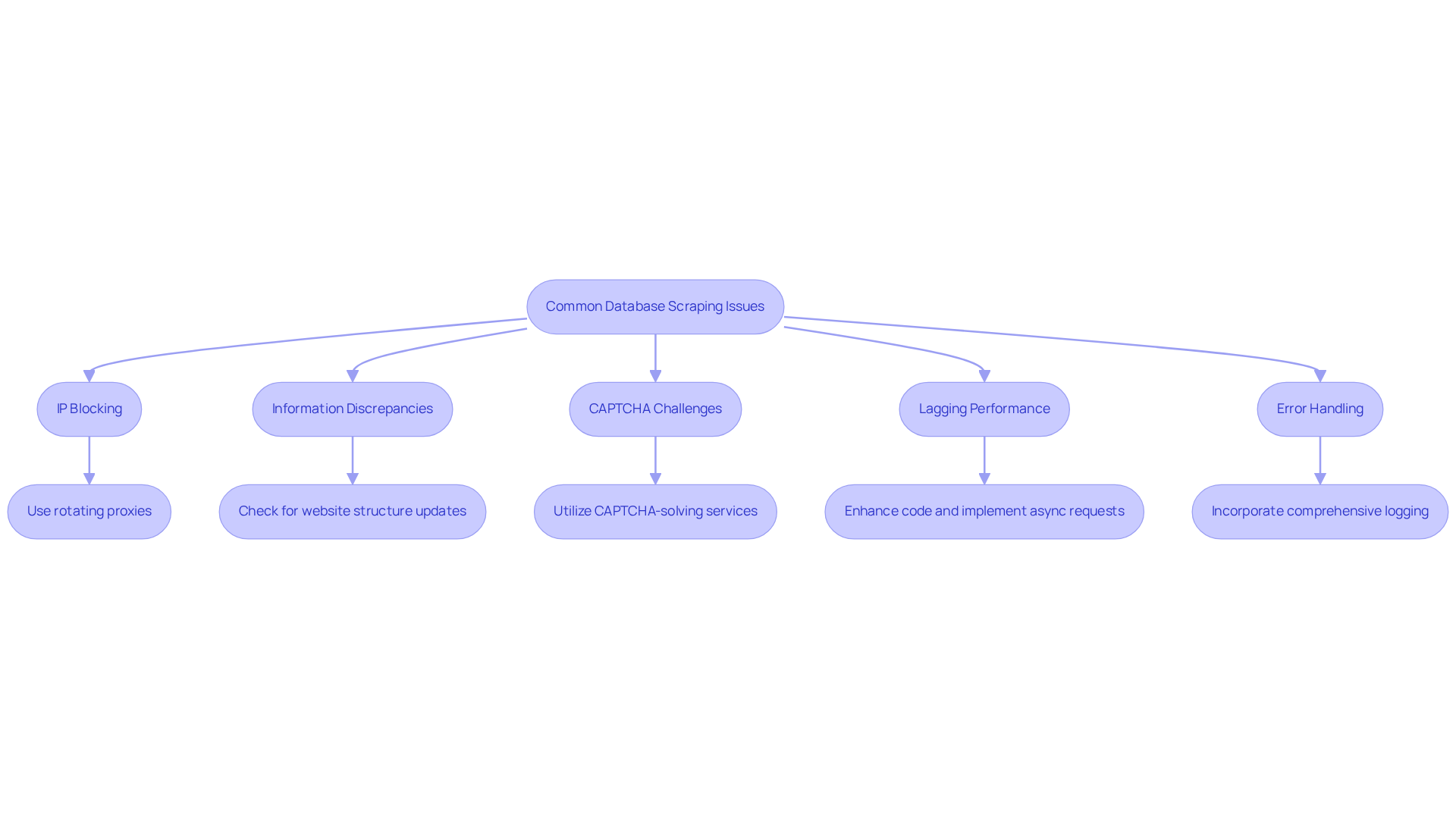

Several common issues may arise when engaging in database scraping. Here’s how to troubleshoot them effectively:

- IP Blocking: To mitigate the risk of IP bans, utilise rotating proxies that distribute requests across a diverse range of IP addresses. This strategy significantly lowers the chances of being flagged as a bot, as it mimics human browsing behaviour.

- Information Discrepancies: Inconsistent scraped information often results from changes in the target website's structure. Regularly check for updates and modify your extraction logic to match any changes in the HTML structure, ensuring the extracted data remains accurate.

- CAPTCHAs: Encountering CAPTCHAs can hinder your data collection. Utilise CAPTCHA-solving services or implement delays between requests to reduce the chances of activating these security protocols.

- Slow Performance: If your scraper runs slowly, improve your code by removing superfluous calls and focusing on crucial information points. Implementing asynchronous requests can enhance speed and efficiency, enabling quicker data retrieval.

- Error Handling: Robust error handling is crucial for managing unexpected issues. Incorporate comprehensive logging to capture errors, allowing for thorough review and adjustments to your scraper as needed. This proactive approach ensures resilience in your scraping operations.
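Several of the remedies above (spreading requests across IP addresses, pausing between attempts, and logging failures) can be combined in one retry helper. The following is a minimal sketch rather than a definitive implementation: the proxy endpoints, the `BlockedError` exception, and the `flaky_fetch` stand-in for a real HTTP call are all hypothetical, and the demo records the back-off delays instead of sleeping.

```python
import itertools
import logging
import time

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("scraper")

# Hypothetical proxy endpoints; substitute the ones from your provider.
PROXIES = ["http://proxy-a.example.com:8080", "http://proxy-b.example.com:8080"]


class BlockedError(Exception):
    """Raised by the fetch function when the target refuses the request."""


def fetch_with_retries(url, fetch, proxies=PROXIES, max_attempts=4,
                       base_delay=1.0, sleep=time.sleep):
    """Call fetch(url, proxy), rotating proxies and backing off on failure."""
    proxy_pool = itertools.cycle(proxies)
    for attempt in range(1, max_attempts + 1):
        proxy = next(proxy_pool)
        try:
            return fetch(url, proxy)
        except BlockedError as error:
            log.warning("attempt %d via %s failed: %s", attempt, proxy, error)
            if attempt == max_attempts:
                log.error("giving up on %s after %d attempts", url, attempt)
                raise
            # Exponential back-off: 1s, 2s, 4s, ... with the default base_delay.
            sleep(base_delay * 2 ** (attempt - 1))


# Stand-in for a real HTTP call: fails twice, then succeeds.
calls = []


def flaky_fetch(url, proxy):
    calls.append(proxy)
    if len(calls) < 3:
        raise BlockedError("HTTP 429")
    return f"<html>fetched {url}</html>"


delays = []  # records the requested pauses instead of actually sleeping
page = fetch_with_retries("https://example.com/products", flaky_fetch,
                          sleep=delays.append)
print(page)
print("proxies used:", calls)
print("back-off delays:", delays)
```

The logged warnings give a record of which proxy failed and why, which is the kind of evidence needed when deciding whether to adjust delays, replace proxies, or update the extraction logic.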

Conclusion

In conclusion, mastering database scraping is crucial for digital marketers who seek to leverage data for a competitive edge. By effectively employing this technique, marketers can uncover valuable insights that inform decision-making and refine their strategies.

Key points discussed throughout the article include the role of database scraping in competitor analysis, market research, and lead generation. The article outlined the essential steps for establishing a scraping environment, from selecting appropriate tools to implementing ethical scraping practices. Additionally, addressing common troubleshooting issues facilitates a smoother data extraction process, enabling marketers to concentrate on deriving actionable insights rather than being hindered by technical difficulties.

As the digital landscape evolves, the importance of database scraping will only grow. Marketers are urged to adopt these techniques and tools to stay competitive and responsive to market dynamics. By following ethical guidelines and remaining aware of legal considerations, businesses can utilise data responsibly, ultimately enhancing their marketing effectiveness and building trust with their audience.

Frequently Asked Questions

What is database scraping?

Database scraping refers to the automated retrieval of information from various online sources, including websites and databases, to gather valuable insights.

How does database scraping benefit competitor analysis?

Scraping competitor websites allows marketers to monitor pricing strategies, product offerings, and promotional activities, enabling them to adjust their strategies accordingly. For example, UK retailer John Lewis achieved a 4% sales increase through effective competitor price monitoring.

In what ways does database scraping assist market research?

Database scraping helps marketers identify trends, consumer preferences, and emerging market opportunities, supporting comprehensive market analysis and the crafting of targeted campaigns that resonate with audiences.

How does database scraping streamline lead generation?

Automated extraction efficiently collects contact information and other pertinent details, enhancing lead quality while ensuring GDPR compliance, allowing marketers to focus on high-potential prospects.

Why is understanding database scraping important for marketers?

Understanding the applications of database scraping is crucial for marketers aiming to effectively utilise information extraction in their campaigns, which can lead to improved engagement and conversions.

What is the projected growth of the web harvesting software industry?

The worldwide web harvesting software industry is projected to reach $143.99 billion by 2032, indicating the growing significance of data extraction in digital marketing.

List of Sources

- Understand Database Scraping and Its Role in Digital Marketing

- Exploring Business Benefits of Web Scraping: Key Stats & Trends (https://browsercat.com/post/business-benefits-web-scraping-statistics-trends)

- Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)

- eCommerce Data Scraping in 2026: The Ultimate Strategic Guide (https://groupbwt.com/blog/ecommerce-data-scraping)

- State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- 2026 Web Scraping Industry Report - PDF (https://zyte.com/whitepaper-ebook/2026-web-scraping-industry-report)

- Set Up Your Database Scraping Tools and Environment

- Best Web Scraping Tools in 2026: I Tested 30+ Tools, and These Are the Only Ones Worth Using (https://dev.to/nitinfab/best-web-scraping-tools-in-2026-i-tested-30-tools-and-these-are-the-only-ones-worth-using-11l3)

- Web Scraping Statistics & Trends You Need to Know in 2026 (https://scrapingdog.com/blog/web-scraping-statistics-and-trends)

- 3 Case Studies of AI Web Scraping Technology - Cloud Wars (https://cloudwars.com/cybersecurity/3-case-studies-of-ai-web-scraping-technology)

- Follow Best Practices for Ethical Database Scraping

- News companies are doubling down to fight against AI Web scrapers (https://inma.org/blogs/Product-and-Tech/post.cfm/news-companies-are-doubling-down-to-fight-against-ai-web-scrapers)

- Is Web Scraping Legal in the UK? GDPR & DPA 2018 Guide (2026) (https://ukdataservices.co.uk/blog/articles/web-scraping-compliance-uk-guide)

- Understanding Web Scraping Legality: Global Insights & Stats (https://browsercat.com/post/web-scraping-legality-global-statistics)

- Legal & Ethical Web Scraping- Compliance Guide 2025 (https://forage.ai/blog/legal-and-ethical-issues-in-web-scraping-what-you-need-to-know)

- Troubleshoot Common Database Scraping Issues

- Monitoring and Maintaining Web Scrapers: Best Practices for Long-Term Reliability (https://linkedin.com/pulse/monitoring-maintaining-web-scrapers-best-practices-long-term-hasan-b0isc)

- Top Web Scraping Challenges in 2026 (https://scrapingbee.com/blog/web-scraping-challenges)

- Web Scraping Best Practices: Avoiding IP Blocking and Captchas in Python (https://linkedin.com/pulse/web-scraping-best-practices-avoiding-ip-blocking-captchas-python-vhrrf)

- The Most Common Web Scraping Challenges in 2026 (https://research.aimultiple.com/web-scraping-challenges)

- How to Avoid Web Scraper IP Blocking? (https://scrapfly.io/blog/posts/how-to-avoid-web-scraping-blocking-ip-addresses)