Introduction

Web scraping has emerged as a vital tool for businesses seeking to leverage the extensive information available online, especially for extracting valuable contact details from various websites. This guide provides a thorough approach to web harvesting, detailing essential concepts, tools, and techniques that streamline the extraction process. As the web scraping landscape continues to evolve, it raises important questions:

- How can one effectively navigate the complexities of ethical considerations?

- What are the technical challenges involved?

- How can one ensure efficient and compliant data collection?

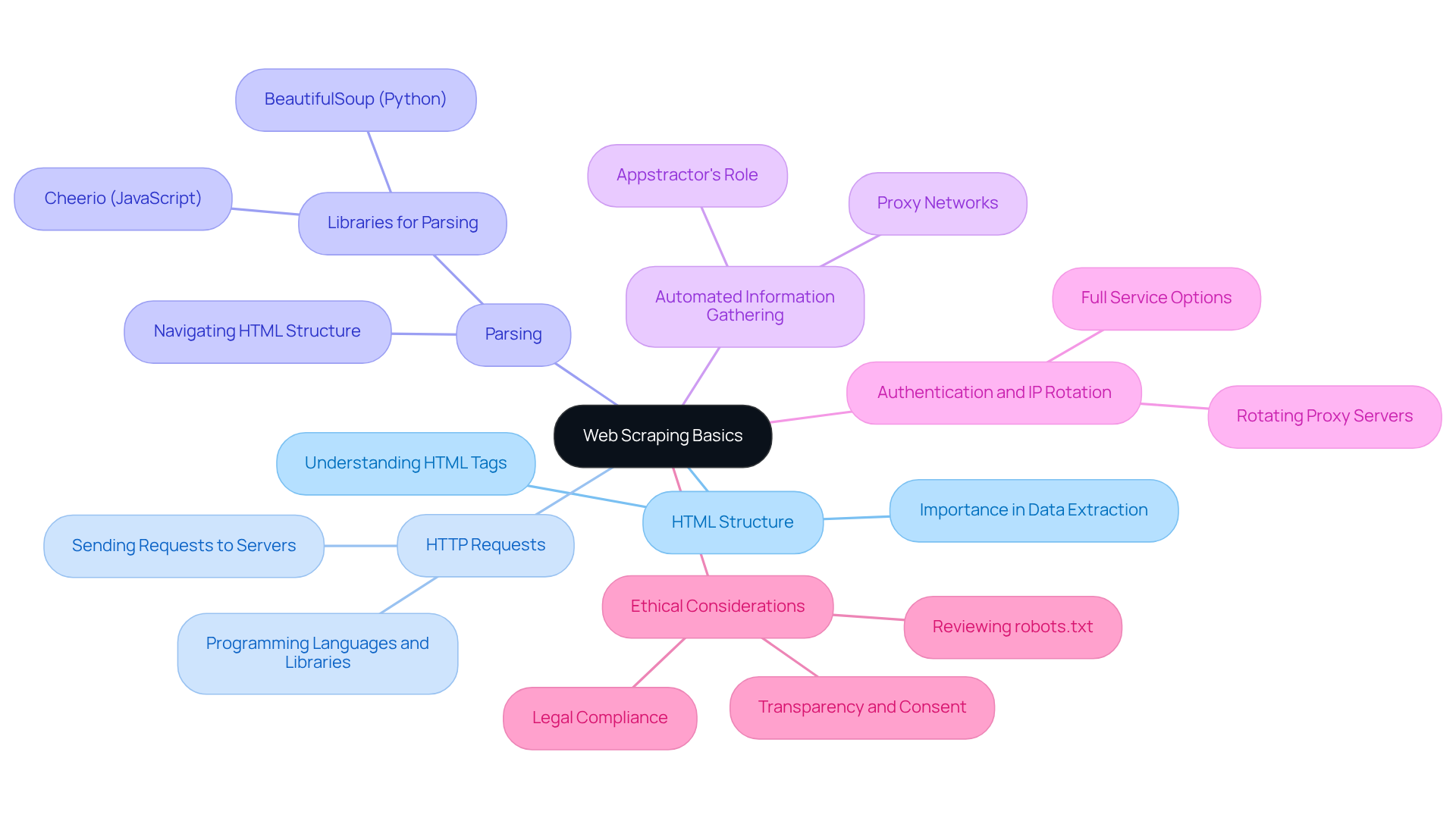

Understand Web Scraping Basics

Web harvesting is an automated method for extracting information, such as contact details, from websites. The process involves fetching a web page and parsing its content to retrieve specific details. Approximately 65% of businesses were using web harvesting to extract contact information from websites in 2026, which highlights its significance in modern information strategies. Below are some key concepts:

- HTML Structure: Websites are built using HTML, which defines the structure of the content. A solid understanding of HTML tags (such as `<div>`, `<span>`, and `<a>`) is crucial for identifying where the desired information resides.

- HTTP Requests: The web extraction process typically begins by sending an HTTP request to a server to retrieve the web page. This can be accomplished using various programming languages and libraries, providing flexibility in implementation.

- Parsing: After retrieving the HTML content, it must be parsed to extract relevant information. Libraries like BeautifulSoup in Python or Cheerio in JavaScript are commonly used for this purpose, enabling developers to navigate the HTML structure effectively.

- Automated Information Gathering: Appstractor enhances the web scraping process by automating information collection through advanced proxy networks and scraping technology. This automation removes the need for manual collection and makes it easier for businesses to gather structured information, such as contact details, from websites.

- Authentication and IP Rotation: Appstractor offers various proxy options, including Rotating Proxy Servers for self-serve IPs and a Full Service option for turnkey information delivery. These options are designed to support seamless integration and efficient information extraction while adhering to ethical standards.

- Ethical Considerations: It is essential to review the website's robots.txt file to understand the scraping rules set by the site owner. Following these guidelines is crucial to prevent legal issues and ensure responsible information collection practices. As the web evolves, adherence to ethical standards is increasingly important, with organisations expected to gather only essential information and respect website rules. F5 Labs emphasises that companies that build transparent systems and honour consent will not only comply with the future of the web but also help shape it.
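The robots.txt review described above can be automated. The following is a minimal sketch that uses Python's standard-library `urllib.robotparser`. The robots.txt content and the `ContactBot` user-agent name are hypothetical. The rules are parsed from a string so the example runs without network access. Against a live site you would call `set_url()` and `read()` instead.

```python
from urllib import robotparser

# Hypothetical robots.txt rules, used for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_robots_parser(robots_txt: str) -> robotparser.RobotFileParser:
    """Parse robots.txt rules from a string (no network access)."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser: robotparser.RobotFileParser, user_agent: str, url: str) -> bool:
    """Return True if the parsed rules let user_agent fetch url."""
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rules = build_robots_parser(ROBOTS_TXT)
    print(is_allowed(rules, "ContactBot", "https://example.com/contact"))       # True
    print(is_allowed(rules, "ContactBot", "https://example.com/private/team"))  # False
    print(rules.crawl_delay("ContactBot"))  # 5 (seconds to wait between requests)
```

A `Crawl-delay` value, where a site sets one, is a useful default for spacing out your own requests.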

In conclusion, understanding these fundamental concepts is vital for anyone looking to implement effective web extraction strategies. As you delve deeper into web harvesting, consider exploring advanced methods and tools, such as those offered by Appstractor, which can enhance your information gathering capabilities.
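The HTTP Requests and Parsing concepts can be made concrete with a short Python sketch. This is an illustration under assumptions, not a production scraper:

- It swaps BeautifulSoup for the standard library's `urllib.request` and `html.parser`, so it runs without extra packages.
- The `ContactBot` user-agent string and the simple email pattern are hypothetical.
- `fetch_html()` performs real network I/O, while `extract_contacts()` works on any HTML string.

```python
from __future__ import annotations

import re
from html.parser import HTMLParser
from urllib.request import Request, urlopen

# Hypothetical bot identifier; name your scraper honestly.
USER_AGENT = "ContactBot/0.1 (+https://example.com/bot)"

# Deliberately simple pattern; real-world addresses can be more varied.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def build_request(url: str) -> Request:
    """Prepare an HTTP GET request that identifies the scraper."""
    return Request(url, headers={"User-Agent": USER_AGENT})


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Send the request and decode the body (this performs network I/O)."""
    with urlopen(build_request(url), timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class ContactParser(HTMLParser):
    """Collect mailto:/tel: links from <a> tags and emails found in text."""

    def __init__(self) -> None:
        super().__init__()
        self.emails: list[str] = []
        self.phones: list[str] = []

    def handle_starttag(self, tag: str, attrs: list[tuple[str, str | None]]) -> None:
        if tag != "a":
            return
        href = dict(attrs).get("href") or ""
        if href.lower().startswith("mailto:"):
            self.emails.append(href[len("mailto:"):])
        elif href.lower().startswith("tel:"):
            self.phones.append(href[len("tel:"):])

    def handle_data(self, data: str) -> None:
        # Catch addresses written as plain text rather than as links.
        self.emails.extend(EMAIL_RE.findall(data))


def extract_contacts(html: str) -> dict[str, list[str]]:
    """Parse an HTML string and return the raw emails and phone numbers found."""
    parser = ContactParser()
    parser.feed(html)
    parser.close()
    return {"emails": parser.emails, "phones": parser.phones}


if __name__ == "__main__":
    sample = (
        '<div class="contact"><a href="mailto:sales@example.com">Email us</a>'
        "<span> or write to info@example.org</span>"
        '<a href="tel:+442079460000">Call</a></div>'
    )
    print(extract_contacts(sample))
    # {'emails': ['sales@example.com', 'info@example.org'], 'phones': ['+442079460000']}
```

The values come back raw. A link whose text repeats its `mailto:` address, for example, is collected twice, so clean and de-duplicate them before export.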

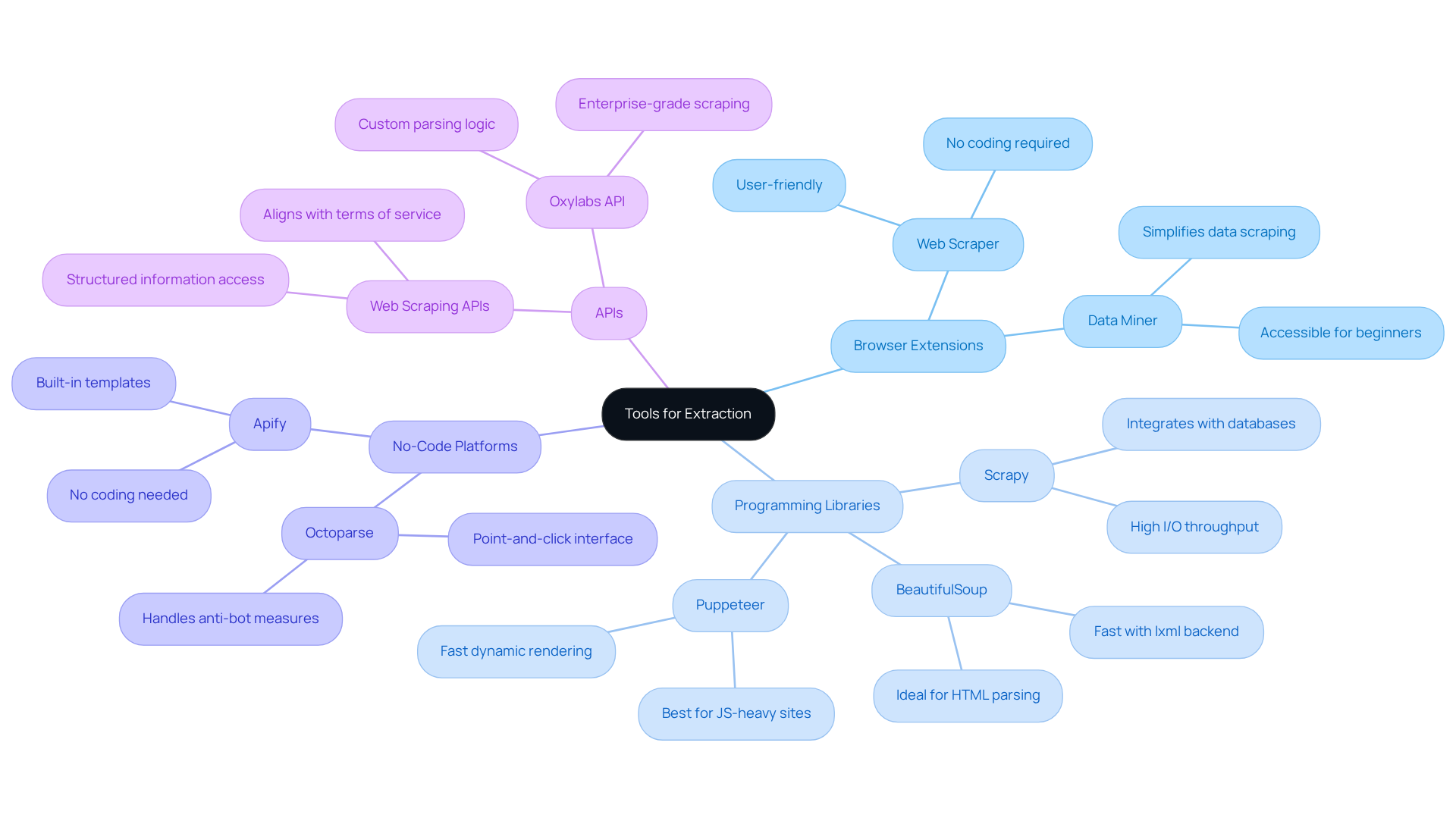

Choose the Right Tools for Extraction

Selecting the appropriate tools for web harvesting is crucial for efficient information extraction. Below are some popular options:

- Browser Extensions: User-friendly tools like Web Scraper and Data Miner cater to beginners, enabling data scraping without any coding knowledge. These extensions simplify the process, making it accessible for those new to web data extraction.

- Programming Libraries: For users with coding experience, libraries such as BeautifulSoup and Scrapy (both in Python) and Puppeteer (in JavaScript) provide robust capabilities for managing more complex data extraction tasks. These libraries offer enhanced customization and control over the data extraction process.

- No-Code Platforms: Tools like Octoparse and Apify provide no-code interfaces, appealing to users who prefer not to engage in programming. These platforms frequently offer built-in templates for typical data extraction scenarios, simplifying the setup process.

- APIs: Numerous websites offer APIs that enable structured information access. Utilising an API is often more effective and aligns with a site's terms of service, so it is advisable to check whether one is available before resorting to extraction methods.
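When an API is available, the response is usually structured data such as JSON. Pulling out contact fields then becomes a lookup rather than HTML parsing. The sketch below assumes a hypothetical response: a `results` array whose records have `name`, `email` and `phone` fields. Any real API documents its own endpoint, authentication and schema.

```python
from __future__ import annotations

import json

# Hypothetical API response body; field names are assumptions for illustration.
API_RESPONSE = """
{
  "results": [
    {"name": "Acme Ltd", "email": "hello@acme.example", "phone": "+44 20 7946 0000"},
    {"name": "Globex", "email": null, "phone": "+1 555 0100"}
  ]
}
"""

FIELDS = ("name", "email", "phone")


def contacts_from_api(body: str) -> list[dict[str, str]]:
    """Turn each API record into a flat contact dict, skipping empty fields."""
    payload = json.loads(body)
    contacts = []
    for record in payload.get("results", []):
        contacts.append({field: record[field] for field in FIELDS if record.get(field)})
    return contacts


if __name__ == "__main__":
    for contact in contacts_from_api(API_RESPONSE):
        print(contact)
```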

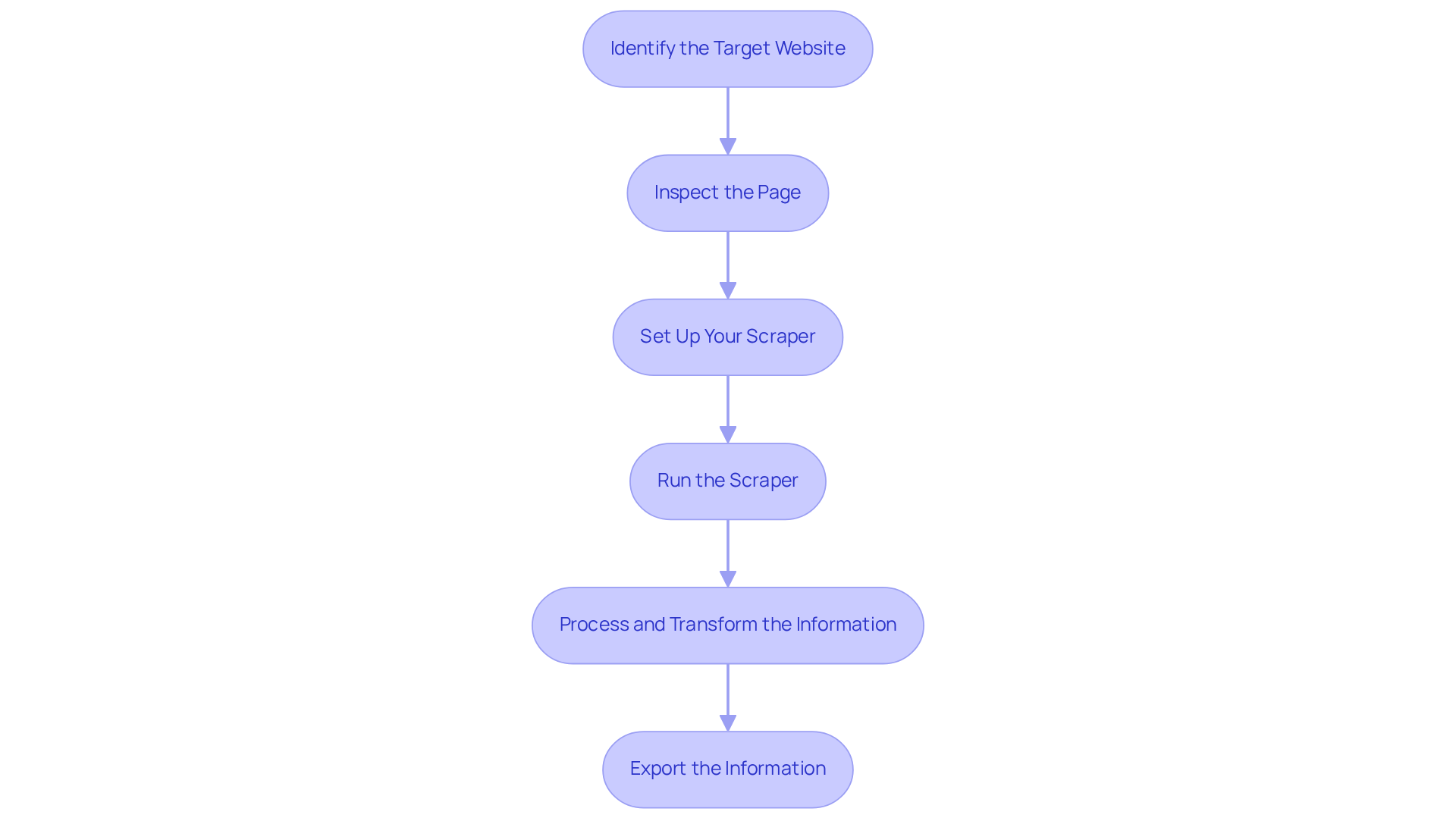

Execute the Extraction Process

To extract contact information from a website using Appstractor's advanced data mining solutions, follow these steps:

- Identify the Target Website: Choose a publicly accessible site whose terms and applicable regulations permit ethical information extraction.

- Inspect the Page: Utilise your browser's developer tools by right-clicking on the page and selecting 'Inspect' to analyse the HTML structure. Focus on identifying elements that contain contact information, such as email addresses and phone numbers. This step is crucial as it allows you to visualise the HTML as a tree, making it easier to locate specific tags and attributes.

- Set Up Your Scraper: Depending on your chosen method:

  - For browser extensions, configure the scraping settings to target the identified HTML elements.

  - For programming libraries, write a script that sends an HTTP request to the website, retrieves the HTML content, and uses a library like BeautifulSoup to parse it for the required information. Consider utilising Appstractor's Rotating Proxy Servers for self-serve IPs or the Full Service option for complete information delivery, which can simplify this process.

- Run the Scraper: Carry out the scraping process while monitoring for any errors or issues that may arise during retrieval. Ensure that your script can adapt to potential changes in the website's structure, which can affect information retrieval. If you choose Appstractor's services, they manage these complexities for you, ensuring a smooth retrieval experience.

- Process and Transform the Information: After retrieval, it is essential to clean the information by removing HTML tags and ensuring consistency in formats. Appstractor's solutions include information normalisation and de-duplication processes, enhancing quality and usability for analysis.

- Export the Information: Once retrieval and processing are complete, export the information into a structured format such as CSV or JSON. A structured export makes the extracted contact details easier to analyse and to load into other tools.
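The processing and export steps above can be sketched with Python's standard library. The normalisation rules are assumptions chosen for illustration:

- Emails lose any `mailto:` prefix and `?subject=` query, have %-escapes decoded, and are lowercased.
- Phone numbers are reduced to digits with an optional leading `+`.

Duplicates are then removed in first-seen order, and the rows are written as CSV or JSON. Helper names such as `prepare_rows` are hypothetical.

```python
from __future__ import annotations

import csv
import io
import json
import re
from urllib.parse import unquote


def normalise_email(raw: str) -> str:
    """Strip a mailto: prefix and any ?query, decode %-escapes, lowercase.

    Lowercasing the whole address is an assumption: most providers treat the
    local part case-insensitively, although the standard does not require it.
    """
    value = raw.strip()
    if value.lower().startswith("mailto:"):
        value = value[len("mailto:"):]
    value = value.split("?", 1)[0]
    return unquote(value).strip().lower()


def normalise_phone(raw: str) -> str:
    """Strip a tel: prefix and keep only digits plus a leading '+'."""
    value = raw.strip()
    if value.lower().startswith("tel:"):
        value = value[len("tel:"):].strip()
    digits = re.sub(r"\D", "", value)
    return ("+" + digits) if value.startswith("+") and digits else digits


def dedupe(values: list[str]) -> list[str]:
    """Drop empty strings and duplicates, keeping first-seen order."""
    return list(dict.fromkeys(v for v in values if v))


def prepare_rows(raw_emails: list[str], raw_phones: list[str]) -> list[dict[str, str]]:
    """Normalise, de-duplicate and label contact values as export rows."""
    emails = dedupe([normalise_email(e) for e in raw_emails])
    phones = dedupe([normalise_phone(p) for p in raw_phones])
    return [{"type": "email", "value": e} for e in emails] + [
        {"type": "phone", "value": p} for p in phones
    ]


def to_csv(rows: list[dict[str, str]]) -> str:
    """Serialise rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["type", "value"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(rows: list[dict[str, str]]) -> str:
    """Serialise rows as an indented JSON array."""
    return json.dumps(rows, indent=2)


if __name__ == "__main__":
    rows = prepare_rows(
        ["mailto:Sales@Example.com?subject=Hi", "sales@example.com", "info@example.org"],
        ["tel:+44 20 7946 0000", "+44 20-7946-0000"],
    )
    print(to_csv(rows))
```

Both phone inputs in the usage example collapse to `+442079460000`, and both sales addresses collapse to `sales@example.com`, so the export keeps three rows.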

By following these steps and utilising Appstractor's efficient web information collection solutions, you can effectively gather contact details while adhering to best practices in web scraping, including complying with legal guidelines and ensuring high information quality.

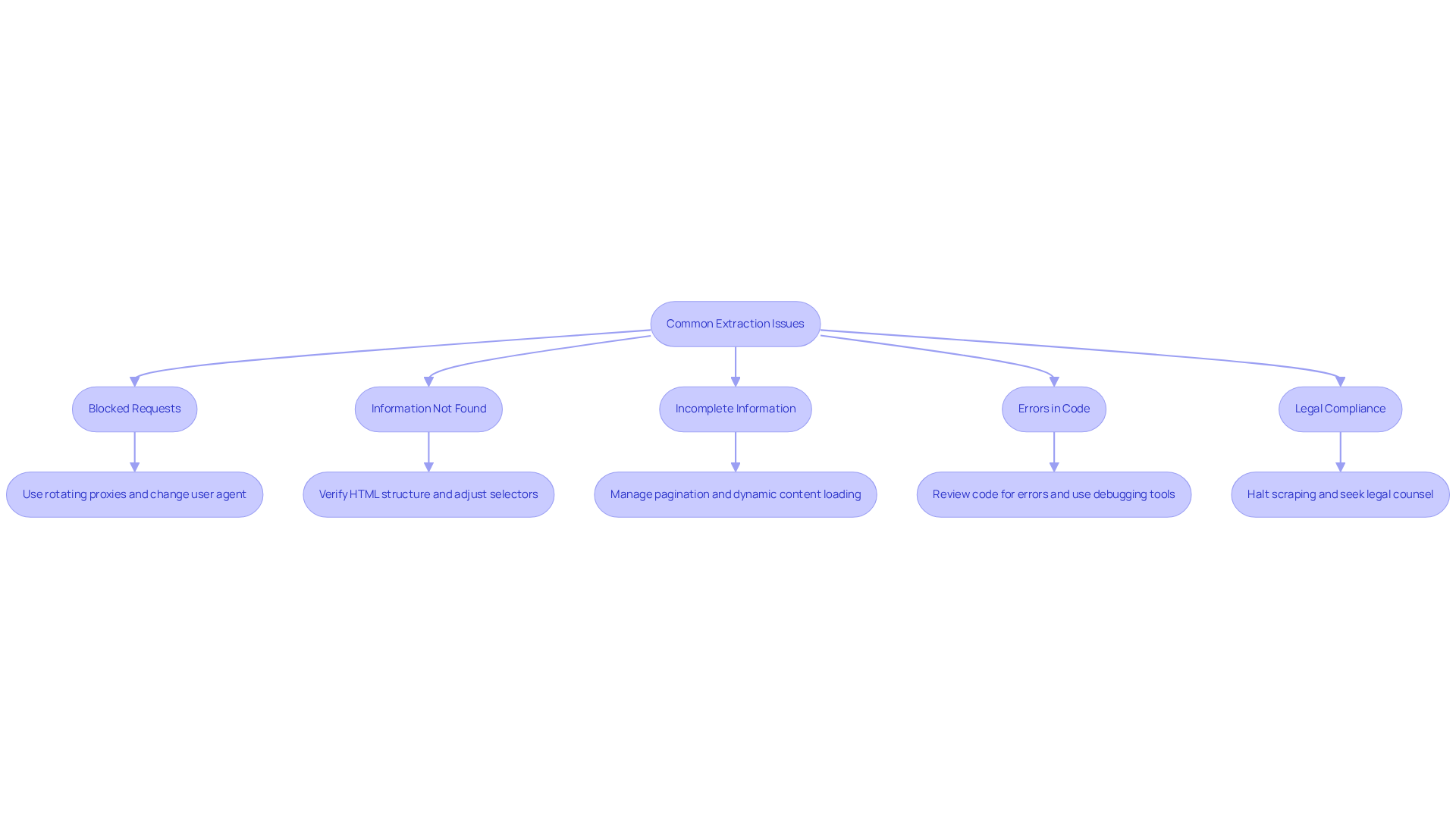

Troubleshoot Common Extraction Issues

Even experienced scrapers encounter obstacles during information extraction. Here are some prevalent issues and effective troubleshooting strategies:

- Blocked Requests: When requests are blocked, employing rotating proxies can help mask your IP address and distribute requests across multiple addresses, reducing the likelihood of detection. Changing your user agent to mimic a standard browser can also enhance success rates.

- Information Not Found: If your scraper fails to locate the desired information, verify the HTML structure. Websites frequently change their layout and markup, which can break your selectors. Adjusting your scraping logic to accommodate these changes is crucial.

- Incomplete Information: To tackle incomplete information extraction, ensure your scraper is set up to manage pagination and dynamic content loading. Many websites use JavaScript to load additional data, which requires specific handling, such as rendering the page in a headless browser with a tool like Puppeteer.

- Errors in Code: Regularly review your code for syntax errors or logical flaws. Utilising debugging tools can assist in pinpointing and resolving issues effectively.

- Legal Compliance: Adhering to legal and ethical standards is paramount in web data extraction. As of 2026, violations can lead to significant penalties. Under the GDPR, for example, fines can reach €20 million or 4% of a company's total worldwide annual turnover, whichever is higher. If you receive legal notices, halt scraping immediately and seek legal counsel to navigate compliance issues.
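Rotating proxies and user agents, as suggested under Blocked Requests, amounts to picking a different pair for each request. The round-robin sketch below uses `itertools.cycle` and `urllib.request.ProxyHandler`. The proxy addresses (from the 203.0.113.0/24 documentation range) and the user-agent strings are placeholders. A managed service would supply its own endpoints.

```python
from __future__ import annotations

import itertools
from urllib.request import OpenerDirector, ProxyHandler, Request, build_opener

# Placeholder proxy endpoints (203.0.113.0/24 is reserved for documentation).
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]

# Placeholder browser-style User-Agent strings.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7)",
]


class Rotator:
    """Round-robin over proxies and User-Agent strings, one pair per request."""

    def __init__(self, proxies: list[str], user_agents: list[str]) -> None:
        self._proxies = itertools.cycle(proxies)
        self._agents = itertools.cycle(user_agents)

    def next_request(self, url: str) -> tuple[OpenerDirector, Request, str]:
        """Return an opener routed via the next proxy, a request, and that proxy."""
        proxy = next(self._proxies)
        opener = build_opener(ProxyHandler({"http": proxy, "https": proxy}))
        request = Request(url, headers={"User-Agent": next(self._agents)})
        return opener, request, proxy


if __name__ == "__main__":
    rotator = Rotator(PROXIES, USER_AGENTS)
    for _ in range(4):
        opener, request, proxy = rotator.next_request("https://example.com/page")
        # opener.open(request, timeout=10) would send the request through `proxy`.
        print(proxy, "|", request.get_header("User-agent"))
```

The sketch only builds openers and requests. No traffic is sent until `opener.open()` is called.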

Conclusion

In conclusion, understanding how to extract contact information from websites is vital for businesses aiming to enhance their outreach and data collection strategies. This guide has provided a comprehensive overview of the web scraping process, highlighting the importance of grasping fundamental concepts such as HTML structure, HTTP requests, and ethical considerations. Mastering these elements enables individuals and organisations to effectively gather valuable information while adhering to best practices.

Key arguments presented include the necessity of selecting the right tools for extraction, whether through browser extensions, programming libraries, or no-code platforms. Each option caters to different skill levels, ensuring that anyone can engage in web scraping, regardless of technical expertise. Additionally, the article emphasises troubleshooting common issues that may arise during the extraction process, equipping users to address challenges effectively.

Ultimately, web scraping serves as a powerful tool for gathering contact information, but it is crucial to approach it responsibly. By respecting legal guidelines and ethical standards, businesses can optimise their data collection efforts while contributing positively to the evolving landscape of the web. Embracing these practices empowers organisations to harness the full potential of web scraping, fostering trust and transparency in their data collection endeavours.

Frequently Asked Questions

What is web scraping?

Web scraping, also known as web harvesting, is an automated method used to extract specific information, such as contact details, from websites by fetching web pages and parsing their content.

Why is understanding HTML important for web scraping?

Understanding HTML is crucial because websites are built using HTML, which defines the structure of the content. Knowledge of HTML tags (like `<div>`, `<span>`, and `<a>`) helps you identify where the desired information resides on a page.

How does the web scraping process begin?

The web scraping process typically begins by sending an HTTP request to a server to retrieve the web page, which can be done using various programming languages and libraries.

What is the role of parsing in web scraping?

After retrieving the HTML content, parsing is needed to extract relevant information. Libraries such as BeautifulSoup in Python or Cheerio in JavaScript are commonly used to navigate the HTML structure effectively.

How does Appstractor enhance the web scraping process?

Appstractor enhances the web scraping process by automating information collection through advanced proxy networks and scraping technology, allowing for efficient gathering of structured information without manual effort.

What are rotating proxy servers and how do they assist in web scraping?

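Rotating proxy servers route your requests through a changing pool of IP addresses, spreading traffic across many addresses so that it is less likely to be blocked. This supports seamless integration and efficient information extraction while adhering to ethical standards. Appstractor offers both Rotating Proxy Servers, which provide self-serve IPs, and full-service solutions for information delivery.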

Why are ethical considerations important in web scraping?

Ethical considerations are important because site owners set scraping rules, typically in a robots.txt file. Reviewing and following those rules helps prevent legal issues and ensures responsible information collection practices.

What should organisations keep in mind regarding ethical web scraping?

Organisations should gather only essential information, respect website rules, and build transparent systems that honour consent, as this will help them comply with future web standards and contribute positively to the web's evolution.

List of Sources

- Understand Web Scraping Basics

  - State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

  - Web Scraping Market Size, Growth Report, Share & Trends 2026 - 2031 (https://mordorintelligence.com/industry-reports/web-scraping-market)

  - How AI Is Changing Web Scraping in 2026 (https://kadoa.com/blog/how-ai-is-changing-web-scraping-2026)

  - Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)

- Choose the Right Tools for Extraction

  - Top 7 Web Scraping Tools for 2026: From Beginner to Pro — Comparison and Rankings (https://mobileproxy.space/en/pages/top-7-web-scraping-tools-for-2026-from-beginner-to-pro--comparison-and-rankings.html)

  - Best Web Scraping Tools in 2026 (https://scrapfly.io/blog/posts/best-web-scraping-tools)

  - Web Scraping Statistics & Trends You Need to Know in 2026 (https://scrapingdog.com/blog/web-scraping-statistics-and-trends)

  - Best Web Scraping Tools in 2026: I Tested 30+ Tools, and These Are the Only Ones Worth Using (https://dev.to/nitinfab/best-web-scraping-tools-in-2026-i-tested-30-tools-and-these-are-the-only-ones-worth-using-11l3)

  - The Top AI Web Scrapers of 2026: An Honest Review (https://kadoa.com/blog/best-ai-web-scrapers-2026)

- Execute the Extraction Process

  - Web Scraping and HTML Basics (https://medium.com/@mervegamzenar/web-scraping-and-html-basics-6975ed22a6ae)

  - Scraping a Web Page Part 1- Inspecting the HTML - The Data School (https://thedataschool.co.uk/conrad-wilson/scraping-a-web-page-part-1-inspecting-the-html)

  - How to Use Web Scraping for Sales Leads Generation 2026 (https://zyndoo.com/blog/blog-5/how-to-use-web-scraping-for-sales-leads-generation-2026-21)

  - B2B Lead Scraping: How to Build Targeted Prospect Lists in 2026 (https://tendem.ai/blog/b2b-lead-scraping-guide)

- Troubleshoot Common Extraction Issues

  - Top Web Scraping Challenges in 2026 (https://scrapingbee.com/blog/web-scraping-challenges)

  - Web Scraping Services: The Complete 2026 Buyer's Guide (https://tendem.ai/blog/web-scraping-services-guide)

  - Stop Getting Blocked: 10 Common Web-Scraping Mistakes & Easy Fixes (https://firecrawl.dev/blog/web-scraping-mistakes-and-fixes)

  - The Most Common Web Scraping Challenges in 2026 (https://aimultiple.com/web-scraping-challenges)