Introduction

Understanding the complexities of web crawling is essential in the current digital landscape, where search engines depend on automated processes to index and rank content. This article explores the fundamental role of web crawling in Search Engine Optimization (SEO), illustrating how it affects website visibility and influences search rankings. As web technologies continue to evolve, businesses face significant challenges in ensuring their sites remain crawlable and effectively indexed.

Define Web Crawling: Understanding the Basics

Web crawling, often known as web scraping or spidering, is an automated process in which search engines and other entities systematically navigate the internet to gather and index content from web pages. This task is carried out by software programs called web crawlers or spiders, which traverse the web by following hyperlinks from one page to another.

The primary objective of web crawling is to collect data for indexing, enabling search engines to deliver relevant search results to users. In 2026, nearly half of all internet traffic is attributed to bots, underscoring the growing reliance on automated processes for data extraction. Understanding web crawling is crucial for optimising websites for search engines, as it significantly impacts how information is discovered and ranked online.

Businesses that utilise web scraping for SEO can gain valuable insights into competitor strategies, monitor keyword performance, and enhance their marketing efforts. Appstractor's advanced data scraping solutions provide real-time SERP monitoring, ad visibility, and competitive analysis while ensuring GDPR compliance, which is essential for ethical data handling.

As technology evolves, staying informed about web scraping techniques and tools becomes increasingly important for digital marketers seeking to maintain a competitive edge. The decline in click-through rates from AI tools, which fell from 0.8% in Q2 to 0.27% in Q4 of 2025, highlights the need for effective strategies, such as those Appstractor offers, to navigate these shifts in SEO dynamics. Furthermore, the challenges posed by AI bot scraping, which grew significantly in 2025, emphasise the importance of robust bot management strategies.

Explain the Importance of Crawling in SEO

Web crawling is fundamental to Search Engine Optimization (SEO) because it determines how search engines discover and index web pages. When a web crawler, such as Googlebot, visits a site, it examines the content, structure, and interlinking of pages. This analysis informs the search engine's algorithms about the site's relevance and quality. Crawling is essential for ensuring that new and revised content is registered quickly, allowing it to appear in search results. Without crawling, even high-quality content can remain hidden from potential visitors, significantly limiting its reach.

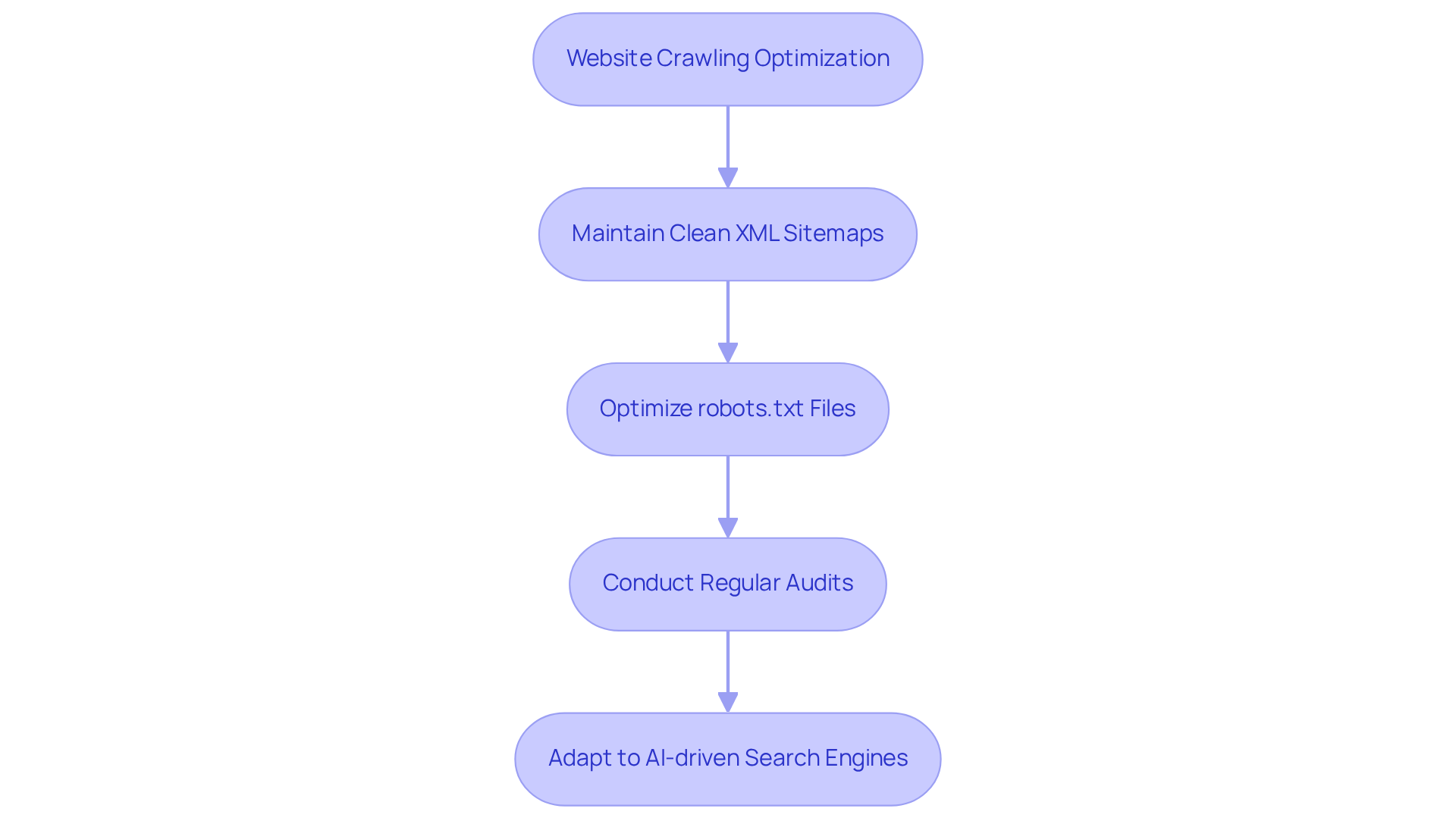

Understanding web crawling is crucial for optimising a site to enhance visibility and drive organic traffic. Businesses that prioritise crawlability by maintaining clean sitemaps and optimising their robots.txt files can significantly improve their indexing rates. Regular crawlability audits help ensure that high-value pages are prioritised, preventing low-value content from consuming crawl resources that should be allocated to more impactful pages.

As search technologies evolve, the importance of crawlability becomes even more pronounced. Understanding web crawling helps ensure that websites are easily crawlable and structured for semantic understanding, which increases their chances of being indexed effectively and ranking well in search results. In competitive sectors, such as e-commerce and travel, ensuring that a site is crawlable is not just beneficial but essential for maintaining visibility and achieving business objectives. To help users apply these techniques effectively, user manuals provide guidance on using rotating proxies and data extraction solutions.

Describe How Web Crawlers Operate: Mechanisms and Processes

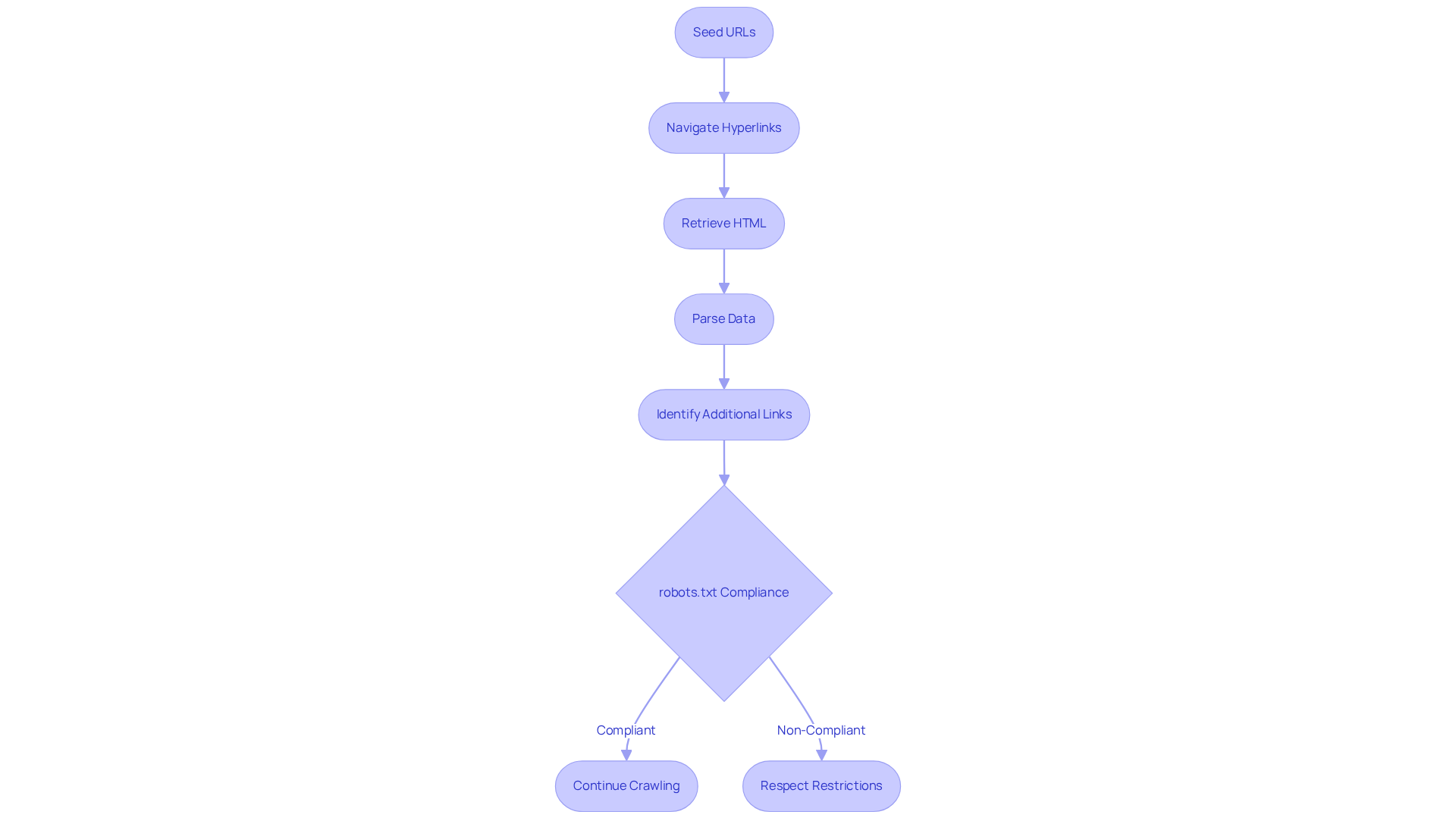

Web crawlers operate through a structured series of steps, beginning with a list of known URLs, commonly called seeds. From these starting points, bots follow hyperlinks to discover new pages. The process involves retrieving the HTML of each page, parsing it to extract relevant data, and identifying additional links for further crawling.
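
The steps above (seed list, fetch, parse, extract links, repeat) can be sketched as a small breadth-first crawler. This is a minimal illustration rather than how any particular search engine works: the `PAGES` dictionary, the `fetch` stand-in, and the `example.com` URLs are all hypothetical and replace real HTTP requests so the sketch runs offline.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urldefrag

# Hypothetical in-memory "web" so the sketch runs without network access.
PAGES = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/c#top">C</a>',
    "https://example.com/b": '<a href="/">Home</a>',
    "https://example.com/c": "<p>No links here</p>",
}


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def fetch(url):
    """Stand-in for an HTTP GET; returns HTML, or None for unknown pages."""
    return PAGES.get(url)


def crawl(seeds, max_pages=100):
    """Breadth-first crawl from the seed URLs; returns pages in visit order."""
    frontier = deque(seeds)   # URLs waiting to be fetched
    seen = set(seeds)         # URLs already queued, to avoid revisiting
    visited = []
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links and drop #fragments before queueing.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return visited


order = crawl(["https://example.com/"])
print(order)
```

The `seen` set is what keeps the crawler from looping when pages link back to each other, as the home page and `/b` do here.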

Well-behaved automated agents also adhere to the guidelines in a website's robots.txt file, which specifies which pages should remain off-limits. This compliance is crucial for responsible crawling and for respecting content restrictions. Advanced crawlers can process thousands of URLs daily, greatly increasing the volume of data that can be collected.
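
As a rough illustration of robots.txt compliance, the sketch below uses Python's standard `urllib.robotparser` module to decide whether a URL may be fetched. The robots.txt content, the `ExampleBot` user agent, and the URLs are made up for the example; a real crawler would download the file from the target site instead of parsing an inline string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, parsed from a string instead of fetched.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(url, user_agent="ExampleBot"):
    """Return True if the parsed rules permit user_agent to fetch url."""
    return parser.can_fetch(user_agent, url)


print(allowed("https://example.com/products/widget"))
print(allowed("https://example.com/admin/settings"))
```

A crawler would typically run a check like `allowed()` before every request and skip any URL for which it returns False.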

The collected data is subsequently indexed by search engines, allowing users to access relevant information through search queries. For instance, some crawlers follow links across e-commerce sites to aggregate data, providing insights into pricing and inventory levels.

Appstractor ensures that its data scraping solutions are not only cost-effective but also scalable and secure, in alignment with GDPR requirements. Additionally, features such as automated updates and anywhere access enhance the overall efficiency of web retrieval processes. As the landscape of web exploration evolves, understanding these mechanisms becomes essential for ensuring compliance with emerging regulations.

Identify Challenges and Considerations in Web Crawling

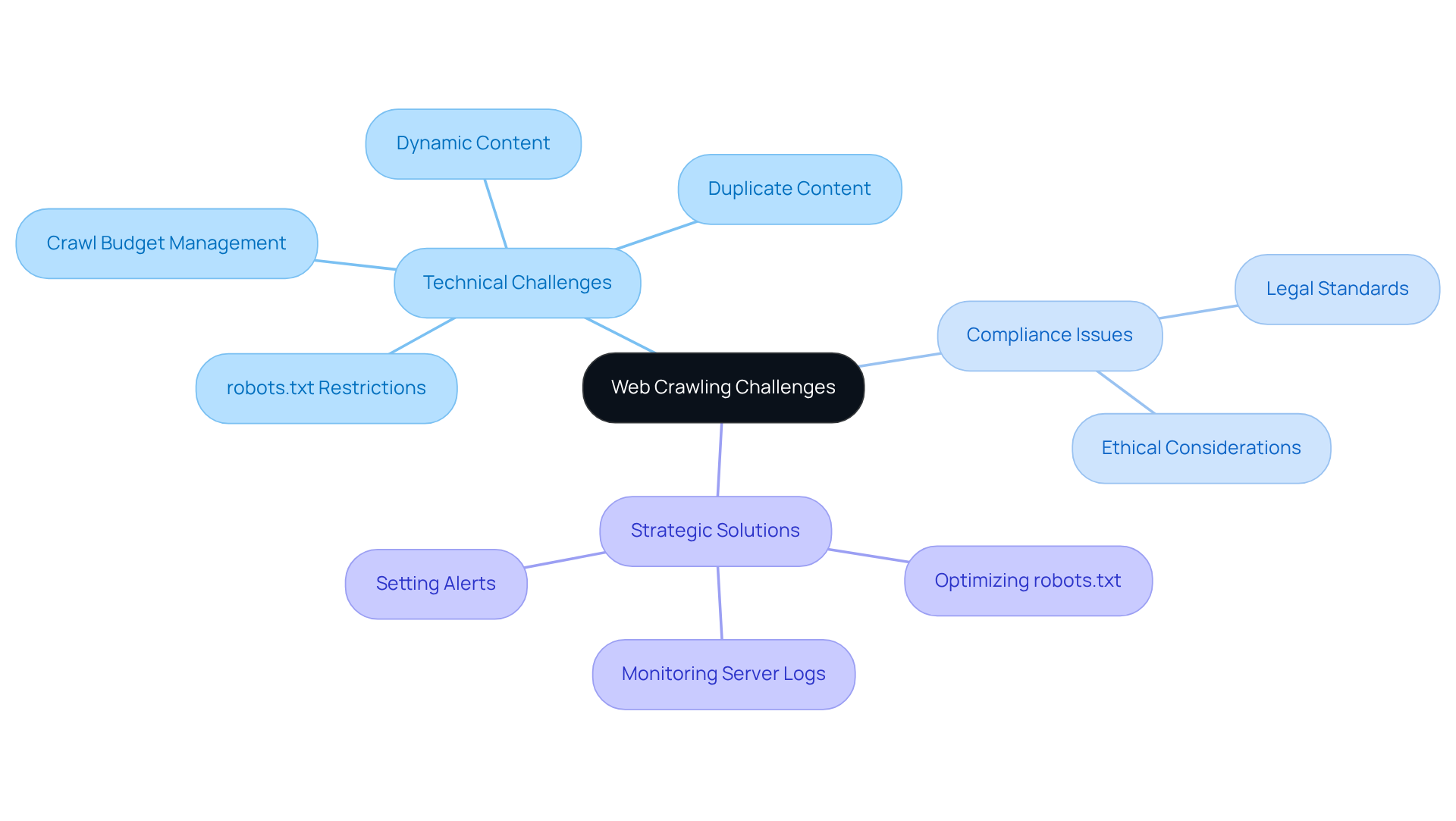

Web crawling involves several challenges that can significantly reduce its efficiency and effectiveness. A primary concern is the use of robots.txt files by websites, which can restrict automated agents from accessing specific pages. As of mid-2025, around 14% of top sites had established explicit rules for AI bots in their robots.txt files, indicating a growing trend among publishers to manage bot traffic more actively. This proactive strategy matters because many AI crawlers may not fully comply with these guidelines, leading to potential content misappropriation and reduced referral traffic, with crawl-to-refer ratios reaching as high as 50,000:1.
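
A crawl-to-refer ratio compares how many pages a bot requests with how many visits it sends back. The sketch below shows the arithmetic only; the bot names and counts are invented for illustration and are not measurements from any source.

```python
def crawl_to_refer_ratio(crawl_requests, referrals):
    """Crawler requests per referred visit; None when there are no referrals."""
    if referrals == 0:
        return None
    return crawl_requests / referrals


# Hypothetical (crawl_requests, referrals) counts per bot.
stats = {
    "ExampleAIBot": (50_000, 1),
    "ExampleSearchBot": (1_200, 400),
}

ratios = {
    bot: crawl_to_refer_ratio(crawls, refs)
    for bot, (crawls, refs) in stats.items()
}
print(ratios)
```

A ratio of 50,000 means the bot requested 50,000 pages for every visitor it referred, which is the situation the 50,000:1 figure describes.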

Dynamic content generated by JavaScript further complicates indexing, as many bots struggle to execute scripts effectively. Additionally, challenges such as duplicate content and adherence to legal and ethical standards add to the complexity. Websites that lack proper sitemaps or feature excessive redirects can confuse search engines, resulting in incomplete indexing and missed opportunities for visibility.
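
One way to audit a site for excessive redirects is to follow each redirect chain and flag loops or chains beyond a hop limit. The sketch below does this over a hypothetical in-memory redirect map; the paths and the `max_hops` default of 5 are assumptions for illustration, not a limit documented by any search engine.

```python
# Hypothetical redirect map: source path -> target path.
REDIRECTS = {
    "/old": "/older",
    "/older": "/oldest",
    "/oldest": "/final",
    "/loop-a": "/loop-b",
    "/loop-b": "/loop-a",
}


def resolve(path, max_hops=5):
    """Follow redirects from path and return (final_path, hops).

    Raises ValueError on a redirect loop or when the chain exceeds max_hops.
    """
    chain = [path]
    while path in REDIRECTS:
        path = REDIRECTS[path]
        if path in chain:
            raise ValueError("redirect loop: " + " -> ".join(chain + [path]))
        chain.append(path)
        if len(chain) - 1 > max_hops:
            raise ValueError("too many redirects from " + chain[0])
    return path, len(chain) - 1


print(resolve("/old"))
```

Chains flagged this way are candidates for collapsing into a single redirect that points straight at the final URL.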

To address these challenges, businesses should adopt best practices and use appropriate tools. For example, companies can optimise their robots.txt files to clearly specify which areas of their site should be accessible to crawlers, thereby improving their chances of accurate indexing. Regular monitoring can also help identify trends in bot traffic and unusual spikes, while setting up alerts for these irregularities allows for timely adjustments to data retrieval strategies. By tackling these obstacles, businesses can improve their crawlability and fully leverage their online presence.
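
Spotting unusual spikes in bot traffic can start with something as simple as comparing each day's request count with a recent baseline. The sketch below flags days that exceed a multiple of the average of the preceding days; the counts, the three-day window, and the 2x threshold are illustrative assumptions that would need tuning against real logs.

```python
from statistics import mean


def find_spikes(daily_counts, window=3, factor=2.0):
    """Return indices of days whose count exceeds factor times the mean
    of the previous `window` days."""
    spikes = []
    for i in range(window, len(daily_counts)):
        baseline = mean(daily_counts[i - window:i])
        if baseline > 0 and daily_counts[i] > factor * baseline:
            spikes.append(i)
    return spikes


# Hypothetical daily bot request counts taken from server logs.
counts = [100, 110, 90, 105, 400, 120, 95]
spike_days = find_spikes(counts)
print(spike_days)
```

An alerting job could run a check like this once a day and notify the team whenever the returned list is not empty.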

Conclusion

Web crawling is a fundamental process that supports the functionality of search engines, ensuring effective indexing and ranking of websites. Understanding the mechanisms of web crawling allows businesses to enhance their online visibility and optimise content for improved search engine performance. This foundational knowledge is crucial for anyone aiming to refine their SEO strategy in an increasingly competitive digital landscape.

The article explores various aspects of web crawling, emphasising its definition, significance in SEO, operational mechanisms, and the challenges encountered by web crawlers. Key insights include:

- The necessity of maintaining clean sitemaps.

- Optimising robots.txt files.

- Addressing issues such as duplicate content and dynamic page rendering.

These elements are vital for ensuring that web crawlers can efficiently index a site, thereby increasing its chances of appearing in relevant search results.

Ultimately, the importance of web crawling transcends mere indexing; it is an essential component of a successful SEO strategy. Businesses must prioritise crawlability and remain informed about the evolving landscape of web crawling technologies. By adopting best practices and utilising advanced tools, organisations can navigate the complexities of SEO, ensuring their content reaches the intended audience and achieves the desired visibility in search engine results.

Frequently Asked Questions

What is web crawling?

Web crawling, also known as web scraping or spidering, is an automated process in which software programs called web crawlers or spiders navigate the internet to gather and index content from various web pages.

What is the primary objective of web crawling?

The primary objective of web crawling is to collect data for indexing, which enables search engines to deliver relevant search results to users.

How significant is the role of bots in internet traffic?

In 2026, nearly half of all internet traffic is attributed to bots, highlighting the growing reliance on automated processes for data extraction.

Why is understanding web crawling important for website optimization?

Understanding web crawling is crucial for optimising websites for search engines, as it significantly impacts how information is discovered and ranked online.

How can businesses benefit from web scraping for SEO?

Businesses can gain valuable insights into competitor strategies, monitor keyword performance, and enhance their marketing efforts through web scraping for SEO.

What services does Appstractor provide related to web scraping?

Appstractor offers advanced data scraping solutions that include real-time SERP monitoring, ad visibility, and competitive analysis, while ensuring GDPR compliance for ethical data handling.

Why is it important for digital marketers to stay informed about web scraping techniques?

Staying informed about web scraping techniques and tools is important for digital marketers to maintain a competitive edge as technology evolves.

What trend was observed regarding click-through rates from AI tools in 2025?

The click-through rates from AI tools declined from 0.8% in Q2 to 0.27% in Q4 of 2025, indicating the need for effective strategies to navigate shifts in SEO dynamics.

What challenges does AI bot scraping present?

The challenges posed by AI bot scraping grew significantly in 2025, emphasising the importance of having robust bot management strategies.

List of Sources

- Define Web Crawling: Understanding the Basics

- Eight in ten of world's biggest news websites now block AI training bots (https://pressgazette.co.uk/platforms/eight-in-ten-of-worlds-biggest-news-websites-now-block-ai-training-bots)

- News sites are locking out the internet archive to stop AI crawling. Is the 'open web' closing? (https://techxplore.com/news/2026-02-news-sites-internet-archive-ai.html)

- In Graphic Detail: AI licensing deals, protection measures aren’t slowing web scraping (https://digiday.com/media/in-graphic-detail-ai-licensing-deals-protection-measures-arent-slowing-web-scraping)

- thunderbit.com (https://thunderbit.com/blog/web-crawling-stats-and-industry-benchmarks)

- Google warns against 'breaking Search,' as pressure mounts over web future (https://businessinsider.com/google-warns-breaking-search-pressure-mounts-web-ai-cloudflare-2026-1)

- Explain the Importance of Crawling in SEO

- SEO in 2026: What will stay the same (https://searchengineland.com/seo-2026-stay-same-467688)

- SEO And AI In 2026: Adapt Or Get Buried In The Search Results (https://elearningindustry.com/advertise/elearning-marketing-resources/blog/seo-and-ai-in-2025-adapt-or-get-buried-in-the-search-results)

- The crawl crisis: Why brand visibility now depends on smarter indexing (https://emarketer.com/content/why-brand-visibility-depends-on-smarter-indexing)

- Googlebot News 2026 : Latest Crawling & Indexing Updates. (https://11digitalmind.com/googlebot-news-latest-updates2026-guide)

- Publishers fear AI summaries are hitting online traffic (https://bbc.co.uk/news/articles/c0mlvryx0exo)

- Describe How Web Crawlers Operate: Mechanisms and Processes

- Rise of AI crawlers and bots causing web traffic havoc (https://computerworld.com/article/4044127/rise-of-ai-crawlers-and-bots-causing-web-traffic-havoc.html)

- Press Advantage Examines How AI Prioritizes News-Based Content During Search Engine Crawling (https://usatoday.com/press-release/story/26051/press-advantage-examines-how-ai-prioritizes-news-based-content-during-search-engine-crawling)

- New AI web standards and scraping trends in 2026: rethinking robots.txt (https://dev.to/astro-official/new-ai-web-standards-and-scraping-trends-in-2026-rethinking-robotstxt-3730)

- How Yesterday’s Web-Crawling Policies Will Shape Tomorrow’s AI Leadership (https://datainnovation.org/2026/01/how-yesterdays-web-crawling-policies-will-shape-tomorrows-ai-leadership)

- How AI Bots Crawl News Content: A Look at AI Trends and the Media Industry’s Response (https://dev.arcxp.com/blog/ai-bot-traffic-trends)

- Identify Challenges and Considerations in Web Crawling

- Eight in ten of world's biggest news websites now block AI training bots (https://pressgazette.co.uk/platforms/eight-in-ten-of-worlds-biggest-news-websites-now-block-ai-training-bots)

- How many news websites block AI crawlers? (https://reutersinstitute.politics.ox.ac.uk/how-many-news-websites-block-ai-crawlers)

- News sites are locking out the internet archive to stop AI crawling. Is the 'open web' closing? (https://techxplore.com/news/2026-02-news-sites-internet-archive-ai.html)

- thunderbit.com (https://thunderbit.com/blog/web-crawling-stats-and-industry-benchmarks)