Introduction

Web page data extraction is evolving rapidly as more businesses recognise its potential for deriving valuable insights from online information. As organisations rely more on automated scraping, it becomes crucial to understand the tools and methods available for effective data gathering.

However, with numerous options on the market, how can one navigate the complexities of selecting the right tool while adhering to legal and ethical standards?

This article explores the top web scraping tools and techniques of 2025, offering a comprehensive comparison that empowers readers to make informed decisions in their data extraction efforts.

Fundamentals of Web Page Data Extraction

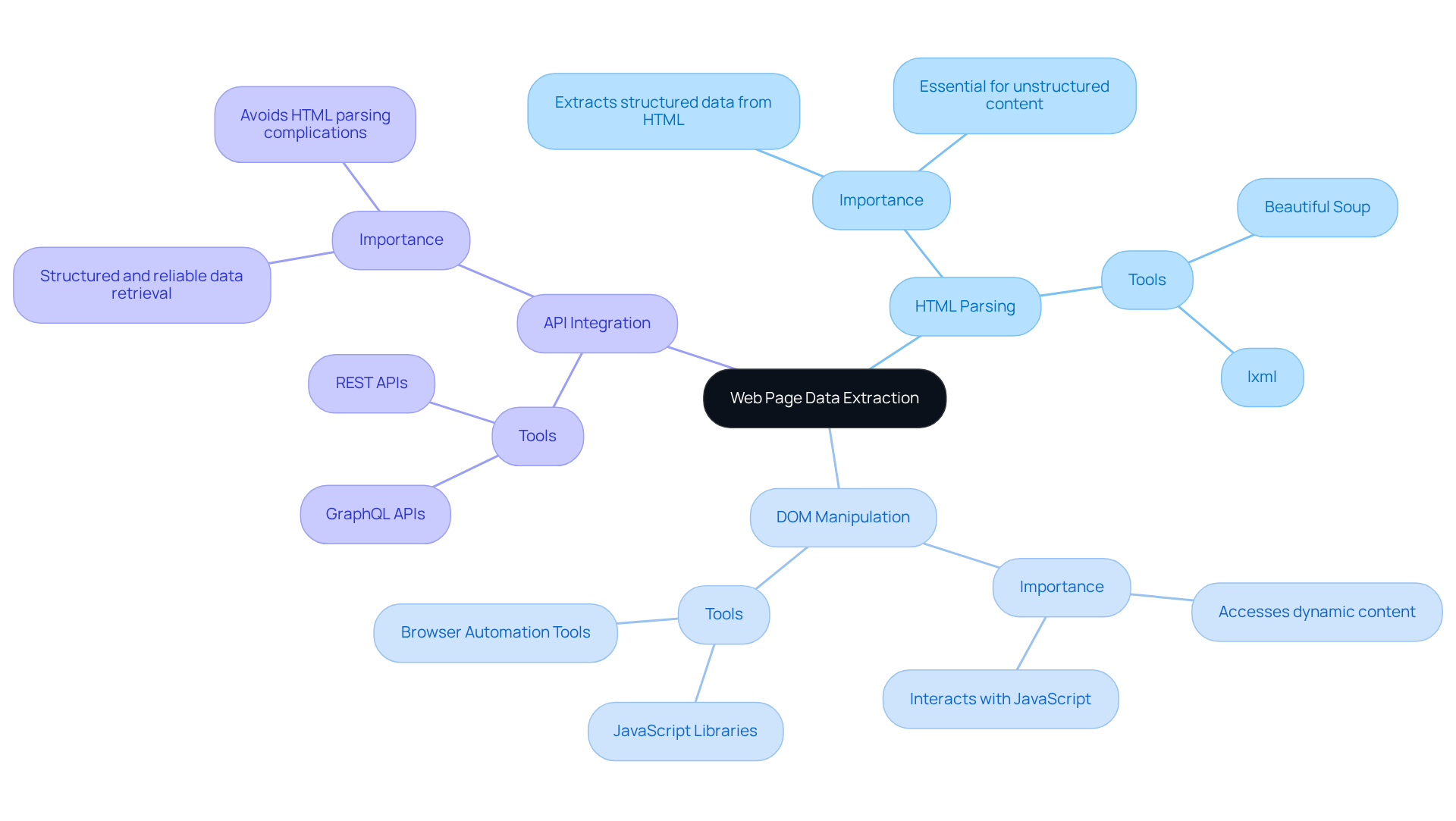

Web page data extraction, commonly referred to as web scraping, is the automated process of gathering information from websites. It involves fetching web pages, parsing their HTML content, and extracting the relevant information. Key techniques include:

- HTML Parsing: This technique extracts data from the HTML structure of web pages, often using libraries such as Beautiful Soup or lxml. Effective HTML parsing is central to web page data extraction because it turns loosely structured page markup into structured records.

- DOM Manipulation: This method uses JavaScript, typically executed in a real or headless browser, to interact with the Document Object Model (DOM) of a web page. It is crucial for accessing dynamic content that is not present in the initial HTML response.

- API Integration: When an API is available, obtaining data through it offers a structured and reliable alternative to scraping, enabling efficient retrieval without the complications of parsing HTML.
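
To make HTML parsing concrete, here is a minimal sketch that pulls product names and prices out of a static HTML snippet. It uses Python's standard-library `html.parser` rather than Beautiful Soup or lxml so that it runs without third-party packages. The markup, the `product`/`name`/`price` class names, and the `ProductParser` and `extract_products` names are all hypothetical; a real page would need selectors matched to its own structure.

```python
from html.parser import HTMLParser


class ProductParser(HTMLParser):
    """Collect {"name", "price"} records from hypothetical markup in which each
    product sits in an element with class "product" and contains child
    elements with classes "name" and "price"."""

    def __init__(self):
        super().__init__()
        self.products = []    # completed records
        self._current = None  # record currently being built
        self._field = None    # field whose text is currently being read

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "product" in classes:
            self._current = {}
        elif self._current is not None:
            for field in ("name", "price"):
                if field in classes:
                    self._field = field

    def handle_data(self, data):
        # Only keep non-blank text that belongs to a field we are tracking.
        if self._current is not None and self._field and data.strip():
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        self._field = None
        # A record is complete once both fields have been captured.
        if self._current is not None and "name" in self._current and "price" in self._current:
            self.products.append(self._current)
            self._current = None


def extract_products(html):
    """Parse an HTML string and return the list of product records."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.products


page = """
<div class="product"><h2 class="name">Widget</h2><span class="price">9.99</span></div>
<div class="product"><h2 class="name">Gadget</h2><span class="price">19.50</span></div>
"""
print(extract_products(page))
# [{'name': 'Widget', 'price': '9.99'}, {'name': 'Gadget', 'price': '19.50'}]
```

With Beautiful Soup the same extraction would typically shrink to a couple of CSS selector calls; the event-driven parser above simply makes each parsing step explicit.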

By 2026, approximately 68% of companies are expected to use web page data extraction tools, indicating a growing reliance on automated information gathering. As organisations adopt more sophisticated collection methods, understanding these fundamentals becomes vital for selecting appropriate tools and running successful extraction projects.

Comparative Analysis of Leading Web Scraping Tools

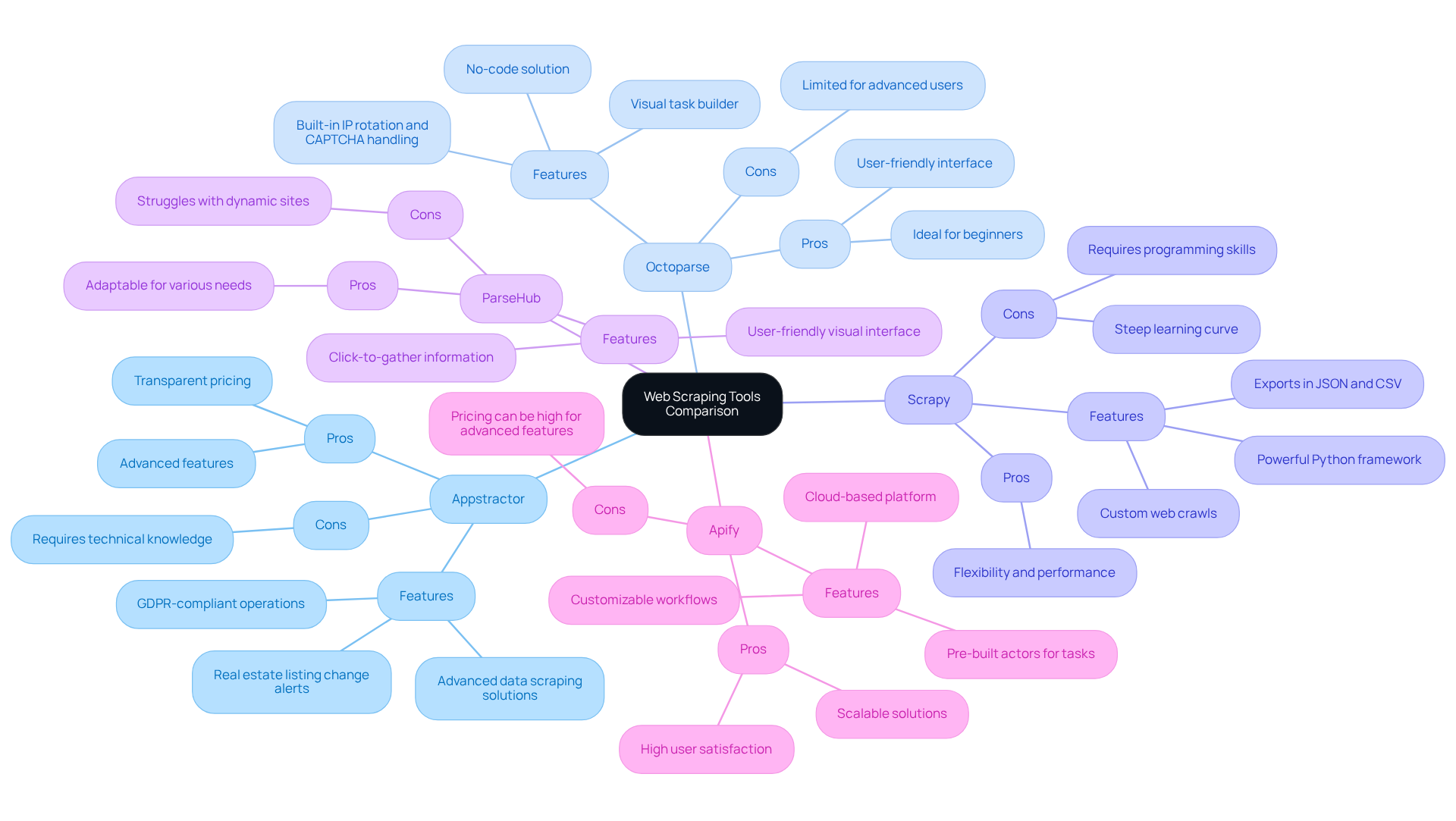

In 2025, several tools for web page data extraction distinguish themselves through unique features and capabilities, catering to diverse user needs. Here’s a comparative analysis of some of the leading options:

- Appstractor: Known for its advanced data scraping solutions, Appstractor excels at real estate listing change alerts and compensation benchmarking. With 14 years of enterprise-grade data extraction experience and a global self-healing IP pool, it is built for continuous uptime and GDPR-compliant operations, making it a dependable option for digital marketing specialists. Pros: advanced features, GDPR compliance, transparent pricing. Cons: may require technical knowledge for optimal use.

- Octoparse: This no-code solution is designed for users without programming knowledge, offering a user-friendly interface that simplifies data extraction. Its visual task builder lets users select webpage elements easily, making it well suited to beginners. Recent updates added built-in IP rotation and CAPTCHA handling, which improve extraction reliability. User satisfaction ratings indicate that Octoparse is particularly favoured by marketers and eCommerce operators for its ease of use and efficiency.

- Scrapy: A Python framework built for large-scale data extraction projects, Scrapy is a preferred choice for developers. It offers powerful features for custom web crawls but requires programming skills, which can be a barrier for non-technical users. Despite this learning curve, Scrapy's flexibility and performance have received positive feedback, particularly for complex extraction tasks. Users also value its ability to export data in several formats, including JSON and CSV.

- ParseHub: Known for its visual interface, ParseHub lets users click on page elements to select the data to gather, making it adaptable to various extraction needs. However, it may struggle with highly dynamic sites, which limits its effectiveness in some scenarios. User feedback is mixed: some praise its ease of use, while others report difficulties with complex page structures.

- Apify: This cloud-based platform provides pre-built actors (ready-made cloud programs) for various data extraction tasks, making it scalable and suitable for businesses that need robust solutions. Users can customise workflows, and its integration capabilities add to its appeal for data-driven organisations. Users report high satisfaction, especially with its ability to handle real-time data retrieval efficiently.

Each tool has distinct advantages and limitations, so it is important to assess your specific needs before choosing. As the web scraping market continues to evolve, tools such as Octoparse and Scrapy are adapting to meet users' demands for efficient and effective data retrieval.

Techniques for Effective Web Data Extraction

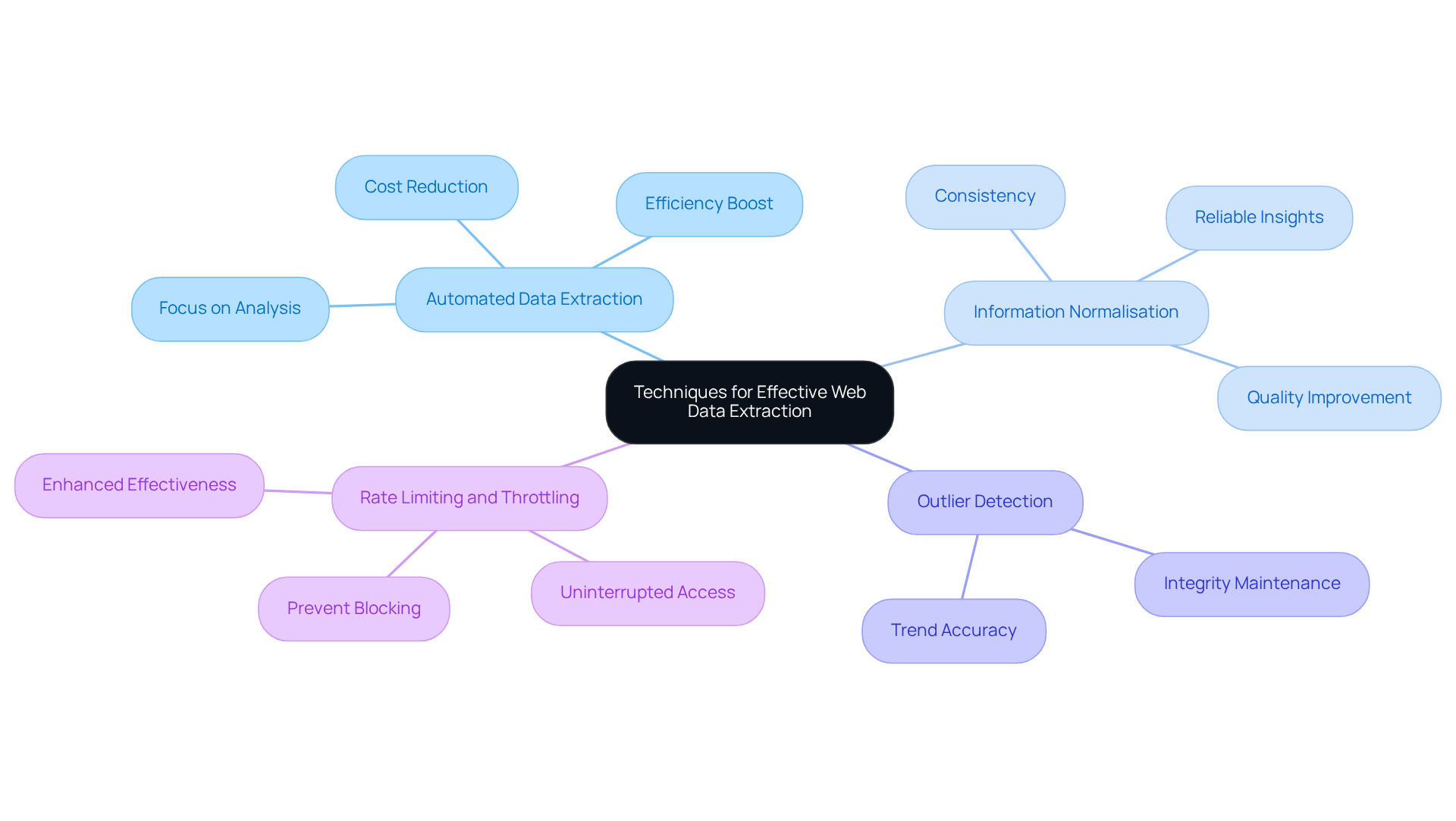

Effective web page data extraction depends on robust methodologies. The key techniques include:

- Automated Data Extraction: By using scripts and specialised tools, organisations can streamline the scraping process, minimising manual intervention and significantly boosting efficiency. Automation can reduce the costs of continuous scraping and data preparation by around 70%, allowing teams to focus on analysis rather than collection.

- Information Normalisation: This process makes the extracted data consistent and well organised, which is essential for accurate analysis and reporting. Techniques such as deduplication and standardisation (for example, converting prices, dates and units to a common format) improve quality by eliminating redundant or inconsistent records, which in turn makes the resulting insights more reliable.

- Outlier Detection: Algorithms that identify and handle anomalies in the data are crucial for maintaining the integrity of insights. By addressing outliers, organisations can ensure their analyses reflect true trends rather than results skewed by inaccurate figures.

- Rate Limiting and Throttling: Controlling how often requests are sent to a website is vital to avoid being blocked and to avoid overloading the site's servers, ensuring uninterrupted access to valuable information sources. This practice not only safeguards the extraction process but also improves the overall effectiveness of information retrieval.
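
The normalisation, deduplication and outlier-detection steps can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions: records are dicts with hypothetical `name` and `price` fields, prices arrive as strings such as "£1,099.00" with "." as the decimal separator, and outliers are flagged with the common interquartile-range (IQR) rule, which is only one of many possible detectors rather than a method any of the tools above prescribe.

```python
import re
import statistics


def normalise(record):
    """Standardise one scraped record (assumed keys: "name", "price")."""
    # Collapse internal whitespace and case-fold the product name.
    name = " ".join(record["name"].split()).lower()
    # Keep only digits and the decimal point, e.g. "£1,099.00" -> 1099.0.
    # Assumes "." is the decimal separator.
    price = float(re.sub(r"[^\d.]", "", record["price"]))
    return {"name": name, "price": price}


def deduplicate(records):
    """Keep the first occurrence of each (name, price) pair."""
    seen, unique = set(), []
    for record in records:
        key = (record["name"], record["price"])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


def iqr_outliers(values, k=1.5):
    """Flag values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical scraped rows.
raw = [
    {"name": "  Widget ", "price": "£9.99"},
    {"name": "widget", "price": "9.99"},            # duplicate once normalised
    {"name": "Gadget", "price": "£10.49"},
    {"name": "Gizmo", "price": "£11.00"},
    {"name": "Doohickey", "price": "£10.20"},
    {"name": "Thingamajig", "price": "£1,099.00"},  # suspiciously high price
]
clean = deduplicate([normalise(r) for r in raw])
prices = [r["price"] for r in clean]
print(len(clean), iqr_outliers(prices))  # 5 [1099.0]
```

Normalising before deduplicating matters here: the two "widget" rows only compare equal once whitespace, case and currency symbols have been standardised.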

Together, these methodologies enhance the process of web page data extraction and significantly improve the quality of the data gathered, enabling organisations to make informed decisions based on accurate and trustworthy insights.
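
Rate limiting, one of the techniques listed above, can be as simple as enforcing a minimum interval between requests to the same host. The sketch below is a minimal, single-threaded throttle; the `Throttle` class and its one-second default interval are illustrative assumptions, not values recommended by any particular site or tool. The clock and sleep functions are injectable so the behaviour can be checked without real waiting.

```python
import time
from urllib.parse import urlparse


class Throttle:
    """Enforce a minimum delay between consecutive requests to the same host.

    Hypothetical helper: min_interval is in seconds and is an assumed default,
    not a recommendation from any specific website.
    """

    def __init__(self, min_interval=1.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last_request = {}  # host -> time of the previous request

    def wait(self, url):
        """Block until it is polite to request `url`, then record the request time."""
        host = urlparse(url).netloc
        last = self._last_request.get(host)
        if last is not None:
            remaining = self.min_interval - (self._clock() - last)
            if remaining > 0:
                self._sleep(remaining)
        self._last_request[host] = self._clock()


# Typical use (fetch() is a hypothetical download function):
# throttle = Throttle(min_interval=2.0)
# for url in urls:
#     throttle.wait(url)
#     fetch(url)
```

Tracking the last request per host means a crawler that visits several sites is only slowed down for the host it has just contacted. A site's own `Crawl-delay` value, where published, is a natural source for `min_interval`.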

Legal and Ethical Considerations in Web Scraping

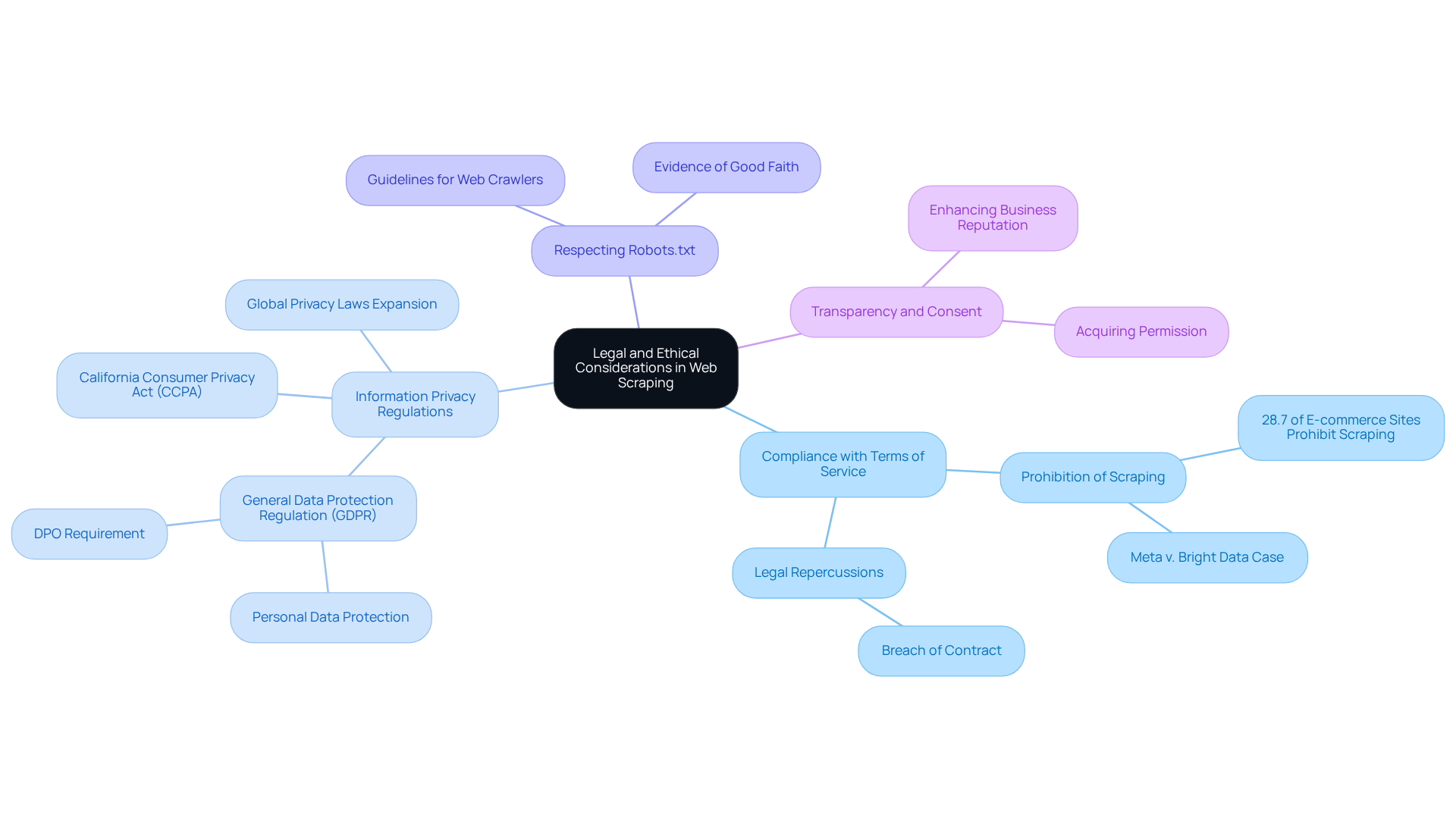

As web page data extraction gains traction, understanding its legal and ethical implications is paramount. Key considerations include:

- Compliance with Terms of Service: A significant share of websites (approximately 28.7%, particularly in e-commerce) explicitly prohibit scraping in their terms of service. Scraping in breach of such terms can lead to legal disputes, as Meta v. Bright Data illustrates, although outcomes depend on the facts: in that case the court found that Bright Data's logged-out scraping of publicly available data did not breach Meta's terms.

- Information Privacy Regulations: Laws such as the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) impose strict rules on data collection, especially the collection of personal data. Organisations must comply with these regulations to avoid substantial penalties, as responsibility for data protection rests heavily on data controllers. The number of privacy laws worldwide is also growing, further complicating data collection.

- Respecting Robots.txt: This file tells web crawlers which sections of a website they may and may not access. Following its directives is a core ethical practice, and doing so may also be viewed as evidence of good faith in legal assessments.

- Transparency and Consent: Where feasible, obtaining permission from website owners before extracting their data fosters goodwill and mitigates legal risk. Ethical data collection practices can also strengthen a business's reputation and operational integrity.
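
Respecting robots.txt, as described above, is easy to automate. The sketch below uses Python's standard-library `urllib.robotparser`; the robots.txt content, the `ExampleBot` user agent and the `shop.example` URLs are hypothetical. In practice the file would be fetched from the site itself, for example with `set_url()` followed by `read()`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Crawl-delay: 5
"""


def build_parser(robots_txt):
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rp = build_parser(ROBOTS_TXT)
print(rp.can_fetch("ExampleBot", "https://shop.example/products/42"))   # True
print(rp.can_fetch("ExampleBot", "https://shop.example/checkout/cart"))  # False
print(rp.crawl_delay("ExampleBot"))                                      # 5
```

Checking `can_fetch()` before every request and feeding `crawl_delay()` into the crawler's request pacing keeps both the access rules and the requested request rate in one place.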

Navigating these considerations is essential for any organisation involved in web page data extraction, as it ensures adherence to ethical standards and legal compliance while maximising the benefits of data extraction.

Conclusion

In conclusion, web page data extraction is a vital tool for businesses seeking to make effective use of online information. As the practice gains traction, it becomes increasingly important to understand the tools and techniques available for successful data collection. This article has outlined fundamental methods such as HTML parsing, DOM manipulation, and API integration, and their role in handling the complexities of web scraping.

The comparison of leading web scraping tools (Appstractor, Octoparse, Scrapy, ParseHub, and Apify) reflects the diverse needs of users in 2025. Each tool offers features tailored to specific extraction requirements, from user-friendly interfaces for beginners to powerful frameworks for developers. Methodologies such as automated data extraction and information normalisation, together with legal and ethical considerations, underline the need for a structured approach to web scraping.

Given these insights, organisations must prioritise not only selecting appropriate tools but also adhering to legal and ethical standards in their data extraction practices. By staying informed about the latest techniques and regulations, businesses can harness web scraping responsibly and effectively and stay competitive in an increasingly data-driven environment.

Frequently Asked Questions

What is web page data extraction?

Web page data extraction, commonly known as web scraping, is the automated process of gathering information from websites by fetching web pages, parsing HTML content, and extracting relevant information.

What are the key techniques used in web page data extraction?

The key techniques include HTML Parsing, DOM Manipulation, and API Integration.

What is HTML Parsing?

HTML Parsing extracts data from the HTML structure of web pages, often using libraries such as Beautiful Soup or lxml. It is essential for turning loosely structured web content into structured information.

How does DOM Manipulation work in web data extraction?

DOM Manipulation employs JavaScript to interact with the Document Object Model (DOM) of a web page, allowing access to dynamic content that may not be present in the initial HTML response.

What is API Integration in the context of web page data extraction?

API Integration means retrieving data through a website's API when one is available. Because APIs return structured data, this is a more reliable and efficient alternative to parsing HTML.
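
When an API is available, "extraction" mostly reduces to decoding a structured response. A minimal sketch follows, assuming a hypothetical JSON endpoint whose response contains an `items` list of objects with `name` and `price` keys. The network call uses the standard-library `urllib.request`; the parsing step is kept separate so it can be tried on a sample payload without network access. The `fetch_items` and `parse_items` names and the `ExampleBot/1.0` user agent are illustrative.

```python
import json
from urllib.request import Request, urlopen


def parse_items(payload):
    """Turn a decoded JSON payload (assumed schema) into (name, price) tuples."""
    return [(item["name"], float(item["price"])) for item in payload.get("items", [])]


def fetch_items(url, timeout=10):
    """GET a JSON endpoint and parse it (hypothetical endpoint and schema)."""
    req = Request(url, headers={"Accept": "application/json", "User-Agent": "ExampleBot/1.0"})
    with urlopen(req, timeout=timeout) as resp:
        return parse_items(json.load(resp))


# Offline example with a sample payload.
sample = json.loads('{"items": [{"name": "Widget", "price": "9.99"}, {"name": "Gadget", "price": 19.5}]}')
print(parse_items(sample))  # [('Widget', 9.99), ('Gadget', 19.5)]
```

Unlike the HTML-parsing approach, nothing here depends on page layout, which is why API access tends to be the more reliable option when a site offers it.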

What is the expected trend in the use of web page data extraction tools by 2026?

By 2026, approximately 68% of companies are expected to employ tools for web page data extraction, indicating a growing reliance on automated information gathering techniques.

List of Sources

- Fundamentals of Web Page Data Extraction
  - How AI Is Changing Web Scraping in 2026 (https://kadoa.com/blog/how-ai-is-changing-web-scraping-2026)
  - State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)
  - Web Scraping Market Size, Growth Report, Share & Trends 2026 - 2031 (https://mordorintelligence.com/industry-reports/web-scraping-market)
  - Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)

- Comparative Analysis of Leading Web Scraping Tools
  - Top Ten Web Scraping Tools for 2025: Free and Paid — Retail Technology Innovation Hub (https://retailtechinnovationhub.com/home/2025/10/12/top-ten-web-scraping-tools-for-2025)
  - Web Scraping Market Size, Growth Report, Share & Trends 2026 - 2031 (https://mordorintelligence.com/industry-reports/web-scraping-market)
  - 10 Web Scraping Tools Powering Smarter Data Strategies in 2026 (https://designrush.com/agency/it-services/trends/web-scraping-tools)
  - Web Scraping Market Report 2025 | ScrapeOps (https://scrapeops.io/web-scraping-playbook/web-scraping-market-report-2025)

- Techniques for Effective Web Data Extraction
  - Case Studies For Web Scraping and Data Extractions - X-Byte (https://xbyte.io/case-studies)
  - Python Web Scraping: Quotes from Goodreads.com (https://paulvanderlaken.com/2019/12/27/web-scraping-python-goodreads-quotes)
  - Advanced Tips for Effective Data Extraction - Dataversity (https://dataversity.net/articles/beyond-the-basics-advanced-tips-for-effective-data-extraction)
  - AI Web Scraping Case Study | Intelligent Data Extraction | Softblues (https://softblues.io/case-studies/ai-powered-web-scraping-system)
  - 3 Case Studies of AI Web Scraping Technology - Cloud Wars (https://cloudwars.com/cybersecurity/3-case-studies-of-ai-web-scraping-technology)

- Legal and Ethical Considerations in Web Scraping
  - Understanding Web Scraping Legality: Global Insights & Stats (https://browsercat.com/post/web-scraping-legality-global-statistics)
  - The Essential Business Guide to GDPR Quotes by Alistair Dickinson (https://goodreads.com/work/quotes/61434761-the-essential-business-guide-to-gdpr-a-business-owner-s-perspective-to)
  - Is Web Scraping Legal? GDPR, CCPA & CFAA Frameworks Explained (https://tendem.ai/blog/is-web-scraping-legal-compliance-overview)