Introduction

Scraping Google search results has become an essential tool for data enthusiasts and professionals, offering access to a wealth of information with just a few lines of code. This guide explores the fundamentals of using Python for web scraping, equipping readers with the skills necessary to efficiently extract valuable insights from search results. However, the complexities of web scraping introduce important considerations regarding legality, technical requirements, and best practices.

What are the key factors that can determine the success of a scraping project?

Understand Prerequisites for Scraping Google Search Results

Before addressing the process of scraping Google search results, it is essential to understand the prerequisites:

- Basic Knowledge of Python: Familiarity with Python programming is crucial, as the scraping will be executed using Python scripts.

- Understanding of HTML and CSS: A fundamental understanding of HTML structure and CSS selectors is vital for effectively navigating and retrieving information from web pages. Industry leaders emphasise that grasping these elements is key to successful information extraction.

- Required Libraries: The following libraries are used in this guide:

  - requests: For making HTTP requests to fetch web pages.
  - BeautifulSoup: For parsing HTML and extracting information.
  - pandas: For manipulation and analysis of data.
  - Selenium: For handling JavaScript rendering and simulating browser behaviour, which is necessary due to Google's recent updates requiring JavaScript to access its pages.

- Legal Considerations: Be aware of the legal implications of web extraction, including compliance with Google's terms of service and local regulations regarding data usage. Collecting public data is generally considered legal, provided that security measures are not circumvented. The landmark hiQ Labs v. LinkedIn case is often cited here: the US Ninth Circuit held that scraping publicly accessible data likely does not violate the Computer Fraud and Abuse Act, although terms-of-service and contract claims can still apply.

- Development Environment: Set up a Python development environment, such as Anaconda or a virtual environment, to manage dependencies efficiently. Additionally, be mindful of the risk of being blocked by Google when scraping search results using Python, and consider implementing best practices to mitigate this risk.
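
To make the HTML and CSS prerequisite concrete, here is a minimal sketch of how CSS selectors map onto BeautifulSoup calls. The markup in `SAMPLE_HTML`, the `result` class name, and the helper `extract_results` are all hypothetical and chosen for illustration; real search result pages use different, frequently changing markup.

```python
from bs4 import BeautifulSoup

# Hypothetical markup for illustration only; real search pages differ and change often.
SAMPLE_HTML = """
<div id="results">
  <div class="result">
    <a href="https://example.com/a"><h3>First title</h3></a>
  </div>
  <div class="result">
    <a href="https://example.com/b"><h3>Second title</h3></a>
  </div>
</div>
"""


def extract_results(html):
    """Return (title, link) pairs for each link inside a .result block that wraps an <h3>."""
    soup = BeautifulSoup(html, "html.parser")
    pairs = []
    # "div.result a[href]" selects every <a> with an href nested in a div of class "result".
    for link in soup.select("div.result a[href]"):
        heading = link.select_one("h3")
        if heading is not None:
            pairs.append((heading.get_text(strip=True), link["href"]))
    return pairs


if __name__ == "__main__":
    for title, href in extract_results(SAMPLE_HTML):
        print(title, href)
```

Working against a small inline HTML string like this is a convenient way to practise selectors before pointing a scraper at a live page.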

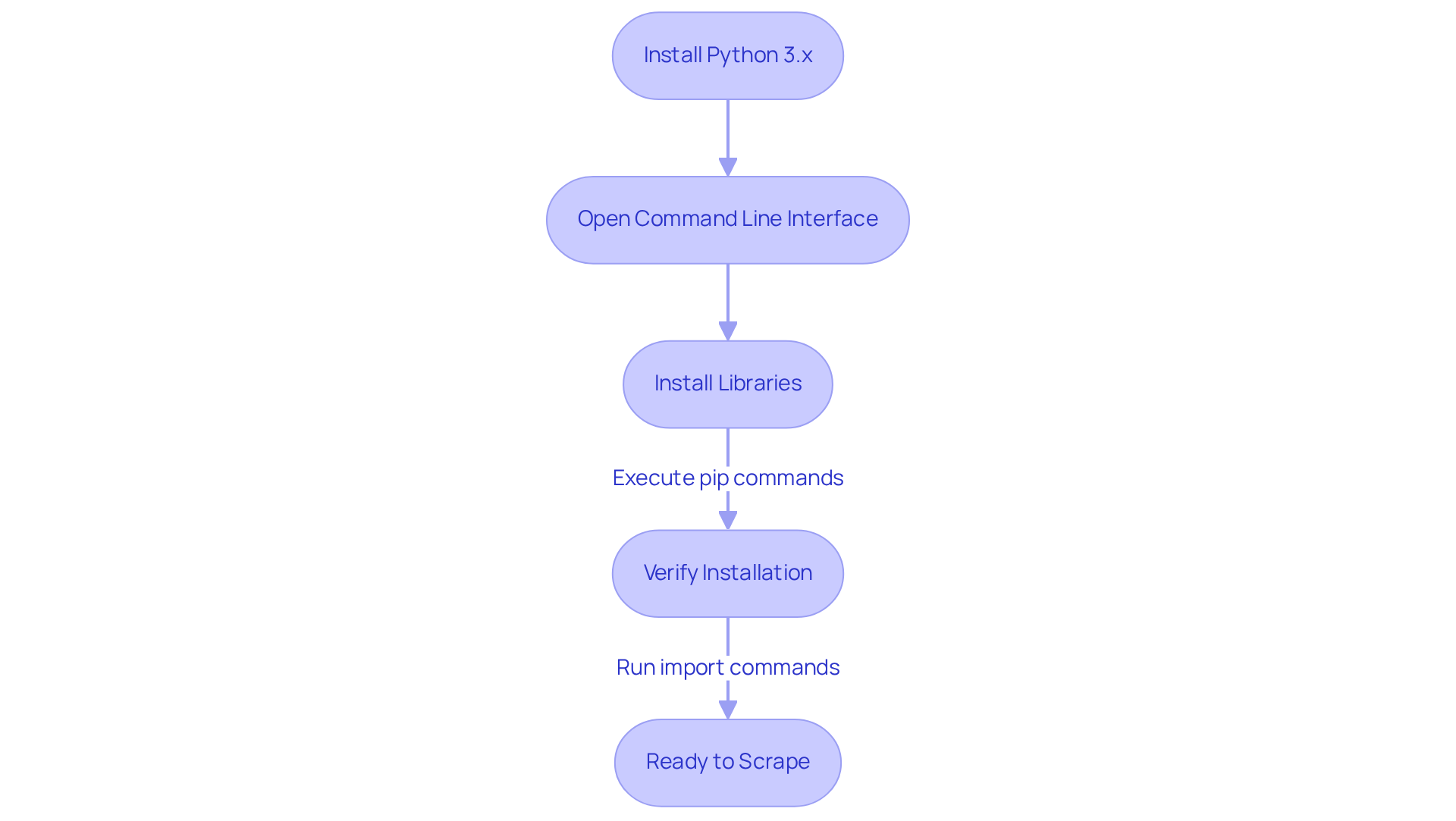

Install Required Libraries and Tools for Python Scraping

To effectively scrape Google search results using Python, follow these steps to install the required libraries:

- Install Python: Ensure you have Python 3.x installed on your machine. Download it from the official Python website.

- Open a Terminal: Access your command-line interface (Terminal for macOS/Linux or Command Prompt for Windows).

- Install Libraries: Execute the following commands to install the required libraries:

      pip install requests
      pip install beautifulsoup4
      pip install pandas

- Verify Installation: After installation, confirm that the libraries are correctly installed by running:

      import requests
      import bs4
      import pandas

  If no errors occur, you are ready to proceed.
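
The verification step can also be scripted. The sketch below uses only the standard library to report which modules cannot be found, rather than stopping at the first failed import. The helper name `missing_modules` and the `REQUIRED_MODULES` list are illustrative choices, not part of any library.

```python
import importlib.util

# Import names, not pip package names: beautifulsoup4 is imported as bs4.
REQUIRED_MODULES = ["requests", "bs4", "pandas"]


def missing_modules(names):
    """Return the module names that cannot be found in the current environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All required modules are importable.")
```

Running this inside the virtual environment you plan to use confirms that the libraries were installed into that environment and not into a different Python installation.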

The Requests and BeautifulSoup libraries are widely adopted in the community, especially by those looking to scrape Google search results using Python, due to their ease of use and powerful capabilities. These libraries are utilised by over 70% of web data collection professionals, highlighting their importance in the field. Verifying library installations is crucial, as it ensures that your environment is set up correctly for successful scraping. As noted by Antonello Zanini, a technical writer, "A solid foundation in [...] can significantly enhance your efficiency."

Additionally, consider using proxies to avoid IP blocking, as many websites implement measures to prevent data extraction. It is also essential to be aware of the legality of web data extraction; always check the terms of service of the websites you intend to harvest. Lastly, having a basic understanding of HTML structure will greatly assist you in identifying the elements you wish to extract.

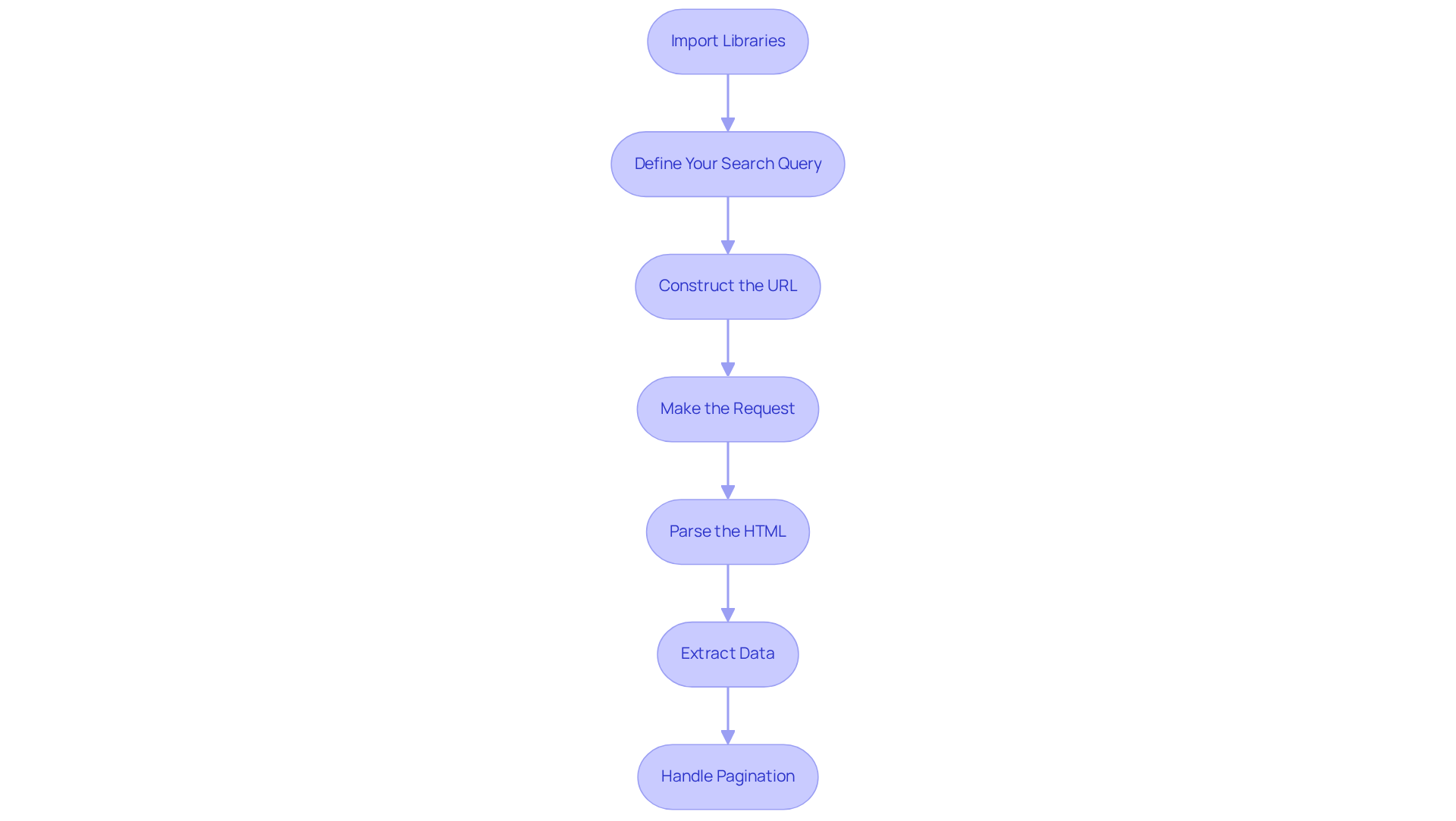

Execute the Scraping Process to Collect Google Search Results

Now that your environment is set up, it’s time to execute the scraping process:

- Import Libraries: Begin by importing the necessary libraries in your Python script:

      import requests
      from bs4 import BeautifulSoup

- Define Your Search Query: Create a variable for your search query. For example:

      query = 'latest technology news'

- Construct the URL: Build the search URL using your query:

      url = f'https://www.google.com/search?q={query}'

- Make the Request: Utilise the requests library to fetch the search results page:

      response = requests.get(url)

- Parse the HTML: Use BeautifulSoup to parse the HTML content:

      soup = BeautifulSoup(response.text, 'html.parser')

- Extract Data: Identify the HTML elements containing the search results and extract the desired information:

      results = soup.find_all('h3')  # Example for extracting titles
      for result in results:
          print(result.text)

- Handle Pagination: To scrape multiple pages, implement logic to navigate through the result pages. Increase the 'start' parameter in your URL to access more entries, keeping in mind Google’s limits, which currently cap listings at 10 per page.
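
The URL construction and pagination steps above can be sketched together as follows. This is a minimal illustration that only builds URLs and sends no requests; the helper names `build_search_url` and `page_urls` are hypothetical, and the page size of 10 is taken from the description above. Using `urlencode` from the standard library also ensures that spaces and special characters in the query are encoded safely.

```python
from urllib.parse import urlencode

BASE_URL = "https://www.google.com/search"
RESULTS_PER_PAGE = 10  # page size as described in this guide


def build_search_url(query, page=0):
    """Return a search URL for a zero-based page index, with the query URL-encoded."""
    params = {"q": query, "start": page * RESULTS_PER_PAGE}
    return f"{BASE_URL}?{urlencode(params)}"


def page_urls(query, pages):
    """Return the URLs for the first `pages` result pages of a query."""
    return [build_search_url(query, page) for page in range(pages)]


if __name__ == "__main__":
    for url in page_urls("latest technology news", 3):
        print(url)
```

Each generated URL can then be passed to the request and parsing steps in turn, so the extraction logic stays the same for every page.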

Important Note: Always verify the terms of service of the website before collecting information. Additionally, consider utilising APIs such as HasData’s Google SERP API for organised outputs, which can streamline the process and help prevent issues like CAPTCHA and blocking. By leveraging Python, you can effectively gather relevant search results from Google, enabling you to analyse trends and gather insights efficiently while ensuring compliance.
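
If you do send requests directly, one common way to reduce the chance of blocking is to slow down and retry when the server signals rate limiting. The sketch below is one possible approach and not something this guide's sources prescribe: the helper `fetch_with_backoff` is hypothetical, and treating HTTP 429 and 503 as rate-limit signals is an assumption. The `fetch` argument is any callable that returns an object with a `status_code` attribute, such as `requests.get`.

```python
import time


def fetch_with_backoff(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying with exponentially growing delays on 429 or 503.

    Returns the first response whose status is not 429/503, or the last
    response received once max_attempts is reached.
    """
    response = None
    for attempt in range(max_attempts):
        response = fetch(url)
        # Assumption: 429 and 503 indicate rate limiting or temporary blocking.
        if response.status_code not in (429, 503):
            return response
        if attempt < max_attempts - 1:
            sleep(base_delay * (2 ** attempt))  # 1s, 2s, 4s, ...
    return response
```

A call such as `fetch_with_backoff(requests.get, url)` would wait 1, 2, and then 4 seconds between attempts with the defaults shown. Backoff does not solve CAPTCHA challenges; it only avoids hammering the server once it has started refusing requests.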

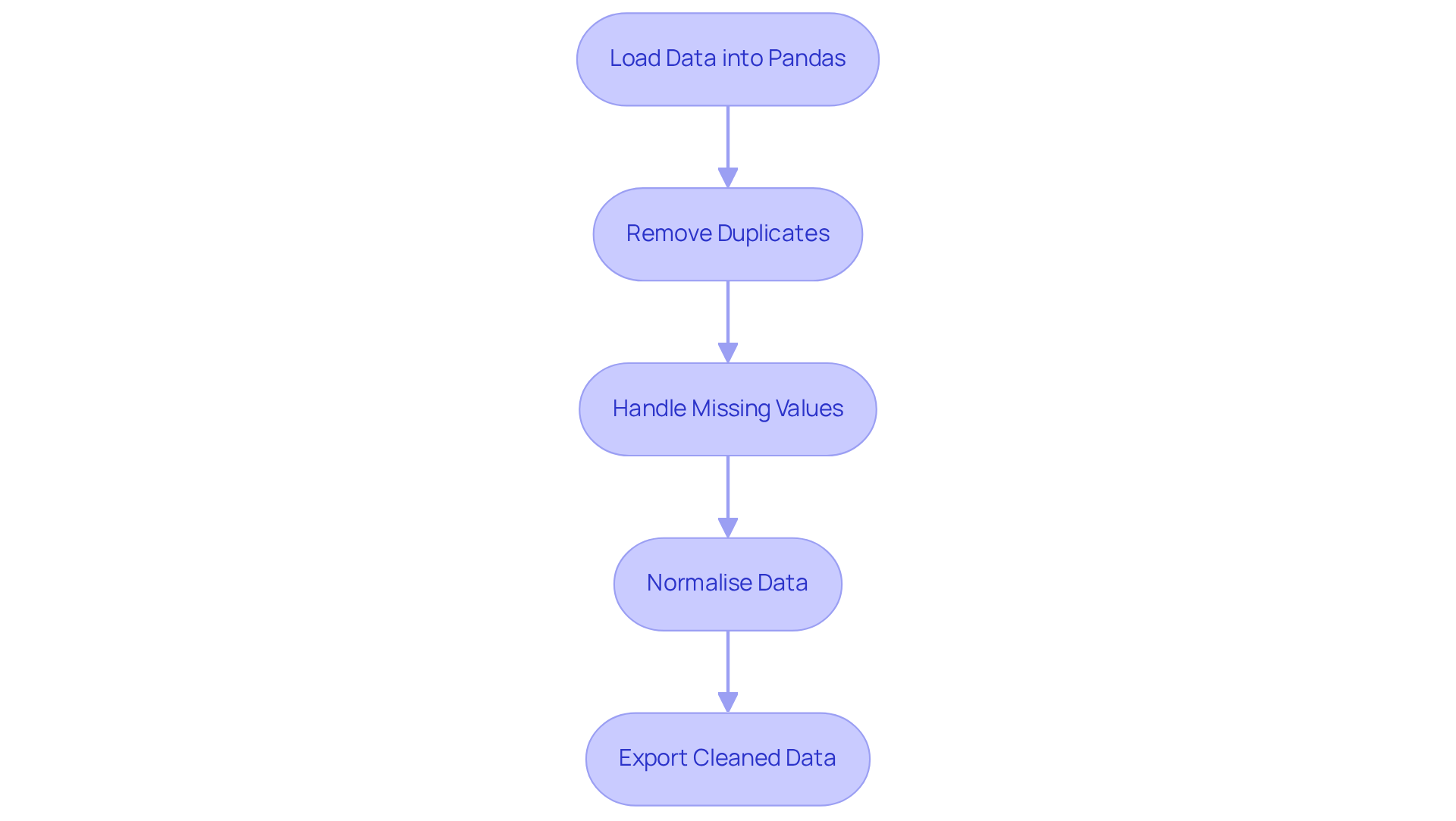

Clean and Process Scraped Data for Usability

After you scrape Google search results using Python, it is crucial to clean and process the data. This ensures that the data is structured and ready for analysis.

- Load Data into Pandas: Begin by storing the scraped data in a Pandas DataFrame for easier manipulation:

      import pandas as pd
      data = {'titles': titles}  # Assuming titles is a list of scraped titles
      df = pd.DataFrame(data)

- Remove Duplicates: Next, eliminate any duplicate entries to maintain data integrity:

      df.drop_duplicates(inplace=True)

- Handle Missing Values: It is important to check for and handle missing values in your DataFrame:

      df.fillna('N/A', inplace=True)  # Replace missing values with 'N/A'

- Normalise Data: Standardise the format of your data, such as converting all text to lowercase:

      df['titles'] = df['titles'].str.lower()

- Export Data: Finally, export the cleaned data to a CSV file for further analysis:

      df.to_csv('cleaned_data.csv', index=False)
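
The cleaning steps above can be combined into a single function, sketched here on made-up sample titles. The helper name `clean_titles` and the sample data are illustrative. One deliberate difference from the step order above: this sketch normalises the text before removing duplicates, so that entries differing only in case or surrounding whitespace are treated as the same title.

```python
import pandas as pd


def clean_titles(titles):
    """Return a DataFrame of normalised, de-duplicated titles."""
    df = pd.DataFrame({"titles": titles})
    # Normalise first so that case and whitespace variants collapse together.
    df["titles"] = df["titles"].str.strip().str.lower()
    # Replace missing values after normalising so the placeholder keeps its case.
    df["titles"] = df["titles"].fillna("N/A")
    return df.drop_duplicates().reset_index(drop=True)


if __name__ == "__main__":
    sample = ["Tech News Today", "tech news today ", None, "AI Update"]
    cleaned = clean_titles(sample)
    # With no path argument, to_csv returns the CSV text instead of writing a file.
    print(cleaned.to_csv(index=False))
```

To write the result to disk as in the final step above, pass a filename such as `'cleaned_data.csv'` to `to_csv`.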

Utilising Pandas for data cleaning is essential, with nearly 50% of data analysts relying on it for effective data processing. As one analyst noted, "Pandas streamlines intricate tasks, allowing for greater emphasis on analysis instead of handling information." By implementing these techniques, you can ensure that your data scraped from Google search results using Python is clean, structured, and ready for insightful analysis.

Conclusion

Mastering the art of scraping Google search results with Python opens up a world of possibilities for data analysis and trend tracking. This guide has provided a comprehensive roadmap, covering everything from the necessary prerequisites to executing the scraping process and cleaning the data for effective usability. By grasping the fundamentals of Python, HTML, and essential libraries, anyone can embark on this journey of data extraction.

Key insights highlighted throughout the article include:

- The importance of setting up a proper development environment.

- The critical role of libraries like requests, BeautifulSoup, and pandas.

- The need to navigate the legal landscape surrounding web scraping.

Additionally, practical steps were outlined for executing the scraping process, handling pagination, and ensuring data integrity through cleaning techniques. Each of these elements contributes to a robust approach to web scraping that not only meets technical requirements but also adheres to ethical standards.

As the demand for data-driven insights continues to grow, the ability to scrape and analyse web data effectively becomes increasingly valuable. By applying the techniques outlined in this guide, individuals can enhance their data collection skills and leverage this knowledge to make informed decisions based on real-time information. Embrace the power of Python and start scraping today to unlock a wealth of data at your fingertips.

Frequently Asked Questions

What are the prerequisites for scraping Google search results?

The prerequisites include basic knowledge of Python, understanding HTML and CSS, having the required libraries installed, being aware of legal considerations, and setting up a development environment.

Why is basic knowledge of Python important for scraping?

Familiarity with Python programming is crucial because the scraping process will be executed using Python scripts.

What is the significance of understanding HTML and CSS in web scraping?

A fundamental understanding of HTML structure and CSS selectors is vital for effectively navigating and retrieving information from web pages, as it aids in the extraction process.

Which libraries are required for scraping Google search results?

The required libraries are requests (for making HTTP requests), BeautifulSoup (for parsing HTML), pandas (for data manipulation and analysis), and Selenium (for handling JavaScript rendering).

What role does Selenium play in scraping Google search results?

Selenium is used to handle JavaScript rendering and simulate browser behaviour, which is necessary due to Google's updates requiring JavaScript to access its pages.

What legal considerations should be taken into account when scraping?

It is important to comply with Google’s terms of service and local regulations regarding data usage. Collecting public data is generally legal, provided that security measures are not circumvented.

What should I do to set up a development environment for scraping?

Set up a Python development environment, such as Anaconda or a virtual environment, to manage dependencies efficiently.

What risks should I be aware of when scraping Google search results?

There is a potential risk of being blocked by Google when scraping search results, so it is important to implement best practices to mitigate this risk.

List of Sources

- Understand Prerequisites for Scraping Google Search Results

- Scraping News Results from Google Search (https://medium.com/@hasdata/scraping-news-results-from-google-search-b7ee45e72043)

- How to Scrape Google Search Results in Python (2026)- Without Getting Blocked (https://scrapingdog.com/blog/scrape-google-search-results)

- Is Web Scraping Legal? Key Insights and Guidelines You Need to Know (https://scrapingbee.com/blog/is-web-scraping-legal)

- How to Scrape Google Search Results in 2026 (https://scrapfly.io/blog/posts/how-to-scrape-google)

- Install Required Libraries and Tools for Python Scraping

- Stop Getting Blocked: Python Web Scraping Tools That Actually Work in 2026 (https://medium.com/@inprogrammer/best-python-web-scraping-tools-2026-updated-87ef4a0b21ff)

- Web Scraping Roadmap: Steps, Tools & Best Practices (2026) (https://brightdata.com/blog/web-data/web-scraping-roadmap)

- Python Web Scraping Tutorial: Step-By-Step (2026) (https://oxylabs.io/blog/python-web-scraping)

- 4 Python Web Scraping Libraries To Mining News Data | NewsCatcher (https://newscatcherapi.com/blog-posts/python-web-scraping-libraries-to-mine-news-data)

- Web Scraping for News Articles using Python– Best Way In 2026 (https://proxyscrape.com/blog/web-scraping-for-news-articles-using-python)

- Execute the Scraping Process to Collect Google Search Results

- Scraping Google news using Python (2026 Tutorial) (https://serpapi.com/blog/scraping-google-news-using-python-tutorial)

- How To Scrape Google News using Python (https://autom.dev/blog/scrape-google-news-with-python)

- How to Scrape Google News With Python (https://decodo.com/blog/how-to-scrape-google-news)

- Scraping News Results from Google Search (https://medium.com/@hasdata/scraping-news-results-from-google-search-b7ee45e72043)

- How to Scrape Google News with Python – 2026 Guide (https://scrapingdog.com/blog/scrape-google-news)

- Clean and Process Scraped Data for Usability

- Web Scraping Trends 2026: How Automation Will Transform Data-Driven Businesses (https://iveerdata.com/web-scraping-trends-2026-how-automation-will-transform-data-driven-businesses)

- Data Cleaning Tools and Techniques for Non-Coders (https://gijn.org/stories/data-cleaning-tools-and-techniques-for-non-coders)

- How to Scrape News Articles With AI and Python (https://brightdata.com/blog/web-data/how-to-scrape-news-articles)

- Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)