Introduction

In today’s data-driven landscape, effective job site scraping has emerged as a crucial skill for companies aiming to extract insights from a vast array of online job listings. Mastering the appropriate tools and techniques allows individuals to streamline their data collection processes, ensuring the acquisition of relevant and actionable information.

However, the challenge extends beyond mere technical execution. Navigating the ethical and legal complexities associated with web scraping is equally important. How can one strike a balance between efficiency and compliance while maximising the value of scraped data?

Identify Essential Tools and Techniques for Job Site Scraping

To scrape job sites effectively, you need the right tools and techniques. Here are some recommended options:

- Scraping Frameworks: Scrapy and BeautifulSoup are popular choices, enabling the creation of custom scrapers tailored to specific job sites. Scrapy excels at large-scale data extraction: it orchestrates crawl queues and handles retries, making it ideal for scraping job sites at scale. BeautifulSoup is a parsing library; paired with the lxml parser backend, it is fast and tolerant of malformed HTML.

- Browser Automation Tools: For dynamic websites that require user interaction, Selenium is a robust choice. It can handle multi-step tasks such as signing in and browsing job listings, ensuring that all required information is captured. Because many modern job sites render content with client-side JavaScript, browser automation tools like Selenium can simulate user behaviour and retrieve content that a plain HTTP request would miss.

- Data Extraction APIs: Services such as Apify provide pre-built APIs designed for job extraction. These solutions simplify the process for users without extensive coding knowledge, letting them focus on data analysis rather than technical implementation. End-to-end APIs such as Zyte API are also reported to achieve high success rates against access restrictions, making them a dependable option for extracting job listings.

- Proxy Services: Rotating proxies, such as those offered by Appstractor, are essential for avoiding IP bans while scraping job sites and for keeping access to job listings uninterrupted. Appstractor's global self-healing IP pool ensures continuous uptime, keeping scraping activities stealthy and effective, particularly on sites with strict anti-bot measures. Their support, transparent pricing, and 14 years of enterprise-grade experience further add to the reliability of their proxy services.

- Information Storage Solutions: Databases like MongoDB, or cloud storage options, enable organised storage and easy retrieval of extracted information, facilitating further analysis and reporting.
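As a minimal sketch of the parsing step a custom scraper performs, the following uses Python's standard-library `html.parser` in place of BeautifulSoup so it runs without extra dependencies. The markup it expects (`div.job` cards containing elements with `title`, `company` and `location` classes) is hypothetical; every real job site uses its own structure. With BeautifulSoup installed, the same extraction would typically use `BeautifulSoup(html, "lxml")` and CSS selectors via `select()`.

```python
from html.parser import HTMLParser


class JobListingParser(HTMLParser):
    """Collect job cards from a hypothetical markup layout:

    <div class="job">
      <h2 class="title">...</h2>
      <span class="company">...</span>
      <span class="location">...</span>
    </div>

    Assumes cards contain no nested <div> elements.
    """

    FIELDS = {"title", "company", "location"}

    def __init__(self):
        super().__init__()
        self.jobs = []          # completed job dictionaries
        self._current = None    # job card currently being filled in
        self._field = None      # field whose text is currently being read
        self._field_tag = None  # tag that opened that field

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "div" and "job" in classes:
            self._current = {}
        elif self._current is not None and self._field is None:
            for cls in classes:
                if cls in self.FIELDS:
                    self._field, self._field_tag = cls, tag

    def handle_endtag(self, tag):
        if tag == "div" and self._current is not None:
            # Closing the card: store it and reset all state.
            self.jobs.append(self._current)
            self._current = self._field = self._field_tag = None
        elif tag == self._field_tag:
            # Only the tag that opened the field ends it, so inline tags like <b> are tolerated.
            self._field = self._field_tag = None

    def handle_data(self, data):
        text = data.strip()
        if self._current is not None and self._field and text:
            previous = self._current.get(self._field, "")
            self._current[self._field] = f"{previous} {text}".strip()


if __name__ == "__main__":
    sample = """
    <div class="job"><h2 class="title">Data Engineer</h2>
      <span class="company">Acme</span><span class="location">Berlin</span></div>
    """
    parser = JobListingParser()
    parser.feed(sample)
    print(parser.jobs)
```

In practice the HTML would come from a fetched page (or from Selenium's rendered page source for JavaScript-heavy sites), and the class names would be adjusted to the target site's markup.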

By carefully choosing and combining these tools, including Appstractor's proxy services, users can optimise their data collection processes, improve data quality, and gain better insights into job market trends.
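If your proxy provider hands you a list of endpoints rather than rotating them for you, client-side rotation can be sketched with `itertools.cycle` and the standard `urllib` library. The proxy addresses and User-Agent string below are placeholders, not real endpoints, and the sketch deliberately omits retries and ban detection.

```python
import itertools
import urllib.request

# Placeholder endpoints: replace with the addresses your proxy provider issues.
PROXIES = [
    "http://proxy1.example.com:8000",
    "http://proxy2.example.com:8000",
    "http://proxy3.example.com:8000",
]

# Hypothetical User-Agent identifying the scraper and a contact address.
USER_AGENT = "job-research-bot/0.1 (+mailto:ops@example.com)"


class RotatingFetcher:
    """Send each request through the next proxy in a round-robin cycle."""

    def __init__(self, proxies, user_agent=USER_AGENT):
        self._cycle = itertools.cycle(proxies)
        self.user_agent = user_agent

    def next_proxy(self):
        return next(self._cycle)

    def fetch(self, url, timeout=10):
        proxy = self.next_proxy()
        # Route both plain and TLS traffic through the chosen proxy.
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        opener = urllib.request.build_opener(handler)
        request = urllib.request.Request(url, headers={"User-Agent": self.user_agent})
        with opener.open(request, timeout=timeout) as response:
            return response.read().decode("utf-8", errors="replace")


# Usage (performs real network requests, so it is not run here):
# fetcher = RotatingFetcher(PROXIES)
# html = fetcher.fetch("https://jobs.example.com/search?q=data+engineer")
```

Round-robin is the simplest policy; a production setup would usually also retire proxies that start returning errors or block pages.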

Ensure Ethical Compliance and Legal Considerations in Scraping

When scraping job sites, adhering to ethical and legal standards is paramount. Here are essential considerations:

- Respect robots.txt: Always check the website's robots.txt file to see which paths crawlers may access and which are disallowed. Honouring these directives is a baseline expectation of responsible scraping.

- Terms of Service: Review the website's terms of service to confirm that data extraction is permitted. Violating these terms can have legal consequences; in various cases, companies have faced claims of unauthorized access.

- Data Privacy: Do not collect personal information unless explicit consent has been obtained. This is especially important in jurisdictions with strict privacy laws, such as the EU's General Data Protection Regulation (GDPR), which imposes significant penalties for non-compliance.

- Rate Limiting: Implement rate limiting so your scraper does not overwhelm the server, which can disrupt the website's functionality and get your IP banned. Ethical data collection means controlling request rates to avoid overloading servers.

- Transparency: Be open about your collection activities, especially when gathering information for commercial purposes. Identifying your scraper with a clear User-Agent string fosters trust and reduces the risk of backlash from website operators.
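Python's standard library ships a robots.txt parser, `urllib.robotparser`, which can answer whether a given User-Agent may fetch a URL and what crawl delay the site requests. This sketch parses an example robots.txt from a string; the bot name and the rules themselves are hypothetical. Against a live site you would call `set_url()` and `read()` instead of `parse()`.

```python
from urllib import robotparser

USER_AGENT = "job-research-bot"  # hypothetical bot name

# Example robots.txt content (hypothetical).
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Crawl-delay: 5
"""


def build_robot_rules(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    rules = robotparser.RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules


def is_allowed(rules, url, user_agent=USER_AGENT):
    """True if robots.txt permits user_agent to fetch url."""
    return rules.can_fetch(user_agent, url)


if __name__ == "__main__":
    rules = build_robot_rules(ROBOTS_TXT)
    print(is_allowed(rules, "https://jobs.example.com/jobs/123"))         # True
    print(is_allowed(rules, "https://jobs.example.com/account/settings"))  # False
    print(rules.crawl_delay(USER_AGENT))                                   # 5
```

Using the same bot name in robots.txt checks and in the User-Agent header keeps the scraper's behaviour consistent with how it identifies itself to the site.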

By following these guidelines, businesses can scrape job sites responsibly, staying compliant with legal standards and fostering positive relationships with information providers.
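Rate limiting can be as simple as enforcing a minimum gap between requests. The following fixed-interval throttle is one minimal approach (token buckets and adaptive back-off are common alternatives); the class name is illustrative, and the clock and sleep functions are injectable so the behaviour can be tested without real waiting.

```python
import time


class RateLimiter:
    """Fixed-interval throttle: successive wait() calls return at least min_interval seconds apart."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous permitted request

    def wait(self):
        """Block just long enough to respect the minimum interval, then record the time."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


# Usage: call limiter.wait() immediately before every request.
# limiter = RateLimiter(min_interval=5)
```

One reasonable choice is to set `min_interval` from the site's `Crawl-delay` value when robots.txt provides one, and to fall back to a conservative default otherwise.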

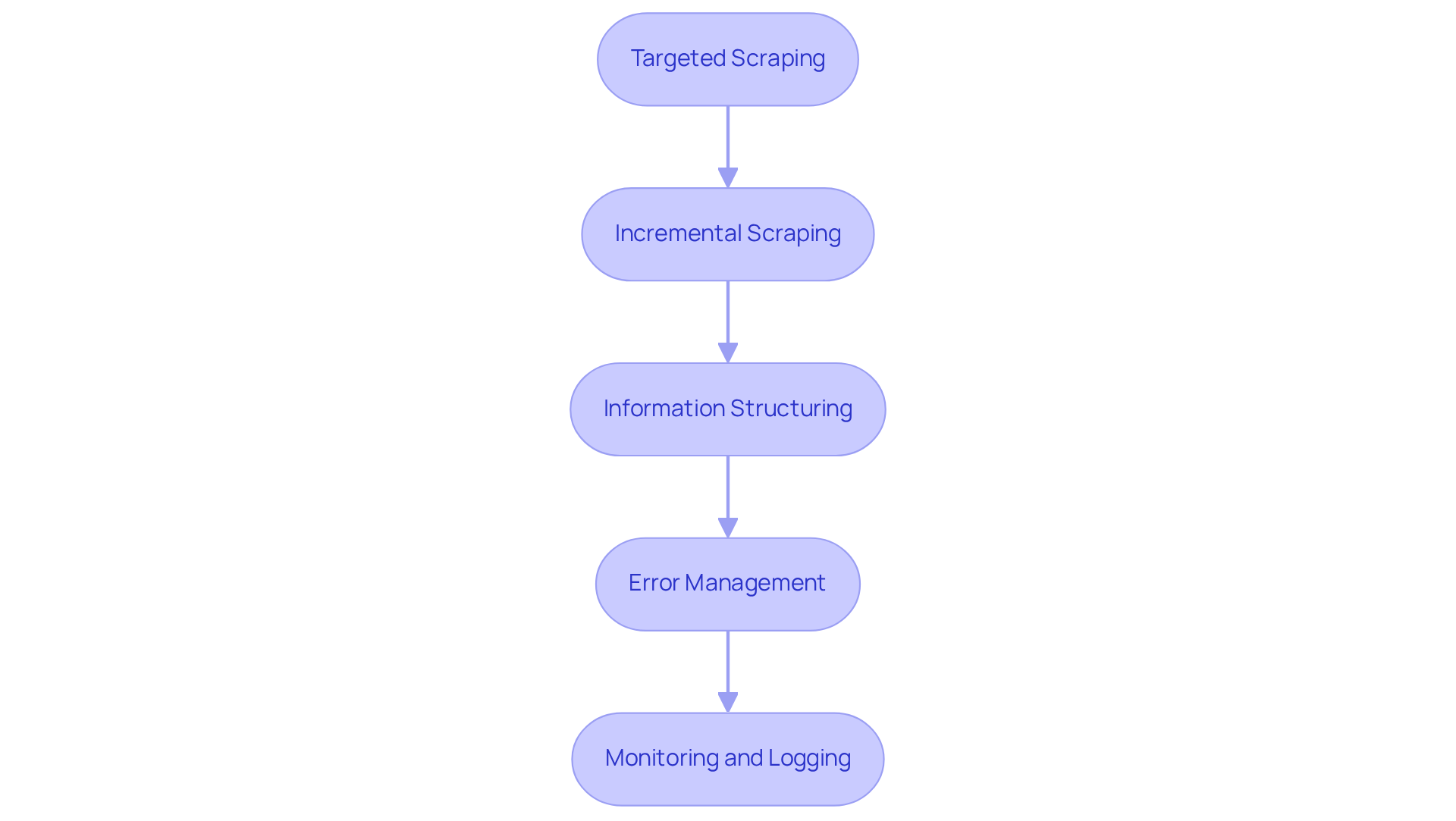

Optimize Data Collection Strategies for Enhanced Results

To make job site scraping more effective, consider the following optimization strategies:

- Targeted Scraping: Focus on specific job categories or geographic areas to minimise the collection of unrelated information. A targeted approach increases the relevance of the data and simplifies analysis.

- Incremental Scraping: Rather than re-extracting everything on each run, use incremental methods that collect only new or updated job postings. This reduces server load and improves efficiency, giving quicker access to fresh listings.

- Data Structuring: Define a clear schema for the data you intend to collect, such as job title, company name, location, and salary. A well-defined structure ensures consistency and makes subsequent analysis easier.

- Error Handling: Build robust handling for issues such as missing fields or changes in website structure. Effective error management is crucial for maintaining the integrity of your dataset and keeping results reliable.

- Monitoring and Logging: Track your scrapers' performance. Logging both errors and successful retrievals helps you pinpoint areas for improvement and verify the accuracy of the collected information.
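A data schema with defensive conversion can be sketched with a `dataclass`: records missing required fields are logged and skipped instead of crashing the run, and simple counters provide the kind of monitoring signal described above. The field names follow the examples in the list; `to_posting` and `convert_all` are hypothetical helpers, not part of any library.

```python
import logging
from dataclasses import dataclass
from typing import Optional

logger = logging.getLogger("job_scraper")

REQUIRED_FIELDS = ("title", "company", "location")


@dataclass
class JobPosting:
    title: str
    company: str
    location: str
    salary: Optional[str] = None  # often absent from listings


def _text(raw, key):
    """Return a stripped string for raw[key], treating None or a missing key as ''."""
    return str(raw.get(key) or "").strip()


def to_posting(raw):
    """Convert one scraped dict to a JobPosting, or log a warning and return None."""
    missing = [f for f in REQUIRED_FIELDS if not _text(raw, f)]
    if missing:
        logger.warning("Skipping record; missing %s: %r", ", ".join(missing), raw)
        return None
    return JobPosting(
        title=_text(raw, "title"),
        company=_text(raw, "company"),
        location=_text(raw, "location"),
        salary=_text(raw, "salary") or None,
    )


def convert_all(records):
    """Convert a batch, counting successes and skips for monitoring."""
    stats = {"ok": 0, "skipped": 0}
    postings = []
    for raw in records:
        posting = to_posting(raw)
        if posting is None:
            stats["skipped"] += 1
        else:
            postings.append(posting)
            stats["ok"] += 1
    logger.info("Converted %d records, skipped %d", stats["ok"], stats["skipped"])
    return postings, stats
```

A sudden rise in the skipped count is often the first sign that a site has changed its markup, which is why the counts are worth recording on every run.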

By adopting these strategies, users can significantly enhance the quality and relevance of the information gathered through scraping job sites, ultimately leading to more informed decision-making.
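Incremental scraping needs a way to recognise postings you already have. One approach, sketched here under the assumption that each posting carries a unique `id` field (a hypothetical key), is to store a content hash per ID and emit only postings whose hash is new or has changed.

```python
import hashlib
import json


def fingerprint(job):
    """Stable SHA-256 of a posting's content; changes whenever any field changes."""
    payload = json.dumps(job, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def new_or_changed(jobs, seen):
    """Yield postings that are new or whose content changed since the last run.

    `seen` maps posting ID -> last fingerprint and is updated in place;
    persist it between runs (for example as a JSON file) to make runs incremental.
    """
    for job in jobs:
        digest = fingerprint(job)
        if seen.get(job["id"]) != digest:
            seen[job["id"]] = digest
            yield job
```

This filter on its own avoids reprocessing but does not save requests; on listing pages sorted by date, you can additionally stop paginating once a page contains only already-seen IDs, which is where the reduced server load comes from.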

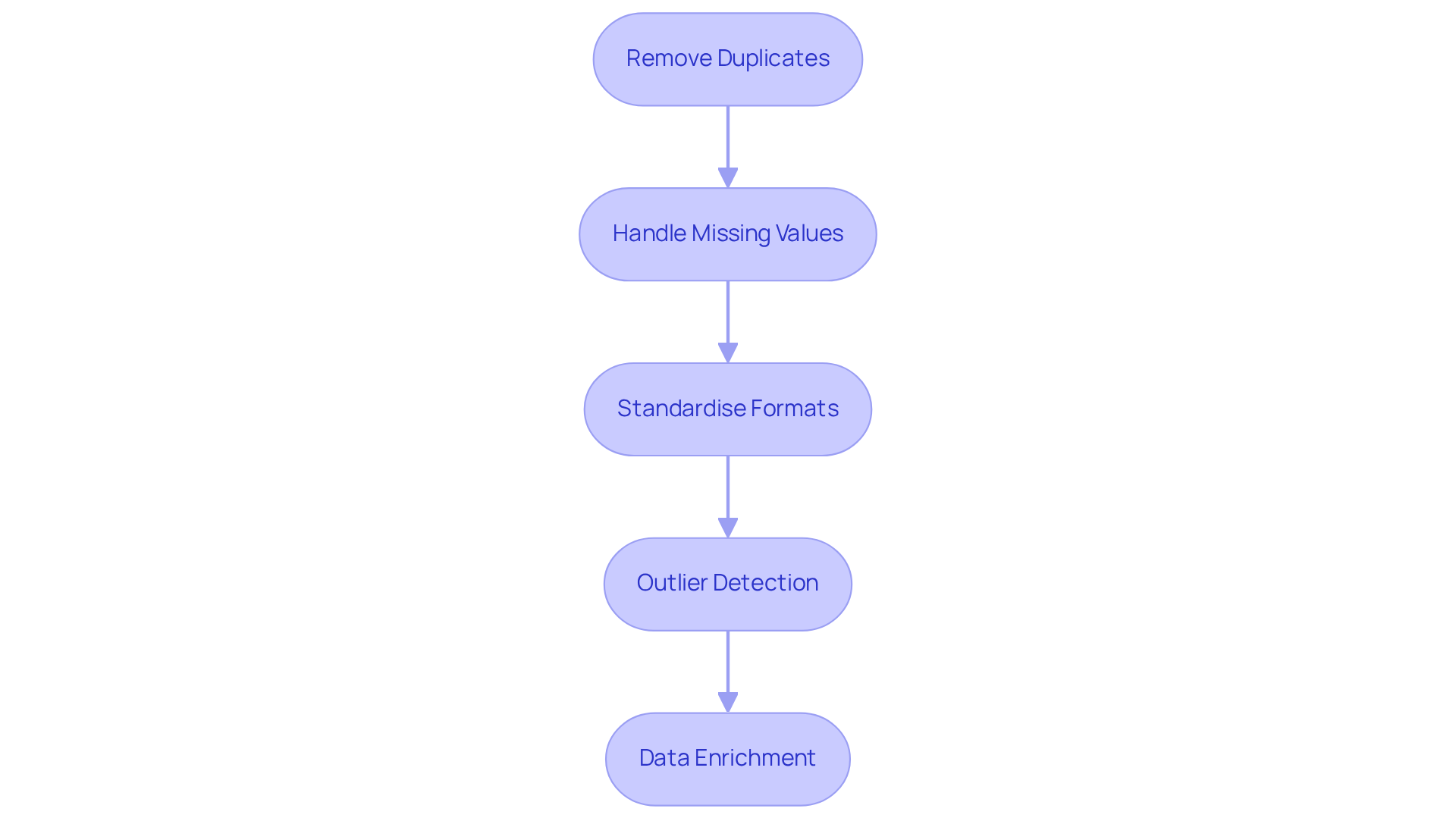

Implement Data Cleaning and Enrichment Techniques

Once data has been scraped, cleaning and enriching it is crucial for usability. Here are effective techniques to improve data quality:

- Remove Duplicates: Identifying and eliminating duplicate entries is vital for a clean dataset. Data processing libraries such as Pandas in Python simplify this step and greatly improve data integrity.

- Handle Missing Values: Missing data requires careful consideration. Depending on the context, you can fill gaps with imputation techniques, remove incomplete records, or leave them as they are. Examining the patterns in which values go missing can guide which approach to take, preserving valuable insights while improving overall data quality.

- Standardise Formats: Consistent formats are crucial for reliable analysis. Keeping date formats, capitalisation, and other attributes uniform makes processing smoother and reduces errors during analysis.

- Detect Outliers: Use statistical methods to identify and manage outliers that could skew analysis results. Techniques such as setting thresholds or applying machine learning algorithms can help flag these anomalies and decide how to handle them.

- Enrich Data: Enhance the dataset by integrating additional information from reliable sources, such as salary benchmarks or company profiles. Services that extract information from native mobile apps can also help here, provided they comply with GDPR. Enrichment adds context to the raw data, deepening insights and increasing its value for decision-making.
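The following sketch combines three of the cleaning steps above using only the standard library (Pandas offers `drop_duplicates()` and `fillna()` for the same jobs on DataFrames): whitespace is collapsed, dates are normalised to ISO 8601, missing locations are filled with an explicit placeholder, and duplicates are detected with a case-insensitive key. The accepted date formats, the "Unknown" placeholder and the choice of key fields are assumptions to adapt to your own data.

```python
from datetime import datetime

# Date formats assumed to appear in the scraped data; "%d/%m/%Y" assumes day-first dates.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%b %d, %Y")


def squash(value):
    """Collapse runs of whitespace and trim; treat None as an empty string."""
    return " ".join((value or "").split())


def normalise_date(value):
    """Return an ISO 8601 date string, or None if no known format matches."""
    text = squash(value)
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean(records):
    """Standardise fields, fill missing locations, and drop duplicates (first occurrence wins)."""
    cleaned, seen = [], set()
    for record in records:
        row = {
            "title": squash(record.get("title")),
            "company": squash(record.get("company")),
            # Explicit placeholder instead of an empty string (one simple imputation choice).
            "location": squash(record.get("location")) or "Unknown",
            "posted": normalise_date(record.get("posted")),
        }
        # Case-insensitive key, so "ACME" and "Acme" count as the same company.
        key = tuple(row[f].casefold() for f in ("title", "company", "location"))
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(row)
    return cleaned
```

Dates that match none of the formats become `None` rather than raising, so they can be counted and reviewed instead of silently corrupting the dataset.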

By implementing these techniques, users can transform their raw data obtained from scraping job sites into a valuable asset for analysis and informed decision-making.
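For the threshold-based outlier detection mentioned above, Tukey's fences are a common choice: values more than `k` times the interquartile range below the first quartile or above the third are flagged. This sketch assumes salaries have already been parsed into numbers and uses `statistics.quantiles` (Python 3.8+); the function name is illustrative.

```python
import statistics


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Example: a mis-parsed salary (for instance, a monthly figure read as annual) stands out.
# iqr_outliers([52000, 55000, 58000, 60000, 61000, 63000, 65000, 950000])
```

Flagging outliers for review, rather than deleting them automatically, avoids discarding legitimate but unusual postings such as senior executive roles.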

Conclusion

Mastering job site scraping requires a strategic approach that integrates the right tools, ethical considerations, and effective data management techniques. By understanding and implementing best practices, users can navigate the complexities of job site scraping, ensuring not only successful data extraction but also compliance with legal and ethical standards.

Key insights from this article underscore the significance of selecting appropriate scraping frameworks, automation tools, and data extraction APIs to streamline the process. Additionally, maintaining ethical practices, such as respecting robots.txt files and adhering to privacy laws, is crucial for fostering positive relationships with data providers. Strategies like targeted and incremental scraping, coupled with robust data cleaning and enrichment techniques, further enhance the quality and relevance of the collected information.

As the landscape of job site scraping continues to evolve, staying informed about best practices and emerging tools will empower users to make data-driven decisions effectively. Embracing these methodologies not only optimises the scraping process but also contributes to a more transparent and responsible data collection environment.

Frequently Asked Questions

What are the recommended scraping frameworks for job site scraping?

The recommended scraping frameworks for job site scraping are Scrapy and BeautifulSoup. Scrapy is ideal for large-scale data extraction projects, while BeautifulSoup is effective for parsing HTML, especially when used with the lxml backend.

How does Scrapy help in scraping job sites?

Scrapy excels in managing large-scale data extraction by orchestrating crawl queues and handling retries, making it effective for scraping job sites.

What is the role of BeautifulSoup in job site scraping?

BeautifulSoup is particularly useful for parsing and navigating HTML. Paired with the lxml parser backend, it is fast and handles malformed HTML well.

Which browser automation tool is recommended for dynamic job sites?

Selenium is recommended for dynamic job sites as it allows for user interaction, enabling tasks like signing in and browsing job listings to capture all required information.

Why is browser automation important for scraping job sites?

Browser automation is important because many modern job sites rely on client-side JavaScript, and tools like Selenium can simulate user behaviour to retrieve meaningful content.

What are data extraction APIs, and how do they assist in job site scraping?

Data extraction APIs, such as Apify, provide pre-built solutions for job extraction, simplifying the process for users without extensive coding knowledge and allowing them to focus on data analysis.

What is the Zyte API, and why is it recommended?

The Zyte API is an end-to-end data extraction API known for its high success rates in bypassing restrictions, making it a dependable option for scraping job sites to extract listings.

How can proxy services enhance job site scraping?

Proxy services, like rotating proxies from Appstractor, help avoid IP bans and ensure uninterrupted access to job listings, which is crucial for scraping activities, especially on sites with strict anti-bot measures.

What is the benefit of using Appstractor's proxy services?

Appstractor's proxy services offer a global self-healing IP pool for continuous uptime, ensuring stealthy and effective scraping activities, along with exceptional support and transparent pricing.

What are the recommended information storage solutions for managing extracted data?

Recommended information storage solutions include databases like MongoDB and cloud storage options, which facilitate organised storage and easy retrieval of extracted information for further analysis and reporting.
