Introduction

Proxy servers are essential in the realm of web scraping, serving as vital intermediaries that improve both efficiency and security. Utilising a Python proxy server allows users to navigate the complexities of data extraction while ensuring anonymity and circumventing geographical restrictions.

However, setting up such a server can present various challenges. This raises an important question: How can one effectively configure a Python proxy server to maximise its potential while steering clear of common pitfalls?

This guide provides a comprehensive, step-by-step approach to mastering the setup of a Python proxy server, equipping users with the knowledge to address any issues that may arise during the process.

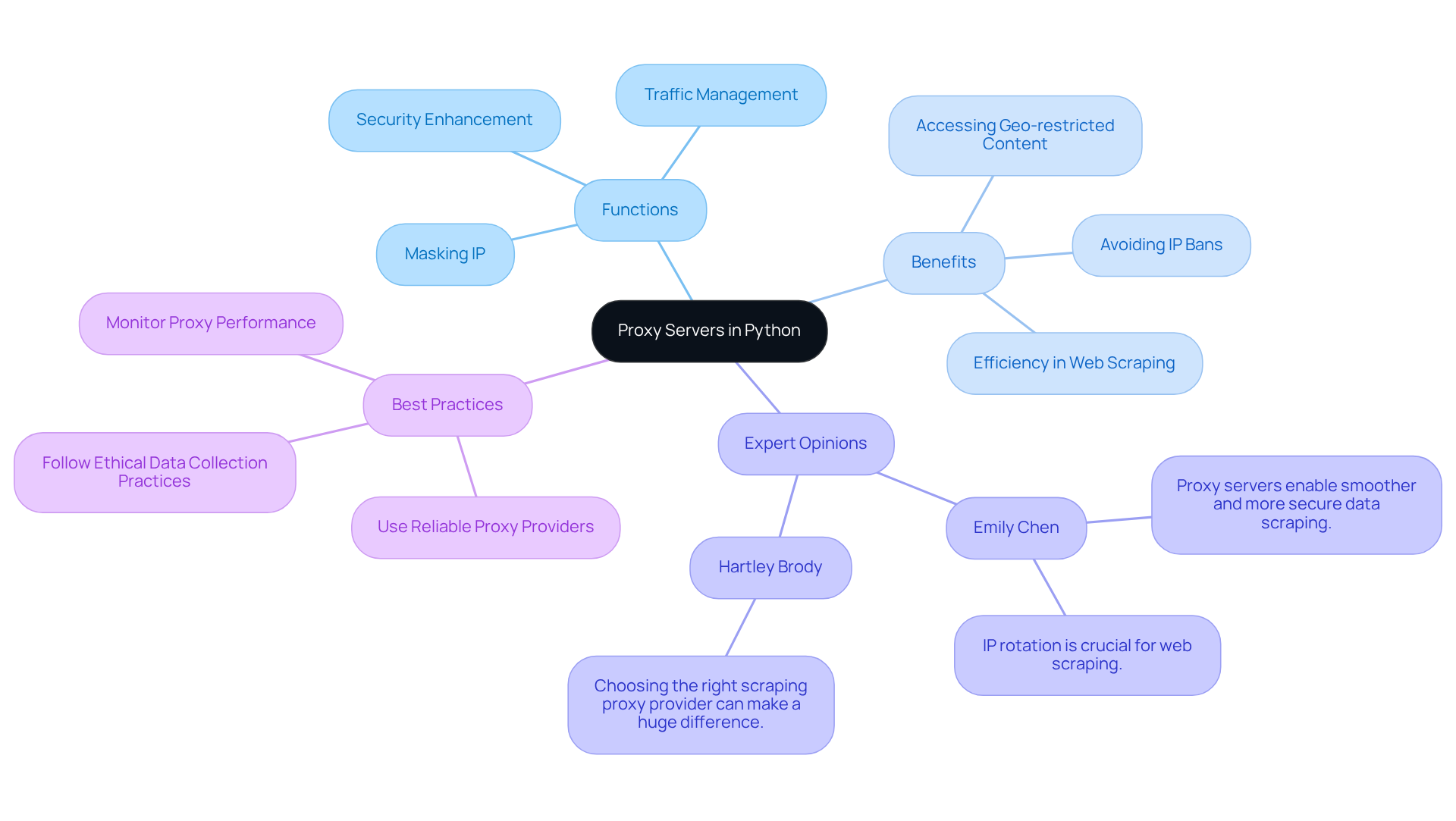

Understand Proxy Servers and Their Importance in Python

Proxy servers act as intermediaries between clients and the internet, relaying requests and responses. They are crucial in web scraping because they mask the user's IP address, manage traffic, and enhance security. By using a Python proxy server, users can improve their web scraping efficiency, reducing the risk of IP bans and accessing geo-restricted content.

For instance, rotating proxies, where successive requests go out through different IP addresses, minimise the risk of detection and facilitate smooth data retrieval from diverse sources. This capability is particularly vital for large-scale projects, where maintaining anonymity and avoiding rate limits are of utmost importance.
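Rotation can be sketched in a few lines. The example below is a minimal illustration, assuming a simple round-robin strategy; the addresses in PROXY_POOL are placeholders from a documentation-only IP range, and the name proxy_rotation is hypothetical rather than part of any library.

```python
from itertools import cycle

# Placeholder addresses from the documentation range 203.0.113.0/24;
# replace them with proxies from your own list or provider.
PROXY_POOL = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


def proxy_rotation(pool):
    """Yield Requests-style proxies dicts, cycling through pool forever."""
    for address in cycle(pool):
        # Requests expects one entry per URL scheme being proxied.
        yield {"http": address, "https": address}


rotation = proxy_rotation(PROXY_POOL)
first = next(rotation)
# Each call to next() moves on to the following proxy, for example:
#   requests.get(url, proxies=next(rotation), timeout=10)
```

Round-robin is only one possible policy; picking proxies at random or retiring ones that fail are common variations.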

Appstractor's enterprise-grade information scraping solutions, backed by a global self-healing IP pool, ensure continuous uptime and exceptional support, both essential for successful scraping endeavours. Experts in the field, such as Samantha Nobile from Koozai and Paul Rogers, emphasise that the strategic use of intermediaries not only enhances performance but also supports compliance with ethical information gathering practices, including GDPR regulations.

Understanding how proxies work and what they offer is crucial for anyone aiming to use Python for data extraction tasks, paving the way for successful and efficient scraping initiatives.

Gather Required Tools and Libraries for Setup

To successfully set up a Python proxy server, you will need the following essential tools and libraries:

- Python: Ensure that Python (version 3.8 or higher recommended) is installed on your machine. You can download the latest version from the official website, python.org.

- Requests Library: This library simplifies HTTP requests in Python, making it easier to interact with web resources. Install it using the following command:

      pip install requests

- Flask: A lightweight web framework well suited to building a simple proxy. Install Flask with:

      pip install Flask

- Proxy List: A dependable list of proxy hosts is essential for your configuration. You can find free list services online or opt for a paid option like Appstractor, which provides rotating servers that activate within 24 hours. A paid option typically improves reliability and performance, particularly for businesses requiring efficient web data extraction.

A proxy helps conceal your actual IP address, work around rate limits, and extract data from sites that block direct access, which makes a reliable proxy list a crucial part of your configuration. Appstractor's services also offer turnkey data delivery, enabling seamless integration into your projects.

Having these tools prepared will facilitate a smooth setup process, allowing you to proceed with the subsequent steps without any interruptions.
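Before moving on, it can help to confirm that the tools listed above are actually available. The following is a minimal sketch using only the standard library; the function name check_environment and the wording of its messages are illustrative assumptions.

```python
import sys
from importlib.util import find_spec

# Import names of the libraries this guide relies on.
REQUIRED_MODULES = ("requests", "flask")


def check_environment(modules=REQUIRED_MODULES, minimum=(3, 8)):
    """Return a list of human-readable problems; an empty list means ready."""
    problems = []
    if sys.version_info < minimum:
        found = f"{sys.version_info.major}.{sys.version_info.minor}"
        problems.append(
            f"Python {minimum[0]}.{minimum[1]}+ recommended, found {found}"
        )
    for name in modules:
        # find_spec returns None when a top-level module cannot be found.
        if find_spec(name) is None:
            problems.append(f"Missing module: {name} (try: pip install {name})")
    return problems


for problem in check_environment():
    print(problem)
```

If the script prints nothing, the interpreter version and both libraries are in place.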

Configure Your Python Proxy Server: Step-by-Step Instructions

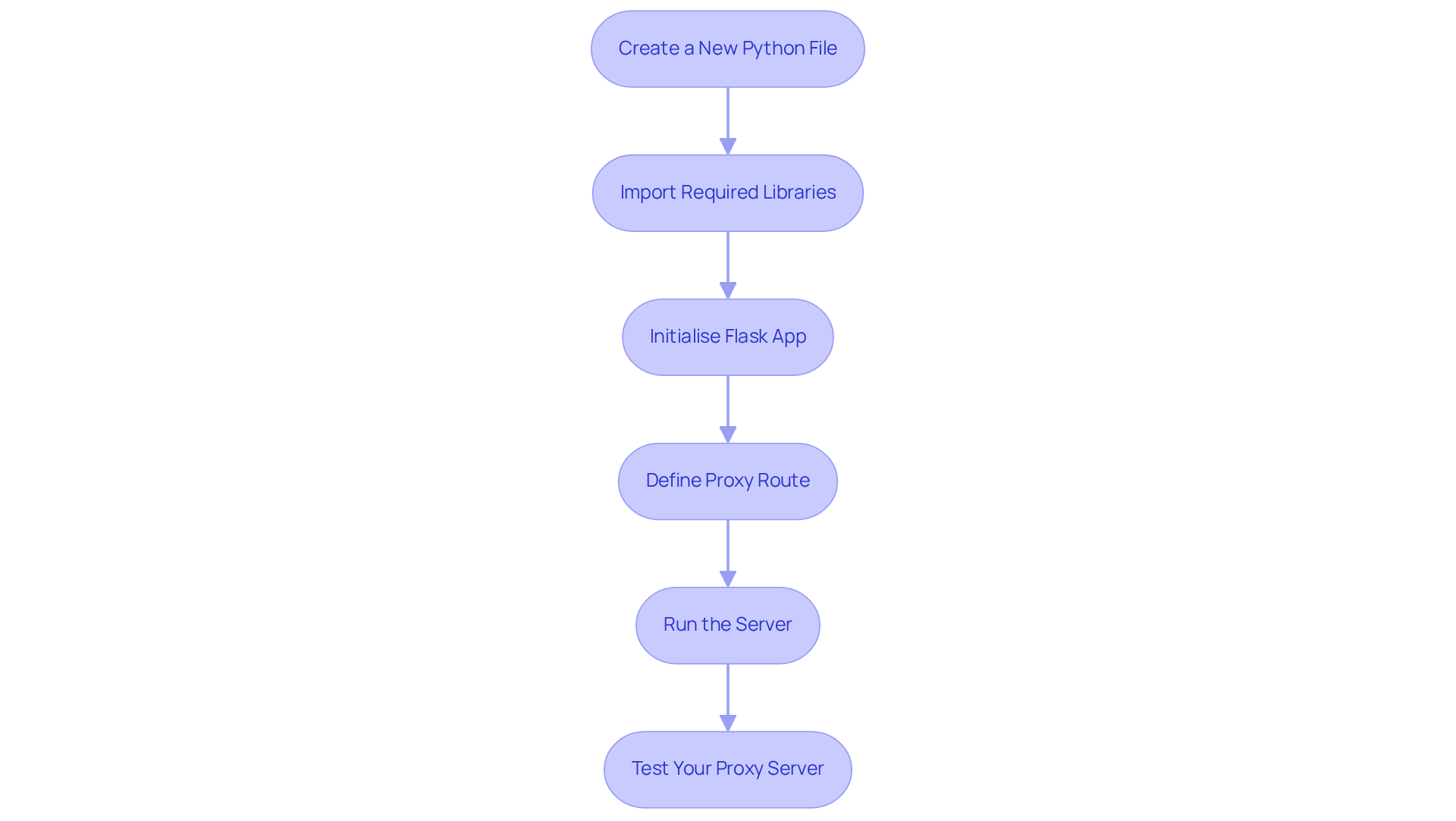

To configure your Python proxy server using Appstractor's efficient web data extraction solutions, follow these steps:

- Create a New Python File: Open your preferred code editor and create a new file named proxy_server.py.

- Import Required Libraries: At the top of your file, import the necessary libraries:

      from flask import Flask, request
      import requests

- Initialise Flask App: Create an instance of the Flask app:

      app = Flask(__name__)

- Define Proxy Route: Set up a route to handle incoming requests:

      @app.route('/proxy', methods=['GET', 'POST'])
      def proxy():
          # The target URL is read from the ?url= query parameter
          url = request.args.get('url')
          if not url:
              return 'Missing "url" query parameter', 400
          # Forward the request using the same method and body as the caller
          response = requests.request(request.method, url, data=request.get_data(), timeout=10)
          return response.content, response.status_code

- Run the Server: Add the following code to run the server:

      if __name__ == '__main__':
          app.run(port=5000)

- Test Your Proxy Server: Run your script in the terminal:

      python proxy_server.py

  Then, in your browser, navigate to http://localhost:5000/proxy?url=http://example.com to test if your proxy server is functioning correctly.
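The browser test works because the target URL is simple. When the target has its own query string, it generally needs to be percent-encoded before being placed in the url parameter; otherwise everything after the first & is read as a separate parameter of the proxy request. A minimal sketch, assuming the server from the steps above is listening on localhost:5000; the helper name build_proxy_request is hypothetical:

```python
from urllib.parse import urlencode

# Assumed address of the local Flask proxy from the steps above.
PROXY_ENDPOINT = "http://localhost:5000/proxy"


def build_proxy_request(target_url, endpoint=PROXY_ENDPOINT):
    """Return the full URL that asks the local proxy to fetch target_url."""
    # urlencode percent-encodes characters such as '&', '?' and '=' so the
    # whole target survives as a single 'url' parameter.
    return f"{endpoint}?{urlencode({'url': target_url})}"


print(build_proxy_request("http://example.com/search?q=python&page=2"))
# prints http://localhost:5000/proxy?url=http%3A%2F%2Fexample.com%2Fsearch%3Fq%3Dpython%26page%3D2
```

When calling the proxy with Requests instead of a browser, passing params={'url': target_url} to requests.get performs the same encoding.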

Common Issues to Consider

When using your proxy server, be aware of potential issues such as:

- Error Code 403: This indicates that the server refuses to authorise the request. Ensure that the URL you are trying to access is correct and that you have permission to view it.

- Error Code 407: This indicates that the proxy requires authentication. If your proxy host requires authentication, include your credentials in the proxy URL, in the form http://username:password@host:port.
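For the 407 case, the credentials-in-URL format can be built as follows. This is a sketch under stated assumptions: the username, password and host are made-up placeholders, and authenticated_proxies is a hypothetical helper, not a Requests function. The resulting dictionary is the shape Requests accepts for its proxies argument.

```python
from urllib.parse import quote


def authenticated_proxies(username, password, host, port):
    """Build a Requests-style proxies dict with credentials in the URL."""
    # Percent-encode the credentials so characters like '@' or ':' in a
    # password do not break the URL structure.
    user = quote(username, safe="")
    secret = quote(password, safe="")
    proxy_url = f"http://{user}:{secret}@{host}:{port}"
    return {"http": proxy_url, "https": proxy_url}


# Hypothetical credentials and host, for illustration only.
proxies = authenticated_proxies("scraper", "p@ss:word", "proxy.example.com", 8080)
print(proxies["http"])
# prints http://scraper:p%40ss%3Aword@proxy.example.com:8080
```

The dictionary would then be passed as requests.get(url, proxies=proxies, timeout=10). In real projects, credentials are better read from environment variables than written into the script.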

Modifying HTTP Headers

To enhance your proxy server's functionality and avoid detection by target websites, consider modifying HTTP headers. For example, you can set the User-Agent header to mimic a real browser, which can improve your web scraping efficiency.
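As one way to apply this, the sketch below sends browser-like headers with Requests. The header values and the names BROWSER_HEADERS, fetch_with_headers and preview_headers are illustrative assumptions, not values any particular site requires.

```python
import requests

# Illustrative browser-like headers; the exact values are assumptions.
BROWSER_HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
    ),
    "Accept-Language": "en-GB,en;q=0.9",
}


def fetch_with_headers(url, headers=None, timeout=10):
    """Fetch url, sending custom headers instead of the Requests defaults."""
    return requests.get(url, headers=headers or BROWSER_HEADERS, timeout=timeout)


def preview_headers(url, headers=None):
    """Build the request without sending it, to inspect the final headers."""
    request = requests.Request("GET", url, headers=headers or BROWSER_HEADERS)
    return request.prepare().headers
```

Inside the Flask route, the same headers dictionary could be passed to the outgoing call so that every proxied request carries it.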

By adhering to these steps and taking into account these typical problems, you will have a basic script operational, prepared to manage requests effectively. For more advanced data mining solutions, consider utilising Appstractor's rotating servers and full-service options to enhance your web data extraction process.

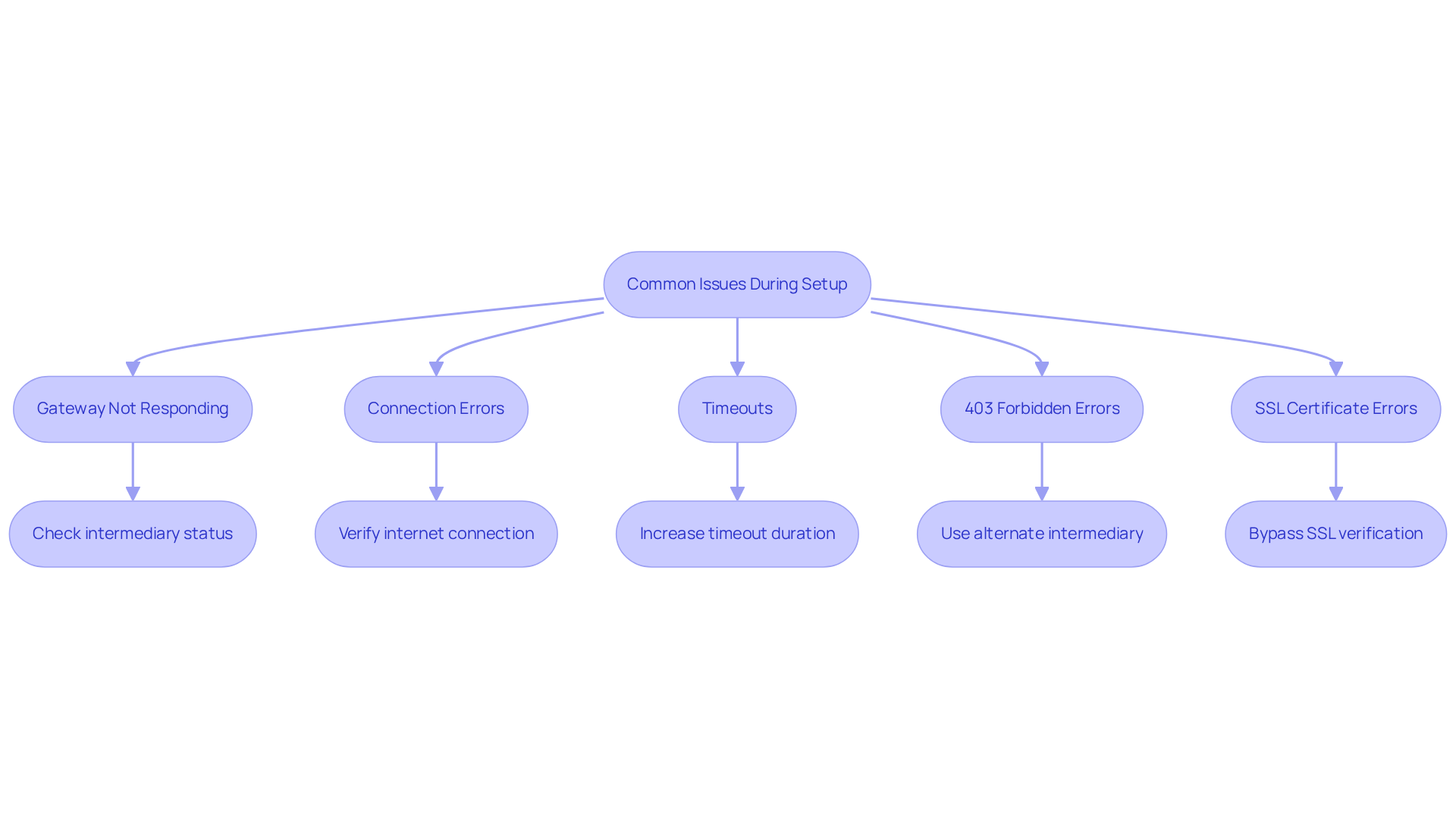

Troubleshoot Common Issues During Setup

When configuring your Python proxy server with Appstractor's services, you may encounter several common challenges. Below are solutions designed to assist you in troubleshooting effectively:

- Proxy Not Responding: Ensure that the proxy you are trying to reach is online and functioning. Review your proxy list for valid options, particularly if you are utilising Appstractor's Rotating Servers, which activate within 24 hours.

- Connection Errors: Should you face connection issues, verify that your internet connection is stable and that the proxy server is accessible. For a more reliable solution, consider using Appstractor's Full Service for turnkey data delivery.

- Timeouts: If requests are timing out, you may need to increase the timeout duration in your requests:

      response = requests.get(url, timeout=10)

- 403 Forbidden Errors: This error may arise if the target website blocks your intermediary. In such cases, try using an alternate intermediary from Appstractor or check if the website imposes restrictions against scraping.

- SSL Certificate Errors: If you encounter SSL errors, you can bypass SSL verification (though this is not recommended for production) by adding:

      response = requests.get(url, verify=False)
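The issues in this list map onto distinct exception classes in Requests, so a single helper can report which one occurred. The following is a minimal sketch, not a complete diagnostic tool; the function name diagnose and its return labels are assumptions made for illustration.

```python
import requests


def diagnose(url, proxies=None, timeout=10, get=requests.get):
    """Try one request and return (status, detail) naming what went wrong.

    `get` defaults to requests.get; it is a parameter only so the function
    can be exercised without network access.
    """
    try:
        response = get(url, proxies=proxies, timeout=timeout)
        response.raise_for_status()
    # ProxyError and SSLError are subclasses of ConnectionError, so they
    # must be caught before the more general handler below.
    except requests.exceptions.ProxyError as exc:
        return "proxy_error", str(exc)
    except requests.exceptions.SSLError as exc:
        return "ssl_error", str(exc)
    except requests.exceptions.Timeout as exc:
        return "timeout", str(exc)
    except requests.exceptions.ConnectionError as exc:
        return "connection_error", str(exc)
    except requests.exceptions.HTTPError as exc:
        # Raised by raise_for_status() for 4xx/5xx responses such as 403.
        return "http_error", str(exc.response.status_code)
    return "ok", str(response.status_code)
```

A call such as diagnose("http://example.com", proxies={"http": proxy_url, "https": proxy_url}) then indicates whether to swap the proxy, raise the timeout, or look at the target site's response code.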

By understanding these common issues and their respective solutions, you can troubleshoot effectively and ensure your proxy server operates smoothly, leveraging Appstractor's advanced data mining solutions for seamless integration.

Conclusion

Mastering the setup of a Python proxy server is crucial for anyone aiming to enhance their web scraping capabilities. Proxy servers serve as intermediaries between clients and the internet, effectively masking IP addresses while improving traffic management and security. This guide offers a comprehensive approach to understanding the significance of proxy servers, equipping users with essential tools and step-by-step instructions for successful configuration.

Key insights include:

- The importance of utilising reliable libraries such as Requests and Flask

- The necessity of selecting dependable proxy lists for optimal performance

- Troubleshooting common issues, including connection errors and timeout problems

These insights ensure users are well-prepared to tackle potential setbacks during setup. Additionally, leveraging services like Appstractor can further enhance the efficiency and reliability of web scraping projects.

In conclusion, the ability to effectively set up and utilise a Python proxy server is a valuable skill for developers and data analysts alike. By adhering to the outlined steps and grasping the underlying principles, users can significantly boost their web scraping efficiency. Embracing these practices not only facilitates better data extraction but also promotes ethical information gathering and supports compliance with regulations. Take the next step in your Python journey by implementing these strategies and maximising the potential of your web scraping endeavours.

Frequently Asked Questions

What is a proxy server?

A proxy server is an intermediary that directs requests and responses between clients and the internet, enhancing efficiency in web communication.

Why are proxy servers important for web scraping?

Proxy servers are important for web scraping because they mask the user's IP address, manage traffic, enhance security, and help evade IP bans while accessing geo-restricted content.

How do rotating proxy servers benefit web scraping?

Rotating proxy servers minimise the risk of detection and facilitate smooth information retrieval from various sources, which is particularly important for large-scale projects.

What are the advantages of using Appstractor's information scraping solutions?

Appstractor's solutions offer a global self-healing IP pool, ensuring continuous uptime and exceptional support, which are essential for successful scraping endeavours.

What do experts say about the use of intermediaries in web scraping?

Experts, such as Samantha Nobile from Koozai and Paul Rogers, emphasise that strategic use of intermediaries enhances performance and supports compliance with ethical information gathering practices, including GDPR regulations.

Why is it important to understand proxy servers when using Python for data extraction?

Understanding proxy servers is crucial for leveraging Python effectively in data extraction tasks, as it enables users to enhance their scraping efficiency and maintain anonymity.
