Introduction

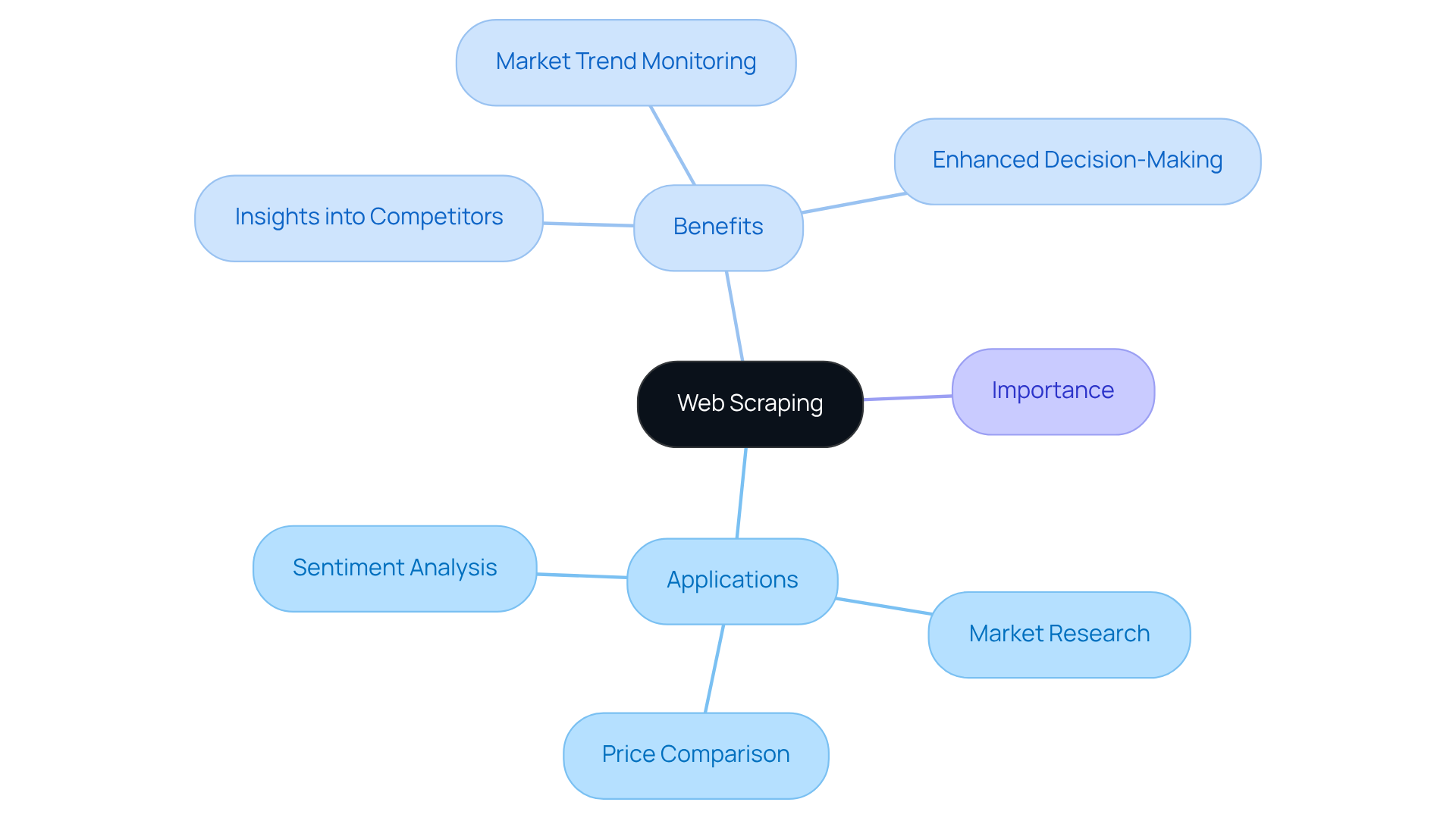

Web scraping has become an essential tool for businesses aiming to leverage the extensive data available online. By automating the collection of information from various websites, organisations can gain valuable insights into market trends, competitor strategies, and customer sentiments. This ultimately enhances their decision-making processes.

However, navigating the complexities of web scraping - such as establishing the right environment and addressing common challenges - can be daunting for many. To master web scraping with JavaScript and fully realise its potential, what essential steps should one take?

Understand Web Scraping and Its Importance in Data Collection

Web scraping is an automated method for retrieving information from websites. This technique enables businesses to gather large volumes of data quickly and efficiently, serving various purposes such as:

- Market research

- Price comparison

- Sentiment analysis

In the current digital landscape, where data is a crucial asset, understanding web scraping is vital for effectively leveraging online information.

By employing web data extraction, organisations can gain valuable insights into competitor strategies, monitor market trends, and enhance their decision-making processes. This capability not only streamlines information gathering but also positions businesses to respond proactively to market dynamics.

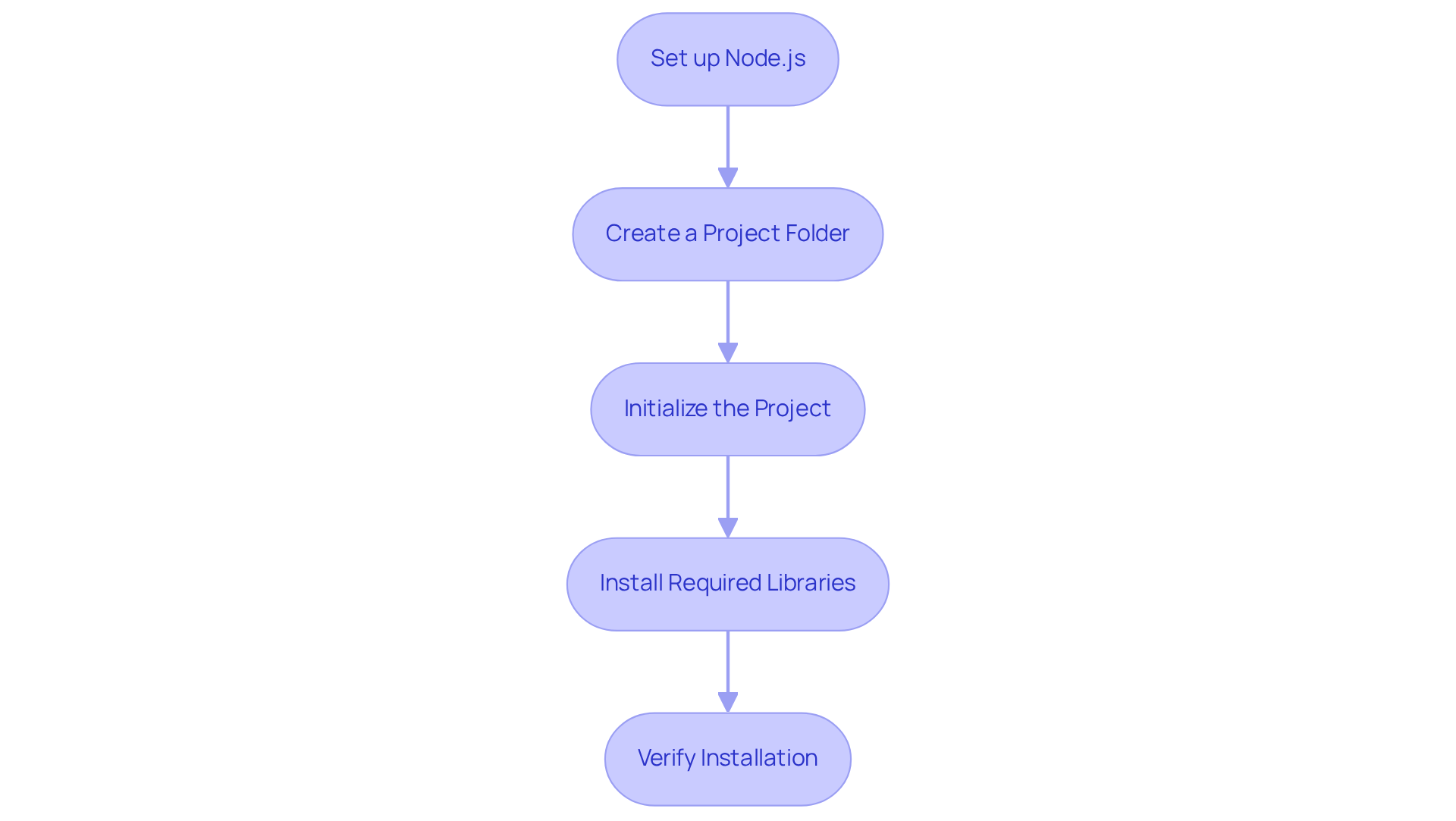

Set Up Your JavaScript Environment for Web Scraping

To begin web scraping with JavaScript, it is essential to properly set up your environment. Follow these structured steps:

- Install Node.js: Download and install Node.js from the official website. This lets you run JavaScript outside the browser, on your own machine or on a server.

- Create a Project Folder: Establish a new folder for your project. This organisation will help keep your files structured and manageable.

- Initialise the Project: Open your terminal, navigate to your project folder, and execute `npm init -y` to create a package.json file.

- Install Required Libraries: Use the following commands to install the essential libraries:

  - `npm install axios cheerio` for making HTTP requests and parsing HTML.

  - Optionally, `npm install puppeteer` for scraping dynamically rendered content.

- Verify Installation: Confirm that Node.js and the libraries are correctly installed by running `node -v` and checking the installed packages in your project folder.

With your environment set up, you are ready to start building your web scraper in JavaScript.

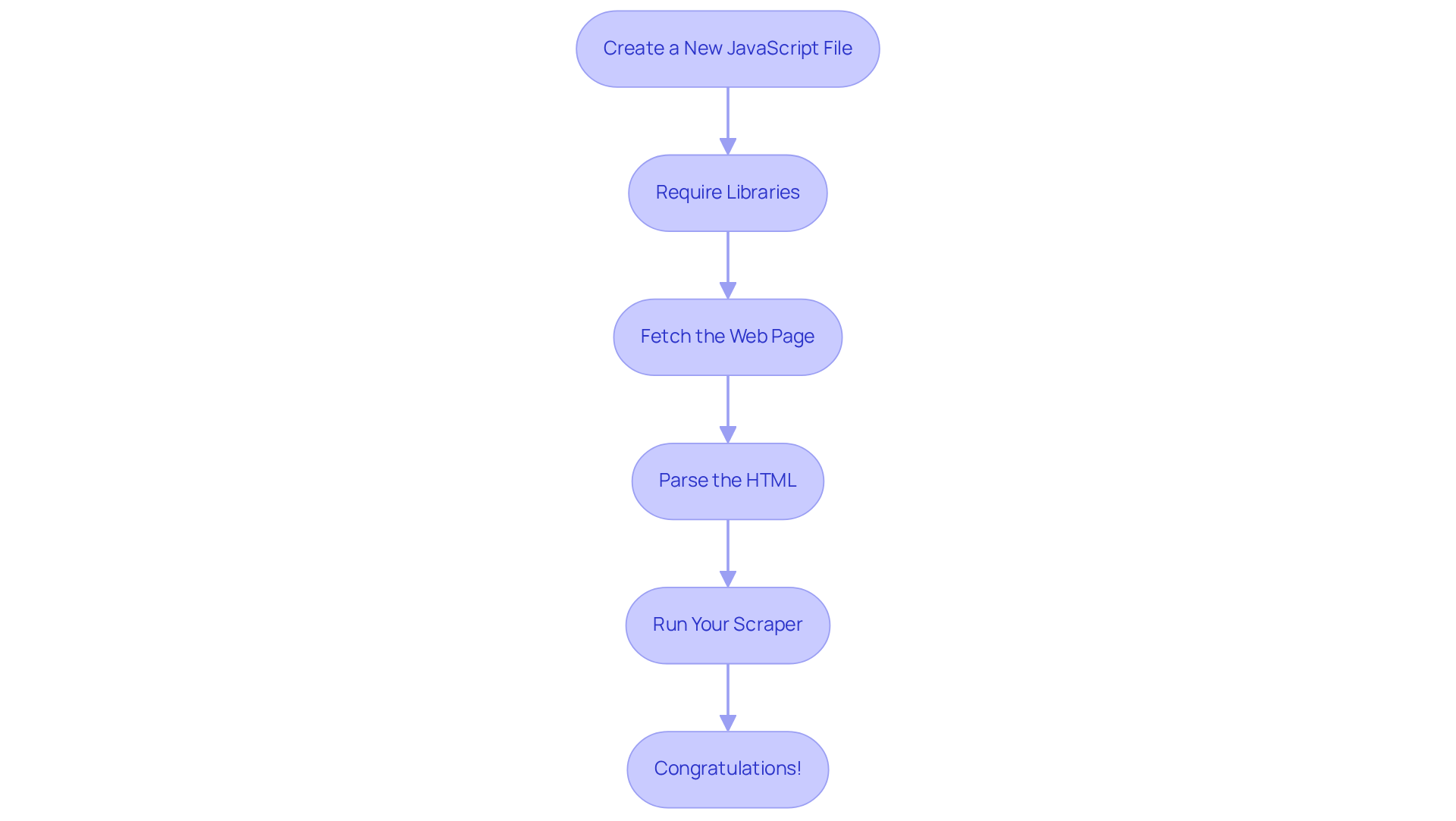

Build Your First Web Scraper: A Step-by-Step Guide

Now that your environment is set up, let's build your first web scraper using Axios and Cheerio:

- Create a New JavaScript File: In your project folder, create a new file named `scraper.js`.

- Require Libraries: At the top of your `scraper.js` file, include the following code:

      const axios = require('axios');
      const cheerio = require('cheerio');

- Fetch the Web Page: Use Axios to fetch the HTML content of the target website:

      axios.get('https://example.com')
        .then(response => {
          const html = response.data;
          const $ = cheerio.load(html);
          // Your scraping logic will go here
        })
        .catch(error => console.error(error));

- Parse the HTML: Inside the `.then()` block, use Cheerio to select and extract the desired information. For example, to get all the headings:

      $('h1, h2, h3').each((index, element) => {
        console.log($(element).text());
      });

- Run Your Scraper: Save your file and run it in the terminal using `node scraper.js`. You should see the extracted headings printed in the console.
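The same steps can also be written with async/await, which keeps fetching and parsing in one function and returns the headings instead of printing them directly. This is a sketch rather than a definitive implementation: `scrapeHeadings` and `normalizeHeading` are hypothetical helper names, and the libraries are loaded inside the function only so that the text helper can be used on its own.

```javascript
// Collapse runs of whitespace so each heading reads as one clean line.
function normalizeHeading(text) {
  return text.replace(/\s+/g, ' ').trim();
}

// Fetch a page and return the text of its h1, h2, and h3 elements.
async function scrapeHeadings(url) {
  // Loaded here (rather than at the top) so normalizeHeading works without them.
  const axios = require('axios');
  const cheerio = require('cheerio');

  const response = await axios.get(url);
  const $ = cheerio.load(response.data);

  return $('h1, h2, h3')
    .map((index, element) => normalizeHeading($(element).text()))
    .get() // convert the Cheerio collection into a plain array
    .filter((heading) => heading.length > 0);
}
```

Adding `scrapeHeadings('https://example.com').then(console.log).catch(console.error);` at the bottom of the file would print the headings as an array when you run it with Node.js.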

Congratulations! You've built your first web scraper. You can now adjust the extraction logic to obtain other types of data as needed. By utilising Appstractor's rotating proxies and comprehensive services, you can extend your web scraping with JavaScript for efficient, automated information retrieval tailored to your business requirements.
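Routing requests through a proxy, such as the rotating proxies mentioned above, can be sketched with the `proxy` request option that Axios provides. The environment variable names (`PROXY_HOST`, `PROXY_PORT`, `PROXY_USER`, `PROXY_PASS`) and the helper `buildProxyConfig` are assumptions for illustration; the actual host, port, and credentials come from your proxy provider.

```javascript
// Build an Axios request config that routes traffic through a proxy.
// The env keys below are hypothetical names chosen for this example.
function buildProxyConfig(env) {
  if (!env.PROXY_HOST || !env.PROXY_PORT) {
    return {}; // no proxy configured: Axios connects directly
  }

  const proxy = {
    protocol: 'http',
    host: env.PROXY_HOST,
    port: Number(env.PROXY_PORT),
  };

  // Add user:pass credentials only when both values are provided.
  if (env.PROXY_USER && env.PROXY_PASS) {
    proxy.auth = { username: env.PROXY_USER, password: env.PROXY_PASS };
  }

  return { proxy };
}

const requestConfig = buildProxyConfig(process.env);
console.log(requestConfig.proxy ? `Using proxy ${requestConfig.proxy.host}` : 'No proxy configured');
```

The result can be passed as the second argument to a request, for example `axios.get('https://example.com', requestConfig)`. Reading credentials from the environment keeps them out of the source file.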

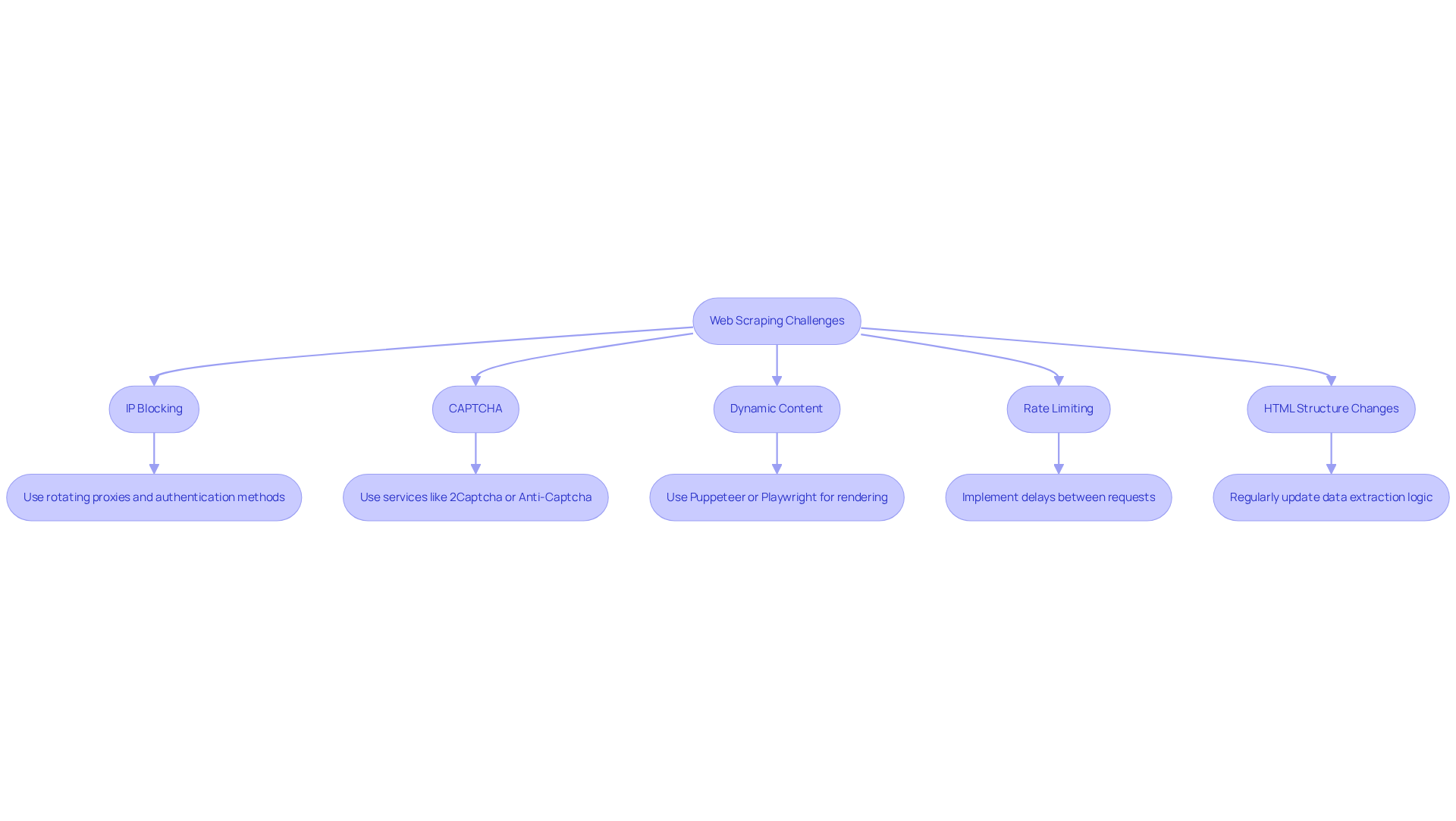

Overcome Challenges in Web Scraping: Tips and Best Practices

Web scraping can present various challenges. Here are some common issues and strategies to overcome them:

- IP Blocking: Websites may block your IP address if they detect data extraction activity. To reduce this risk, use Appstractor's rotating proxies, which are part of a global self-healing IP pool designed for continuous uptime. This helps keep your data extraction activities unnoticed and uninterrupted. Additionally, authenticate with the proxy using a method such as user:pass credentials or an IP whitelist.

- CAPTCHA: Many sites implement CAPTCHAs to prevent automated access. To address this, consider using services like 2Captcha or Anti-Captcha to programmatically solve these challenges.

- Dynamic Content: If the website uses JavaScript to load content, you may need a headless browser tool such as Puppeteer or Playwright to render the page before data extraction.

- Rate Limiting: To avoid being flagged as a bot, implement delays between requests. Use `setTimeout` in your code to space out your requests. Appstractor's integrated rotation capabilities can assist in managing session stickiness, enabling smoother information extraction.

- HTML Structure Changes: Websites often change their layout, which can disrupt your scraper. Regularly check and update your data extraction logic to adapt to these changes.
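The rate-limiting advice above can be sketched by wrapping `setTimeout` in a promise and awaiting it between requests. The helper names `sleep` and `fetchSequentially` are hypothetical, the one-second default delay is an arbitrary assumption, and `fetchPage` stands for whatever function you use to request a page.

```javascript
// Resolve after the given number of milliseconds.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Request URLs one at a time, pausing between consecutive requests.
// fetchPage is supplied by the caller, e.g. (url) => axios.get(url).
async function fetchSequentially(urls, fetchPage, delayMs = 1000) {
  const results = [];
  for (let i = 0; i < urls.length; i++) {
    if (i > 0) {
      await sleep(delayMs); // wait before every request except the first
    }
    results.push(await fetchPage(urls[i]));
  }
  return results;
}
```

Processing URLs in a plain loop, rather than with `Promise.all`, is what keeps the requests from firing at the same time.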

By being aware of these challenges and implementing best practices, including leveraging Appstractor's enterprise-grade data scraping solutions and support, you can enhance the reliability and effectiveness of your web scraping with JavaScript.
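For the dynamic content case, one possible approach is to let Puppeteer render the page and then hand the resulting HTML to Cheerio as before. The sketch below assumes Puppeteer is installed; `renderPage` is a hypothetical helper, and it receives the Puppeteer module as an argument so the function itself has no hard dependency.

```javascript
// Render a page in a headless browser and return the final HTML.
// Pass in the module, e.g. renderPage(require('puppeteer'), url).
async function renderPage(puppeteer, url) {
  const browser = await puppeteer.launch();
  try {
    const page = await browser.newPage();
    // Wait until network activity has mostly settled before reading the DOM.
    await page.goto(url, { waitUntil: 'networkidle2' });
    return await page.content();
  } finally {
    await browser.close(); // always release the browser, even on errors
  }
}
```

The returned string can be passed to `cheerio.load()` in place of `response.data`, so the existing extraction logic stays unchanged.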

Conclusion

Mastering web scraping with JavaScript presents a multitude of opportunities in data collection and analysis. This tutorial has outlined the essential steps to establish a successful web scraping environment, construct a functional scraper, and navigate common challenges encountered in the process. By grasping the significance of web scraping, individuals can leverage extensive data to inform strategic decisions and secure a competitive advantage.

Key insights include the importance of web scraping for:

- Market research

- Price comparison

- Sentiment analysis

The tutorial also details the step-by-step setup of a JavaScript environment using Node.js, along with essential libraries such as Axios and Cheerio. Additionally, addressing challenges like IP blocking, CAPTCHA, and dynamic content loading is vital for sustaining an effective scraping strategy. Implementing best practices such as utilising rotating proxies and managing request rates enhances the reliability of data extraction efforts.

As data remains a critical asset for businesses, harnessing the power of web scraping with JavaScript is increasingly relevant. Adopting these techniques equips individuals and organisations with the necessary tools for efficient information retrieval and fosters a proactive approach to adapting to an ever-evolving digital landscape. Take the next step in mastering web scraping and unlock the potential of data-driven insights for your projects.

Frequently Asked Questions

What is web scraping?

Web scraping is an automated method for retrieving information from websites.

Why is web scraping important for businesses?

Web scraping allows businesses to gather large volumes of data quickly and efficiently, which can be used for purposes such as market research, price comparison, and sentiment analysis.

How does web scraping benefit organisations?

By employing web data extraction, organisations can gain valuable insights into competitor strategies, monitor market trends, and enhance their decision-making processes.

What are some applications of web scraping?

Applications of web scraping include market research, price comparison, and sentiment analysis.

How does web scraping help businesses respond to market dynamics?

Web scraping streamlines information gathering, enabling businesses to proactively respond to changes in the market.