Introduction

Web scraping has emerged as a powerful tool for extracting data from the internet, enabling businesses and researchers to base decisions on broad, up-to-date information. This tutorial is a guide for beginners, detailing the essential tools, techniques, and best practices needed to build and optimise a web scraper.

However, as the landscape of web scraping evolves, it brings forth challenges such as ethical considerations and anti-bot measures. These issues raise a critical question:

- How can one effectively gather data while respecting website policies and avoiding detection?

Understand Web Scraping: Definition and Applications

Web scraping, also called web harvesting, is the automated process of retrieving information from websites. The procedure involves fetching web pages and extracting specific data, which can then be organised in a structured format for analysis or further processing.

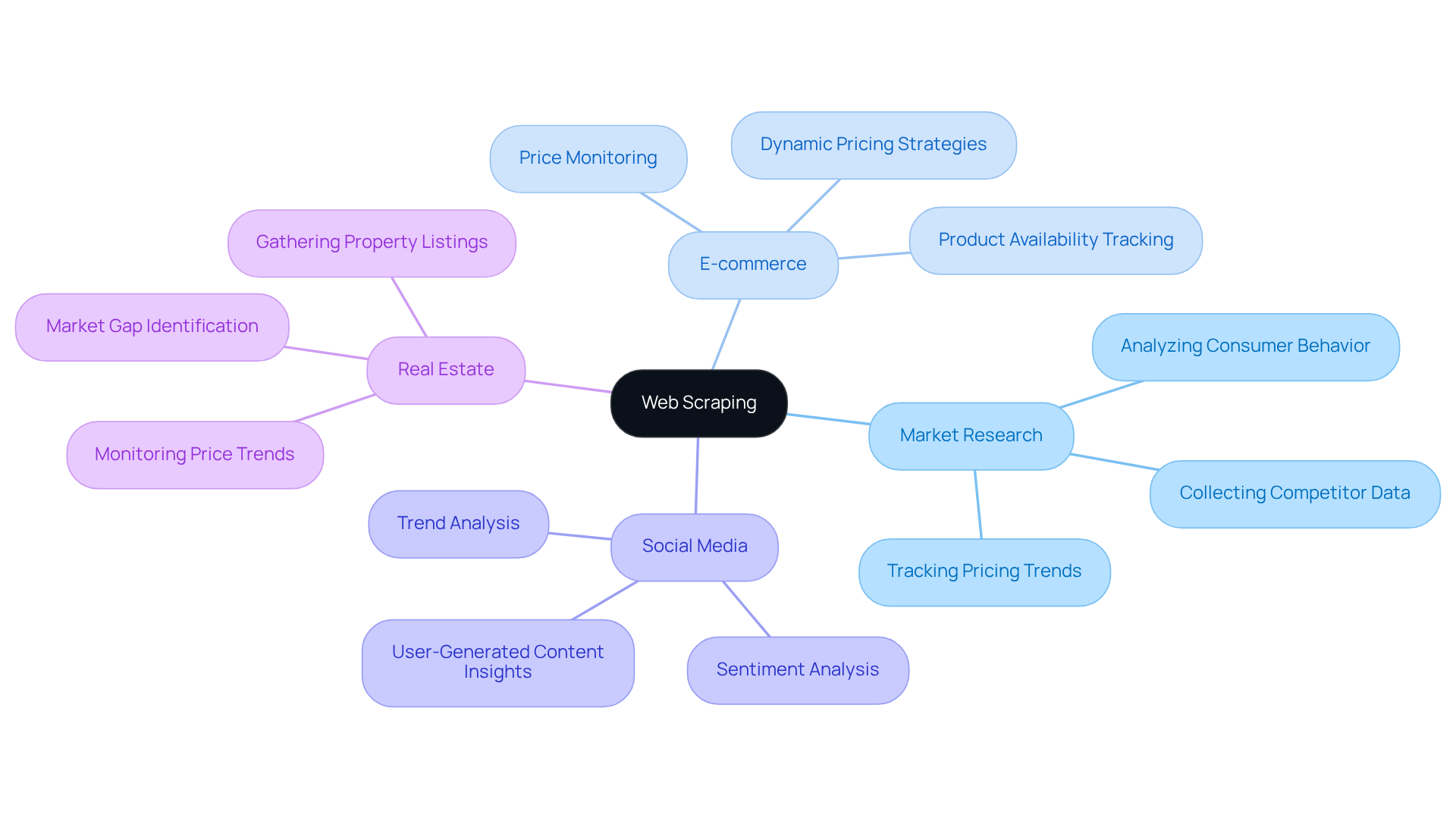

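The applications of web scraping are diverse and impactful:

- Market Research: Collecting data on competitors, pricing, and consumer behaviour.

- E-commerce: Tracking product prices and availability across various platforms.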

- Social Media: Analysing trends and sentiments derived from user-generated content.

- Real Estate: Gathering property listings and monitoring price trends.

By understanding these applications, one can appreciate the significant role of web scraping in facilitating informed decision-making.

Set Up Your Environment: Tools and Libraries for Web Scraping

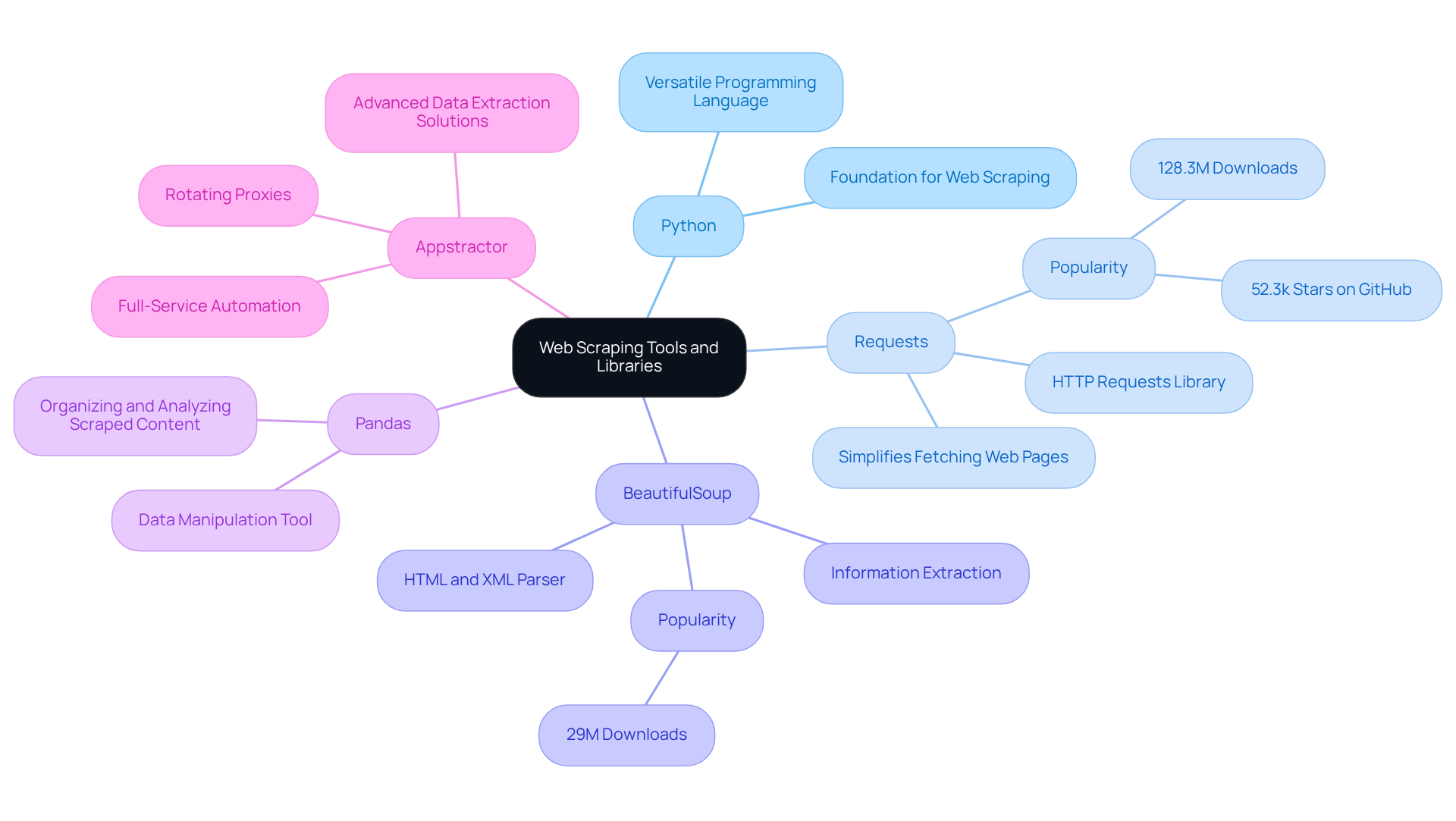

To embark on your web scraping project, it's crucial to establish a robust development environment. Here are the key tools and libraries you will need:

- Python: A versatile programming language that serves as the backbone for web scraping projects.

- Requests: This library simplifies the process of sending HTTP requests, allowing you to fetch web pages with a single function call. It is among the most popular HTTP clients in Python, known for its intuitive API and extensive online resources.

- BeautifulSoup: Recognised as the most commonly used HTML parser in Python, BeautifulSoup excels at parsing HTML and XML files, enabling straightforward information extraction from intricate web structures.

- Pandas: A data analysis library that assists in organising and analysing the content you scrape, making it easier to derive insights.

In addition to these tools, consider a proxy provider such as Appstractor. Its rotating proxies complement these libraries by managing IP addresses for you, which helps keep a scraper running when individual addresses are rate-limited. For enterprises pursuing a more hands-off strategy, Appstractor's managed services can automate the information gathering process, enabling you to concentrate on analysis instead of logistics.

Installation Steps:

- Download and install Python from the official website.

- Use pip to install the necessary libraries:

      pip install requests beautifulsoup4 pandas

This setup will equip you with a solid foundation for building and optimising your web scraper. Furthermore, always adhere to each website's policies to uphold ethical standards in your data collection.
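
After installation, it can help to confirm that each library is importable before writing any scraping code. The sketch below is one way to do that with the standard library. The helper name missing_packages and the REQUIRED_MODULES mapping are illustrative assumptions, not part of any of these libraries. Note that the beautifulsoup4 package is imported under the module name bs4.

```python
from importlib.util import find_spec

# Maps each import name to the pip package that provides it.
# (Illustrative mapping; "beautifulsoup4" is imported as "bs4".)
REQUIRED_MODULES = {
    "requests": "requests",
    "bs4": "beautifulsoup4",
    "pandas": "pandas",
}


def missing_packages(required=REQUIRED_MODULES):
    """Return the pip names of packages whose module cannot be found."""
    return [
        pip_name
        for module_name, pip_name in required.items()
        if find_spec(module_name) is None
    ]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("Missing packages; install with: pip install " + " ".join(missing))
    else:
        print("All scraping libraries are installed.")
```

If the script reports missing packages, rerunning the pip command with the names it prints should resolve them.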

Build Your First Web Scraper: Step-by-Step Guide

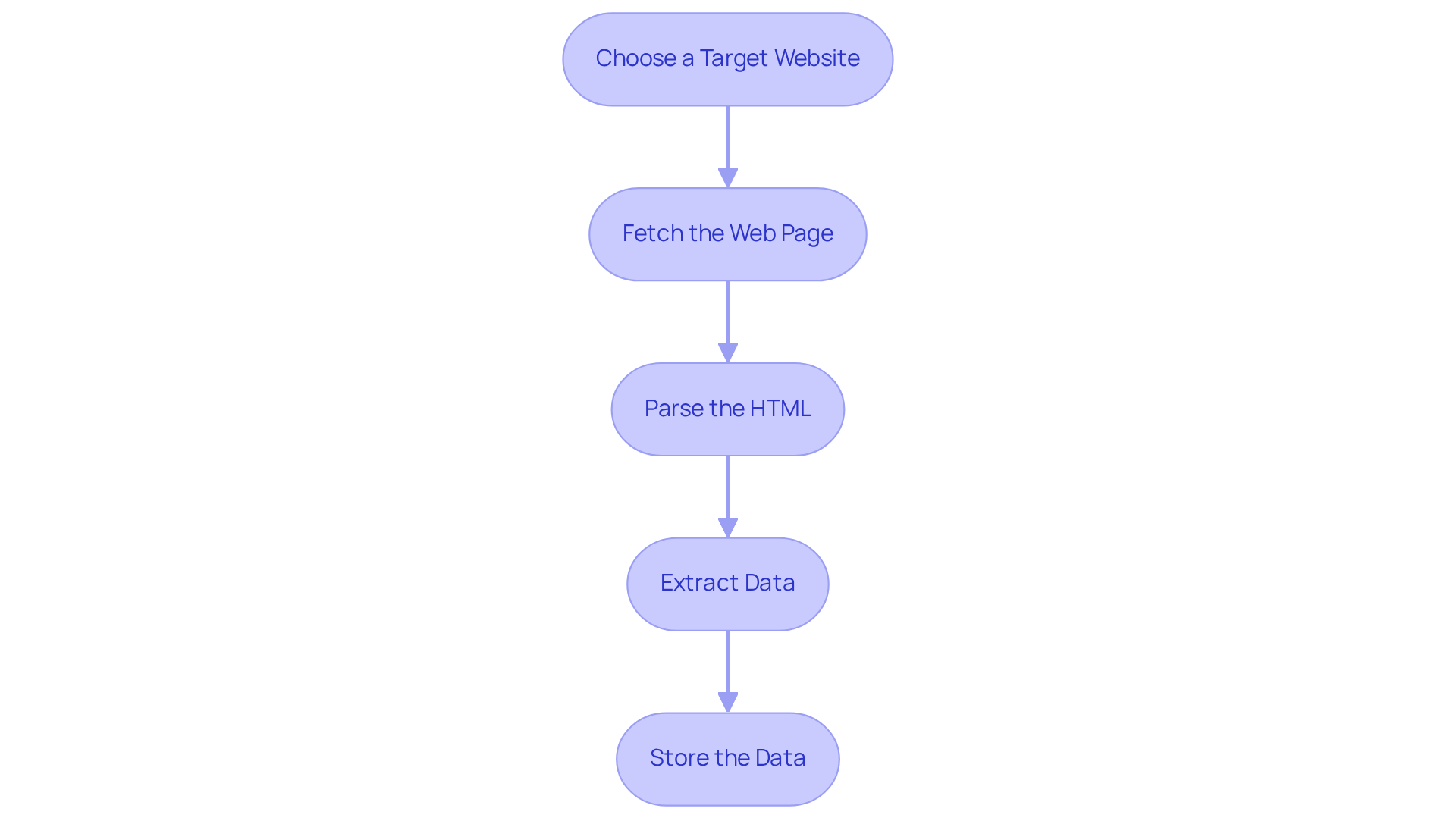

To build your first web scraper, follow these structured steps:

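- Choose a Target Website: Start by selecting a straightforward website to scrape, such as a news site or a product page.

- Fetch the Web Page: Use the Requests library to retrieve the page's HTML content:

      import requests

      url = 'http://example.com'
      # A timeout keeps the script from hanging on an unresponsive server
      response = requests.get(url, timeout=10)
      # Raise an exception for 4xx/5xx responses instead of parsing an error page
      response.raise_for_status()
      html_content = response.text

- Parse the HTML: Employ BeautifulSoup to parse the HTML content:

      from bs4 import BeautifulSoup

      # 'html.parser' is Python's built-in parser, so no extra install is needed
      soup = BeautifulSoup(html_content, 'html.parser')

- Extract Data: Identify the elements that contain the data you need and use BeautifulSoup methods to retrieve them:

      # Example: extract the text of every h2 tag
      titles = soup.find_all('h2')
      for title in titles:
          print(title.text)

- Store the Data: Finally, use Pandas to save the extracted data into a CSV file:

      import pandas as pd

      # One column named 'Title', one row per extracted heading
      data = {'Title': [title.text for title in titles]}
      df = pd.DataFrame(data)
      df.to_csv('scraped_data.csv', index=False)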

By following these steps, you will successfully build your first web scraper!
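
Before pointing a scraper at a live site, the extract and store steps can be tried offline on an inline HTML string. The sketch below is a standard-library stand-in, not the tutorial's own stack: it uses html.parser and csv in place of BeautifulSoup and Pandas so that it runs without extra installs. The names HeadingCollector, extract_titles, titles_to_csv, and SAMPLE_HTML are hypothetical and exist only for this example.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical sample page used instead of a live HTTP request.
SAMPLE_HTML = """
<html><body>
  <h2>First headline</h2>
  <p>Body text.</p>
  <h2>Second <em>headline</em></h2>
</body></html>
"""


class HeadingCollector(HTMLParser):
    """Collect the text of every <h2> element in a document."""

    def __init__(self):
        super().__init__()
        self._in_h2 = False
        self._parts = []
        self.titles = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_h2 = True
            self._parts = []

    def handle_data(self, data):
        # Text inside nested tags (such as <em>) is still part of the heading
        if self._in_h2:
            self._parts.append(data)

    def handle_endtag(self, tag):
        if tag == "h2" and self._in_h2:
            self.titles.append("".join(self._parts).strip())
            self._in_h2 = False


def extract_titles(html):
    """Return the text of all h2 headings found in the HTML string."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    return collector.titles


def titles_to_csv(titles):
    """Return CSV text with a 'Title' header and one row per title."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["Title"])
    writer.writerows([title] for title in titles)
    return buffer.getvalue()


if __name__ == "__main__":
    print(titles_to_csv(extract_titles(SAMPLE_HTML)))
```

Keeping extraction in a function that takes an HTML string makes it possible to check the parsing logic against saved pages, so a change in the site's markup shows up as a failed check instead of an empty CSV file.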

Master Advanced Techniques: Best Practices and Error Handling

To ensure your web scraper is both effective and resilient, adhere to the following best practices:

- Respect Robots.txt: Always check the website's robots.txt file to understand which parts of the site crawlers are permitted to access. This practice aligns with ethical standards and helps avoid potential legal issues.

- Implement Error Handling: Use try-except blocks to manage potential errors, such as network issues or changes in HTML structure. For example:

      try:
          # Your scraping code goes here; this assumes requests is
          # imported and url is defined as in the previous section
          response = requests.get(url, timeout=10)
          response.raise_for_status()
      except Exception as e:
          print(f'Error occurred: {e}')

  This approach is crucial because such errors are becoming more common, reflecting the ongoing arms race between scrapers and anti-bot systems.

- Rotate User Agents: Regularly change your User-Agent string to mimic different browsers. This practice helps avoid detection and potential blocks by the target website.

- Limit Request Rates: To prevent overwhelming the server, space out your requests. Calling time.sleep() between requests introduces the necessary delays, reducing the likelihood of triggering rate limits.

- Leverage a Scraping Service: Appstractor offers both self-service and managed services. According to the vendor, these come with a 99.9% uptime guarantee, enhancing the resilience of your crawlers and allowing for continuous monitoring of your scraping activities.

- Clean and Normalise Data: After scraping, ensure your information is cleaned and normalised for consistency and usability. This step is vital as businesses increasingly rely on scraped data, with a reported 70% of AI models depending on it.
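
As a minimal sketch of the cleaning step, the function below normalises scraped titles using only the standard library. The name clean_titles and the specific rules (NFKC Unicode normalisation, collapsing whitespace, case-insensitive de-duplication) are assumptions chosen for illustration; real datasets usually need rules tailored to their fields.

```python
import unicodedata


def clean_titles(raw_titles):
    """Normalise whitespace and Unicode form, then drop blanks and duplicates."""
    cleaned = []
    seen = set()
    for raw in raw_titles:
        # NFKC folds compatibility characters such as non-breaking spaces
        text = unicodedata.normalize("NFKC", raw)
        # Collapse runs of spaces, tabs, and newlines into single spaces
        text = " ".join(text.split())
        # Compare case-insensitively so "Hello" and "hello" count as one entry
        key = text.casefold()
        if text and key not in seen:
            seen.add(key)
            cleaned.append(text)
    return cleaned
```

The first spelling of each title is kept, so the output preserves the order in which items were scraped.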

By mastering the advanced techniques presented in this tutorial, you will significantly enhance the reliability and efficiency of your web scrapers, positioning yourself for success in the evolving landscape of data collection.
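
The request-side practices from this section (robots.txt checks, error handling, User-Agent rotation, and rate limiting) can be sketched together using only the standard library. Everything named below is an assumption for illustration: the robots.txt rules, the bot name MyScraperBot, the placeholder User-Agent strings, and the helpers plan_requests and retry_with_backoff are hypothetical, and the actual fetch is left to the caller.

```python
import itertools
import time
from urllib.robotparser import RobotFileParser

# Hypothetical values for illustration only.
BOT_NAME = "MyScraperBot"
USER_AGENTS = ["ExampleBrowser/1.0", "ExampleBrowser/2.0"]
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def build_robot_parser(robots_txt):
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def plan_requests(urls, parser, delay_seconds=1.0, sleep=time.sleep):
    """Yield (url, headers) for URLs robots.txt allows, pausing between them."""
    agents = itertools.cycle(USER_AGENTS)
    is_first = True
    for url in urls:
        if not parser.can_fetch(BOT_NAME, url):
            continue  # Skip paths the site asks crawlers to avoid
        if not is_first:
            sleep(delay_seconds)  # Space out requests to limit server load
        is_first = False
        yield url, {"User-Agent": next(agents)}


def retry_with_backoff(action, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call action(); on failure wait base_delay, then 2x, 4x, and retry."""
    for attempt in range(attempts):
        try:
            return action()
        except Exception as error:
            if attempt == attempts - 1:
                raise  # Out of attempts: let the caller see the error
            delay = base_delay * 2 ** attempt
            print(f"Attempt {attempt + 1} failed ({error}); retrying in {delay}s")
            sleep(delay)
```

In a real script, each (url, headers) pair from plan_requests would be passed to a fetch call wrapped in retry_with_backoff, for instance a function that calls requests.get with those headers and a timeout. The sleep parameter is injectable so the timing logic can be checked without actually waiting.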

Conclusion

Web scraping is a crucial tool in the digital landscape, enabling automated data extraction from websites to support informed decision-making across various industries. By mastering web scraping, individuals can leverage data, transforming raw information into actionable insights that drive business strategies and enhance market understanding.

This tutorial explored key concepts, including essential tools and libraries for successful web scraping, such as:

- Python

- Requests

- BeautifulSoup

- Pandas

Step-by-step instructions guided readers through building a basic scraper, while advanced techniques highlighted best practices for maintaining ethical standards, ensuring robust error handling, and optimising scraping efficiency. The importance of adhering to guidelines, such as respecting robots.txt and implementing user-agent rotation, was emphasised to safeguard against potential pitfalls.

Ultimately, the ability to effectively scrape and analyse data is increasingly vital in today’s data-driven world. By applying the knowledge gained from this tutorial, individuals can create their first web scraper and refine their skills to adapt to the evolving challenges of data extraction. Embracing these practices will empower users to harness the full potential of web scraping, paving the way for innovative applications and informed decision-making in their respective fields.

Frequently Asked Questions

What is web scraping?

Web scraping is the automated process of retrieving information from websites, which involves fetching web pages and extracting specific data to organise it in a structured format for analysis or further processing.

What are the main applications of web scraping?

The main applications of web scraping include market research, e-commerce price tracking, social media trend analysis, and real estate data gathering.

How is web scraping used in market research?

In market research, web scraping is used to collect data on competitors, pricing, and consumer behaviour.

What role does web scraping play in e-commerce?

In e-commerce, web scraping is used to track product prices and availability across various platforms.

How can web scraping benefit social media analysis?

Web scraping can benefit social media analysis by allowing for the analysis of trends and sentiments derived from user-generated content.

What is the significance of web scraping in real estate?

In real estate, web scraping is significant for gathering property listings and monitoring price trends.
