Introduction

Mastering the art of web scraping is essential for extracting valuable information, especially from Google Search. As businesses increasingly depend on external web data for insights, effectively utilising Python for this purpose becomes crucial. However, the complexities of web scraping - such as ethical considerations and technical challenges - raise the question: how can one navigate this landscape to create a reliable Google Search scraper? This guide provides a structured, step-by-step approach to equip readers with the necessary skills to scrape Google Search results efficiently and ethically.

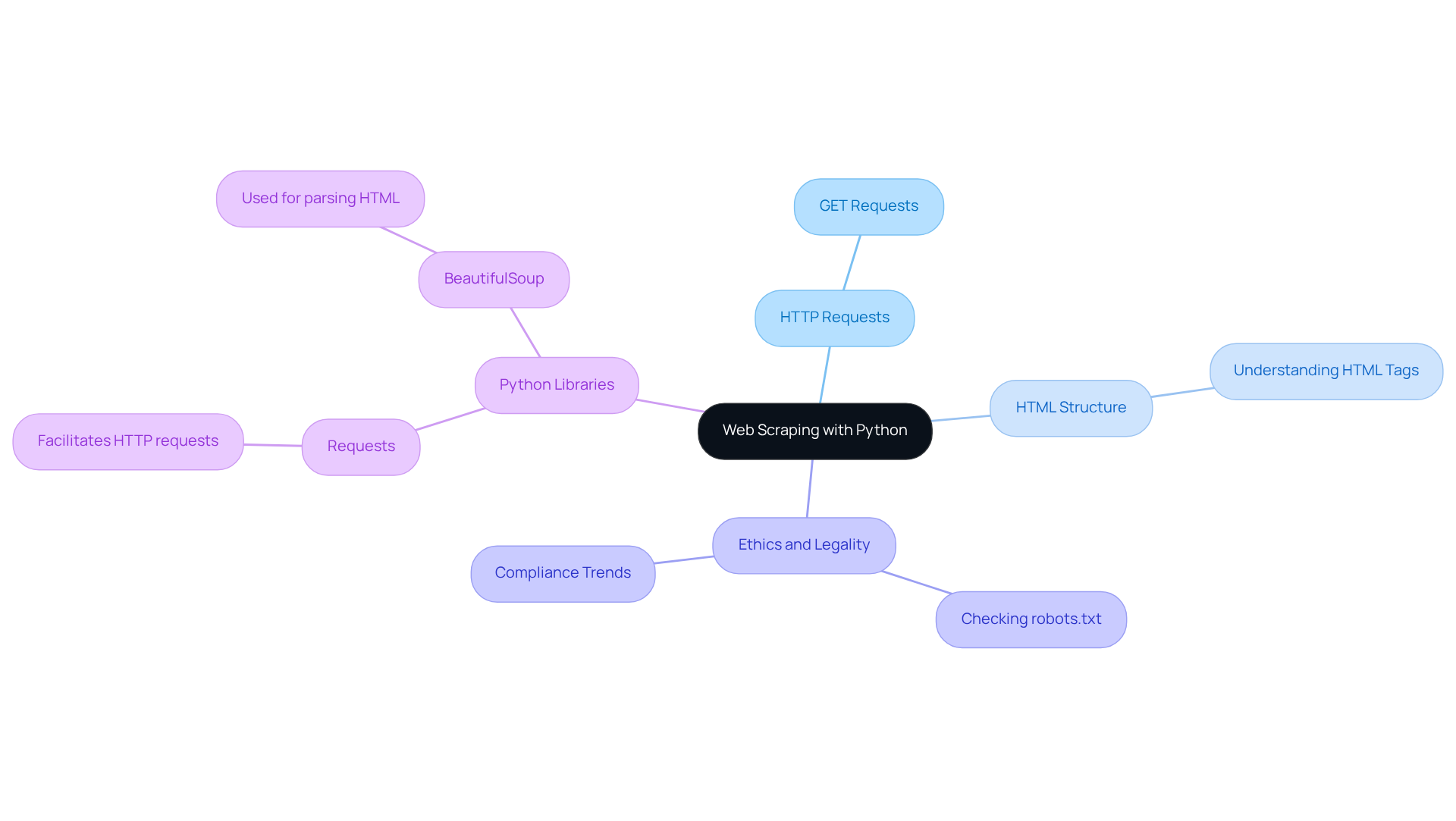

Understand the Basics of Web Scraping with Python

Web scraping is the automated extraction of information from websites. A Python Google Search scraper applies this to search results: it sends requests to Google's servers and parses the returned HTML to retrieve the information you need. Key concepts include:

- HTTP Requests: Mastering how to send GET requests is essential for effectively retrieving web pages. This skill enables developers to engage with web servers and obtain the information they require.

- HTML Structure: A solid understanding of HTML tags and of how information is arranged on a page is crucial. A scraper locates data by tag and attribute, so how well you read the page's structure directly affects the accuracy of what a Python Google Search scraper retrieves.

- Ethics and Legality: It is imperative to check a website's robots.txt file to understand what is permissible to scrape. Adhering to ethical standards and legal obligations is increasingly significant, particularly as 65% of businesses are expected to utilize external web information for market analysis by 2026. This trend underscores the necessity for compliance with evolving regulations regarding information collection.
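As a minimal sketch of the robots.txt check described above, Python's standard-library `urllib.robotparser` can evaluate a set of rules against a URL. The rules below are an illustrative sample written for this example, not a copy of any site's live file, and `ExampleBot` and `is_allowed` are hypothetical names.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules only (an assumption for this sketch); a real check would
# load the site's own file with set_url(...) followed by read().
SAMPLE_RULES = """\
User-agent: *
Disallow: /search
""".splitlines()


def is_allowed(url, user_agent="ExampleBot", rules=SAMPLE_RULES):
    """Return True if the given rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(rules)
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    print(is_allowed("https://example.com/search?q=python"))
    print(is_allowed("https://example.com/about"))
```

Parsing the rules from a list of lines keeps the example offline; in practice the same `can_fetch` call would run against the file downloaded from the target site.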

Two popular Python libraries are essential tools for a Python Google Search scraper: Requests, which handles the HTTP requests, and BeautifulSoup, which parses the returned HTML. Together they streamline the scraping process.
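To make the role of HTML structure concrete, here is a small sketch that pulls a title and a link out of a hand-written HTML fragment. It deliberately uses only the standard library's `html.parser` so that it runs without installing anything; BeautifulSoup expresses the same idea more compactly. The fragment, the `ResultParser` class, and the `extract_results` helper are made up for illustration and do not reflect Google's actual markup.

```python
from html.parser import HTMLParser

# Hand-written fragment for illustration; real search pages are far messier.
SAMPLE_HTML = """
<div class="result">
  <a href="https://example.com/guide"><h3>Scraping Guide</h3></a>
</div>
<div class="result">
  <a href="https://example.org/docs"><h3>Parser Docs</h3></a>
</div>
"""


class ResultParser(HTMLParser):
    """Collect the href of each <a> and the text of the <h3> inside it."""

    def __init__(self):
        super().__init__()
        self.results = []
        self._current_link = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._current_link = dict(attrs).get("href")
        elif tag == "h3" and self._current_link:
            self._in_title = True

    def handle_data(self, data):
        if self._in_title:
            self.results.append({"title": data.strip(), "link": self._current_link})

    def handle_endtag(self, tag):
        if tag == "h3":
            self._in_title = False
        elif tag == "a":
            self._current_link = None


def extract_results(html):
    """Return a list of {'title', 'link'} dictionaries found in html."""
    parser = ResultParser()
    parser.feed(html)
    parser.close()
    return parser.results


if __name__ == "__main__":
    for item in extract_results(SAMPLE_HTML):
        print(item)
```

The parser only records text while it is inside an `<h3>` nested in a link, which is the same "find the tag, then read its contents" pattern a BeautifulSoup-based scraper relies on.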

In addition to these fundamentals, Appstractor offers sophisticated information extraction solutions tailored for the real estate and employment sectors. With features such as real estate listing change alerts, compensation benchmarking, and a global self-healing IP pool for continuous uptime, Appstractor ensures users can access timely and relevant data. Their operations are fully GDPR-compliant, providing peace of mind while utilizing enterprise-grade data extraction technology. Support is measured by time-to-resolution, ensuring that engineers remain engaged until issues are resolved.

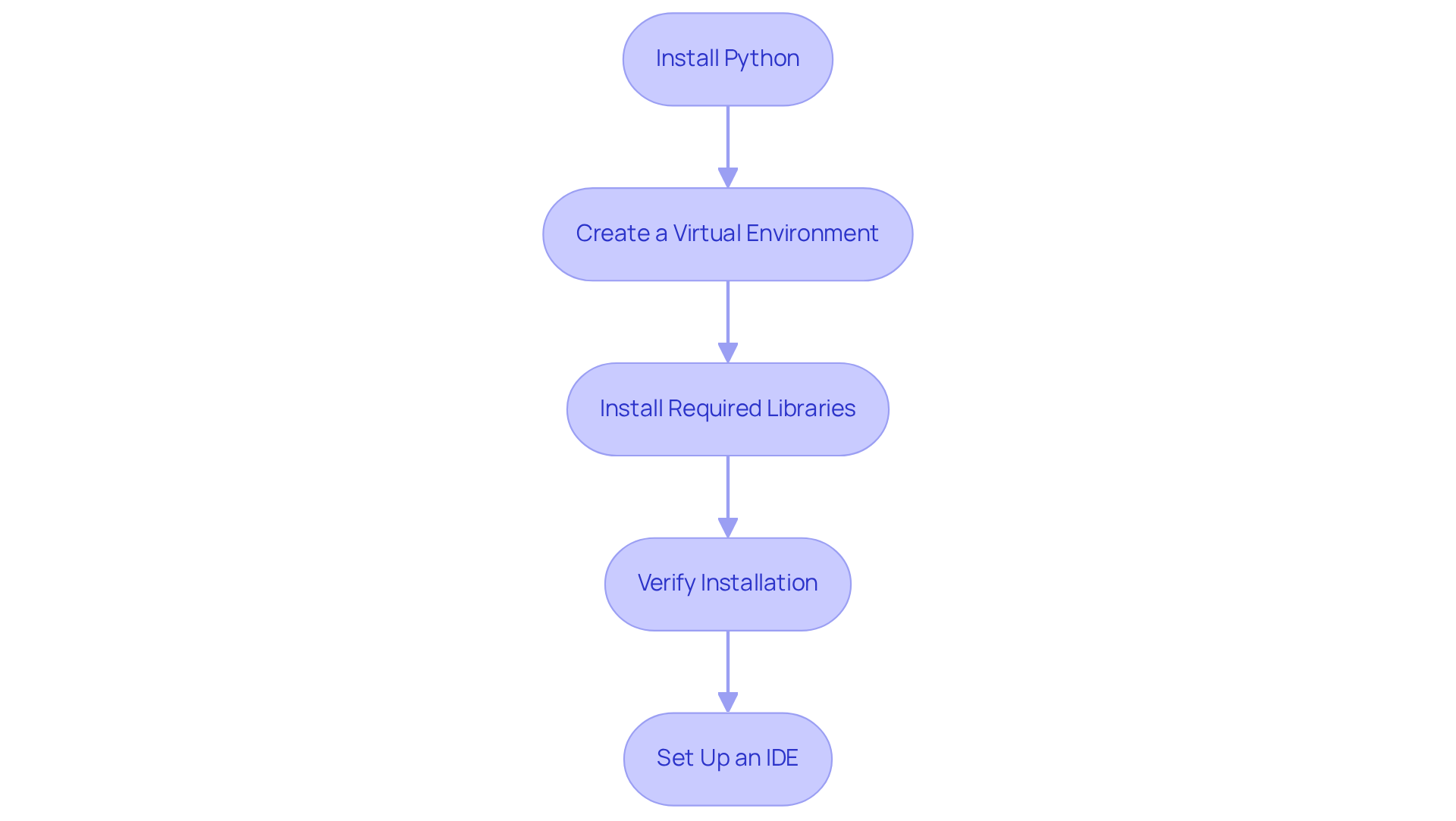

Set Up Your Python Environment for Scraping

To effectively set up your Python environment for web scraping, follow these essential steps:

- Install Python: Ensure Python is installed on your machine. Download it from python.org.

- Create a Virtual Environment: This step is crucial for managing project dependencies. Execute the following commands in your terminal:

      python -m venv myenv
      source myenv/bin/activate  # On Windows, use `myenv\Scripts\activate`

- Install Required Libraries: Utilize pip to install the necessary libraries for web scraping:

      pip install requests beautifulsoup4 scrapy selenium requests-html

- Verify Installation: Confirm that the libraries are installed correctly by running:

      import requests
      import bs4
      print('Libraries installed successfully!')

- Set Up an IDE: Choose an Integrated Development Environment (IDE) like PyCharm or VSCode to streamline your coding process.

As noted by Python developers, "Using virtual environments is a best practice for managing dependencies and ensuring project isolation," which is vital for maintaining clean and efficient code. In 2026, the most popular libraries for web data extraction include Requests, Beautiful Soup, Scrapy, Selenium, and Requests-HTML, each offering unique capabilities for handling various tasks, including rendering JavaScript with Requests-HTML. Furthermore, always verify a website's robots.txt file and Terms of Service prior to data extraction to ensure adherence to ethical practices. By following these steps, you'll be well-equipped to embark on your web scraping journey.
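The verification step can also be done without importing each library in full. The sketch below, offered as one possible approach, uses the standard library's `importlib.util.find_spec` to report which of the packages from the install command are missing; `missing_modules` is a hypothetical helper name.

```python
from importlib.util import find_spec

# Import names for the libraries installed above; note that the
# beautifulsoup4 package is imported as bs4 and requests-html as requests_html.
REQUIRED_MODULES = ["requests", "bs4", "scrapy", "selenium", "requests_html"]


def missing_modules(names=REQUIRED_MODULES):
    """Return the module names that cannot be found in the current environment."""
    return [name for name in names if find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules()
    if missing:
        print("Missing:", ", ".join(missing))
    else:
        print("Libraries installed successfully!")
```

Run it inside the activated virtual environment so that it checks the environment you intend to scrape from.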

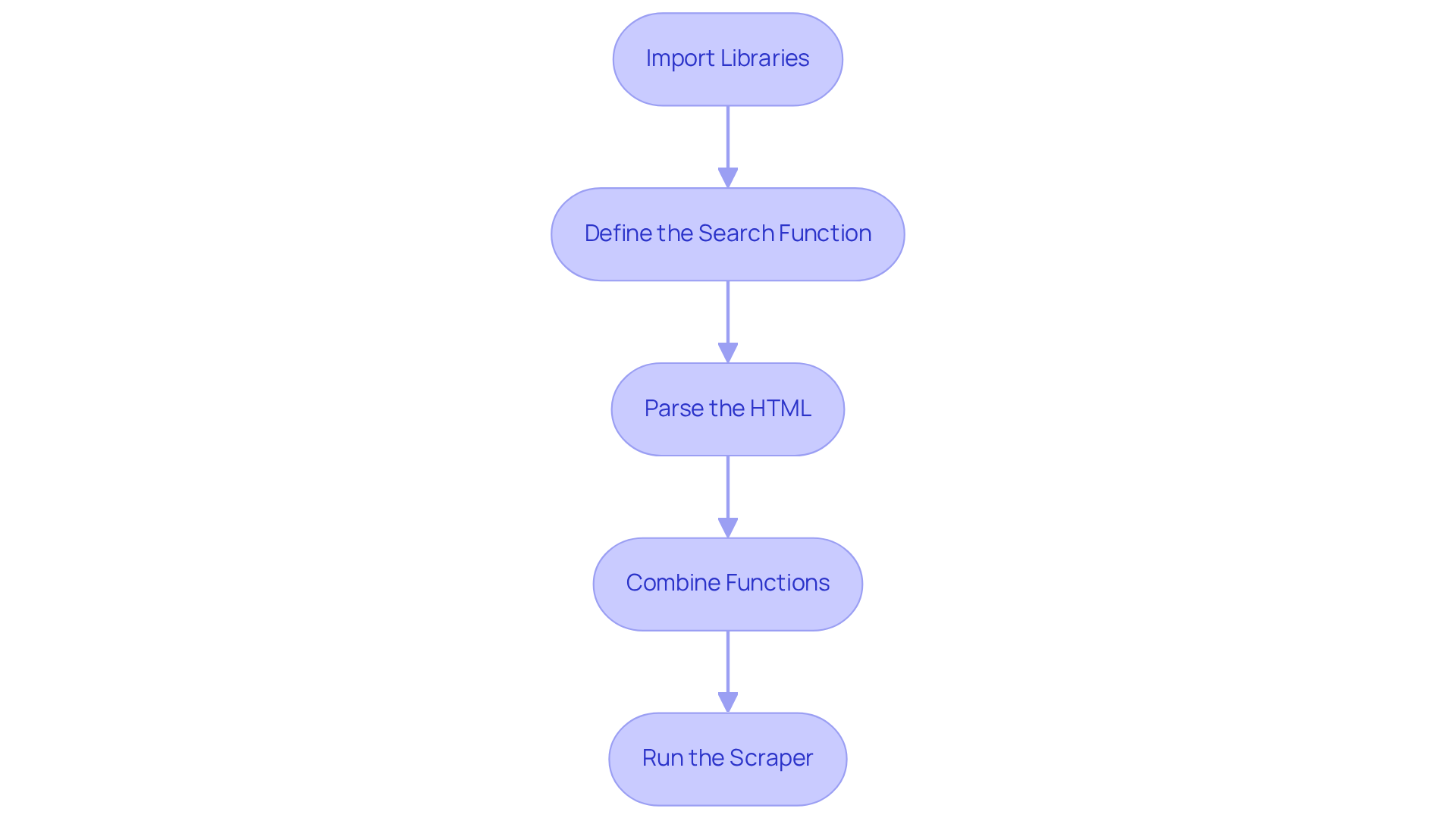

Write the Code for Your Google Search Scraper

To create an effective Google Search scraper, follow these coding steps:

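- Import Libraries:

      import requests
      from bs4 import BeautifulSoup

- Define the Search Function:

      def google_search(query):
          url = 'https://www.google.com/search'
          headers = {'User-Agent': 'Mozilla/5.0'}
          # Passing the query via params lets requests URL-encode spaces and symbols
          response = requests.get(url, params={'q': query}, headers=headers, timeout=10)
          response.raise_for_status()  # fail early on 4xx/5xx responses
          return response.text

- Parse the HTML:

      def parse_results(html):
          soup = BeautifulSoup(html, 'html.parser')
          results = []
          # 'BVG0Nb' is the class name used in this guide; Google changes its markup
          # often, so confirm the selector against the HTML you actually receive
          for g in soup.find_all('div', class_='BVG0Nb'):
              title = g.find('h3')
              link = g.find('a', href=True)
              if title is None or link is None:
                  continue  # skip blocks that lack a title or a link
              results.append({'title': title.get_text(strip=True), 'link': link['href']})
          return results

- Combine Functions:

      def main(query):
          html = google_search(query)
          results = parse_results(html)
          for result in results:
              print(result)

- Run the Scraper:

      if __name__ == '__main__':
          main('your search term')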
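Search terms often contain spaces or characters such as `&` that have a special meaning in a URL, so they need to be percent-encoded before being sent. The sketch below shows one way to build the search URL with the standard library's `urllib.parse.urlencode`; `build_search_url` is a hypothetical helper name, and only the `q` parameter from this guide's own URL is used.

```python
from urllib.parse import urlencode

BASE_URL = "https://www.google.com/search"


def build_search_url(query):
    """Return the search URL with the query safely encoded."""
    query_string = urlencode({"q": query})
    return f"{BASE_URL}?{query_string}"


if __name__ == "__main__":
    print(build_search_url("python web scraping & parsing"))
    # https://www.google.com/search?q=python+web+scraping+%26+parsing
```

Without the encoding step, the `&` in this query would be read as the start of a second URL parameter and the search term would be cut short.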

This structured approach simplifies the scraping process and underscores the importance of a clear search function. As John Warnock noted, "It’s very important that a programmer be able to look at a piece of code like a bad chapter of a book and scrap it without looking back." This highlights the necessity for clarity and simplicity in programming, ensuring that the code remains maintainable and efficient. Furthermore, utilising Appstractor's sophisticated information extraction solutions can enhance your web harvesting efforts. Options like Rotating Proxy Servers and Full Service allow for seamless automation of information extraction, ensuring you gather valuable insights for your SEO strategies. By adhering to these steps, you can leverage the power of web scraping to enhance your SEO efforts and collect crucial information.

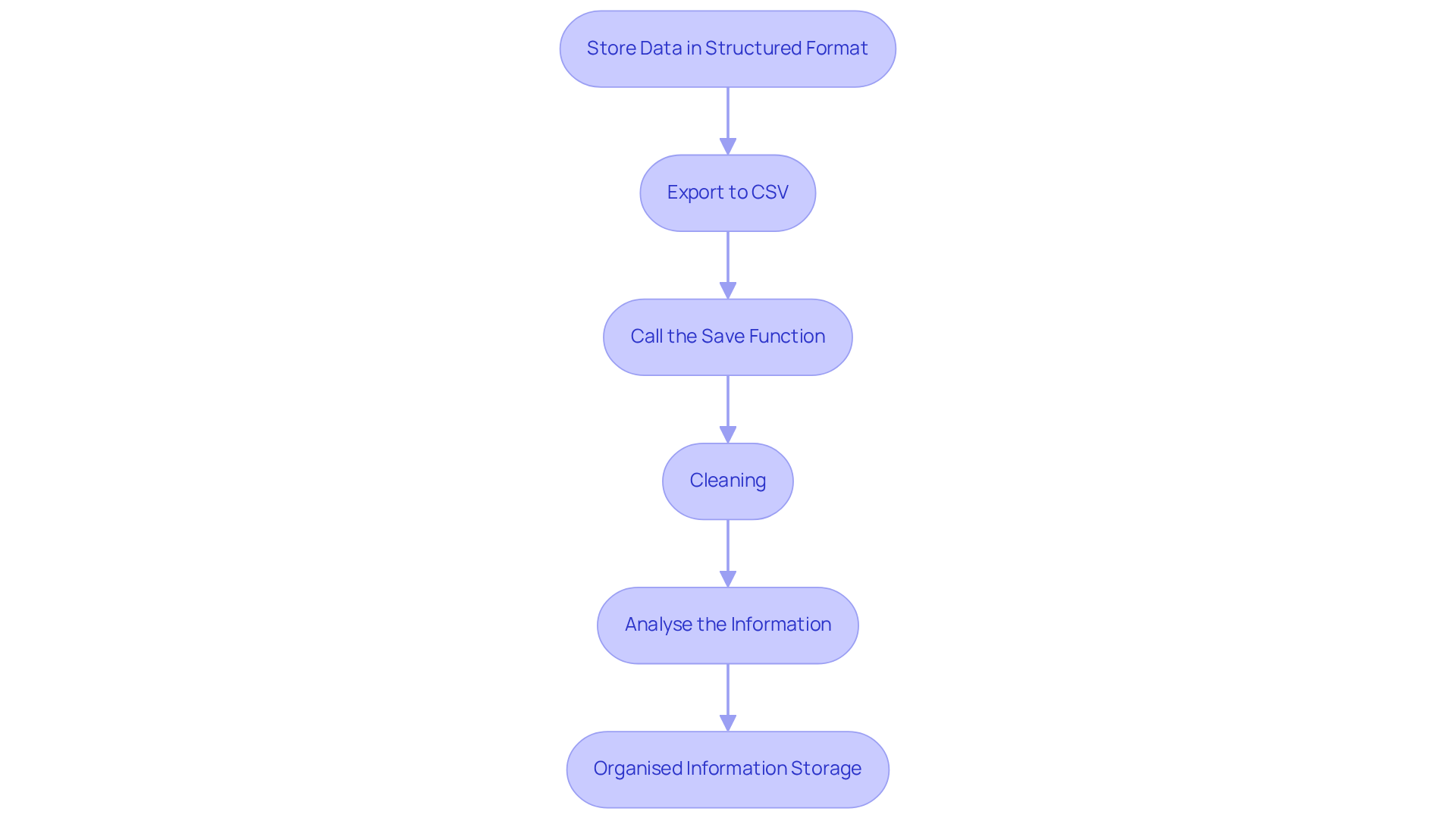

Extract and Manage Data from Google Search Results

Effectively managing your scraped data is crucial for maximising its value, particularly when leveraging Appstractor's efficient web data extraction solutions. Here’s a streamlined approach to extract and manage your data:

- Store Data in a Structured Format: Organise your findings using a list of dictionaries, which allows for easy manipulation and access.

- Export to CSV: To preserve your results for future analysis, export them to a CSV file using the following code:

      import csv

      def save_to_csv(results):
          if not results:
              return  # nothing to write; also avoids an IndexError on results[0]
          keys = results[0].keys()
          with open('results.csv', 'w', newline='', encoding='utf-8') as output_file:
              dict_writer = csv.DictWriter(output_file, fieldnames=keys)
              dict_writer.writeheader()
              dict_writer.writerows(results)

- Call the Save Function: After scraping, invoke the save_to_csv function to save your data, for example at the end of main:

      save_to_csv(results)

- Cleaning: Prioritise integrity by ensuring your dataset is free of errors, eliminating duplicates and irrelevant entries. Utilise libraries like pandas for advanced information manipulation and cleaning, which can be seamlessly integrated with Appstractor's management solutions to enhance the cleaning process.

- Analyse the Information: Leverage the insights from your collected information for various analyses, such as market research or competitor assessments.

In 2026, roughly 72% of businesses are exporting their collected information to CSV, highlighting the significance of structured information storage for effective decision-making. As Pearl Zhu mentions, "We are progressing gradually into a period where large information sets are the starting point, not the conclusion."
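The cleaning step can be sketched without any extra dependencies. The example below drops entries with a missing title or link and removes duplicate links while keeping the first occurrence; `clean_results` is a hypothetical helper, and the sample rows are invented for illustration. With pandas, `DataFrame.drop_duplicates` covers the deduplication part.

```python
def clean_results(results):
    """Drop incomplete rows and duplicate links, keeping first occurrences."""
    seen_links = set()
    cleaned = []
    for row in results:
        title = (row.get("title") or "").strip()
        link = (row.get("link") or "").strip()
        if not title or not link:
            continue  # irrelevant or incomplete entry
        if link in seen_links:
            continue  # duplicate of a row we already kept
        seen_links.add(link)
        cleaned.append({"title": title, "link": link})
    return cleaned


if __name__ == "__main__":
    sample = [
        {"title": "Guide", "link": "https://example.com/a"},
        {"title": "Guide (copy)", "link": "https://example.com/a"},
        {"title": "", "link": "https://example.com/b"},
        {"title": " Docs ", "link": "https://example.org/c"},
    ]
    print(clean_results(sample))
```

Cleaning before the CSV export keeps the saved file free of rows that would otherwise have to be filtered out in every later analysis.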

Organised information storage is essential for converting raw information into actionable insights, allowing businesses to make informed choices and remain competitive. With Appstractor's rotating proxies and full-service options, you can improve your web data collection capabilities and ensure continuous monitoring of your information.
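As one small example of turning stored results into an insight, the sketch below counts how often each domain appears among the scraped links, which can serve as a simple starting point for a competitor assessment. It assumes the list-of-dictionaries shape with a `link` key used in this guide; `count_domains` and the sample data are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def count_domains(results):
    """Count result links per domain, most frequent first."""
    domains = (urlparse(row["link"]).netloc.lower() for row in results)
    # Relative links have no domain part and are skipped
    return Counter(domain for domain in domains if domain).most_common()


if __name__ == "__main__":
    sample = [
        {"title": "A", "link": "https://example.com/a"},
        {"title": "B", "link": "https://example.com/b"},
        {"title": "C", "link": "https://example.org/c"},
    ]
    for domain, hits in count_domains(sample):
        print(domain, hits)
```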

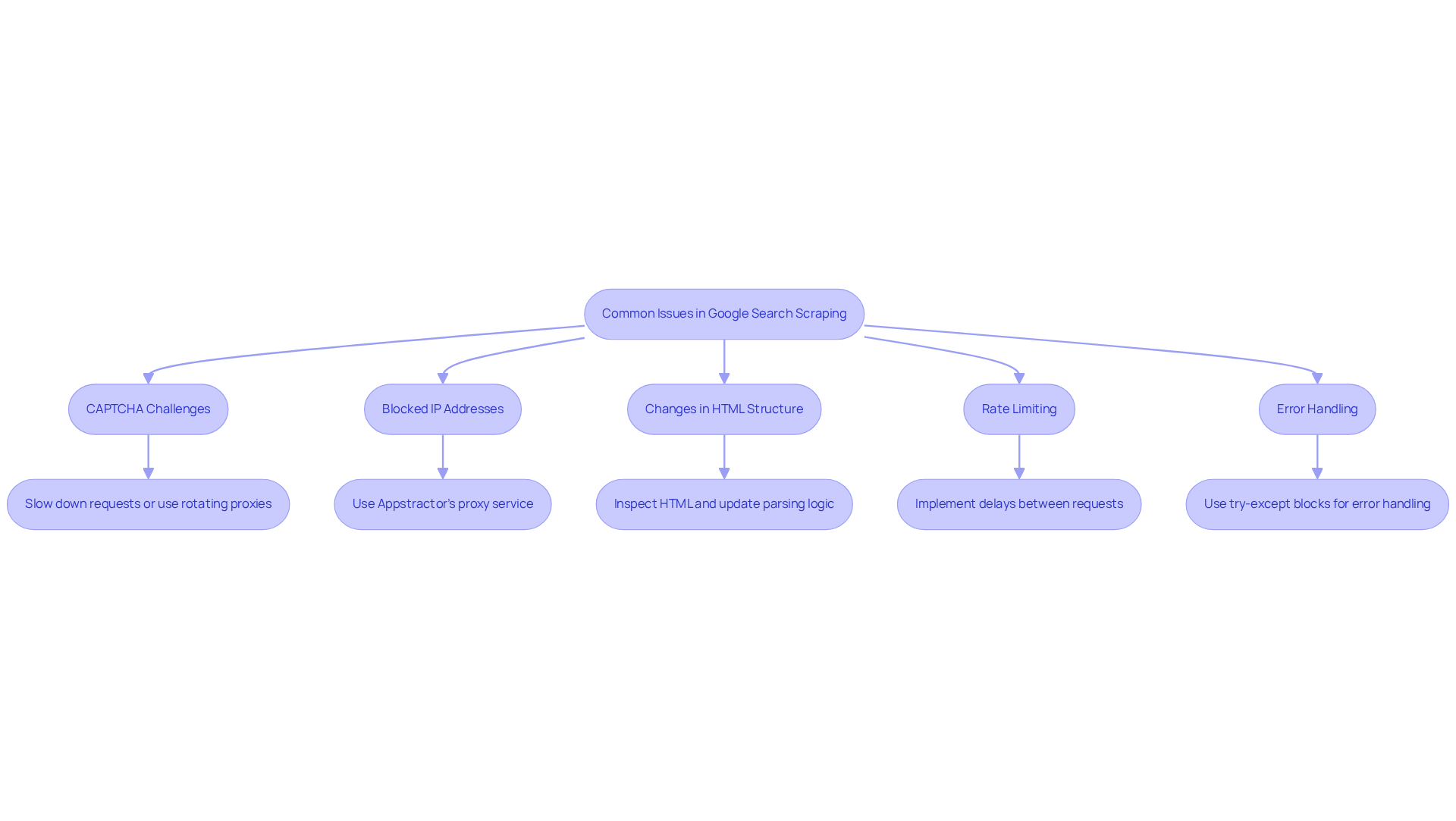

Troubleshoot Common Issues in Google Search Scraping

When extracting Google Search results, several common issues may arise. Here’s how to troubleshoot them:

- CAPTCHA Challenges: Receiving a CAPTCHA indicates that Google has detected unusual activity. To mitigate this, slow down your requests or utilise rotating proxies from Appstractor. This approach helps distribute your requests across multiple IPs, reducing the likelihood of detection.

- Blocked IP Addresses: If your IP gets blocked, consider using Appstractor's proxy service to change your IP address. Their Rotating Proxy Servers can be activated within 24 hours, providing you with self-serve IPs that enhance your scraping efficiency.

- Changes in HTML Structure: Google frequently updates its HTML structure. If your scraper stops functioning, inspect the HTML to identify changes and update your parsing logic accordingly.

- Rate Limiting: Google may limit the number of requests from a single IP. To avoid being flagged, implement delays between requests using time.sleep(). Additionally, utilising Appstractor's Full Service or Hybrid options can help manage request rates effectively.

- Error Handling: Implement error handling in your code to manage exceptions gracefully. Use try-except blocks to catch and log errors, allowing your scraper to continue running. With Appstractor's advanced data mining solutions, you can automate data extraction while ensuring secure and efficient data handling.
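The rate-limiting and error-handling advice above can be combined in one small wrapper. The following is a sketch under stated assumptions rather than a definitive implementation: `fetch_with_retries` and `scrape_all` are hypothetical helpers, the delay values are arbitrary starting points, and `fetch` stands for any function that returns HTML or raises an exception, such as the `google_search` function from this guide.

```python
import logging
import random
import time

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("scraper")


def fetch_with_retries(fetch, query, max_attempts=3, base_delay=2.0, sleep=time.sleep):
    """Call fetch(query), retrying with growing, jittered delays on failure.

    Returns the fetched HTML, or None if every attempt failed.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(query)
        except Exception as exc:  # broad on purpose: log it and keep the run alive
            logger.warning("Attempt %d/%d for %r failed: %s",
                           attempt, max_attempts, query, exc)
            if attempt < max_attempts:
                # Exponential backoff plus random jitter to avoid a fixed rhythm
                delay = base_delay * 2 ** (attempt - 1) + random.uniform(0, 1)
                sleep(delay)
    return None


def scrape_all(fetch, queries, pause=5.0, sleep=time.sleep):
    """Fetch each query in turn, pausing between consecutive requests."""
    pages = {}
    for index, query in enumerate(queries):
        if index > 0:
            sleep(pause)  # fixed pause between queries (assumed value)
        pages[query] = fetch_with_retries(fetch, query, sleep=sleep)
    return pages
```

A call such as `scrape_all(google_search, ['first term', 'second term'])` would then space out the requests and record `None` for any query that kept failing, instead of stopping the whole run. The `sleep` parameter exists only so the waiting can be replaced in tests.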

Conclusion

In conclusion, mastering the art of web scraping with Python, especially for Google Search, unlocks a wealth of opportunities for data extraction and analysis. By adhering to the structured approach outlined in this guide, individuals can effectively leverage Python to extract valuable insights from search results. The integration of ethical practices, sound coding techniques, and efficient data management ensures that this powerful tool is utilised responsibly and effectively.

Key steps include:

- Understanding the fundamentals of web scraping

- Setting up a robust Python environment

- Writing a functional scraper

- Managing and analysing the scraped data

- Troubleshooting common issues

Each of these components is vital in developing a successful Google Search scraper, empowering users to navigate the complexities of web data extraction with confidence.

As the demand for data-driven decision-making continues to grow, adopting web scraping techniques becomes increasingly crucial. Whether for market research, SEO strategies, or competitive analysis, the insights derived from a well-executed scraping project can significantly bolster strategic initiatives. By embracing best practices and utilising advanced tools like Appstractor, aspiring developers can enhance their web scraping skills and maintain a competitive edge in an ever-evolving digital landscape.

Frequently Asked Questions

What is web scraping and how is it related to Python?

Web scraping is the automated process of extracting information from websites. In Python, a scraper such as a Google Search scraper sends requests to web servers and parses the returned HTML to retrieve valuable information.

What are HTTP requests and why are they important for web scraping?

HTTP requests are messages sent by a client to a server to request data. Mastering how to send GET requests is essential for effectively retrieving web pages, allowing developers to engage with web servers and obtain the information they require.
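To illustrate what a GET request consists of, the sketch below builds one with the standard library's `urllib.request.Request` and inspects it without sending anything. The `build_get_request` helper and the example URL are assumptions made for this illustration.

```python
from urllib.request import Request


def build_get_request(url, user_agent="Mozilla/5.0"):
    """Create (but do not send) a GET request carrying a User-Agent header."""
    return Request(url, headers={"User-Agent": user_agent})


if __name__ == "__main__":
    request = build_get_request("https://example.com/")
    print(request.get_method())    # the HTTP method
    print(request.full_url)        # the target URL
    print(request.header_items())  # the headers that would be sent
```

A request with no body defaults to the GET method; passing the object to `urllib.request.urlopen` would actually send it, which is what `requests.get` does in a single call.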

Why is understanding HTML structure important for web scraping?

A solid understanding of HTML tags and the arrangement of information on web pages is crucial for successful web extraction projects. It directly impacts the accuracy of data retrieval when using a Python Google Search scraper.

What ethical and legal considerations should be taken into account when web scraping?

It is imperative to check a website's robots.txt file to understand what is permissible to scrape. Adhering to ethical standards and legal obligations is essential, especially as many businesses increasingly rely on external web information for market analysis.

What are some popular Python libraries for web scraping?

Popular Python libraries for web scraping include Requests for making HTTP requests, BeautifulSoup for parsing HTML, Scrapy for comprehensive scraping tasks, Selenium for automating web browsers, and Requests-HTML for rendering JavaScript.

How do you set up a Python environment for web scraping?

To set up a Python environment for web scraping, you should:

1. Install Python from python.org.

2. Create a virtual environment using the command `python -m venv myenv` and activate it.

3. Install required libraries using pip, such as `pip install requests beautifulsoup4 scrapy selenium requests-html`.

4. Verify the installation by running import commands for the libraries.

5. Set up an Integrated Development Environment (IDE) like PyCharm or VSCode.

What is Appstractor and what features does it offer for data extraction?

Appstractor provides sophisticated information extraction solutions tailored for the real estate and employment sectors. Features include real estate listing change alerts, compensation benchmarking, and a global self-healing IP pool for continuous uptime, all while ensuring GDPR compliance.

Why is it important to verify a website's robots.txt file and Terms of Service before scraping?

Verifying a website's robots.txt file and Terms of Service is crucial for ensuring adherence to ethical practices and legal obligations regarding data extraction, helping to avoid potential legal issues.

List of Sources

- Understand the Basics of Web Scraping with Python

  - Exploring Business Benefits of Web Scraping: Key Stats & Trends (https://browsercat.com/post/business-benefits-web-scraping-statistics-trends)

  - Web Scraping Services Market Outlook 2026-2034 (https://intelmarketresearch.com/web-scraping-services-market-35740)

  - Web Scraping Market Size, Growth Report, Share & Trends 2026 - 2031 (https://mordorintelligence.com/industry-reports/web-scraping-market)

  - Web data for scraping developers in 2026: AI fuels the agentic future (https://zyte.com/blog/web-data-for-scraping-developers)

  - State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- Set Up Your Python Environment for Scraping

  - Python Web Scraping Tutorial: Step-By-Step (2025) (https://oxylabs.io/blog/python-web-scraping)

  - A Comprehensive Guide to Web Scraping with Python (https://medium.com/@me.mdhamim/a-comprehensive-guide-to-web-scraping-with-python-03cd6d7737fd)

  - Top Python Web Scraping Libraries 2026 (https://capsolver.com/blog/web-scraping/best-python-web-scraping-libraries)

  - Web Scraping for Beginners: A Step-by-Step Guide (https://firecrawl.dev/blog/web-scraping-intro-for-beginners)

  - How to Create a Python Virtual Environment (Step-by-Step Guide) - GeeksforGeeks (https://geeksforgeeks.org/python/create-virtual-environment-using-venv-python)

- Write the Code for Your Google Search Scraper

  - Inspiring Quotes for Software Developers - Kartaca (https://kartaca.com/en/inspiring-quotes-for-software-developers)

  - Scraping Google news using Python (2026 Tutorial) (https://serpapi.com/blog/scraping-google-news-using-python-tutorial)

  - How to scrape Google News with Python in 2023 (Full Code included) (https://lobstr.io/blog/how-to-scrape-google-news-with-python)

  - Inspiring Software Development Quotes To Fuel Your Coding Journey | Rare Crew (https://rarecrew.com/blog/post/inspiring-software-development-quotes-to-fuel-your-coding-journey)

- Extract and Manage Data from Google Search Results

  - 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

  - Data Management Quotes To Live By | InfoCentric (https://infocentric.com.au/2022/04/28/data-management-quotes)

  - 23 Must-Read Quotes About Data [& What They Really Mean] (https://careerfoundry.com/en/blog/data-analytics/inspirational-data-quotes)

  - Web Scraping Statistics & Trends You Need to Know in 2025 (https://kanhasoft.com/blog/web-scraping-statistics-trends-you-need-to-know-in-2025)