Introduction

Mastering PHP web scraping is an essential skill in today’s data-driven landscape. The ability to extract valuable insights from websites can significantly influence business strategies. This article explores the fundamental techniques, tools, and ethical considerations that shape effective PHP scraping practices.

As developers navigate this complex terrain, they often face challenges such as:

- CAPTCHA

- IP blocking

- Legal compliance

This raises an important question: how can one leverage the power of PHP scraping while ensuring ethical integrity and overcoming these obstacles?

Understand PHP Web Scraping Fundamentals

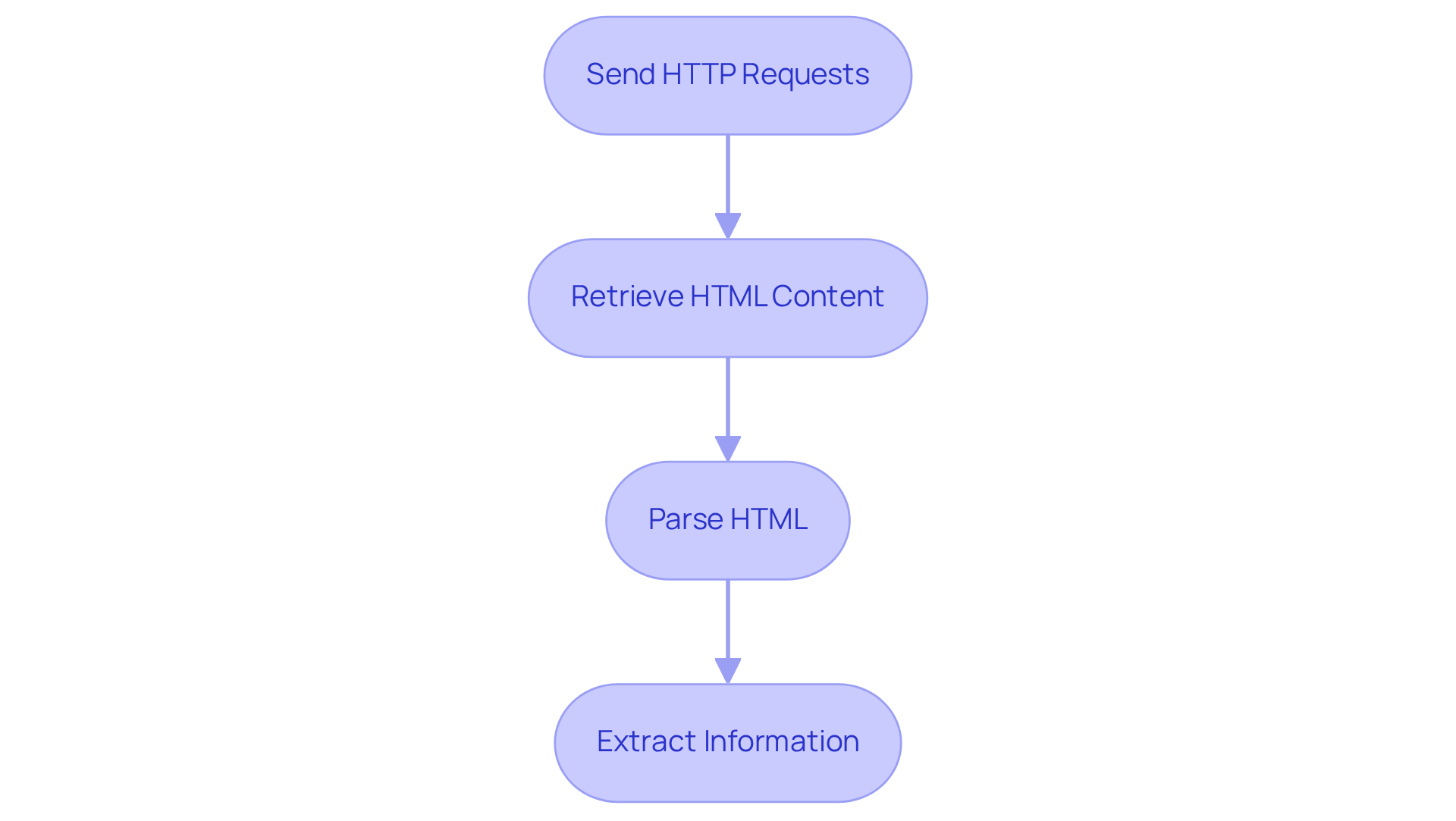

PHP web scraping uses PHP scripts to extract valuable data from websites, particularly insights relevant to real estate and job markets. The process typically involves sending an HTTP request to a target URL, retrieving the HTML response, and parsing it to extract the desired information. Key concepts include:

- HTTP Requests: Sending GET and POST requests is the first step. PHP functions like file_get_contents() and libraries such as cURL are commonly employed for this purpose, enabling developers to interact effectively with web servers. As noted by Kanhasoft, "A good scraping strategy isn’t just about collecting data - it’s about doing it in a way that’s sustainable, adaptable, and bulletproof against the inevitable curveballs the internet throws at you."

- HTML Parsing: Libraries like Simple HTML DOM or DOMDocument allow developers to navigate and manipulate the HTML structure efficiently. These tools simplify the extraction of information from complex web pages, which is essential for applications like Appstractor's real estate listing change alerts.

- Information Extraction: Identifying specific elements, such as text, images, or links, is essential. Developers can target these elements with methods such as tag lookups or XPath queries, ensuring that the scraper captures the necessary content accurately. Appstractor's solutions also include compensation benchmarking, providing valuable insights for digital marketing specialists.
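The three steps above (request, parse, extract) can be sketched with PHP's bundled cURL and DOM extensions. This is a minimal illustration, not a production scraper: the function names fetchHtml() and extractLinks(), the user agent string, the "listings" class, and the sample markup are all assumptions made for the example. The extraction step runs against an inline HTML string so the sketch works without network access.

```php
<?php
/**
 * Fetch a page with cURL. Returns the body, or null on failure.
 * The user agent string is a placeholder, not a required value.
 */
function fetchHtml(string $url, int $timeoutSeconds = 10): ?string
{
    $ch = curl_init($url);
    curl_setopt_array($ch, [
        CURLOPT_RETURNTRANSFER => true,  // return the body instead of printing it
        CURLOPT_FOLLOWLOCATION => true,  // follow redirects
        CURLOPT_TIMEOUT        => $timeoutSeconds,
        CURLOPT_USERAGENT      => 'ExampleScraper/1.0 (+https://example.com/contact)',
    ]);
    $body = curl_exec($ch);
    $status = curl_getinfo($ch, CURLINFO_HTTP_CODE);

    return ($body !== false && $status === 200) ? $body : null;
}

/**
 * Collect the text and href of every link inside elements carrying $containerClass.
 *
 * @return array<int, array{text: string, href: string}>
 */
function extractLinks(string $html, string $containerClass): array
{
    $dom = new DOMDocument();
    // Real-world HTML is rarely valid; buffer parser warnings instead of emitting them.
    $previous = libxml_use_internal_errors(true);
    $dom->loadHTML($html);
    libxml_clear_errors();
    libxml_use_internal_errors($previous);

    $xpath = new DOMXPath($dom);
    // Match the class as a whole word within the class attribute.
    $query = sprintf(
        '//*[contains(concat(" ", normalize-space(@class), " "), " %s ")]//a[@href]',
        $containerClass
    );

    $links = [];
    foreach ($xpath->query($query) as $anchor) {
        $links[] = [
            'text' => trim($anchor->textContent),
            'href' => $anchor->getAttribute('href'),
        ];
    }
    return $links;
}

// Inline sample so the parsing step can be tried offline.
$sample = <<<HTML
<html><body>
  <ul class="listings">
    <li><a href="/homes/1">Two-bed flat</a></li>
    <li><a href="/homes/2">Family house</a></li>
  </ul>
  <a href="/about">About</a>
</body></html>
HTML;

$links = extractLinks($sample, 'listings');
print_r($links);
```

Against a live site, the inline sample would be replaced by the result of fetchHtml(), with a null check before parsing.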

Additionally, Appstractor features a global self-healing IP pool, which supports continuous uptime and reliability in data extraction operations. The global web data extraction market is projected to exceed $9 billion in 2025, underscoring the growing importance of these skills in the digital economy.

By mastering these fundamentals, developers can create robust PHP scrapers that handle diverse web content and adapt to current trends in web data extraction. For instance, a mid-sized e-commerce retailer that implemented a custom data extraction system experienced a 300% increase in ROI on promotional campaigns, showcasing the effectiveness of these techniques.

It is also essential to recognise common pitfalls in PHP web extraction, chiefly failing to adhere to legal standards and ethical information collection practices. GDPR compliance, which Appstractor follows, deserves particular attention.

Select Effective PHP Libraries and Tools

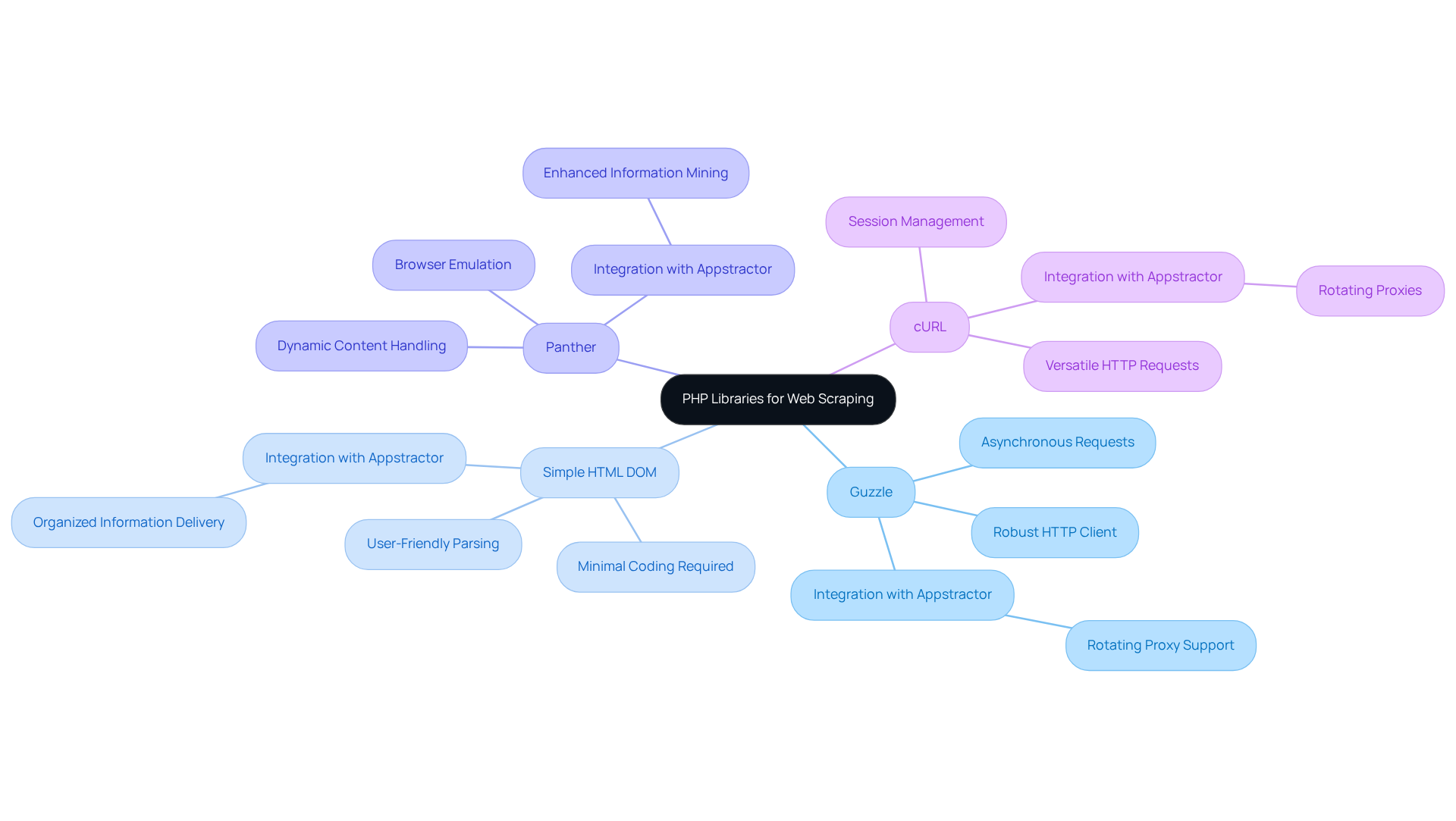

In the realm of PHP web scraping, several libraries stand out for their ability to streamline the process, particularly when paired with Appstractor's efficient web data extraction solutions:

- Guzzle: This robust HTTP client simplifies the task of sending requests and managing responses. Guzzle is one of the most mature and frequently downloaded HTTP clients for PHP. Its support for asynchronous requests makes it especially efficient for fetching multiple pages concurrently. When paired with Appstractor's rotating proxy servers, Guzzle can spread those requests across multiple IPs.

- Simple HTML DOM: Known for its user-friendly approach, this library facilitates easy parsing of HTML documents. It supports PHP 5.6 and later, and it lets programmers navigate the DOM and retrieve information with minimal code, making it a popular option for simple extraction tasks. Appstractor's full-service option can further streamline this process by providing organised information directly to users.

- Panther: Designed to drive a real browser, Panther is ideal for scraping dynamic content from websites that heavily utilise JavaScript. This capability gives developers access to fully rendered pages, ensuring thorough information extraction. Appstractor's advanced information mining solutions can complement Panther by offering extra scale and expertise.

- cURL: A versatile tool for making HTTP requests, cURL is essential for managing more complex data extraction tasks, including those that require authentication and session management. Its flexibility makes it a staple in many developers' toolkits. When combined with Appstractor's services, cURL can route requests through rotating proxies while still maintaining session state.

Choosing a suitable library depends on the specific requirements of the extraction project, including the intricacy of the target website and the type of information being gathered. Guzzle's efficiency in managing requests and its popularity in the web extraction community make it a strong default, but each library has drawbacks to consider. In particular, an HTTP client combined with a static parser does not execute JavaScript, so a PHP scraper built only on Guzzle or cURL will miss dynamically loaded content. Simple HTML DOM remains a go-to for simpler tasks due to its ease of use, especially when paired with Appstractor's automated solutions.
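To make the cURL point about authentication and session management concrete, the sketch below builds the option sets for a login request and a follow-up request that share one cookie file. It assumes the cURL extension is loaded; the helper names sessionCurlOptions() and runRequest(), the example.com URLs, the form field names, and the cookie file location are hypothetical. The actual network calls are left commented out because they need a real site.

```php
<?php
/**
 * Build cURL options for a request that shares cookies across calls.
 * Pass $postFields to send a form POST (for example, a login form).
 */
function sessionCurlOptions(string $url, string $cookieFile, ?array $postFields = null): array
{
    $options = [
        CURLOPT_URL            => $url,
        CURLOPT_RETURNTRANSFER => true,
        CURLOPT_FOLLOWLOCATION => true,
        CURLOPT_TIMEOUT        => 15,
        CURLOPT_COOKIEJAR      => $cookieFile, // cookies are written here when the handle is released
        CURLOPT_COOKIEFILE     => $cookieFile, // cookies are read from here on later requests
    ];
    if ($postFields !== null) {
        $options[CURLOPT_POST] = true;
        $options[CURLOPT_POSTFIELDS] = http_build_query($postFields);
    }
    return $options;
}

/** Execute one request with the given options; returns the body or null on failure. */
function runRequest(array $options): ?string
{
    $ch = curl_init();
    curl_setopt_array($ch, $options);
    $body = curl_exec($ch);
    unset($ch); // releasing the handle flushes cookies to the jar file
    return $body === false ? null : $body;
}

// Hypothetical cookie file location and credentials.
$cookieFile = sys_get_temp_dir() . '/scraper_cookies.txt';

$login = sessionCurlOptions('https://example.com/login', $cookieFile, [
    'username' => 'demo',
    'password' => 'secret',
]);
$page = sessionCurlOptions('https://example.com/account', $cookieFile);

// Against a real site the flow would be:
// runRequest($login);
// $html = runRequest($page);
```

Keeping option building separate from execution makes the session setup easy to inspect and reuse; Guzzle offers a comparable cookie mechanism for projects that already depend on it.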

Navigate Common Web Scraping Challenges

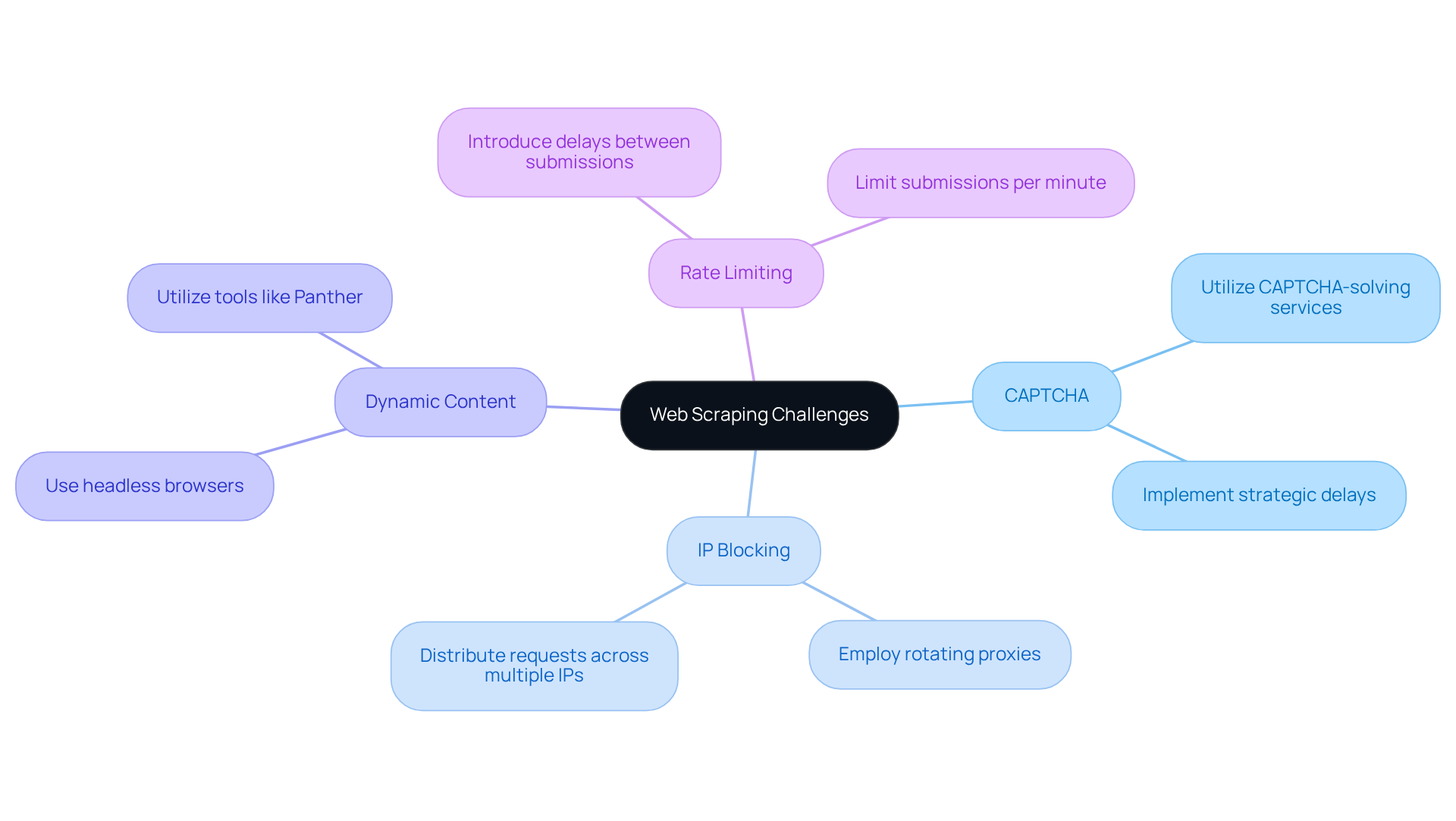

Web harvesting often presents various challenges that can obstruct information extraction efforts. Key issues include:

- CAPTCHA: Many websites deploy CAPTCHA systems to thwart automated access. To navigate this obstacle, developers can utilise CAPTCHA-solving services or introduce delays between requests, mimicking human behaviour to reduce detection rates. If CAPTCHAs are not addressed, a large share of requests can fail to return usable data.

- IP Blocking: Repeated requests from a single IP address can activate blocking mechanisms. To mitigate this risk, employing rotating proxies is essential. This approach distributes requests across multiple IP addresses, significantly lowering the likelihood of being blocked. Case studies show that companies employing rotating proxies have experienced higher success rates in their extraction activities, as they can sustain steady access to target information. Appstractor's Rotating Proxy Servers can be deployed within 24 hours, providing a quick solution to this challenge.

- Dynamic Content: Websites that utilise JavaScript for dynamic content loading pose additional challenges for scrapers. Tools such as Panther or headless browsers render the JavaScript first, enabling successful data extraction from these complex sites. The ability to handle dynamic content is crucial, as many modern websites rely on such technologies to deliver personalised user experiences.

- Rate Limiting: To avoid overwhelming servers and triggering anti-bot measures, data extraction scripts should limit their own request rate. This can be accomplished by introducing delays between requests or capping the number of requests per minute. By controlling request rates, developers can improve the reliability of their data collection efforts and ensure compliance with website policies.
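Two of the mitigations above, rotating proxies and rate limiting, can be sketched in a few lines of plain PHP. The ProxyRotator class, the delayBeforeNextRequest() function, the proxy addresses, and the interval values are assumptions for illustration only; a real deployment would take its proxy list from the proxy provider and tune the delays to the target site's policies.

```php
<?php
/** Round-robin selection over a fixed list of proxy URLs. */
final class ProxyRotator
{
    /** @var string[] */
    private array $proxies;
    private int $index = 0;

    /** @param string[] $proxies */
    public function __construct(array $proxies)
    {
        if ($proxies === []) {
            throw new InvalidArgumentException('At least one proxy is required.');
        }
        $this->proxies = array_values($proxies);
    }

    public function next(): string
    {
        $proxy = $this->proxies[$this->index];
        $this->index = ($this->index + 1) % count($this->proxies);
        return $proxy;
    }
}

/**
 * Microseconds to wait so that consecutive requests are at least
 * $minIntervalSeconds apart, plus up to $jitterSeconds of random jitter.
 */
function delayBeforeNextRequest(
    float $lastRequestAt,
    float $now,
    float $minIntervalSeconds,
    float $jitterSeconds = 0.0
): int {
    $remaining = max(0.0, $minIntervalSeconds - ($now - $lastRequestAt));
    $jitter = $jitterSeconds > 0 ? (mt_rand() / mt_getrandmax()) * $jitterSeconds : 0.0;
    return (int) round(($remaining + $jitter) * 1000000);
}

// Hypothetical proxies and target URLs.
$rotator = new ProxyRotator(['http://proxy-a.example:8080', 'http://proxy-b.example:8080']);
$urls = ['https://example.com/page/1', 'https://example.com/page/2', 'https://example.com/page/3'];

$lastRequestAt = 0.0;
$plan = [];
foreach ($urls as $url) {
    // Wait until at least 0.2 s have passed since the previous request, plus jitter.
    usleep(delayBeforeNextRequest($lastRequestAt, microtime(true), 0.2, 0.1));
    $lastRequestAt = microtime(true);

    // A real request would set CURLOPT_PROXY to this value on the cURL handle.
    $plan[] = ['url' => $url, 'proxy' => $rotator->next()];
}
print_r($plan);
```

The random jitter keeps the request timing from forming a perfectly regular pattern, which is one simple way to approximate the human-like pacing mentioned above.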

Additionally, leveraging flexible data formats and endpoints for integration, such as JSON, CSV, and direct database inserts, can further streamline the data extraction process. By proactively addressing these challenges, developers can significantly enhance the reliability and effectiveness of their web data collection initiatives, utilising Appstractor's robust proxy solutions.
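As a small illustration of the output formats mentioned above, the following sketch serialises scraped rows to JSON and CSV using only built-in PHP functions. The helper names rowsToJson() and rowsToCsv() and the sample rows are invented for the example, and it assumes every row has the same keys in the same order; database inserts are omitted because they depend on the schema in use.

```php
<?php
/** Encode rows as pretty-printed JSON; throws JsonException on failure. */
function rowsToJson(array $rows): string
{
    return json_encode(
        $rows,
        JSON_PRETTY_PRINT | JSON_UNESCAPED_SLASHES | JSON_THROW_ON_ERROR
    );
}

/** Encode rows as CSV text, using the first row's keys as the header line. */
function rowsToCsv(array $rows): string
{
    if ($rows === []) {
        return '';
    }
    // Write to an in-memory stream so fputcsv() handles quoting for us.
    $stream = fopen('php://temp', 'r+');
    fputcsv($stream, array_keys($rows[0]), ',', '"', '');
    foreach ($rows as $row) {
        fputcsv($stream, array_values($row), ',', '"', '');
    }
    rewind($stream);
    $csv = stream_get_contents($stream);
    fclose($stream);
    return $csv;
}

// Sample rows standing in for scraped listings.
$rows = [
    ['title' => 'Two-bed flat', 'price' => 250000, 'url' => 'https://example.com/homes/1'],
    ['title' => 'House, detached', 'price' => 410000, 'url' => 'https://example.com/homes/2'],
];

echo rowsToJson($rows), PHP_EOL;
echo rowsToCsv($rows);
```

Letting fputcsv() do the quoting matters for values such as "House, detached", where a naive implode(',') would split one field into two.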

Adhere to Ethical and Legal Standards in Scraping

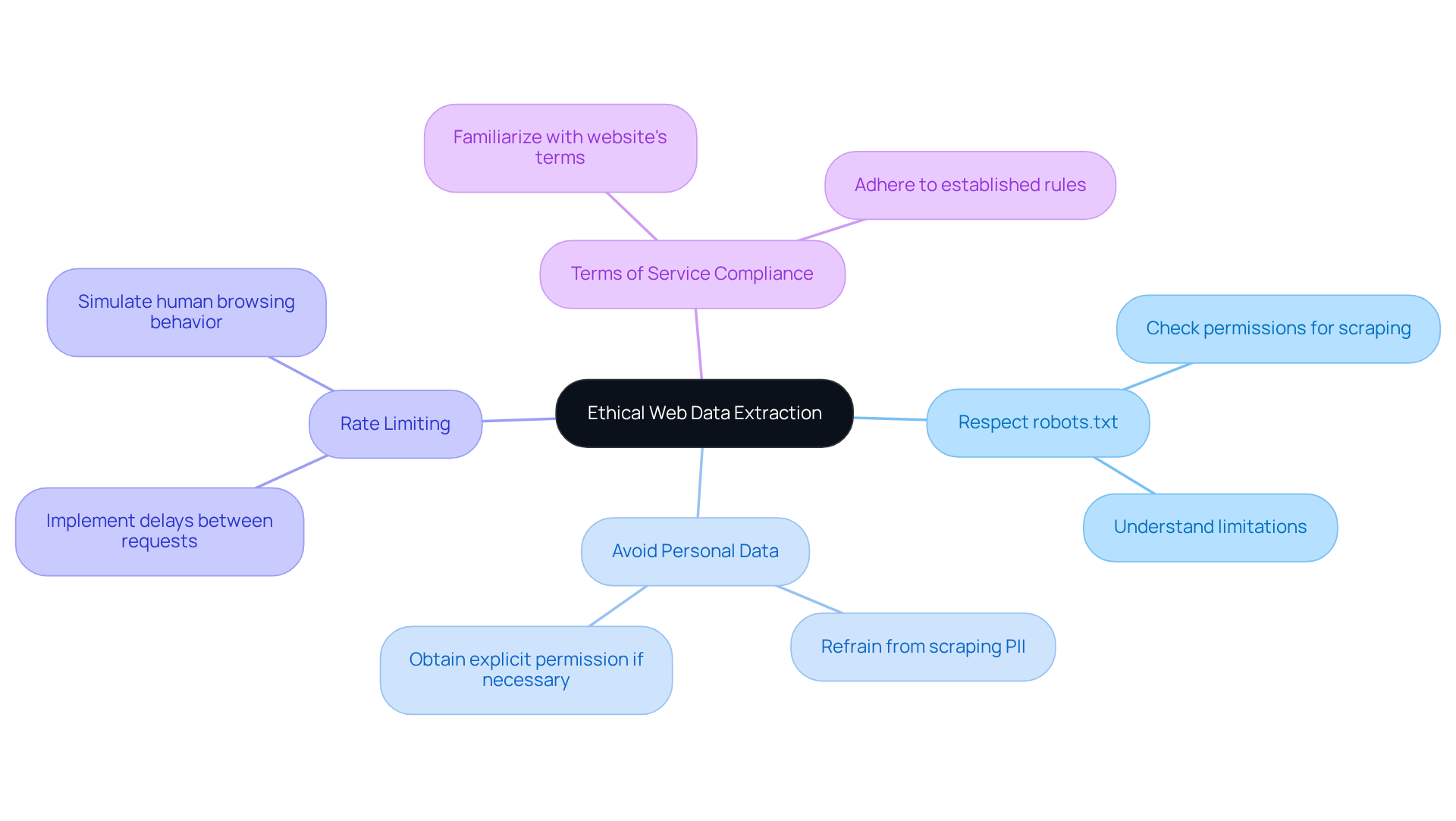

Ethical web data extraction is crucial for respecting the rights of both website owners and users. To ensure responsible practices, consider the following key guidelines:

- Respect robots.txt: Always check the robots.txt file of a website to determine which pages are permissible for scraping.

- Avoid Personal Data: Refrain from scraping personally identifiable information (PII) unless explicit permission has been granted.

- Rate Limiting: Implement rate limiting to prevent overwhelming the server and to simulate human browsing behaviour.

- Terms of Service Compliance: Familiarise yourself with the website's terms of service to ensure that your data collection activities adhere to established rules.

By following these ethical standards, developers can conduct their scraping practices in a responsible and legally compliant manner.
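The robots.txt check in particular is easy to automate. The sketch below is a deliberately simplified reading of the file, not a complete implementation: it only considers groups addressed to "User-agent: *", treats Allow and Disallow values as plain path prefixes (no * or $ wildcards), and lets the longest matching rule win. The function names and the sample file are assumptions for the example.

```php
<?php
/**
 * Parse robots.txt text into Allow/Disallow prefix rules for the "*" user agent.
 *
 * @return array<int, array{allow: bool, path: string}>
 */
function parseRobotsRules(string $robotsTxt): array
{
    $rules = [];
    $groupApplies = false;  // does the current group apply to "*"?
    $inAgentLines = false;  // are we inside a run of consecutive User-agent lines?

    foreach (preg_split('/\R/', $robotsTxt) as $line) {
        $line = trim(preg_replace('/#.*/', '', $line)); // strip comments
        if ($line === '' || strpos($line, ':') === false) {
            continue;
        }
        [$field, $value] = array_map('trim', explode(':', $line, 2));
        $field = strtolower($field);

        if ($field === 'user-agent') {
            if (!$inAgentLines) {
                $groupApplies = false; // a new group starts here
            }
            $inAgentLines = true;
            $groupApplies = $groupApplies || $value === '*';
        } elseif ($field === 'allow' || $field === 'disallow') {
            $inAgentLines = false;
            // An empty Disallow value means "nothing is disallowed", so it adds no rule.
            if ($groupApplies && $value !== '') {
                $rules[] = ['allow' => $field === 'allow', 'path' => $value];
            }
        }
    }
    return $rules;
}

/** Longest matching prefix wins; on a tie, Allow wins. No matching rule means allowed. */
function isPathAllowed(array $rules, string $path): bool
{
    $best = null;
    foreach ($rules as $rule) {
        $length = strlen($rule['path']);
        if (strncmp($path, $rule['path'], $length) !== 0) {
            continue; // this rule does not match the path
        }
        if ($best === null
            || $length > strlen($best['path'])
            || ($length === strlen($best['path']) && $rule['allow'])) {
            $best = $rule;
        }
    }
    return $best === null ? true : $best['allow'];
}

// Sample robots.txt content for illustration.
$robotsTxt = <<<TXT
User-agent: *
Disallow: /private/
Allow: /private/press/
Disallow: /search   # internal search

User-agent: BadBot
Disallow: /
TXT;

$rules = parseRobotsRules($robotsTxt);
foreach (['/listings/42', '/private/reports', '/private/press/2025', '/search?q=php'] as $path) {
    echo $path, ' => ', isPathAllowed($rules, $path) ? 'allowed' : 'disallowed', PHP_EOL;
}
```

A scraper would call isPathAllowed() before each request and skip disallowed paths. A passing check does not replace reviewing the site's terms of service or the rules on personal data.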

Conclusion

In conclusion, mastering PHP web scraping techniques equips developers with essential skills to extract valuable data efficiently and ethically. Understanding the fundamentals of PHP scraping, selecting the right libraries and tools, navigating common challenges, and adhering to ethical standards are crucial. By integrating these practices, developers can create robust scrapers that gather information effectively while respecting legal and ethical boundaries.

Key insights include the significance of mastering HTTP requests and HTML parsing, along with the advantages of using libraries such as Guzzle, Simple HTML DOM, and Panther. The article also addresses common obstacles like CAPTCHA, IP blocking, and dynamic content, providing practical solutions to enhance scraping success. Furthermore, it underscores the necessity of following ethical guidelines, including compliance with robots.txt and avoiding the collection of personally identifiable information.

In a rapidly evolving digital landscape, the ability to scrape data responsibly and effectively is more critical than ever. By embracing these best practices, developers can unlock new opportunities for data-driven decision-making and ensure their scraping activities contribute positively to the broader web ecosystem. The knowledge gained from this article serves as a foundation for navigating the complexities of PHP web scraping, empowering developers to harness the full potential of data extraction in their projects.

Frequently Asked Questions

What is PHP web scraping?

PHP web scraping involves using PHP scripts to extract valuable data from websites, particularly insights relevant to real estate and job markets.

What are the key steps in the PHP web scraping process?

The key steps include sending an HTTP request to a target URL, retrieving the HTML content, and parsing it to extract the desired information.

Why are HTTP requests important in web scraping?

Mastering HTTP requests, such as GET and POST, is crucial for interacting effectively with web servers. PHP functions like file_get_contents() and libraries such as cURL are commonly used for this purpose.

What tools can be used for HTML parsing in PHP?

Libraries like Simple HTML DOM and DOMDocument are utilised to navigate and manipulate the HTML structure, simplifying the extraction of information from complex web pages.

How do developers extract specific information from web pages?

Developers identify specific elements such as text, images, or links and use various methods to retrieve this information accurately.

What is the significance of a global self-healing IP pool in web scraping?

A global self-healing IP pool ensures continuous uptime and reliability in data extraction operations, which is crucial for effective web scraping.

What is the projected market size for global web data extraction by 2025?

The global web data extraction market is projected to exceed $9 billion by 2025, highlighting the growing importance of web scraping skills in the digital economy.

Can you provide an example of the effectiveness of PHP web scraping?

A mid-sized e-commerce retailer that implemented a custom data extraction system experienced a 300% increase in ROI on promotional campaigns, demonstrating the effectiveness of these techniques.

What are some common pitfalls in PHP web extraction?

Common pitfalls include failing to adhere to legal standards and ethical information collection practices, particularly regarding GDPR compliance.

Why is it important to follow ethical practices in web scraping?

Following ethical practices in web scraping helps prevent potential legal issues and ensures compliance with regulations like GDPR.

List of Sources

- Understand PHP Web Scraping Fundamentals

- Web Scraping Statistics & Trends You Need to Know in 2025 (https://open.substack.com/pub/scrapetalk/p/web-scraping-statistics-and-trends?r=1m1mci&utm_campaign=post&utm_medium=web)

- Web Scraping Statistics & Trends You Need to Know in 2025 (https://kanhasoft.com/blog/web-scraping-statistics-trends-you-need-to-know-in-2025)

- Web Scraping with PHP Tutorial with Example Scripts (2026) (https://scrapingbee.com/blog/web-scraping-php)

- State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- Web Scraping Statistics & Trends You Need to Know in 2026 (https://scrapingdog.com/blog/web-scraping-statistics-and-trends)

- Select Effective PHP Libraries and Tools

- Web Scraping with PHP: The Ultimate Guide To Web Scraping - WebScrapingAPI (https://webscrapingapi.com/php-web-scraping)

- Web Scraping with PHP Tutorial with Example Scripts (2026) (https://scrapingbee.com/blog/web-scraping-php)

- Web Scraping PHP Tutorial with Scripts and Examples (2025) (https://oxylabs.io/blog/web-scraping-php)

- Navigate Common Web Scraping Challenges

- State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- Web Scraping vs AI for Data Collection With Case Studies | Raagz IT (https://raagz.it/comparison-of-web-scraping-and-ai)

- Web Scraping Statistics & Trends You Need to Know in 2026 (https://scrapingdog.com/blog/web-scraping-statistics-and-trends)

- Top Web Scraping Challenges in 2026 (https://scrapingbee.com/blog/web-scraping-challenges)

- Adhere to Ethical and Legal Standards in Scraping

- Web Scraping Statistics & Trends You Need to Know in 2026 (https://scrapingdog.com/blog/web-scraping-statistics-and-trends)

- Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)

- The Complete Guide to Ethical Web Scraping (https://rayobyte.com/blog/ethical-web-scraping)

- Ethical Web Scraping: Principles and Practices (https://datacamp.com/blog/ethical-web-scraping)