Introduction

Web scraping has become an essential tool for businesses looking to leverage online data effectively. In an ever-evolving digital landscape, the ability to extract relevant information efficiently can significantly bolster competitive strategies and enhance customer insights. However, as sophisticated anti-bot measures and complex website architectures emerge, organisations face a pressing question: how can they navigate these challenges while mastering data extraction?

This guide explores the essential steps and tools for web scraping, equipping readers with the knowledge to derive valuable insights from the web while overcoming common obstacles.

Understand Web Scraping Basics

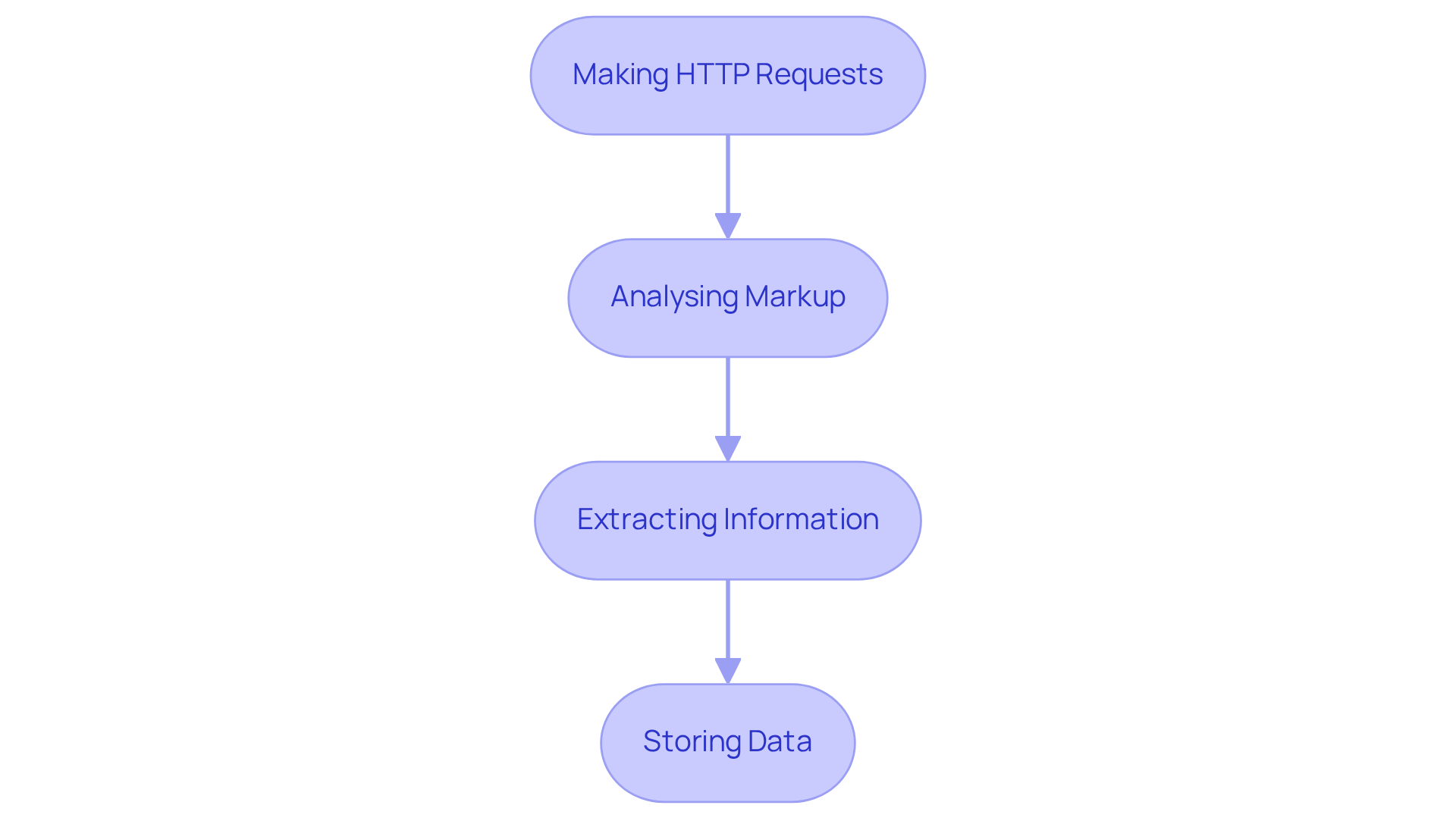

Web scraping is the automated process of extracting data from websites, encompassing several critical steps that ensure effective data retrieval:

- Making HTTP Requests: The initial step involves sending a request to a server to retrieve the webpage content. This foundational action is crucial for obtaining the information.

- Analysing Markup: Once the content is retrieved, it must be parsed to identify the information of interest. Python tools such as the Beautiful Soup library and the Scrapy framework are frequently used for this task, allowing users to traverse the webpage structure effectively.

- Extracting Information: After parsing, relevant information is obtained based on specific HTML tags or attributes. This step is vital for isolating the information needed for analysis.

- Storing Data: Finally, the extracted data is organised and stored in a structured format, such as CSV or a database, facilitating further analysis and utilisation.
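
The final step, storing data, can be sketched with Python's standard `csv` module. This is a minimal illustration, not a prescribed approach: the records, the `save_rows` helper, and the `products.csv` filename are hypothetical placeholders rather than output from a real site.

```python
import csv
import tempfile
from pathlib import Path


def save_rows(rows, path, fieldnames):
    """Write a list of dicts to a CSV file with a header row."""
    # newline="" lets the csv module control line endings itself.
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(rows)


# Hypothetical records, standing in for data extracted from a page.
rows = [
    {"title": "Example product A", "price": "19.99"},
    {"title": "Example product B", "price": "24.50"},
]

# Written to the system temp directory so the sketch runs anywhere;
# substitute your own output path in practice.
out_path = Path(tempfile.gettempdir()) / "products.csv"
save_rows(rows, out_path, fieldnames=["title", "price"])
print(out_path.read_text(encoding="utf-8"))
```

A CSV file suits small, flat datasets; for larger or relational data, a database is usually the better fit.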

Extracting website data matters more than ever in 2026. As businesses increasingly rely on data-driven strategies, they need timely and accurate information from a variety of online sources. Companies across sectors, from retail to finance, use web scraping to enhance competitive analysis, optimise pricing strategies, and improve customer targeting. Appstractor's advanced information gathering solutions, including real estate listing change alerts, compensation benchmarking, and a global self-healing IP pool, help organisations extract valuable insights while ensuring GDPR compliance. Appstractor also provides clear pricing options, making it simpler for companies to plan for their data extraction requirements.

However, organisations must also navigate modern anti-bot systems, whose advanced detection techniques can complicate data extraction. Managed scraping infrastructure is becoming more prevalent, enabling companies to concentrate on gathering information instead of maintaining their own scraping systems. Data protection regulations are also influencing how data pipelines are designed, encouraging responsible handling practices.

Experts highlight that comprehending HTTP requests and HTML parsing is essential to extract website data successfully. As Alejandro Loyola, a technical support specialist, observes, "The environment surrounding extraction has expanded rapidly, and teams now have more choices for gathering and processing web information." This evolution emphasises the necessity for strong data extraction methods that can adjust to the complexities of contemporary websites, ensuring that organisations can retrieve valuable insights efficiently.

Choose the Right Tools for Web Scraping

Selecting the appropriate tools is essential for extracting website data effectively. Below is a breakdown of popular options available in 2026:

- Programming Libraries: For those with coding expertise, libraries such as Scrapy, Beautiful Soup, and Selenium stand out. Scrapy is especially recognised for its high performance, making it suitable for large-scale crawling and data pipelines. Selenium remains relevant for testing and for sites with particular browser compatibility requirements, offering flexibility and fine-grained control.

- No-Code Tools: If coding isn't your strength, no-code platforms like Octoparse and ParseHub are excellent alternatives. These tools feature intuitive interfaces that let users build scrapers without any programming knowledge. Octoparse, for instance, is recommended for beginners due to its minimal learning curve and quick setup, making it suitable for small to medium projects.

- Browser Extensions: Tools such as Web Scraper and Data Miner integrate into your browser, enabling rapid extraction directly from web pages. These extensions are particularly useful for quick, one-off tasks.

- APIs: Numerous websites offer APIs that provide structured data without the need for scraping. Always check for an available API before resorting to scraping, as this can save time and ensure compliance with site terms.

- Appstractor's Solutions: For businesses seeking to extract website data efficiently, Appstractor offers advanced mining solutions that automate the collection and delivery of structured content. With options such as Rotating Proxy Servers for self-serve IPs and Full Service for turnkey data delivery, Appstractor provides adaptable proxy alternatives that fit into your current workflows. Their services support various formats, including JSON, CSV, and Parquet, so you can easily manage and use the extracted information. Activation timelines vary: Rotating Proxy Servers go live within 24 hours, while Full Service projects kick off in 5-7 business days.

When choosing a tool, consider your project requirements, technical skills, and specific extraction needs. As Svetlana Zakharova, an independent analyst, emphasises, selecting the appropriate solution is crucial for efficient information gathering without unnecessary complexity.

Build Your First Web Scraper

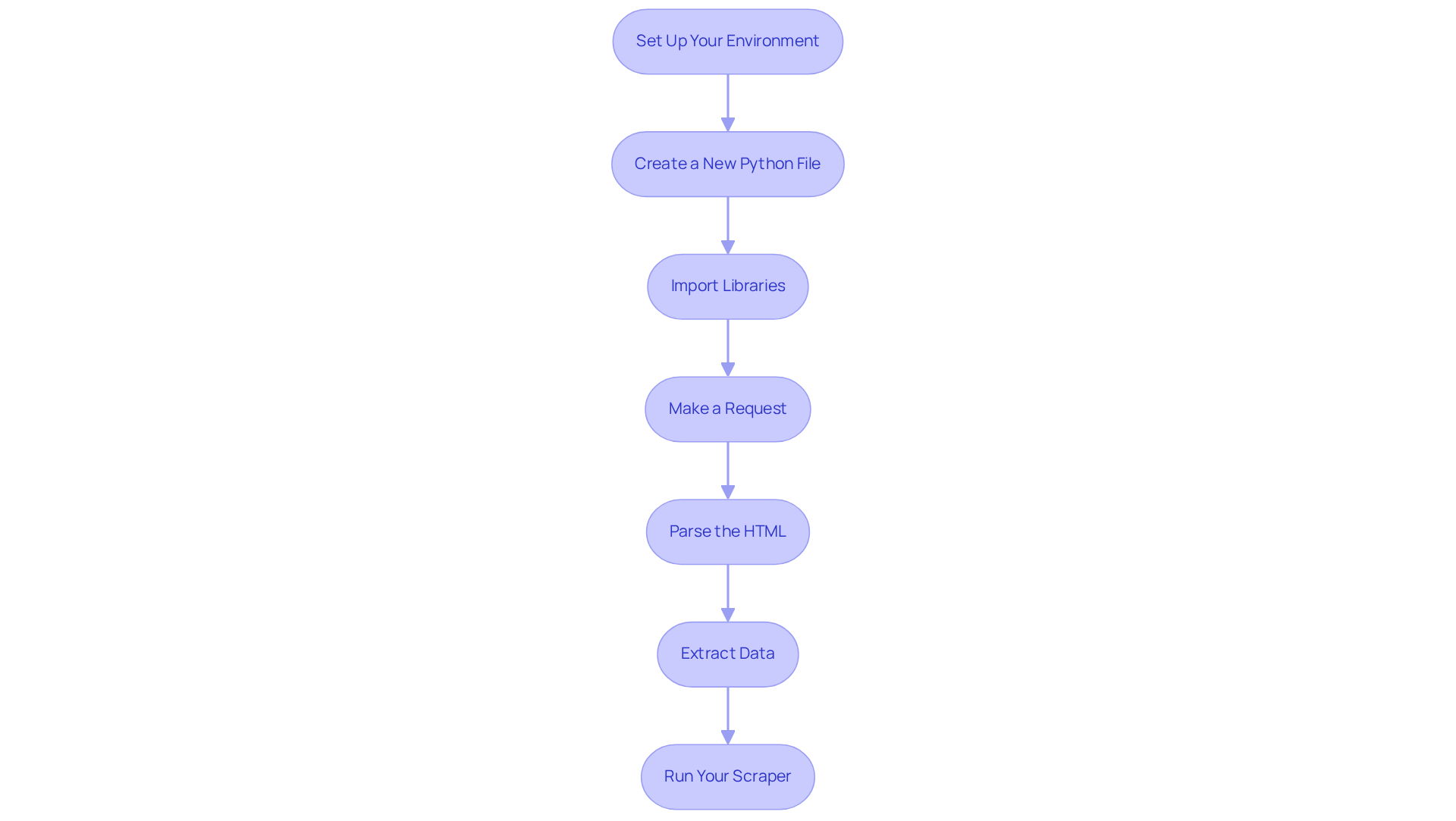

Building your first web scraper can be an exciting venture, particularly when leveraging efficient solutions like those offered by Appstractor. To create a simple scraper using Python and Beautiful Soup, follow these essential steps:

- Set Up Your Environment: Install Python and the necessary libraries by running:

      pip install requests beautifulsoup4

- Create a New Python File: Open your IDE and create a new Python file, e.g., scraper.py.

- Import Libraries: At the top of your file, import the libraries:

      import requests
      from bs4 import BeautifulSoup

- Make a Request: Use the requests library to fetch the webpage:

      url = 'https://example.com'
      response = requests.get(url)

- Parse the HTML: Create a Beautiful Soup object to parse the HTML:

      soup = BeautifulSoup(response.text, 'html.parser')

- Extract Data: Identify the HTML elements containing the data you want and extract it:

      titles = soup.find_all('h2')
      for title in titles:
          print(title.text)

- Run Your Scraper: Execute your script to see the extracted information in your console.
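
The steps above can be combined into a single script. The version below is a sketch rather than a definitive implementation: it adds basic exception handling and a request timeout, and the function names `extract_titles` and `scrape_titles`, along with the 10-second timeout, are illustrative choices that do not come from the steps themselves.

```python
import requests
from bs4 import BeautifulSoup


def extract_titles(html):
    """Return the stripped text of every <h2> element in the given HTML."""
    soup = BeautifulSoup(html, "html.parser")
    return [h2.get_text(strip=True) for h2 in soup.find_all("h2")]


def scrape_titles(url):
    """Fetch a page and return its <h2> texts, or an empty list on failure."""
    try:
        # The timeout value is an arbitrary choice for illustration.
        response = requests.get(url, timeout=10)
        response.raise_for_status()  # raise on 4xx/5xx status codes
    except requests.RequestException as exc:
        print(f"Request failed: {exc}")
        return []
    return extract_titles(response.text)


if __name__ == "__main__":
    for title in scrape_titles("https://example.com"):
        print(title)
```

Keeping the parsing in its own function means the selectors can be checked against a saved HTML snippet without making a network request.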

Congratulations! You've built your first web scraper. To go further, consider integrating Appstractor's advanced data mining solutions, which automate website data extraction and offer flexible proxy options. This integration can streamline your data collection process, allowing you to focus on analysis rather than on manual extraction.

Python is the dominant language for extracting website data, with a 69.6% adoption rate among developers, making it a reliable choice for your projects. Beautiful Soup is used by 43.5% of developers for its straightforward, adaptable HTML/XML parsing. Be mindful of common errors, such as failing to handle exceptions or neglecting a website's terms of service. Where a source provides an API, that may be the better route; as Antonello Zanini, a technical writer, notes of news APIs, "These APIs offer structured and comprehensive data, tailored for each news source." With the web data extraction market projected to reach between $2.2 billion and $3.5 billion by 2026, these skills are increasingly worth mastering.

Troubleshoot Common Web Scraping Issues

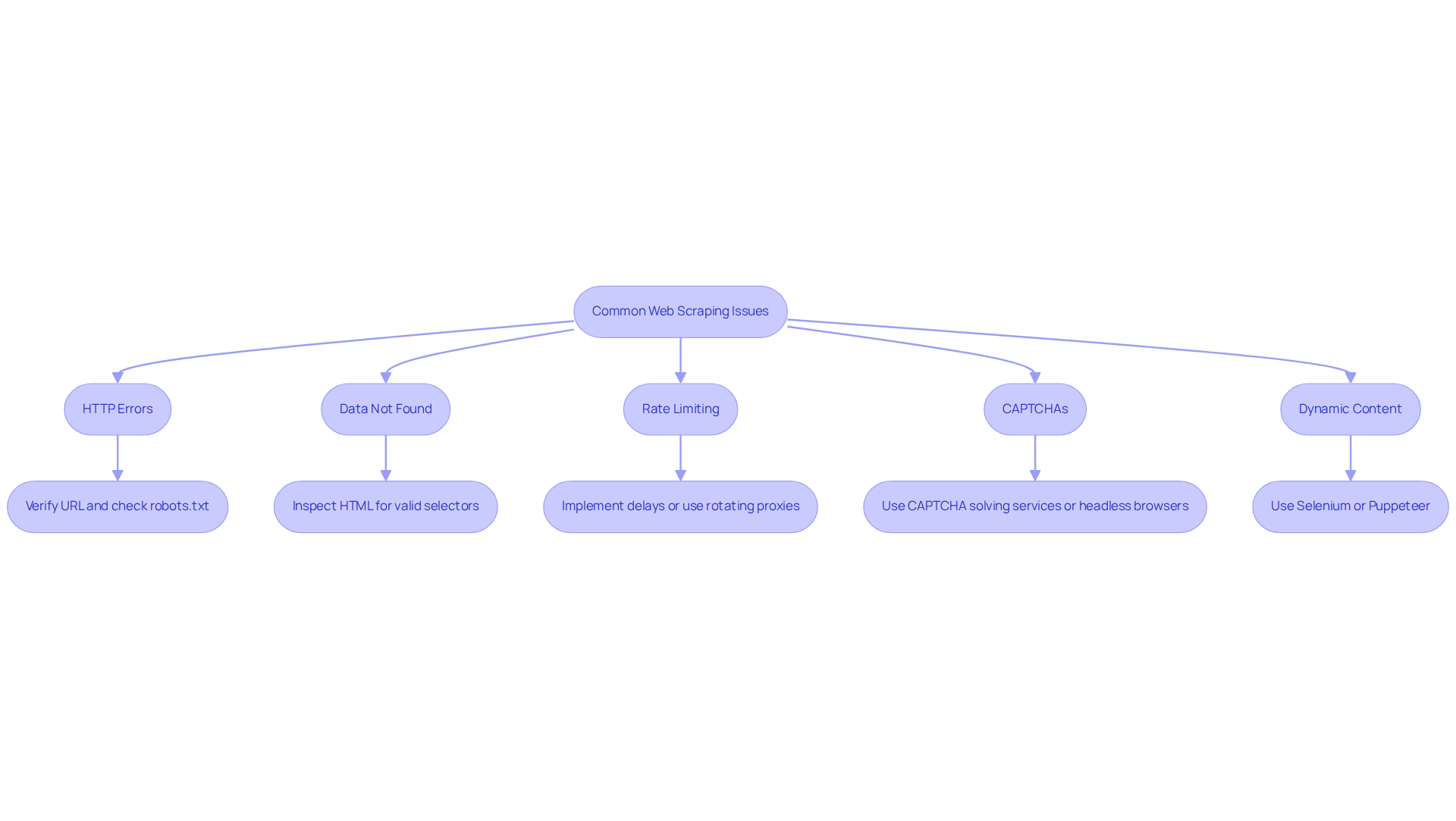

Even the most seasoned web scrapers encounter challenges. Below are common issues and their troubleshooting methods:

- HTTP Errors: If you encounter a 404 or 403 error, check the URL for mistakes (a 404 means the page was not found) and confirm that the website permits automated access (a 403 means the request was refused). Review the robots.txt file for permissions.

- Data Not Found: If your scraper returns empty results, the website structure may have changed. Inspect the HTML to ensure your selectors are still valid.

- Rate Limiting: Receiving a 429 error indicates that the website may be blocking your requests due to excessive frequency. Implement delays between requests or utilise rotating proxies. Appstractor's built-in IP rotation can assist in managing this by allowing seamless IP switching, thereby reducing the risk of being blocked.

- CAPTCHAs: Some sites employ CAPTCHAs to prevent automated data extraction. Consider using services that solve CAPTCHAs, or switch to headless browsers, which may be better able to handle these challenges.

- Dynamic Content: If data is loaded dynamically via JavaScript, tools like Selenium or Puppeteer may be necessary to render the page before extracting information.
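
The robots.txt review mentioned under HTTP Errors can be automated with Python's standard `urllib.robotparser` module. The sketch below parses a hypothetical set of rules from a string so that it runs offline; the `is_allowed` helper, the `my-scraper` user agent, and the sample rules are all assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_lines, user_agent, url):
    """Return True if the given robots.txt rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt content, for illustration only.
sample_rules = """
User-agent: *
Disallow: /private/
""".splitlines()

# Allowed: /products is not covered by any Disallow rule.
print(is_allowed(sample_rules, "my-scraper", "https://example.com/products"))
# Disallowed: the path falls under /private/.
print(is_allowed(sample_rules, "my-scraper", "https://example.com/private/data"))
```

Against a live site, `RobotFileParser` can instead be pointed at the file with `set_url(...)` followed by `read()`.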

By understanding these common issues and their solutions, you can significantly enhance the reliability of your web scraping projects.
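
As one possible way to implement delays between requests when a site responds with 429, the sketch below retries with exponential backoff. The `fetch_with_backoff` helper and its default values are hypothetical; `fetch` can be any callable that returns an object with a `status_code` attribute, such as `requests.get`.

```python
import time


def fetch_with_backoff(fetch, url, max_retries=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying with exponential backoff while it returns HTTP 429."""
    for attempt in range(max_retries + 1):
        response = fetch(url)
        if response.status_code != 429:
            return response
        if attempt < max_retries:
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            sleep(base_delay * 2 ** attempt)
    return response  # still rate limited after all retries
```

With the requests library installed, a call might look like `fetch_with_backoff(requests.get, "https://example.com")`. If the server sends a `Retry-After` header, honouring that value is generally preferable to a fixed schedule.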

Conclusion

Mastering the art of extracting website data is a valuable skill that significantly enhances data-driven decision-making across various industries. This guide outlines the essential steps and tools necessary for effective web scraping, emphasising the importance of understanding the underlying processes involved, from making HTTP requests to storing the extracted data in a structured format.

Key insights include the necessity of choosing the right tools, whether programming libraries like Scrapy and Beautiful Soup or user-friendly no-code options such as Octoparse. Additionally, the guide highlights the importance of troubleshooting common web scraping issues, ensuring users can navigate challenges effectively. With the web data extraction market projected to grow significantly, acquiring these skills is not just beneficial but essential for staying competitive.

Ultimately, embracing web scraping techniques empowers individuals and organisations to harness the wealth of information available online. By mastering these essential steps and leveraging the right tools, one can unlock valuable insights that drive strategic decisions and foster innovation. Engaging with this evolving field not only enhances personal capabilities but also contributes to the broader landscape of data utilisation in the digital age.

Frequently Asked Questions

What is web scraping?

Web scraping is the automated process of extracting data from websites, involving several critical steps to ensure effective data retrieval.

What are the initial steps involved in web scraping?

The initial steps include making HTTP requests to retrieve webpage content, analysing the markup to identify relevant information, extracting information based on specific HTML tags or attributes, and storing the data in a structured format.

What tools are commonly used for analysing markup in web scraping?

Libraries like Beautiful Soup and Scrapy in Python are frequently used for analysing markup and traversing webpage structures effectively.

Why is extracting website data important in 2026?

Extracting website data is crucial as businesses increasingly rely on data-driven strategies. It helps in gathering timely and accurate information for competitive analysis, optimising pricing strategies, and improving customer targeting.

How does Appstractor assist organisations in data extraction?

Appstractor offers advanced information gathering solutions, such as real estate listing change alerts and compensation benchmarking, while ensuring GDPR compliance and providing clear pricing choices for companies.

What challenges do organisations face in web scraping?

Organisations must navigate challenges from modern anti-bot systems that use advanced detection techniques, complicating information extraction efforts.

What is the trend in web scraping infrastructure?

There is a shift towards managed scraping infrastructure, allowing companies to focus on information gathering rather than managing their own scraping systems.

Why is understanding HTTP requests and HTML parsing essential for web scraping?

Comprehending HTTP requests and HTML parsing is essential for successfully extracting website data, as it helps teams adapt to the complexities of contemporary websites and retrieve valuable insights efficiently.

List of Sources

- Understand Web Scraping Basics

  - Web Scraping Statistics & Trends You Need to Know in 2026 (https://scrapingdog.com/blog/web-scraping-statistics-and-trends)

  - Case Study: How A Retail Company Unleashed The Power Of Data With ProWebScraper’s Web Scraping Service (https://prowebscraper.com/blog/case-study-how-a-retail-company-unleashed-the-power-of-data-with-prowebscraper-web-scraping-service)

  - How AI Is Changing Web Scraping in 2026 (https://kadoa.com/blog/how-ai-is-changing-web-scraping-2026)

  - State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

  - Web data for scraping developers in 2026: AI fuels the agentic future (https://zyte.com/blog/web-data-for-scraping-developers)

- Choose the Right Tools for Web Scraping

  - 7 Best Web Scraping Tools Ranked (2026) (https://scrapingbee.com/blog/web-scraping-tools)

  - Top 7 Web Scraping Tools for 2026: From Beginner to Pro — Comparison and Rankings (https://mobileproxy.space/en/pages/top-7-web-scraping-tools-for-2026-from-beginner-to-pro--comparison-and-rankings.html)

  - 16 Digital Marketing Quotes From Top 16 Experts (https://medium.com/@spelloutmarketing/16-digital-marketing-quotes-from-top-16-experts-6622a86de3b9)

  - The best web scraping tools in 2026 (https://zyte.com/learn/best-web-scraping-tools)

- Build Your First Web Scraper

  - Python Web Scraping: Quotes from Goodreads.com (https://paulvanderlaken.com/2019/12/27/web-scraping-python-goodreads-quotes)

  - Web Scraping Statistics & Trends You Need to Know in 2026 (https://scrapingdog.com/blog/web-scraping-statistics-and-trends)

  - How to Scrape News Articles With AI and Python (https://brightdata.com/blog/web-data/how-to-scrape-news-articles)

  - Quotes to Scrape | Viswa Vivek T. (https://linkedin.com/posts/viswa-vivek-tupakula_quotes-to-scrape-activity-7148042831750676480-ZqLO)