Introduction

The digital age has fundamentally transformed business operations, positioning data as the new currency that drives marketing success. Organisations increasingly use data extraction techniques, from web scraping to API integration, to uncover insights that can significantly improve their strategies and decision-making.

However, retrieving, validating, and automating data collection is complex, particularly when it comes to complying with legal standards. Marketers need to consider how to use these tools not only to gather data but also to turn it into actionable intelligence that moves their campaigns forward.

Understand Data Extraction Basics

Data extraction means acquiring data from sources such as web pages, databases, or APIs and transforming it into a format that can be analysed. The following key concepts are essential:

- Data Sources: Familiarise yourself with the types of data sources available, including structured data from databases and unstructured data from web pages. By 2026, a significant share of businesses is expected to use web scraping as a primary data-gathering technique, underscoring its growing importance.

- Data Retrieval Methods: Common methods include web scraping, API calls, and manual collection. Web scraping has become a strategic discipline that lets organisations process millions of documents quickly and consistently, which is vital for staying competitive. Appstractor's service supports this method with rotating proxy servers and a range of delivery options, and it preserves data integrity through techniques such as hashing rows, dropping duplicates, and normalising encodings.

- Formats: File formats such as JSON, XML, and CSV play a crucial role in the retrieval process. Appstractor offers flexible delivery options, letting users choose formats such as JSON, CSV, and Parquet, a choice that shapes how the data is later processed and used.

- Authentication and IP Rotation: Recognise the importance of authentication and IP rotation strategies in information extraction. Appstractor provides integrated rotation and persistent sessions lasting up to 10 minutes for log-ins, ensuring secure and efficient access to resources.

- Legal Considerations: Navigating the legal landscape is critical. Organisations must be aware of copyright issues and websites' terms of service. Compliance with privacy regulations such as GDPR is essential, particularly when handling personal information. For instance, clear consent may be required for some web scraping activities, especially those involving personal data.
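The integrity steps named under Data Retrieval Methods (hashing rows, dropping duplicates, normalising encodings) and the delivery formats under Formats can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not Appstractor's implementation; the record fields are hypothetical, and Parquet output is omitted because it needs a third-party library such as pyarrow.

```python
import csv
import hashlib
import io
import json
import unicodedata


def normalise(value):
    """Normalise text to Unicode NFC and trim whitespace; leave other types alone."""
    if isinstance(value, str):
        return unicodedata.normalize("NFC", value).strip()
    return value


def row_hash(row):
    """SHA-256 of the row's canonical JSON, so equal rows always hash equally."""
    canonical = json.dumps(row, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def deduplicate(rows):
    """Normalise every field, then keep only the first row with each hash."""
    seen, unique = set(), []
    for row in rows:
        clean = {key: normalise(value) for key, value in row.items()}
        digest = row_hash(clean)
        if digest not in seen:
            seen.add(digest)
            unique.append(clean)
    return unique


def to_json(rows):
    return json.dumps(rows, ensure_ascii=False, indent=2)


def to_csv(rows):
    """Write rows as CSV; assumes every row has the same fields as the first one."""
    if not rows:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=sorted(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


raw = [
    {"product": "Caf\u00e9 au lait", "price": 3.5},
    {"product": "Cafe\u0301 au lait ", "price": 3.5},  # decomposed accent, trailing space
    {"product": "Green tea", "price": 2.0},
]
rows = deduplicate(raw)
print(len(rows))  # 2: the first two rows are identical once normalised
print(to_csv(rows))
```

Normalising before hashing matters: without it, the two spellings of "Café" above produce different hashes and the duplicate survives.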

By mastering these fundamentals, you will be better equipped to address the complexities of information retrieval in marketing, ensuring that your strategies are both effective and compliant.
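To make IP rotation and persistent log-in sessions concrete, here is a minimal Python sketch of a proxy pool that rotates on every request but pins a session to one proxy for up to ten minutes, mirroring the session length described for Appstractor. The class, the proxy URLs, and the timing logic are hypothetical illustrations, not Appstractor's API; the code only builds `urllib` openers and makes no network calls itself.

```python
import http.cookiejar
import itertools
import time
import urllib.request


class ProxyRotator:
    """Rotate proxies per request, but pin a session key to one proxy for a fixed time.

    Hypothetical sketch: the 600-second (10-minute) lifetime is an assumption
    chosen to match the text, not any vendor's documented behaviour.
    """

    def __init__(self, proxies, session_ttl=600.0, clock=time.monotonic):
        proxies = list(proxies)
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(proxies)
        self._ttl = session_ttl
        self._clock = clock
        self._sessions = {}  # session key -> (proxy, time the session was pinned)

    def next_proxy(self):
        """Return the next proxy in round-robin order (for stateless requests)."""
        return next(self._cycle)

    def proxy_for_session(self, key):
        """Return the pinned proxy for `key`, re-pinning once the session has expired."""
        now = self._clock()
        pinned = self._sessions.get(key)
        if pinned is not None and now - pinned[1] < self._ttl:
            return pinned[0]
        proxy = self.next_proxy()
        self._sessions[key] = (proxy, now)
        return proxy


def opener_for(proxy, cookies=None):
    """Build a urllib opener that routes HTTP(S) through `proxy` and keeps cookies."""
    if cookies is None:
        cookies = http.cookiejar.CookieJar()
    return urllib.request.build_opener(
        urllib.request.ProxyHandler({"http": proxy, "https": proxy}),
        urllib.request.HTTPCookieProcessor(cookies),
    )
```

A log-in flow would call `proxy_for_session("account-1")` before each request and reuse one `CookieJar` for that session, so the exit IP and the cookies stay stable until the session expires; anonymous requests would use `next_proxy()` instead.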

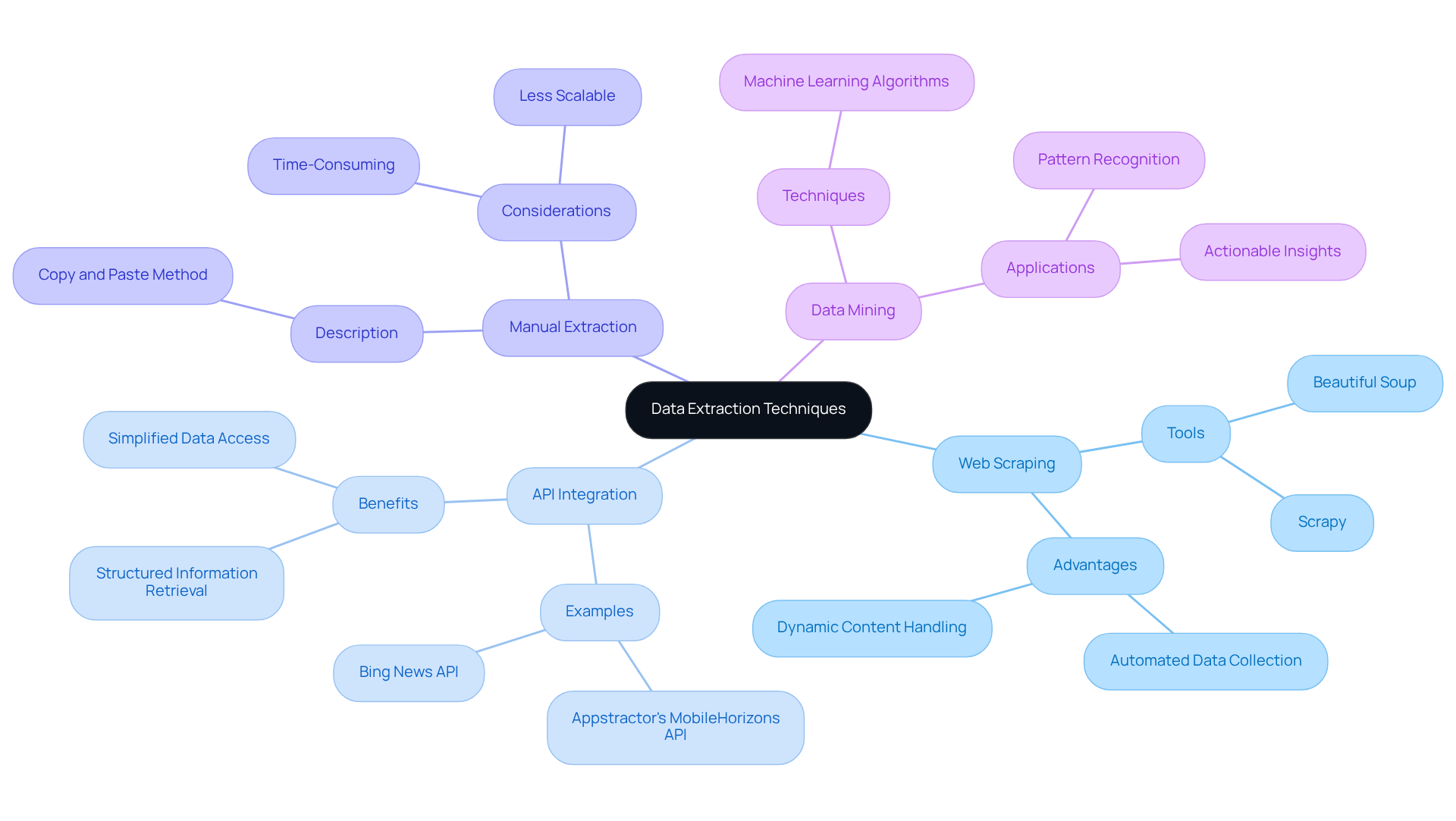

Explore Common Data Extraction Techniques

Data extraction from the web can be accomplished through several effective techniques, each offering unique advantages:

- Web Scraping: Automated tools like Beautiful Soup and Scrapy extract data from websites, enabling marketers to gather large volumes of information efficiently. Both work on the HTML a server returns; for dynamic, JavaScript-rendered pages, a browser automation tool such as Selenium is usually needed.

- API Integration: Many websites offer APIs for structured data retrieval, which greatly simplifies extraction. API use in digital marketing is expected to grow in 2026, with organisations relying more on these integrations for data collection and analysis. For instance, Appstractor's MobileHorizons API provides customised data from native mobile apps, allowing marketers to extract and analyse location-sensitive details, display ads, and shopping intelligence. This capability matters for hyper-local insights that traditional web scraping may miss. Similarly, the Bing News API gives marketers access to news articles and headlines, making it easier to track industry trends and competitor activity. According to industry sources, higher-volume tiers for APIs like the Bing News API cost between $15 and $18 per 1,000 transactions, a cost marketers need to budget for.

- Manual Extraction: For smaller datasets, manual extraction may suffice. This straightforward method involves copying and pasting information directly from web pages, though it can be time-consuming and less scalable.

- Data Mining: This technique analyses large datasets to uncover patterns and insights, often employing machine learning algorithms. It is especially beneficial for marketers seeking to obtain actionable insights from intricate information sets.
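A minimal scraping sketch follows. Beautiful Soup or Scrapy would normally do the parsing (with Beautiful Soup, something like `soup.select("h2.headline")`); to keep the example dependency-free, it swaps in Python's standard-library `html.parser`. The `h2` tag and `headline` class are assumptions about the target page, and downloading the HTML is left out.

```python
from html.parser import HTMLParser


class HeadlineParser(HTMLParser):
    """Collect the text of every <h2 class="headline"> element (hypothetical markup)."""

    def __init__(self, tag="h2", css_class="headline"):
        super().__init__()
        self.tag = tag
        self.css_class = css_class
        self.headlines = []
        self._capturing = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        # An element may carry several classes, e.g. class="headline big".
        classes = (dict(attrs).get("class") or "").split()
        if tag == self.tag and self.css_class in classes:
            self._capturing = True
            self._buffer = []

    def handle_data(self, data):
        # Text inside nested tags such as <a> is captured too.
        if self._capturing:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self.tag and self._capturing:
            # Collapse runs of whitespace and newlines into single spaces.
            self.headlines.append(" ".join("".join(self._buffer).split()))
            self._capturing = False


def extract_headlines(html):
    parser = HeadlineParser()
    parser.feed(html)
    parser.close()
    return parser.headlines
```

For example, `extract_headlines('<h2 class="headline">Prices <a href="/x">rise</a></h2><h2>Skip me</h2>')` returns `['Prices rise']`.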

As Alejandro Loyola noted, "Compliance now plays a direct role in the design of scraping workflows," underlining the importance of following legal and ethical guidelines when extracting information. The right technique depends on factors such as data volume, source complexity, and the outcomes you want. As digital marketing evolves, API integration and advanced scraping techniques, particularly those offered by Appstractor, will be essential for staying competitive.
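Designing compliance into a workflow can start with a simple gate before any page is fetched. The sketch below assumes a site's robots.txt has already been downloaded and uses Python's `urllib.robotparser` to skip disallowed paths and read any crawl delay. Honouring robots.txt is only one courtesy check; on its own it does not settle questions of copyright, a site's terms of service, or GDPR, which still need their own review. The user agent name is hypothetical.

```python
from urllib.robotparser import RobotFileParser


def build_policy(robots_txt):
    """Parse robots.txt text that was fetched earlier (no network access here)."""
    policy = RobotFileParser()
    policy.parse(robots_txt.splitlines())
    return policy


def allowed_urls(policy, user_agent, urls):
    """Keep only the URLs that robots.txt permits for this user agent."""
    return [url for url in urls if policy.can_fetch(user_agent, url)]


ROBOTS = """\
User-agent: *
Disallow: /account/
Crawl-delay: 5
"""

policy = build_policy(ROBOTS)
queue = [
    "https://example.com/products/1",
    "https://example.com/account/settings",
]
print(allowed_urls(policy, "MarketingBot", queue))
print(policy.crawl_delay("MarketingBot"))  # seconds to wait between requests, if declared
```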

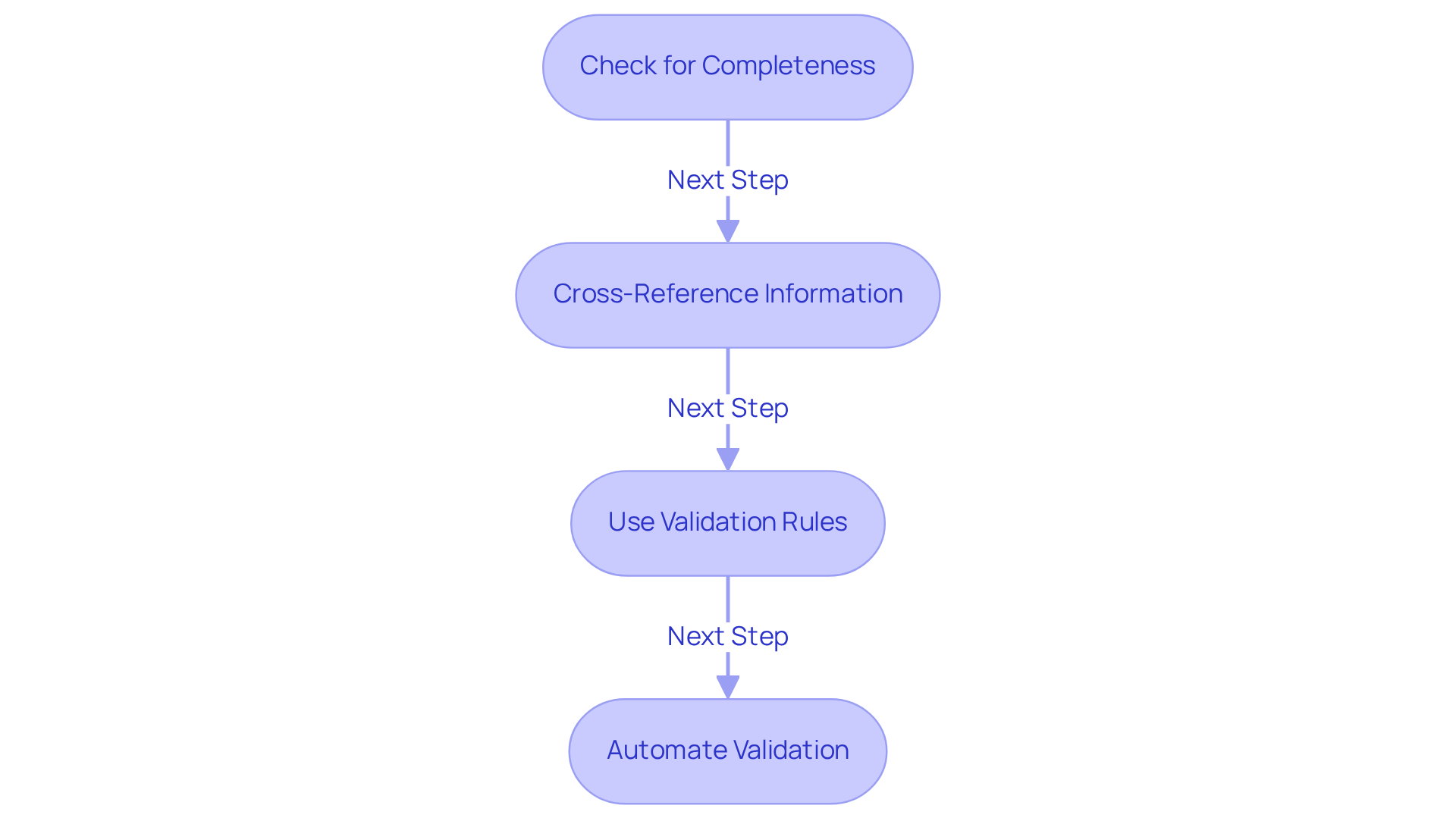
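The per-transaction pricing quoted for the Bing News API becomes a budget with simple arithmetic: cost = (transactions / 1,000) × rate. The helper below applies the quoted $15 and $18 rates to an assumed daily call volume; the rates come from the text and may not match any current price list.

```python
from decimal import Decimal

# Rates quoted in the text for higher-volume tiers, in US dollars per 1,000 transactions.
LOW_RATE = Decimal("15")
HIGH_RATE = Decimal("18")


def monthly_cost_range(calls_per_day, days=30, low=LOW_RATE, high=HIGH_RATE):
    """Return (low, high) estimated cost for `calls_per_day` transactions over `days` days."""
    thousands = Decimal(calls_per_day) * days / 1000
    return thousands * low, thousands * high


low, high = monthly_cost_range(5000)
print(f"${low} to ${high} per month")
```

With an assumed 5,000 calls a day for 30 days, that is 150,000 transactions, or roughly $2,250 to $2,700 per month. `Decimal` avoids the rounding surprises of binary floats when working with money.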

Validate Data at the Source

To ensure the integrity of your extracted data, implement the following validation steps:

- Check for Completeness: Verify that all required data fields are filled and that no essential information is missing. Incomplete information can lead to misguided marketing strategies and lost opportunities.

- Cross-Reference Information: Compare the data you extract from the web against reliable sources or benchmarks to identify any discrepancies. This practice is essential: 85% of marketers recognise that poor data quality undermines their campaigns.

- Use Validation Rules: Establish rules that check data types, formats, and ranges. For instance, ensure that email addresses conform to a standard format, since errors in contact information hinder customer engagement.

- Automate Validation: Use automated tools to verify data as you extract it, minimising manual effort and reducing the chance of mistakes. Currently, 48% of organisations use data science or AI tools to improve data quality, indicating a growing trend toward automation in data management.
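The completeness, format, and range checks in this list can be combined into one automated validator. This is a minimal Python sketch; the required fields, the simple email pattern (deliberately looser than the full RFC 5322 grammar), and the age range are hypothetical rules to replace with your own.

```python
import re

# Hypothetical schema for a scraped lead record; adjust to your own fields.
REQUIRED_FIELDS = ("name", "email", "age")
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # simple check, not RFC 5322
AGE_RANGE = (18, 120)  # illustrative range rule


def validate_record(record):
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    # Completeness: every required field must be present and non-empty.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            problems.append(f"missing {field}")
    # Format: the email must look like local@domain.tld.
    email = record.get("email")
    if email and not EMAIL_PATTERN.match(email):
        problems.append(f"bad email format: {email!r}")
    # Type and range: age must be an integer inside AGE_RANGE.
    age = record.get("age")
    if age not in (None, ""):
        if not isinstance(age, int) or isinstance(age, bool):
            problems.append(f"age is not an integer: {age!r}")
        elif not AGE_RANGE[0] <= age <= AGE_RANGE[1]:
            problems.append(f"age out of range: {age}")
    return problems


def validate_batch(records):
    """Split a batch into valid records and (index, problems) pairs for review."""
    valid, rejected = [], []
    for index, record in enumerate(records):
        problems = validate_record(record)
        if problems:
            rejected.append((index, problems))
        else:
            valid.append(record)
    return valid, rejected
```

Running `validate_batch` on every extraction run, rather than spot-checking by hand, is what turns these rules into the automated validation described above; the rejected list doubles as an audit trail.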

By validating information at the source, you can significantly enhance the quality of insights derived from your marketing efforts, ultimately leading to more effective campaigns and improved return on investment.
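Cross-referencing against a benchmark can be as simple as comparing extracted values with a trusted reference and flagging differences above a tolerance. The sketch below assumes both datasets map an identifier such as a SKU to a numeric value (for example, a price); the 5% tolerance is an illustrative choice.

```python
def cross_reference(extracted, reference, tolerance=0.05):
    """Compare extracted values with a trusted reference.

    Returns keys whose relative difference exceeds `tolerance`, plus keys
    present on only one side, so gaps in coverage are visible too.
    """
    mismatches = {}
    for key, expected in reference.items():
        if key not in extracted:
            continue
        actual = extracted[key]
        if expected == 0:
            # Relative difference is undefined; any non-zero value is a mismatch.
            if actual != 0:
                mismatches[key] = (actual, expected)
        elif abs(actual - expected) / abs(expected) > tolerance:
            mismatches[key] = (actual, expected)
    return {
        "mismatches": mismatches,
        "missing_from_extract": sorted(set(reference) - set(extracted)),
        "not_in_reference": sorted(set(extracted) - set(reference)),
    }
```

For example, an extracted price of 12.0 against a reference of 10.0 is a 20% difference and is flagged, while 10.0 against 10.2 (about 2%) passes.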

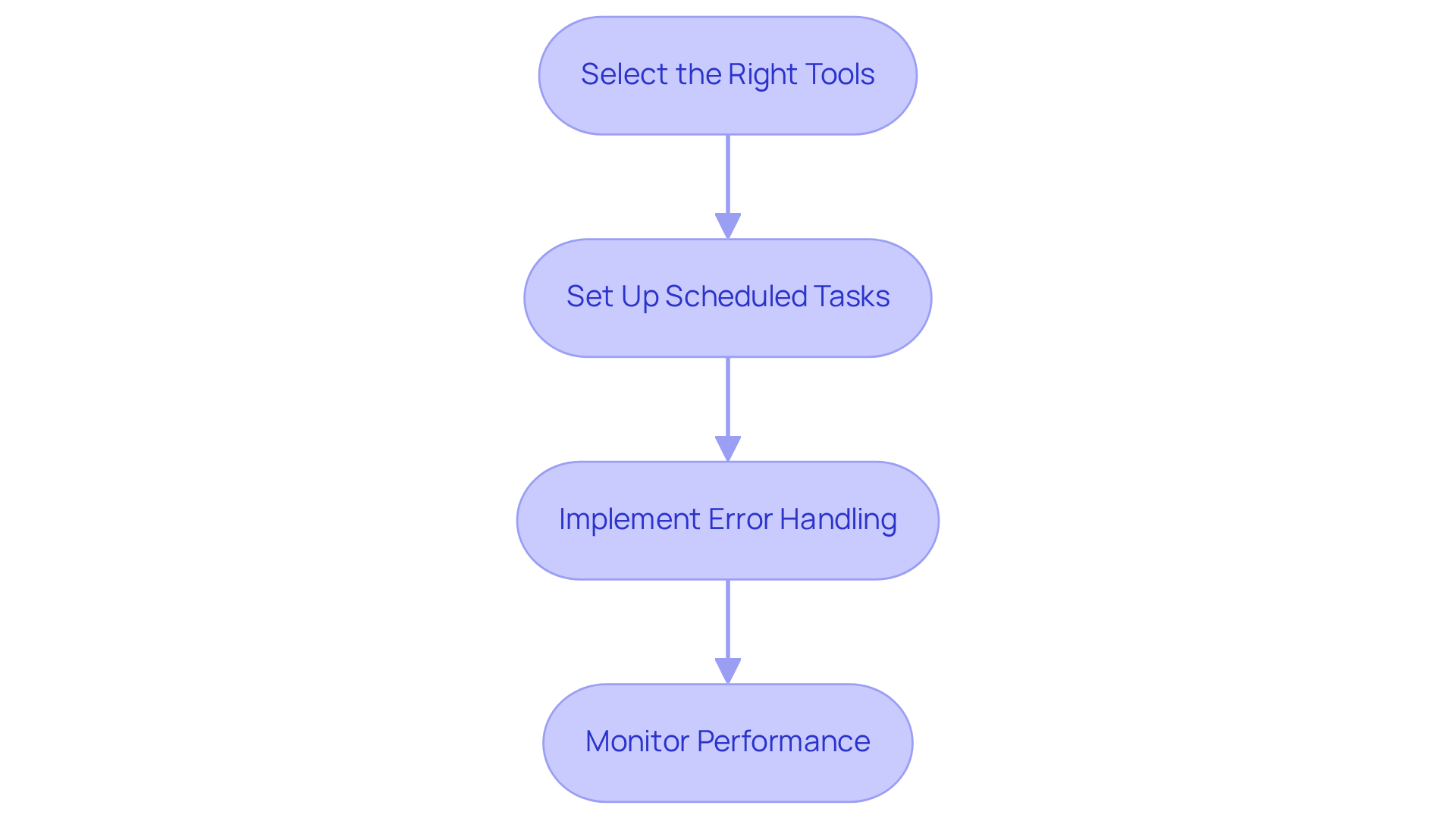

Automate Your Data Extraction Process

To streamline your data extraction process through automation, follow these essential steps:

- Select the Right Tools: Choose automation tools that match your requirements. Scraping frameworks such as Scrapy, browser automation tools such as Selenium, and data-gathering software such as Octoparse can all significantly improve your ability to extract data from the web. Also consider Appstractor's MobileHorizons API, which surfaces hyper-local insights from native mobile apps. This distinctive data stream can strengthen your marketing strategies by supplying customised content based on user intent.

- Schedule Extraction Runs: Use cron jobs or task schedulers to run your extraction scripts at regular intervals. This ensures you always have the most up-to-date information, which is vital for prompt decision-making.

- Implement Error Handling: Make sure your automation scripts handle errors effectively, for example by retrying failed requests and logging issues for later review. This preserves the integrity of your data collection, and strong error management can greatly reduce the number of problems you encounter during retrieval.

- Monitor Performance: Regularly assess your automated processes to identify bottlenecks and areas needing improvement. Continuous monitoring can improve efficiency and reduce error rates; automated workflows typically experience 40-75% fewer errors than manual methods.
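Retrying failed requests and logging each failure, as the error-handling step recommends, is commonly done with exponential backoff. A minimal sketch follows; `fetch` is a placeholder for whatever function performs your request (for example a thin wrapper around `urllib.request.urlopen`), and the attempt count and delays are assumptions. By default it retries only on `OSError`, which covers urllib's `URLError` as well as connection and timeout errors.

```python
import logging
import time

logger = logging.getLogger("extraction")


def fetch_with_retries(fetch, url, attempts=4, base_delay=1.0,
                       retry_on=(OSError,), sleep=time.sleep):
    """Call fetch(url), retrying transient failures with exponential backoff.

    Every failure is logged for later review; after the final attempt the
    last error is re-raised so the caller can record the URL as failed.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return fetch(url)
        except retry_on as exc:
            logger.warning("attempt %d/%d for %s failed: %s", attempt, attempts, url, exc)
            if attempt == attempts:
                raise
            # Wait 1 s, 2 s, 4 s, ... before the next attempt.
            sleep(base_delay * 2 ** (attempt - 1))
```

Passing `sleep` in as a parameter keeps the function testable without real waiting. Errors outside `retry_on` (a parsing bug, say) propagate immediately, since retrying them would only repeat the failure.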

By automating data collection, you can shift your focus from manual gathering to thorough analysis, ultimately strengthening your marketing strategies and decision-making. It is also worth examining case studies of tailored web scraping services to see how companies have adopted these methods, including advanced scraping solutions that ensure GDPR compliance and smooth integration.

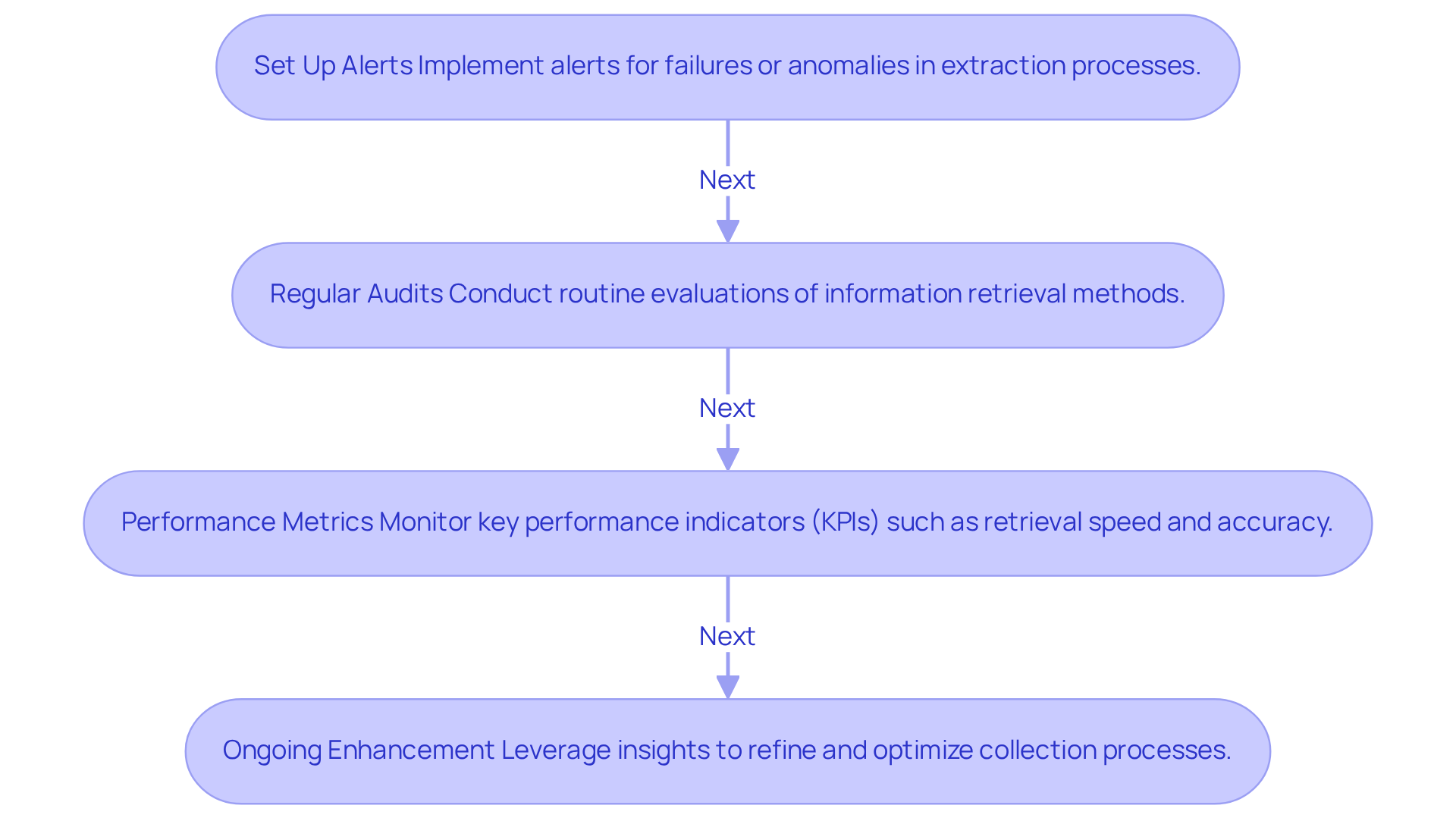

Monitor and Maintain Your Extraction Pipelines

To effectively monitor and maintain your data extraction pipelines with Appstractor's solutions, consider the following best practices:

- Set Up Alerts: Implement alerts for failures or anomalies in your extraction processes so you can respond to issues quickly. Rotating proxies help maintain a dependable connection and reduce downtime.

- Regular Audits: Routinely evaluate your data retrieval methods to confirm they are operating as expected and producing accurate results. Appstractor provides tailored strategies and crawler development to support your auditing processes.

- Performance Metrics: Monitor key performance indicators (KPIs) such as retrieval speed, data accuracy, and error rates to gauge the effectiveness of your pipelines. Appstractor's comprehensive data management solutions can help optimise these metrics.

- Ongoing Enhancement: Use insights from monitoring to refine and optimise your collection processes. Adjustments may be needed as data sources or business requirements change. Appstractor's ongoing maintenance and support help keep your systems resilient and efficient.
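Alerts and KPIs fit together naturally: compute the metrics for each batch of runs, then raise an alert when one crosses a threshold. The sketch below is a hypothetical illustration; the `RunResult` record shape and all thresholds are assumptions, and in production the returned messages would be sent to whatever alerting channel you use.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunResult:
    """Outcome of one extraction request (hypothetical record shape)."""
    ok: bool
    seconds: float
    fields_expected: int
    fields_filled: int


def pipeline_kpis(results):
    """Compute error rate, mean latency, and field fill rate for a batch of runs."""
    if not results:
        raise ValueError("no results to summarise")
    errors = sum(1 for r in results if not r.ok)
    succeeded = [r for r in results if r.ok]
    expected = sum(r.fields_expected for r in succeeded)
    filled = sum(r.fields_filled for r in succeeded)
    return {
        "error_rate": errors / len(results),
        "mean_seconds": mean(r.seconds for r in results),
        "fill_rate": filled / expected if expected else 0.0,
    }


def check_alerts(kpis, max_error_rate=0.05, max_mean_seconds=2.0, min_fill_rate=0.95):
    """Return an alert message for every KPI outside its (illustrative) threshold."""
    alerts = []
    if kpis["error_rate"] > max_error_rate:
        alerts.append(f"error rate {kpis['error_rate']:.1%} exceeds {max_error_rate:.1%}")
    if kpis["mean_seconds"] > max_mean_seconds:
        alerts.append(f"mean latency {kpis['mean_seconds']:.2f}s exceeds {max_mean_seconds:.2f}s")
    if kpis["fill_rate"] < min_fill_rate:
        alerts.append(f"fill rate {kpis['fill_rate']:.1%} below {min_fill_rate:.1%}")
    return alerts
```

The fill rate is a rough proxy for data accuracy: a page that loads successfully but yields half-empty records usually signals a problem the error rate alone would miss.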

By maintaining a vigilant approach to monitoring, you can ensure that your efforts to extract data from the web continue to deliver valuable insights for your marketing strategies.
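Shifts in a source often show up first as fields that suddenly stop being populated. One way to catch this during regular audits is to compare each field's presence rate between two runs, as in this minimal sketch; the 20-percentage-point drop threshold is an assumption to tune for your data.

```python
def field_presence(records):
    """Share of records in which each field is present and non-empty."""
    counts = {}
    for record in records:
        for field, value in record.items():
            if value not in (None, ""):
                counts[field] = counts.get(field, 0) + 1
    total = len(records) or 1
    return {field: n / total for field, n in counts.items()}


def detect_drift(previous, current, max_drop=0.2):
    """Report fields whose presence rate fell by more than `max_drop` between runs.

    A sudden drop often signals that a page layout or an API response shape has
    changed and the extractor needs adjusting.
    """
    before = field_presence(previous)
    after = field_presence(current)
    return {
        field: (rate, after.get(field, 0.0))
        for field, rate in before.items()
        if rate - after.get(field, 0.0) > max_drop
    }
```

Each reported field maps to its (previous, current) presence rate, which gives the audit a concrete starting point.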

Conclusion

Mastering data extraction is not merely a technical skill; it is a crucial strategy for marketing success in a data-driven landscape. By grasping the fundamentals of data extraction, including its sources, methods, and legal considerations, marketers can use information to shape their strategies and drive impactful results.

This guide has explored key techniques such as web scraping, API integration, and data validation, each with its own advantages. Selecting the right approach based on specific marketing goals is essential. Furthermore, automation emerges as a vital tool that streamlines data collection, enhances accuracy, and allows marketers to concentrate on analysis and strategy development. Effective monitoring and maintenance of extraction pipelines are also critical, ensuring that data remains reliable and actionable.

In summary, the importance of robust data extraction practices cannot be overstated. As businesses increasingly depend on data for competitive advantage, investing in these methods will not only refine marketing strategies but also cultivate a culture of informed decision-making. By embracing these best practices today, marketers will be well-equipped to navigate the complexities of the digital landscape tomorrow, ensuring they stay ahead in an ever-evolving market.

Frequently Asked Questions

What is data extraction?

Data extraction involves retrieving data from various sources, such as the web, databases, or APIs, and transforming it into a usable format for analysis.

What are the types of data sources?

Data sources include structured data from databases and unstructured data from web pages. Web scraping is increasingly used by businesses to extract data from the web.

What are common methods of data retrieval?

Common methods include web scraping, API calls, and manual collection. Web scraping is particularly valuable for extracting large volumes of data from the web efficiently.

What role do file formats play in data retrieval?

File formats like JSON, XML, and CSV are crucial for the data retrieval process, influencing how information is processed and utilised.

How do authentication and IP rotation affect data extraction?

Authentication and IP rotation are important for secure and efficient access to resources during information extraction. Services like Appstractor provide integrated rotation and persistent sessions for log-ins.

What legal considerations should be taken into account during data extraction?

Organisations must be aware of copyright issues, website terms of service, and privacy regulations like GDPR, especially when handling personal information.

What is web scraping, and what tools can be used for it?

Web scraping is an automated method for extracting data from websites using tools like Beautiful Soup and Scrapy, which allow for efficient gathering of large volumes of information.

How does API integration facilitate data extraction?

API integration simplifies the extraction process by providing structured information retrieval. Many organisations are expected to rely more on APIs for data collection and analysis in the coming years.

What is manual extraction, and when is it used?

Manual extraction involves copying and pasting information directly from web pages and is typically used for smaller datasets, though it can be time-consuming.

What is data mining, and how is it beneficial for marketers?

Data mining analyses large datasets to uncover patterns and insights, often using machine learning algorithms, making it valuable for marketers seeking actionable insights from complex information.

Why is compliance important in data extraction?

Compliance with legal and ethical guidelines is crucial when extracting information, as emphasised by industry experts, to ensure that data extraction workflows are designed responsibly.

List of Sources

Understand Data Extraction Basics

- What Are the Best AI Tools for Data Extraction in 2026? (https://consentia.com/what-are-the-best-ai-tools-for-data-extraction-in-2026)

- The Legality of Web Scraping in 2026: Legal Precedents, GDPR, 152-FZ, and Best Practices (https://mobileproxy.space/en/pages/the-legality-of-web-scraping-in-2026-legal-precedents-gdpr-152-fz-and-best-practices.html)

- Data Extraction Trends US Enterprises Should Watch in 2026 (https://linkedin.com/pulse/data-extraction-trends-us-enterprises-should-watch-2026-webdataguru-jbtsf)

- Is Web Scraping Legal in the UK? GDPR & DPA 2018 Guide (2026) (https://ukdataservices.co.uk/blog/articles/web-scraping-compliance-uk-guide)

Explore Common Data Extraction Techniques

- Guide to Google News API and Alternatives (https://scrapfly.io/blog/posts/guide-to-google-news-api-and-alternatives)

- Top 5 Best News API solutions in 2026 (https://scrapingbee.com/blog/top-best-news-apis-for-you)

- State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- Scraping Web Articles Using NewsAPI in Python (https://medium.com/@nathanlee_46385/scraping-web-articles-using-newsapi-in-python-a0e97fbab8ed)

Validate Data at the Source

- 30 Data Hygiene Statistics for 2026 | PGM Solutions (https://porchgroupmedia.com/blog/data-hygiene-statistics)

- Advertising Week (https://advertisingweek.com/why-data-integrity-matters-lessons-for-the-advertising-industry)

- How to Win in 2026: Why Data Validation Matters More Than Ever (https://linkedin.com/pulse/how-win-2026-why-data-validation-matters-more-than-ever-mctit-xifzf)

- New Study Proves Data Integrity Is Critical Growth Driver in Digital Advertising (https://martechseries.com/sales-marketing/programmatic-buying/new-study-proves-data-integrity-is-critical-growth-driver-in-digital-advertising)

- New Study Proves Data Integrity Is Critical Growth Driver in Digital Advertising (https://finance.yahoo.com/news/study-proves-data-integrity-critical-150000464.html)

Automate Your Data Extraction Process

- The Essential Guide to Automated News Extraction - AI-Driven Data Intelligence & Web Scraping Solutions (https://hirinfotech.com/the-essential-guide-to-automated-news-extraction)

- Automation Statistics 2026: Comprehensive Industry Data and Market Insights (https://thunderbit.com/blog/automation-statistics-industry-data-insights)

- News Data Extraction: Challenges and Solutions (https://groupbwt.com/blog/data-extraction-from-news-articles-key-challenges-tools-and-benefits)

- News Scraping: Best Practices for Accurate and Timely Data (https://thunderbit.com/blog/news-scraping-best-practices)

Monitor and Maintain Your Extraction Pipelines

- Setting up ‘production-issue alerts’ made my life easier. Here's how. (https://thoughtworks.com/en-us/insights/blog/setting-production-issue-alerts-has-made-my-life-easier-heres-how)

- Data Pipeline Efficiency Statistics (https://integrate.io/blog/data-pipeline-efficiency-statistics)

- Getting Started: Automatic Detection And Alerting For Data Incidents With Monte Carlo (https://montecarlodata.com/blog-automatic-detection-and-alerting-for-data-incidents-with-monte-carlo)