Introduction

Mastering the art of data extraction from websites has evolved into a strategic imperative for businesses seeking to excel in a data-driven landscape. As organisations confront the vast amounts of information available online, the capability to efficiently gather and analyse this data becomes crucial. It can reveal valuable insights into market dynamics, customer preferences, and operational efficiencies.

However, despite the potential for improved decision-making and competitive advantage, many businesses face challenges with the complexities of effective data extraction. To navigate these challenges, what best practices can organisations adopt to ensure successful data extraction and maximise its impact?

Understand the Importance of Data Extraction

Data extraction is the process by which businesses gather and use information from diverse sources, such as websites, databases, and APIs. In today’s information-centric environment, the ability to extract data from websites effectively can significantly influence decision-making and strategic planning.

-

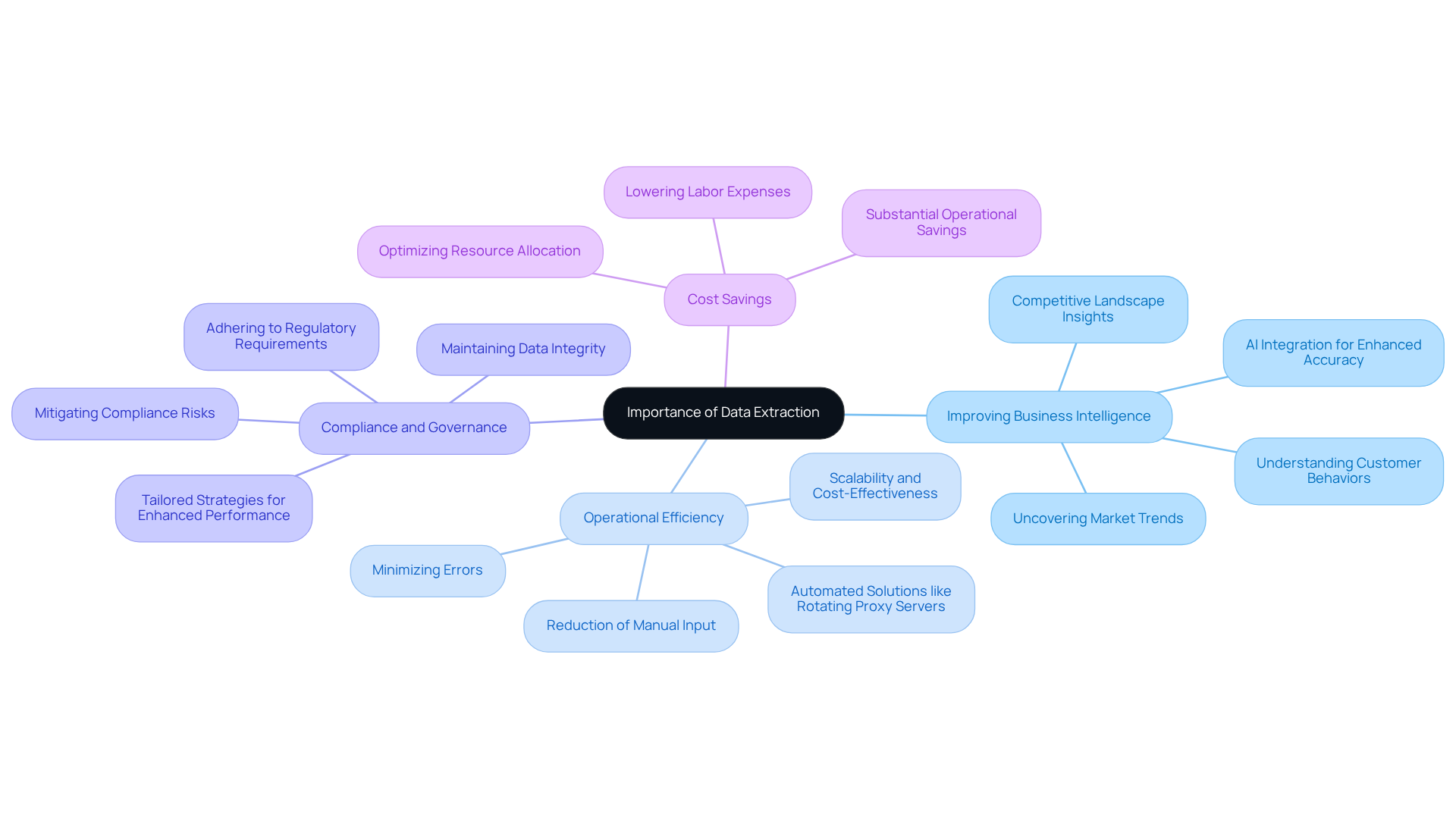

Improving Business Intelligence: Efficient information gathering enables organisations to uncover insights into market trends, customer behaviours, and competitive landscapes. This intelligence is vital for making informed decisions that drive growth and innovation. The integration of AI in information retrieval processes enhances this capability, facilitating dynamic interpretation of data and improving accuracy. Advanced analytics solutions from the company leverage AWS Cloud Management to streamline these processes, ensuring operational efficiency and growth.

- Operational Efficiency: Streamlined information retrieval processes greatly reduce manual input, minimising errors and enhancing workflow effectiveness. Automated solutions, such as Appstractor's Rotating Proxy Servers, can handle large volumes of data extraction from websites without increasing labour or infrastructure costs, allowing teams to focus on analysis rather than data collection. This scalability and cost-effectiveness are essential for resilient crawlers and continuous monitoring, supported by features like Rate Limiting and Outlier Detection.

- Compliance and Governance: Regular, well-documented practices for data extraction from websites are essential for maintaining data integrity and adhering to regulatory requirements. They allow companies to review how data is collected and to mitigate compliance risks. The comprehensive full-service information solutions from the company support this by providing tailored strategies and seamless integration for enhanced business performance, including Information Normalisation and Missing Value Imputation.

- Cost Savings: Automating information retrieval can result in significant cost reductions by lowering labour expenses and optimising resource allocation. Companies that adopt automated solutions, such as those offered by Appstractor, can efficiently process large volumes of data, leading to substantial operational savings.
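The normalisation, missing value imputation, and outlier detection steps named in the list above can be illustrated with a minimal sketch. It uses only the Python standard library; the function names, the median-based imputation, and the median-absolute-deviation rule with a threshold of 5 are illustrative assumptions, not a description of how any particular vendor implements these features.

```python
import unicodedata
from statistics import median


def normalise_text(value):
    """Normalise Unicode, collapse whitespace, and lower-case a text field."""
    value = unicodedata.normalize("NFKC", value)
    return " ".join(value.split()).casefold()


def impute_missing(values):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


def flag_outliers(values, k=5.0):
    """Flag values further than k median absolute deviations from the median.

    The threshold k is an assumption chosen for this example.
    """
    centre = median(values)
    mad = median(abs(v - centre) for v in values)
    if mad == 0:
        # No spread to measure against, so nothing is flagged.
        return [False] * len(values)
    return [abs(v - centre) > k * mad for v in values]


# Hypothetical scraped prices with one gap and one suspicious value.
prices = impute_missing([19.99, 21.50, None, 20.75, 199.00, 20.10])
flags = flag_outliers(prices)
```

A median-based rule is used here because a single extreme value distorts a mean and standard deviation far more than it distorts a median; other rules may suit other datasets better.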

As organisations increasingly recognise the strategic value of information, mastering techniques for retrieving insights becomes essential for leveraging it as a competitive asset. The Information Retrieval Software market is projected to grow at a CAGR of 14.3%, underscoring the rising acknowledgment of the strategic importance of information retrieval.
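To put the quoted growth rate in perspective, the sketch below compounds a 14.3% annual rate. The starting index of 100 and the five-year horizon are assumptions chosen purely for illustration; the article does not state a base market size or a forecast period.

```python
import math


def compound_growth(start_value, annual_rate, years):
    """Value after compounding annual_rate once per year for the given years."""
    return start_value * (1 + annual_rate) ** years


def doubling_time(annual_rate):
    """Years needed for a value to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + annual_rate)


# Hypothetical index of 100 compounded at 14.3% for five years.
index_after_five_years = compound_growth(100.0, 0.143, 5)
years_to_double = doubling_time(0.143)
```

Under these assumptions the index roughly doubles in a little over five years.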

Explore Effective Data Extraction Methods

There are several effective methods for extracting information, each suited to different types of content and business needs. The following techniques are among the most common:

- Web Scraping: Web scraping uses automated tools to gather large volumes of information from online sources. Tools such as Beautiful Soup and Scrapy facilitate this process. Additionally, Appstractor enhances this capability with sophisticated information-gathering solutions, allowing companies to derive valuable insights from native mobile applications, especially in hyper-local contexts.

- API Integration: Many platforms offer APIs that enable direct information extraction. A prime example is Appstractor's MobileHorizons API, which unlocks tailored information from third-party applications and provides organised content that integrates seamlessly into existing systems. This method is efficient and often yields structured information, simplifying integration into current workflows.

- Database Queries: For businesses with organised data stored in databases, SQL queries can be utilised to extract specific details quickly and effectively.

- Manual Extraction: Although less efficient, manual extraction may be necessary for small datasets or when automated methods are impractical. This process involves copying and pasting information directly from sources.

- Optical Character Recognition (OCR): To retrieve information from scanned documents or images, OCR technology can convert text into machine-readable formats, facilitating further analysis.
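As an illustration of the web scraping method in the list above, here is a minimal sketch that turns a fragment of HTML into structured records. It deliberately uses Python's built-in `html.parser` rather than Beautiful Soup or Scrapy so that it runs without extra dependencies. The sample markup, class names, and field names are hypothetical, and a real page would first need to be fetched over HTTP.

```python
from html.parser import HTMLParser

# Hypothetical markup standing in for a downloaded product listing page.
SAMPLE_HTML = """
<ul>
  <li class="product"><a href="/items/1">Kettle</a> <span class="price">24.99</span></li>
  <li class="product"><a href="/items/2">Toaster</a> <span class="price">39.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collect the name, URL, and price of each <li class="product">."""

    def __init__(self):
        super().__init__()
        self.products = []
        self._field = None  # field that the next text node should fill

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "li" and attrs.get("class") == "product":
            self.products.append({})
        elif tag == "a" and self.products:
            self.products[-1]["url"] = attrs.get("href")
            self._field = "name"
        elif tag == "span" and self.products and attrs.get("class") == "price":
            self._field = "price"

    def handle_data(self, data):
        text = data.strip()
        if self._field and text:
            is_price = self._field == "price"
            self.products[-1][self._field] = float(text) if is_price else text
            self._field = None

    def handle_endtag(self, tag):
        if tag in ("a", "span"):
            self._field = None


parser = ProductParser()
parser.feed(SAMPLE_HTML)
products = parser.products
```

Libraries such as Beautiful Soup offer more convenient selectors for the same task; the event-driven parser above simply makes each extraction step explicit.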

Selecting the appropriate method depends on the information source, volume, and specific needs of the enterprise. With Appstractor's solutions, including rotating proxies and full-service options, companies can achieve swift and clean data extraction from websites that is tailored to their requirements.
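The database query method listed above can be sketched with Python's built-in `sqlite3` module. The `orders` table, its columns, and the sample rows are hypothetical and exist only to make the example self-contained; a production system would connect to its own database instead of an in-memory one.

```python
import sqlite3

# In-memory database populated with hypothetical sample data.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer TEXT, region TEXT, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer, region, total) VALUES (?, ?, ?)",
    [
        ("Acme", "EU", 120.0),
        ("Birch", "EU", 45.5),
        ("Corvid", "US", 300.0),
        ("Dune", "EU", 87.25),
    ],
)


def orders_for_region(connection, region, min_total):
    """Return (customer, total) rows for one region, largest totals first."""
    query = (
        "SELECT customer, total FROM orders "
        "WHERE region = ? AND total >= ? "
        "ORDER BY total DESC"
    )
    # Placeholders keep user-supplied values out of the SQL text itself.
    return connection.execute(query, (region, min_total)).fetchall()


eu_orders = orders_for_region(conn, "EU", 50)
```

Parameterised queries are used so that filter values are passed separately from the SQL statement, which avoids injection problems when the values come from user input.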

Implement Best Practices for Successful Data Extraction

To achieve successful data extraction, businesses should implement the following best practices:

- Define Clear Objectives: Establishing precise aims for information gathering is essential. Clearly specifying the particular information needed for data extraction from websites and its intended application not only directs the choice of the suitable retrieval method but also improves the overall efficiency of the process. Research indicates that organisations prioritising clear objectives in their information extraction strategies see a marked improvement in quality and relevance.

- Validate Information Sources: Ensuring the reliability and currency of information sources is paramount. Businesses must validate their sources to avoid extracting inaccurate or outdated information. For example, organisations that regularly confirm their information sources report significantly higher accuracy rates in their extracted sets, which directly influences decision-making and operational efficiency.

- Automate Where Possible: Utilising automation tools can greatly simplify the retrieval process. Appstractor's advanced information mining solutions automate both data extraction from websites and the processing of structured web content, minimising human error and enhancing efficiency. This enables the processing of larger datasets in a fraction of the time. Organisations that implement automated information retrieval solutions often observe a decrease in operational expenses and an enhancement in processing speed. As mentioned by Neha Gunnoo, 'Automation is essential to scaling information gathering efforts without compromising quality.'

- Monitor and Audit: Ongoing observation of the retrieval process and frequent assessments of the information are essential for ensuring accuracy. In practice, this involves hashing rows, removing duplicates, normalising encodings, and conducting schema validation prior to delivery, ensuring the integrity of the extracted information. Companies that incorporate robust auditing practices report enhanced trust in their data-driven decisions.

- Employ Rotating Proxies and Adaptable Service Choices: Appstractor provides rotating proxy servers and adaptable service choices, including Full Service and Hybrid models, to improve information gathering capabilities. These options enable businesses to expand their information gathering efforts efficiently while ensuring adherence to legal and ethical standards.

- Honour Legal and Ethical Standards: Following the terms of service of the websites being scraped and adhering to privacy regulations is essential. Organisations must ensure that their information retrieval practices align with legal standards to avoid potential penalties and maintain ethical integrity.
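The audit steps described under Monitor and Audit (hashing rows, removing duplicates, normalising encodings, and validating the schema) can be sketched as a small pre-delivery check. The schema, field names, and sample rows below are hypothetical, and the choice of SHA-256 and Unicode NFC normalisation is an assumption made for this example rather than a prescribed standard.

```python
import hashlib
import json
import unicodedata

# Hypothetical schema: every row needs exactly these fields and types.
SCHEMA = {"name": str, "price": float}


def normalise(row):
    """Apply Unicode NFC normalisation and trim whitespace on text fields."""
    return {
        key: unicodedata.normalize("NFC", value).strip()
        if isinstance(value, str)
        else value
        for key, value in row.items()
    }


def row_hash(row):
    """Stable SHA-256 fingerprint of a row, independent of key order."""
    payload = json.dumps(row, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def is_valid(row):
    """Check that the row has exactly the schema's fields with the right types."""
    return set(row) == set(SCHEMA) and all(
        isinstance(row[key], expected) for key, expected in SCHEMA.items()
    )


def audit(rows):
    """Return (clean_rows, rejected_rows) after normalising and deduplicating."""
    seen, clean, rejected = set(), [], []
    for raw in rows:
        row = normalise(raw)
        if not is_valid(row):
            rejected.append(raw)
            continue
        digest = row_hash(row)
        if digest in seen:
            continue  # exact duplicate after normalisation
        seen.add(digest)
        clean.append(row)
    return clean, rejected


sample_rows = [
    {"name": "Caf\u00e9", "price": 3.5},
    {"name": "Cafe\u0301 ", "price": 3.5},  # same text, different encoding
    {"name": "Tea", "price": "n/a"},  # fails schema validation
]
clean, rejected = audit(sample_rows)
```

Normalising before hashing matters: the first two sample rows differ byte for byte but represent the same record, so they only collapse into one once their encodings agree.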

By following these best practices and utilising Appstractor's efficient web information gathering solutions, businesses can significantly enhance the quality and reliability of their information gathering efforts, positioning themselves for success in a knowledge-driven landscape.
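One concrete, automatable part of honouring a site's wishes is checking its robots.txt file before fetching a page. The sketch below uses Python's built-in `urllib.robotparser`; the robots.txt content, the bot name, and the URLs are hypothetical. It covers only robots.txt rules and is not a substitute for reviewing a site's terms of service or applicable privacy regulations.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would download the
# file from the target site before parsing it.
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Crawl-delay: 5
"""


def build_policy(robots_txt):
    """Parse robots.txt text into a reusable policy object."""
    policy = RobotFileParser()
    policy.parse(robots_txt.splitlines())
    return policy


policy = build_policy(ROBOTS_TXT)

# Ask whether a hypothetical crawler may fetch each URL.
allowed = policy.can_fetch("example-bot", "https://example.com/products/1")
blocked = policy.can_fetch("example-bot", "https://example.com/account/settings")

# Seconds the site asks crawlers to wait between requests, if stated.
delay = policy.crawl_delay("example-bot")
```

A crawler could consult `can_fetch` before every request and sleep for `delay` seconds between requests to stay within the limits the site publishes.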

Utilize Advanced Tools and Technologies for Data Extraction

Incorporating advanced tools and technologies can significantly enhance the process of data extraction from websites. Below are some recommended tools:

- Scrapy: This open-source web crawling framework is designed for efficient information gathering from websites. With support for various data formats and high customisability, Scrapy is favoured by developers for building tailored data pipelines. As of 2026, it continues to evolve, offering enhanced features that simplify the retrieval process.

- Octoparse: A user-friendly web scraping tool that requires no coding skills, Octoparse features a visual interface for setting up extraction tasks. It excels at managing dynamic content, making it a versatile option for businesses seeking to automate information collection without technical barriers.

- ParseHub: This tool is particularly effective for scraping information from websites utilising JavaScript and AJAX. Its point-and-click interface streamlines the setup procedure, enabling users to retrieve information swiftly and effectively, even from intricate sites.

- Import.io: A powerful information extraction platform, Import.io enables users to convert web pages into structured information seamlessly. With API access for integration with other applications, it is ideal for businesses seeking robust information solutions.

- DataMiner: As a browser extension, DataMiner allows users to scrape data directly from their web browsers. It is ideal for quick extractions, offering an easy choice for those who require prompt results without complicated setup.

Additionally, Appstractor's MobileHorizons API stands out as a unique solution for unlocking hyper-local insights from native mobile apps. This API enables companies to retrieve and examine tailored information from external applications, improving their information retrieval capabilities beyond conventional web scraping tools. For instance, while tools like Scrapy and Octoparse focus on web information, the MobileHorizons API accesses app-specific data, offering insights that are often overlooked. By utilising these advanced tools, including Appstractor's offerings, businesses can significantly enhance their website data extraction processes and collect precise, relevant insights efficiently. The adoption of such technologies is crucial as organisations face increasing demands for high-quality data in a rapidly evolving digital landscape.

Conclusion

Mastering the art of data extraction from websites is essential for businesses aiming to leverage information as a strategic asset. Efficiently gathering and analysing data drives informed decision-making and enhances overall operational effectiveness. As organisations navigate an increasingly data-driven landscape, implementing best practices in data extraction is crucial for maintaining a competitive edge.

Key insights from this article highlight the importance of:

- Automating data retrieval processes

- Defining clear objectives

- Ensuring the reliability of information sources

By employing advanced tools and technologies, such as web scraping frameworks and APIs, businesses can streamline their data extraction efforts. This results in significant cost savings and improved accuracy. Additionally, adherence to legal and ethical standards fortifies the integrity of the data collected, fostering trust in data-driven strategies.

In a landscape where data is abundant yet often unstructured, the ability to extract meaningful insights is a game-changer. Organisations are encouraged to adopt these best practices and leverage innovative solutions to enhance their data extraction capabilities. By doing so, they position themselves for success and unlock the full potential of their information resources, paving the way for informed strategies and sustainable growth.

Frequently Asked Questions

What is the importance of data extraction for businesses?

Data extraction is crucial for businesses as it allows them to gather and utilise information from various sources, influencing decision-making and strategic planning.

How does data extraction improve business intelligence?

Efficient information gathering through data extraction helps organisations uncover insights into market trends, customer behaviours, and competitive landscapes, which are vital for informed decision-making and driving growth.

What role does AI play in data extraction processes?

The integration of AI in information retrieval enhances the capability to dynamically interpret data and improve accuracy, facilitating better decision-making.

How does streamlined information retrieval contribute to operational efficiency?

Streamlined processes reduce manual input, minimise errors, and enhance workflow effectiveness, allowing teams to focus on analysis rather than data collection.

What automated solutions are mentioned for data extraction?

Appstractor's Rotating Proxy Servers are mentioned as automated solutions that can handle large volumes of data extraction from websites without increasing labour or infrastructure costs.

Why is compliance and governance important in data extraction?

Regular data extraction practices are essential for maintaining integrity and adhering to regulatory requirements, helping companies mitigate compliance risks.

How can automated data retrieval lead to cost savings?

Automating information retrieval can significantly reduce labour expenses and optimise resource allocation, resulting in substantial operational savings for companies.

What is the projected growth of the Information Retrieval Software market?

The Information Retrieval Software market is projected to grow at a CAGR of 14.3%, indicating the increasing recognition of the strategic importance of information retrieval.

List of Sources

- Understand the Importance of Data Extraction

  - Data Extraction Service Growth Trends with a projected 10.2% 2026 to 2033 (https://linkedin.com/pulse/data-extraction-service-growth-trends-projected-102-2026-s4b1e)

  - Data Extraction Trends US Enterprises Should Watch in 2026 (https://linkedin.com/pulse/data-extraction-trends-us-enterprises-should-watch-2026-webdataguru-jbtsf)

  - Why Automated Data Extraction Is Essential in 2026: Top Benefits Explained – Data Science Society (https://datasciencesociety.net/benefits-of-automated-data-extraction)

  - Data Extraction Software Market Strategies: Trends and Outlook 2026-2034 (https://datainsightsmarket.com/reports/data-extraction-software-1371732)

- Explore Effective Data Extraction Methods

  - Best Data Extraction Tools (https://rossum.ai/blog/best-data-extraction-tools)

  - Data Extraction Software Market Strategies: Trends and Outlook 2026-2034 (https://datainsightsmarket.com/reports/data-extraction-software-1371732)

  - Best Data Extraction Tools of 2026: Top 11+ Solutions (https://brightdata.com/blog/web-data/best-data-extraction-tools)

  - Top 11 Data Extraction Tools in 2026 - IT Supply Chain (https://itsupplychain.com/top-11-data-extraction-tools-in-2026)

  - Data Extraction Trends US Enterprises Should Watch in 2026 (https://linkedin.com/pulse/data-extraction-trends-us-enterprises-should-watch-2026-webdataguru-jbtsf)

- Implement Best Practices for Successful Data Extraction

  - Why Automated Data Extraction Is Essential in 2026: Top Benefits Explained – Data Science Society (https://datasciencesociety.net/benefits-of-automated-data-extraction)

  - Guide to Document Data Extraction Using AI in 2026 (https://parsio.io/blog/document-data-extraction-using-ai)

  - Data Extraction Trends US Enterprises Should Watch in 2026 (https://linkedin.com/pulse/data-extraction-trends-us-enterprises-should-watch-2026-webdataguru-jbtsf)

  - Legal Considerations For Data Extraction APIs (2026) | Parseur® (https://parseur.com/blog/data-extraction-api-legal)

  - Data Transformation Challenge Statistics: 50 Statistics Every Technology Leader Should Know in 2026 (https://integrate.io/blog/data-transformation-challenge-statistics)

- Utilize Advanced Tools and Technologies for Data Extraction

  - Best Data Extraction Tools of 2026: Top 11+ Solutions (https://brightdata.com/blog/web-data/best-data-extraction-tools)

  - Top Data Extraction Tools to Use in 2026 (Full Comparison) (https://capsolver.com/blog/AI/best-data-extraction-tools)

  - Top 11 Data Extraction Tools in 2026 - IT Supply Chain (https://itsupplychain.com/top-11-data-extraction-tools-in-2026)

  - Top Data Extraction Tools 2026 | PromptCloud (https://promptcloud.com/blog/top-data-extraction-tools-2026-a-complete-guide)

  - State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)