Introduction

Web scraping has emerged as a powerful tool for businesses aiming to leverage the vast amounts of data available online. As organisations increasingly depend on data-driven insights for strategic decision-making, the ability to extract and analyse information from websites becomes essential.

This guide provides a comprehensive, step-by-step approach to mastering basic web scraping with Python. Readers will gain the skills necessary to navigate the complexities of data extraction.

However, it is crucial to consider the ethical implications and technical challenges associated with this growing practice. How can one ensure responsible and effective data collection? This guide will address these concerns while equipping you with the knowledge to harness web scraping effectively.

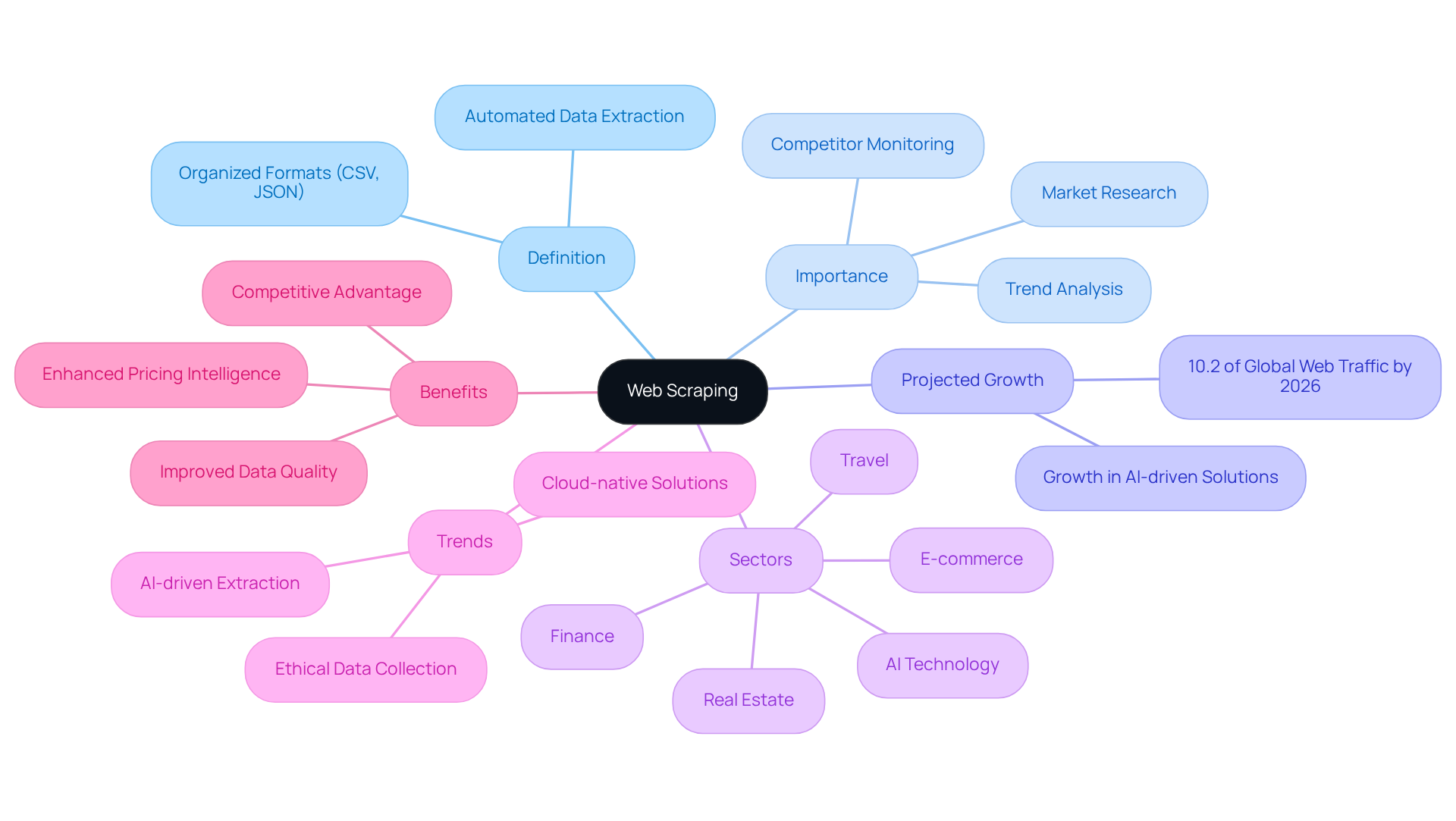

Understand Web Scraping: Definition and Importance

Web scraping, also known as web harvesting, is the automated process of extracting information from websites, using software tools to gather data and convert it into organised formats such as CSV or JSON. This technique matters to businesses because it turns extensive online data into usable insights for market research, competitor monitoring, and trend analysis.

In 2026, web extraction is projected to account for approximately 10.2% of all global web traffic, highlighting its increasing significance in data-driven decision-making. Various sectors, including e-commerce, finance, and travel, leverage web data extraction to enhance pricing intelligence and track market trends, underscoring its vital role in strategic planning.

Furthermore, as organisations adopt ethical information-gathering practices, the focus on responsible extraction is becoming increasingly important. Recent trends indicate a movement towards AI-driven and cloud-native extraction solutions, which streamline operations and improve information quality.
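One common starting point for responsible extraction is to check a site's robots.txt rules before requesting a page. The sketch below uses Python's standard-library `urllib.robotparser` for this. The robots.txt content and the `my-scraper` user agent name are assumptions made up for illustration, and the rules are parsed from an inline string so that the example runs without network access.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the example needs no network access.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

USER_AGENT = "my-scraper"  # hypothetical user agent name


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# Ask whether each URL may be fetched under the parsed rules.
for url in ("http://example.com/products", "http://example.com/private/data"):
    allowed = parser.can_fetch(USER_AGENT, url)
    print(f"{url}: {'allowed' if allowed else 'disallowed'}")

# The delay (in seconds) the site asks crawlers to wait between requests, if any.
print("Crawl delay (seconds):", parser.crawl_delay(USER_AGENT))
```

Against a live site, one would typically call `parser.set_url(...)` with the site's robots.txt address followed by `parser.read()` instead of parsing an inline string. A robots.txt check is only one part of responsible scraping; a site's terms of service and applicable law still apply.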

By mastering web data extraction, businesses can significantly bolster their analytical capabilities and maintain a competitive advantage in their respective markets. With Appstractor's enterprise-class private proxy servers, you can ensure secure and reliable information extraction, facilitating efficient data collection and delivery solutions tailored to your needs. This includes flexible formats and endpoints such as JSON, CSV, Parquet, S3, GCS, BigQuery, and Direct DB Insert.

Set Up Your Environment: Tools and Libraries for Web Scraping

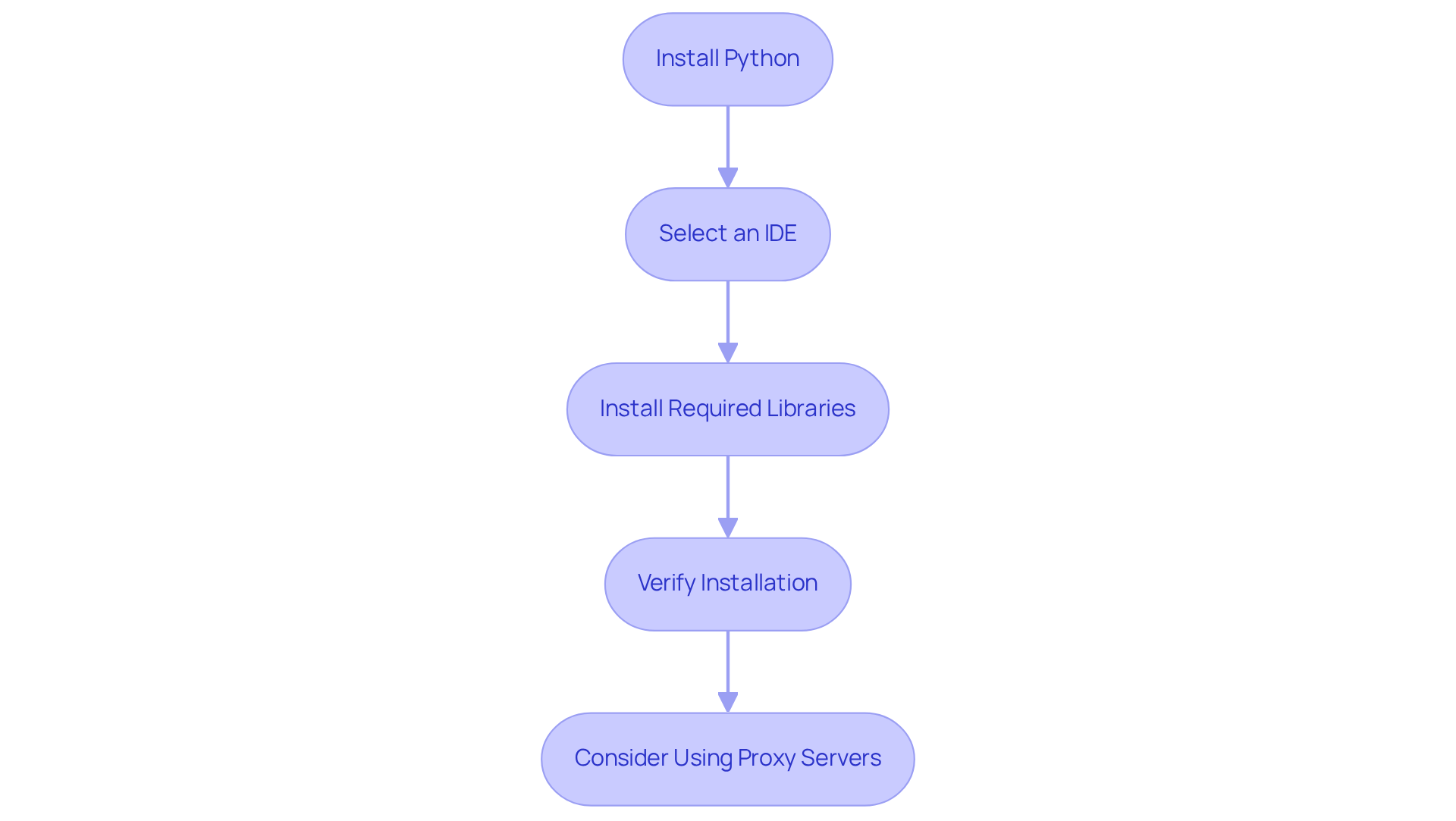

To embark on your web scraping journey with Python, follow these essential steps to set up your environment:

- Install Python: Begin by downloading and installing Python from the official Python website. Ensure you have the latest version compatible with your operating system.

- Select an Integrated Development Environment (IDE): Choose a suitable IDE for writing and executing your Python scripts. Popular options include PyCharm, Visual Studio Code, and Jupyter Notebook, each offering unique features for different coding preferences.

- Install Required Libraries: Open your command line interface and run the following command to install the necessary libraries:

  ```shell
  pip install requests beautifulsoup4 pandas
  ```

  - Requests: Simplifies making HTTP requests to retrieve web pages.

  - BeautifulSoup: Essential for parsing HTML and extracting pertinent information.

  - Pandas: A powerful tool for manipulating and storing information, making it easier to handle the content you scrape.

- Verify Installation: Launch your IDE and run a simple script to confirm that the libraries are installed correctly:

  ```python
  import requests
  from bs4 import BeautifulSoup
  import pandas as pd

  print('Libraries installed successfully!')
  ```
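If the verification script fails, it can help to see which package is missing and which versions are present. The following is a minimal sketch using the standard-library `importlib.metadata` module (Python 3.8 or later). The helper name `installed_versions` is an assumption introduced for illustration; the package names are the ones passed to `pip` above.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages):
    """Return a dict mapping each package name to its version, or None if missing."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


# Distribution names as used with pip (note: beautifulsoup4, not bs4).
REQUIRED = ["requests", "beautifulsoup4", "pandas"]

for name, ver in installed_versions(REQUIRED).items():
    print(f"{name}: {ver or 'NOT INSTALLED'}")
```

Any package reported as not installed can be added by running the `pip` command again in the same Python environment that your IDE uses.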

By completing these steps, you will establish a fully functional environment, primed for effective web scraping. To enhance your web extraction capabilities, consider utilising Appstractor's Rotating Proxy Servers for self-serve IPs or their Full Service option for turnkey information delivery. These solutions streamline the information extraction process, allowing you to focus on analysis rather than manual data collection.

Execute Your First Web Scraping Project: Step-by-Step Instructions

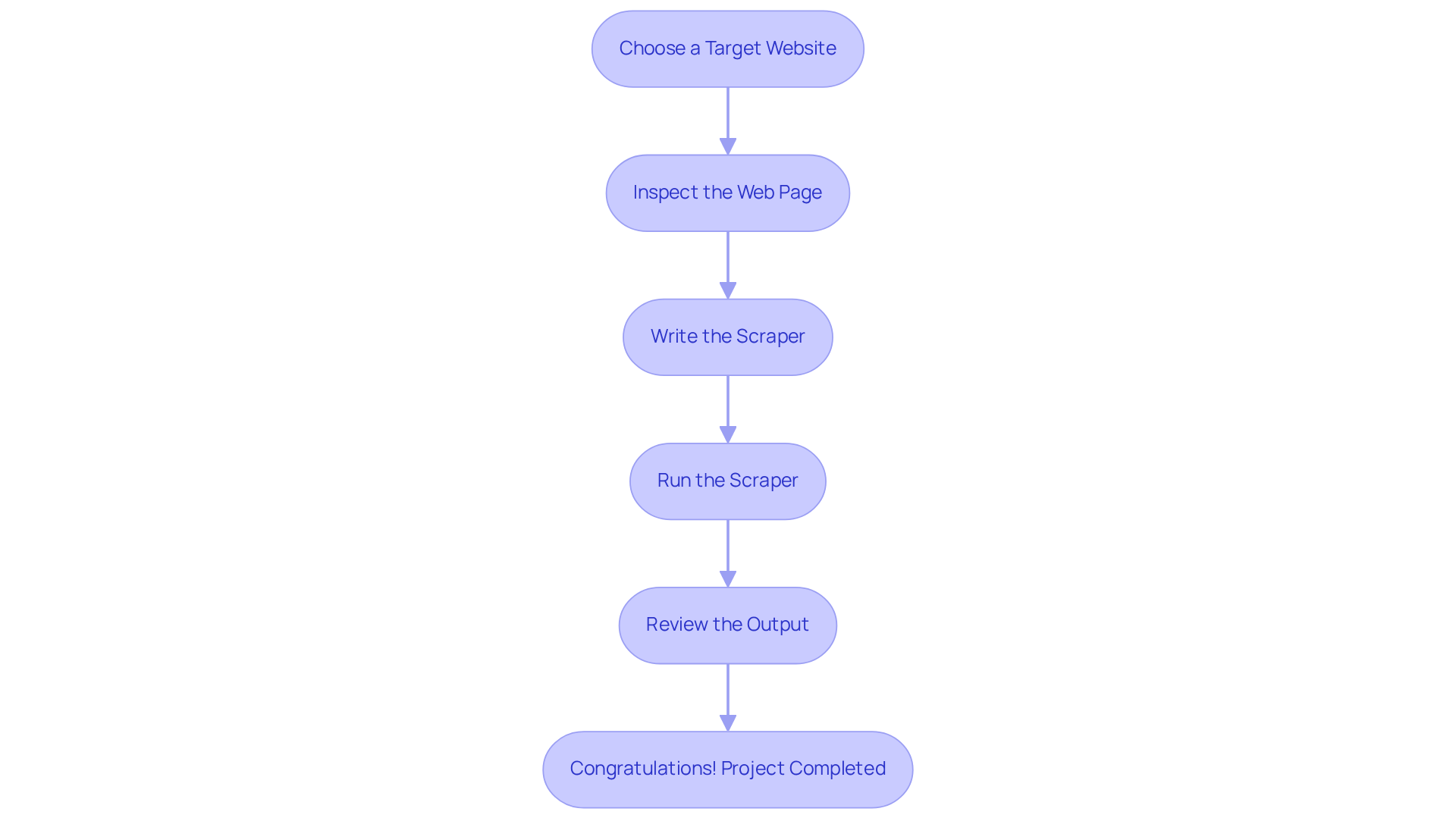

To put basic web scraping with Python into practice, your first project extracts product data from a fictional e-commerce site. This exercise lays the groundwork for use cases such as price monitoring and competitive tracking, which Appstractor's data scraping solutions are designed to support.

- Choose a Target Website: For this demonstration, we will use a fictional e-commerce site, `http://example.com/products`. Because the site is fictional, substitute the URL and class names of a page you are permitted to scrape.

- Inspect the Web Page: Open the website in your browser, right-click on the listings, and select 'Inspect' to examine the HTML structure. Identify the HTML tags that contain the item name, price, and description.

- Write the Scraper: Create a new Python file (e.g., `scraper.py`) and input the following code:

  ```python
  import requests
  from bs4 import BeautifulSoup

  url = 'http://example.com/products'

  # Fetch the page; fail fast on network stalls and HTTP error codes
  response = requests.get(url, timeout=10)
  response.raise_for_status()

  # Parse the returned HTML
  soup = BeautifulSoup(response.text, 'html.parser')

  # Collect one dictionary per product block
  products = []
  for item in soup.find_all('div', class_='product-item'):
      name = item.find('h2', class_='product-name').get_text(strip=True)
      price = item.find('span', class_='product-price').get_text(strip=True)
      description = item.find('p', class_='product-description').get_text(strip=True)
      products.append({'name': name, 'price': price, 'description': description})

  print(products)
  ```

- Run the Scraper: Execute your script in the command line:

  ```shell
  python scraper.py
  ```

- Review the Output: Check the console for the collected item data. When the script is pointed at a real page that uses these class names, you should see a list of dictionaries containing the product names, prices, and descriptions.
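Since the target site in the steps above is fictional, the parsing logic can also be tried offline against a small HTML string. The sketch below assumes markup that mirrors the class names used in the scraper (`product-item`, `product-name`, `product-price`, `product-description`). The sample products and the helper names `text_or_none` and `parse_products` are made up for illustration. Unlike the basic script, it returns `None` for a missing field rather than raising an error.

```python
from bs4 import BeautifulSoup

# Hypothetical markup that mirrors the class names used in the scraper.
SAMPLE_HTML = """
<div class="product-item">
  <h2 class="product-name">Blue Mug</h2>
  <span class="product-price">$12.50</span>
  <p class="product-description">Ceramic, 350 ml.</p>
</div>
<div class="product-item">
  <h2 class="product-name">Red Mug</h2>
  <span class="product-price">$11.00</span>
</div>
"""


def text_or_none(parent, tag, css_class):
    """Return the stripped text of the first matching child, or None if absent."""
    element = parent.find(tag, class_=css_class)
    return element.get_text(strip=True) if element else None


def parse_products(html):
    """Extract name, price and description from each product-item block."""
    soup = BeautifulSoup(html, "html.parser")
    return [
        {
            "name": text_or_none(item, "h2", "product-name"),
            "price": text_or_none(item, "span", "product-price"),
            "description": text_or_none(item, "p", "product-description"),
        }
        for item in soup.find_all("div", class_="product-item")
    ]


products = parse_products(SAMPLE_HTML)
for product in products:
    print(product)
```

Separating parsing from fetching in this way makes the selectors easy to test before any request is sent to a live site.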

Congratulations! You have successfully completed your first web extraction project. This foundational experience with basic web scraping in Python will serve you well as you delve into more complex extraction tasks and techniques. With the global e-commerce market projected to reach $6.88 trillion, web scraping skills are likely to become increasingly valuable, especially for tasks such as seasonal demand analysis and ensuring MAP compliance.
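As a possible next step beyond the first script, the request itself can be made more robust. The sketch below wraps `requests` in a small helper that sets a timeout, identifies the scraper with a User-Agent header, and retries after transient failures with a growing pause. The function name `fetch_html`, the User-Agent string, and the retry settings are assumptions chosen for illustration, not values recommended by any particular site.

```python
import time

import requests


def fetch_html(url, session=None, retries=3, delay=2.0, timeout=10):
    """Fetch a page and return its HTML, retrying on request failures."""
    session = session or requests.Session()
    # Hypothetical User-Agent; use one that identifies your own scraper.
    headers = {"User-Agent": "my-scraper/0.1 (contact@example.com)"}
    last_error = None
    for attempt in range(1, retries + 1):
        try:
            response = session.get(url, headers=headers, timeout=timeout)
            response.raise_for_status()  # treat HTTP 4xx/5xx as failures
            return response.text
        except requests.RequestException as error:
            last_error = error
            if attempt < retries:
                # Wait a little longer after each failed attempt.
                time.sleep(delay * attempt)
    raise RuntimeError(f"Failed to fetch {url} after {retries} attempts") from last_error
```

A call such as `html = fetch_html('http://example.com/products')` would then replace the bare `requests.get(url)` line, with the returned HTML passed to BeautifulSoup as before.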

Store and Manage Your Scraped Data: Best Practices

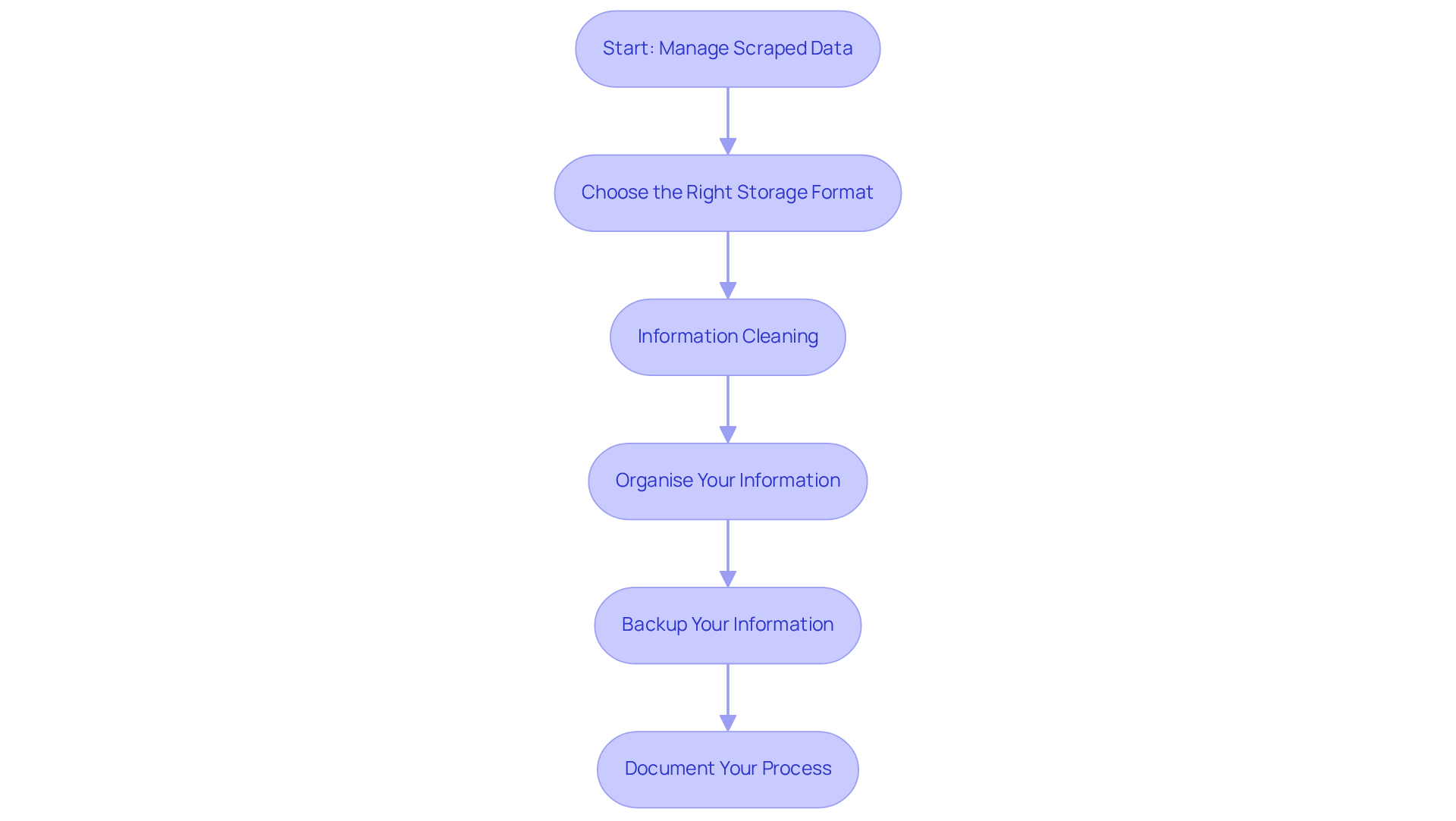

Once you have collected information, effective storage and management are crucial for maximising its value. Here are some best practices to consider:

- Choose the Right Storage Format: Select a format that aligns with your data needs:

  - CSV: Best for simple datasets, offering easy readability.

  - JSON: Perfect for hierarchical structures, enabling nested information.

  - Databases: Utilise SQL databases (e.g., MySQL, PostgreSQL) for structured information or NoSQL options (e.g., MongoDB) for unstructured content.

- Information Cleaning: Before storage, tidy your information by removing duplicates, handling missing values, and enforcing consistent formats. Libraries such as Pandas are well suited to these cleaning tasks.

- Organise Your Information: Structure your information logically to enhance accessibility. For instance, create distinct tables for different entities (e.g., products, categories) within a database to facilitate efficient querying and analysis.

- Backup Your Information: Implement regular backup procedures to protect against loss of information. Consider using cloud storage solutions or external drives to ensure redundancy and reliability.

- Document Your Process: Maintain comprehensive documentation of your scraping procedure, including target URLs, information fields, and any transformations applied. This record will serve as a valuable reference for future projects and audits.
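The cleaning and storage-format points in the list above can be sketched with Pandas as follows. The records are hypothetical stand-ins for scraper output, and the output file names `products.csv` and `products.json` are assumptions. The example trims whitespace, converts price strings to numbers, removes duplicates, fills missing descriptions, and then writes the result in both formats.

```python
import pandas as pd

# Hypothetical scraped records, including a duplicate and a missing description.
records = [
    {"name": "Blue Mug", "price": "$12.50", "description": "Ceramic, 350 ml."},
    {"name": "Blue Mug", "price": "$12.50", "description": "Ceramic, 350 ml."},
    {"name": " Red Mug ", "price": "$11.00", "description": None},
]

df = pd.DataFrame(records)

# Consistency: trim whitespace and convert price strings to numbers.
df["name"] = df["name"].str.strip()
df["price"] = df["price"].str.replace("$", "", regex=False).astype(float)

# Remove exact duplicates and fill missing descriptions with an empty string.
df = df.drop_duplicates().reset_index(drop=True)
df["description"] = df["description"].fillna("")

# Flat output as CSV, record-oriented output as JSON.
df.to_csv("products.csv", index=False)
df.to_json("products.json", orient="records", indent=2)

print(df)
```

Whether an empty string is the right replacement for a missing description depends on the downstream analysis; dropping the row or leaving the value empty are equally valid choices in other contexts.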

By adhering to these best practices, you can ensure that your scraped data is organised, clean, and primed for insightful analysis, ultimately enhancing your data management strategies.
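To illustrate the suggestion of distinct tables for products and categories, here is a minimal sketch using Python's built-in `sqlite3` module. SQLite is used here only because it needs no server; it stands in for the MySQL or PostgreSQL databases mentioned above. The table layout and sample rows are assumptions made up for illustration.

```python
import sqlite3

# In-memory database for illustration; pass a file path to persist the data.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE categories (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
CREATE TABLE products (
    id          INTEGER PRIMARY KEY,
    name        TEXT NOT NULL,
    price       REAL,
    category_id INTEGER REFERENCES categories(id)
);
""")

# Hypothetical rows standing in for cleaned scraper output.
conn.execute("INSERT INTO categories (id, name) VALUES (?, ?)", (1, "Kitchen"))
conn.executemany(
    "INSERT INTO products (name, price, category_id) VALUES (?, ?, ?)",
    [("Blue Mug", 12.50, 1), ("Red Mug", 11.00, 1)],
)
conn.commit()

# Join the two tables to list each product with its category, cheapest first.
rows = conn.execute(
    """
    SELECT p.name, p.price, c.name
    FROM products AS p
    JOIN categories AS c ON c.id = p.category_id
    ORDER BY p.price
    """
).fetchall()
print(rows)
```

Keeping categories in their own table means a category name is stored once and referenced by id, which keeps queries and later corrections simple.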

Conclusion

Mastering the art of web scraping with Python opens up a world of possibilities for businesses and individuals alike. By effectively extracting and managing online data, organisations can gain critical insights that drive informed decision-making and enhance competitive strategies. This guide has provided a comprehensive roadmap, from understanding the fundamentals of web scraping to executing your first project and managing the data you collect.

Key insights highlighted in this article include:

- The importance of selecting the right tools and libraries, such as Requests and BeautifulSoup, to facilitate data extraction.

- The significance of ethical practices in web scraping and the need for proper data storage and management.

By implementing best practices, such as choosing suitable storage formats and maintaining clean datasets, users can maximise the value derived from their web scraping efforts.

As the digital landscape continues to evolve, the ability to harness web data will become increasingly crucial. Embracing web scraping not only equips businesses with the tools to monitor market trends and competitor strategies but also fosters innovation in data-driven solutions. Therefore, taking the first steps into web scraping today can pave the way for future success in an increasingly data-centric world.

Frequently Asked Questions

What is web scraping?

Web scraping, also known as web harvesting, is the automated process of extracting information from websites using software tools to gather data and convert it into organised formats such as CSV or JSON.

Why is web scraping important for businesses?

Web scraping provides valuable insights from extensive online data, facilitating effective market research, competitor monitoring, and trend analysis, which are essential for data-driven decision-making.

What is the projected impact of web extraction on global web traffic by 2026?

By 2026, web extraction is projected to account for approximately 10.2% of all global web traffic, indicating its growing significance.

Which sectors commonly leverage web data extraction?

Sectors such as e-commerce, finance, and travel utilise web data extraction to enhance pricing intelligence and track market trends.

What are the recent trends in web data extraction practices?

Recent trends indicate a movement towards ethical information-gathering practices, AI-driven solutions, and cloud-native extraction methods that streamline operations and improve information quality.

How can mastering web data extraction benefit businesses?

Mastering web data extraction can significantly enhance analytical capabilities and help businesses maintain a competitive advantage in their markets.

What solutions does Appstractor offer for web data extraction?

Appstractor provides enterprise-class private proxy servers for secure and reliable information extraction, offering efficient data collection and delivery solutions in flexible formats such as JSON, CSV, Parquet, S3, GCS, BigQuery, and Direct DB Insert.
