Introduction

In a data-driven landscape, extracting valuable insights from the web is crucial for digital marketers. By mastering web scraping best practices, professionals can access a wealth of information that informs strategy and strengthens competitive advantage. However, the ethical and technical complexities of the process pose a challenge.

How can marketers scrape data responsibly while maximising its utility? This article explores ten essential web scraping best practices that streamline data collection while upholding integrity and compliance.

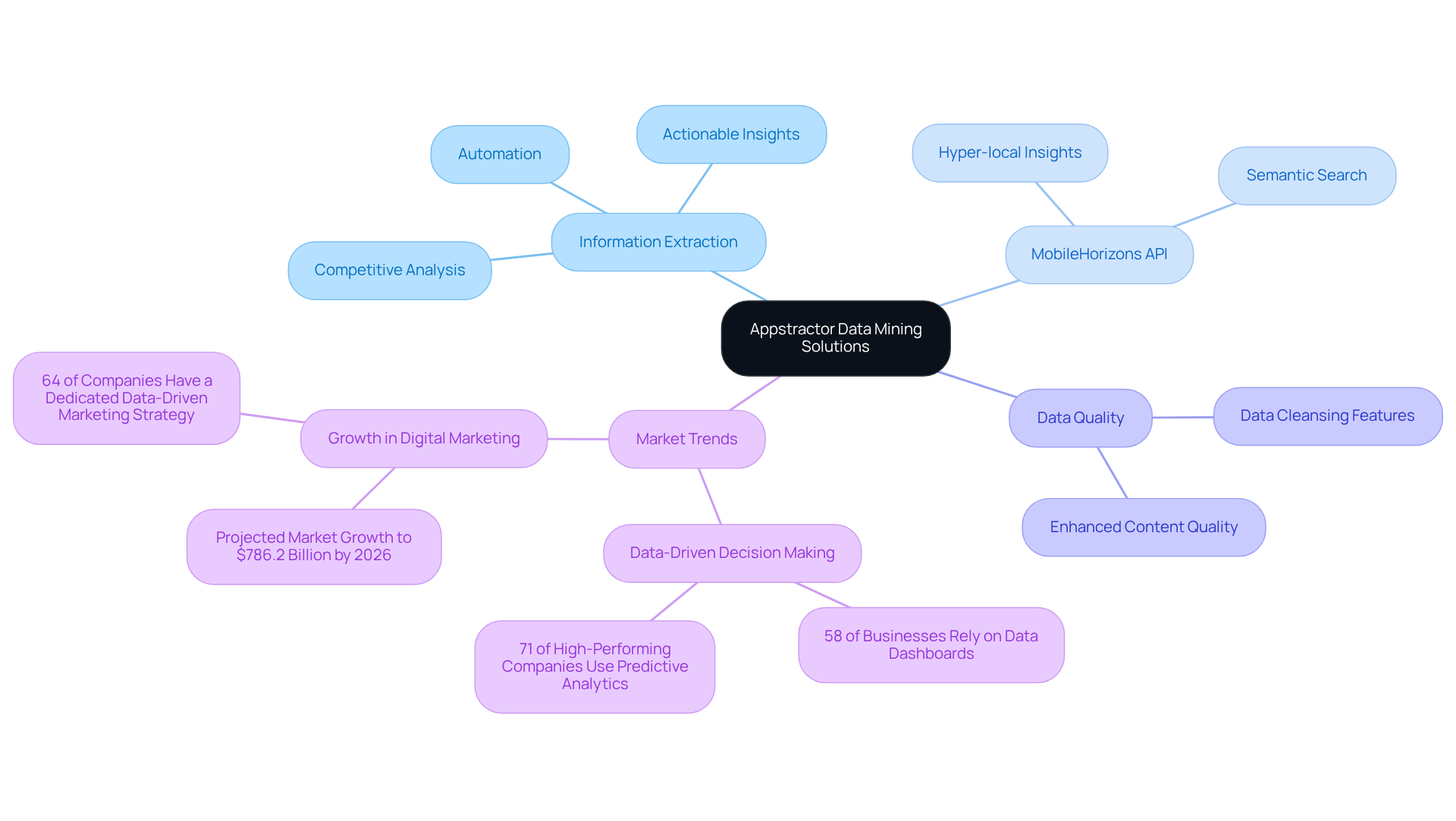

Leverage Appstractor for Advanced Data Mining Solutions

Appstractor provides advanced information mining solutions that help digital marketers extract actionable insights from extensive datasets. By using the platform's services, professionals can automate the information extraction process and collect clean, relevant data efficiently. The platform's rotating proxies and data cleansing features improve the quality of the gathered content, which makes it well suited to market research and competitive analysis.

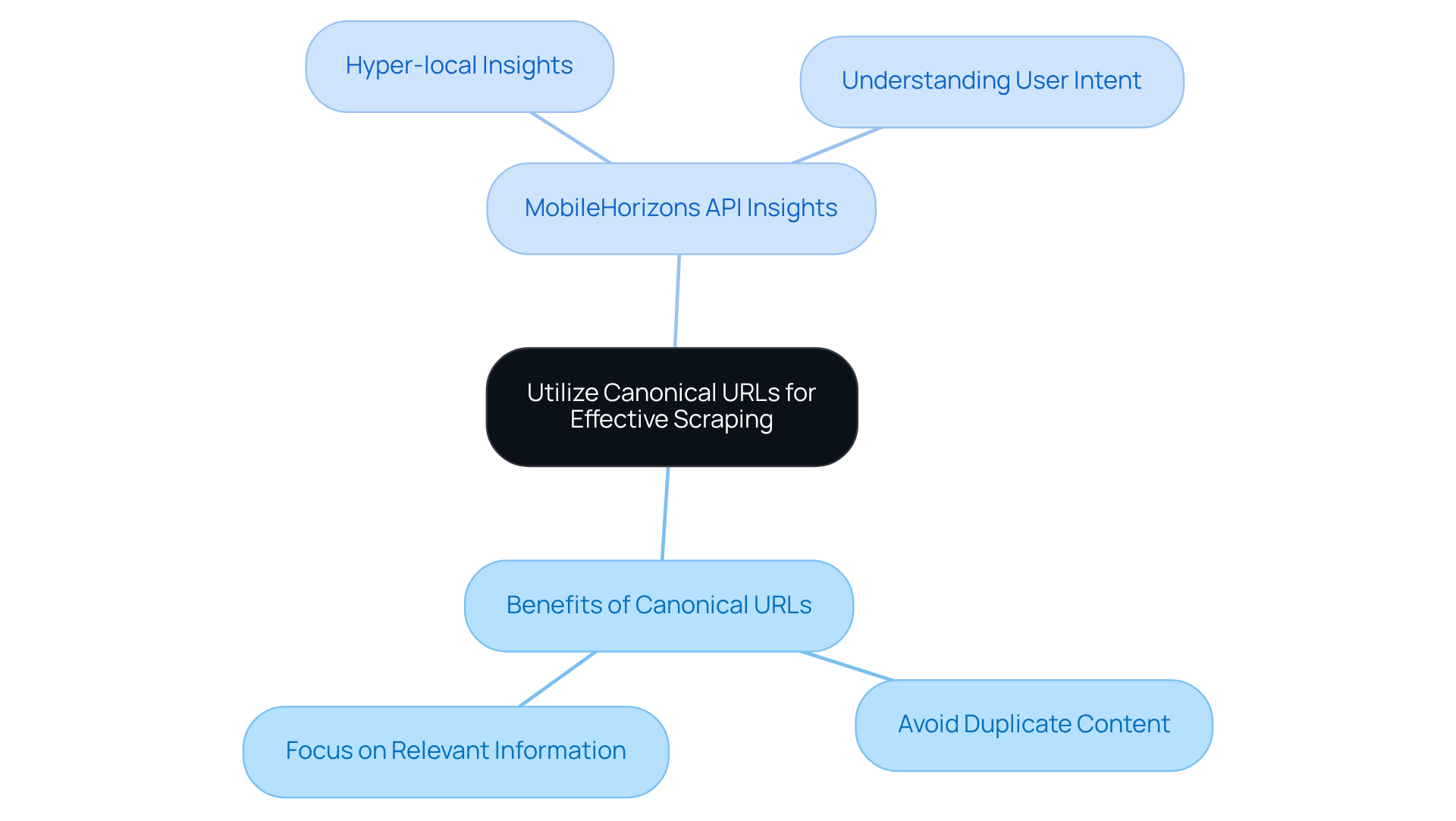

With the introduction of the MobileHorizons API, the platform offers hyper-local insights from native mobile applications, allowing advertisers to access customised information feeds that refine their strategies. This API adeptly manages semantic search and user intent, equipping marketers with more relevant and context-aware information.

With over 14 years of industry experience, Appstractor has established itself as a trusted partner for businesses aiming to harness information mining. Data-driven decision-making is projected to be a critical factor for 58% of businesses by 2026, and 71% of top-performing firms already use predictive analytics to elevate their campaigns, so reliable data collection built on web scraping best practices matters for staying competitive. By integrating Appstractor's features, including the MobileHorizons API, marketers can improve their information extraction processes and support business growth through informed decision-making.

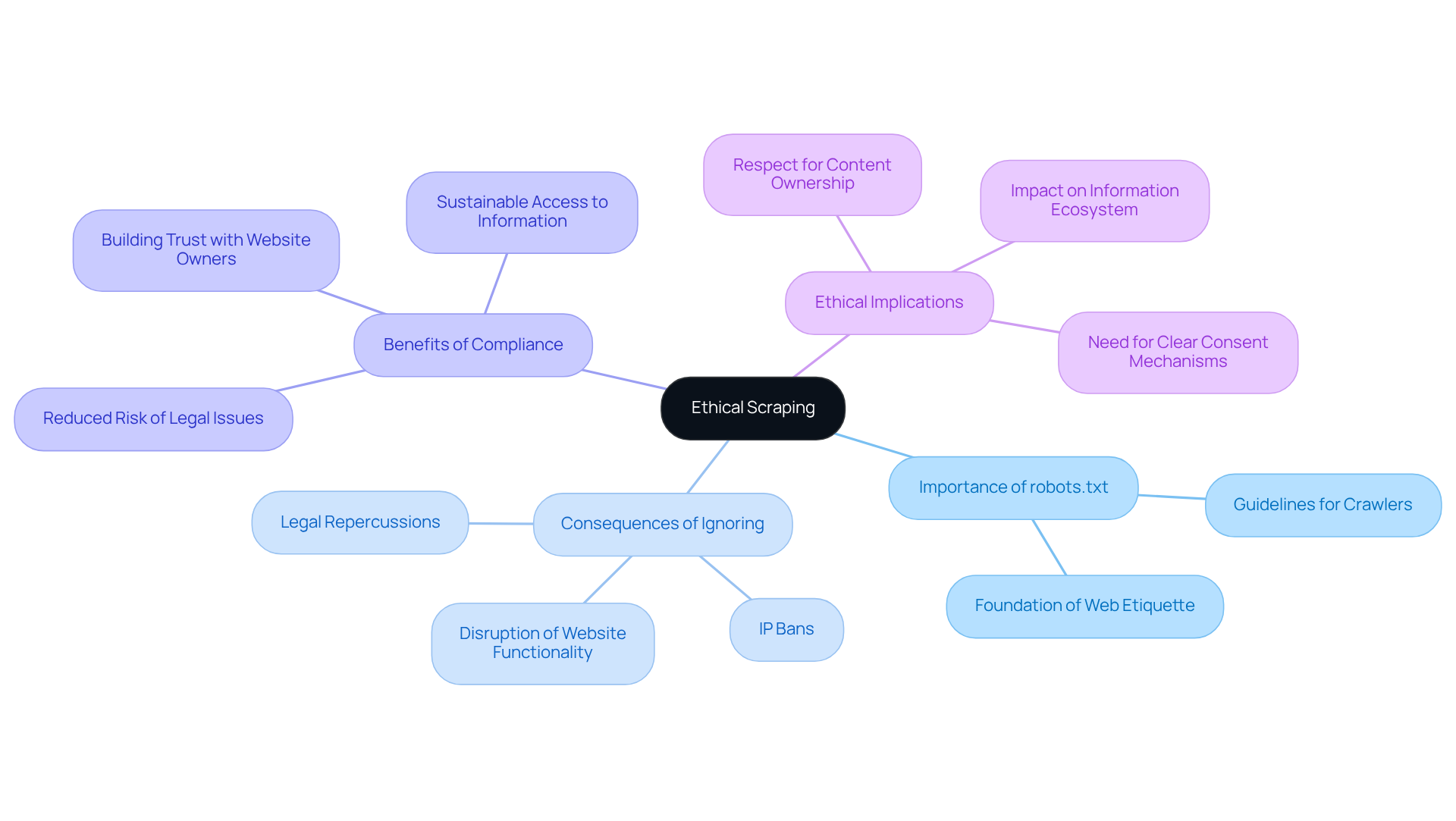

Respect Robots.txt for Ethical Scraping

Before initiating any web data extraction project, review the robots.txt file of the target website. This file acts as a guideline for web crawlers, indicating which pages are accessible and which should be avoided. The Robots Exclusion Protocol (REP) is not itself legally binding and relies on the goodwill of scrapers for compliance, but ignoring its directives can still lead to IP bans and, in some cases, legal repercussions.

Following these directives promotes a healthier information ecosystem and minimises the risk of disrupting the website's functionality. Businesses that prioritise compliance with robots.txt reduce the likelihood of legal issues and build trust with website owners, which helps protect their long-term access to information.
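As a minimal sketch of such a check, Python's standard `urllib.robotparser` module can evaluate a robots.txt file before any page is requested. The robots.txt content, the bot name, and the `example.com` URLs below are hypothetical placeholders, not taken from any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. A real crawler would download this file
# from the target site's /robots.txt before requesting any other page.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

USER_AGENT = "example-marketing-bot"  # hypothetical crawler name

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Ask the parser whether each URL may be fetched by this user agent.
allowed_public = parser.can_fetch(USER_AGENT, "https://example.com/products/")
allowed_private = parser.can_fetch(USER_AGENT, "https://example.com/private/report")

# Some sites also publish a requested delay (in seconds) between requests.
crawl_delay = parser.crawl_delay(USER_AGENT)

print(allowed_public, allowed_private, crawl_delay)
```

A scraper built this way would skip any URL for which `can_fetch` returns `False` and would treat the crawl delay, when present, as a minimum gap between requests.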

For instance, organizations that engage in ethical extraction are less prone to IP bans and can maintain stable relationships with their information sources. As the landscape of web extraction evolves, understanding the ethical implications of disregarding robots.txt becomes increasingly important, especially in light of ongoing discussions about content ownership and the use of AI in information gathering.

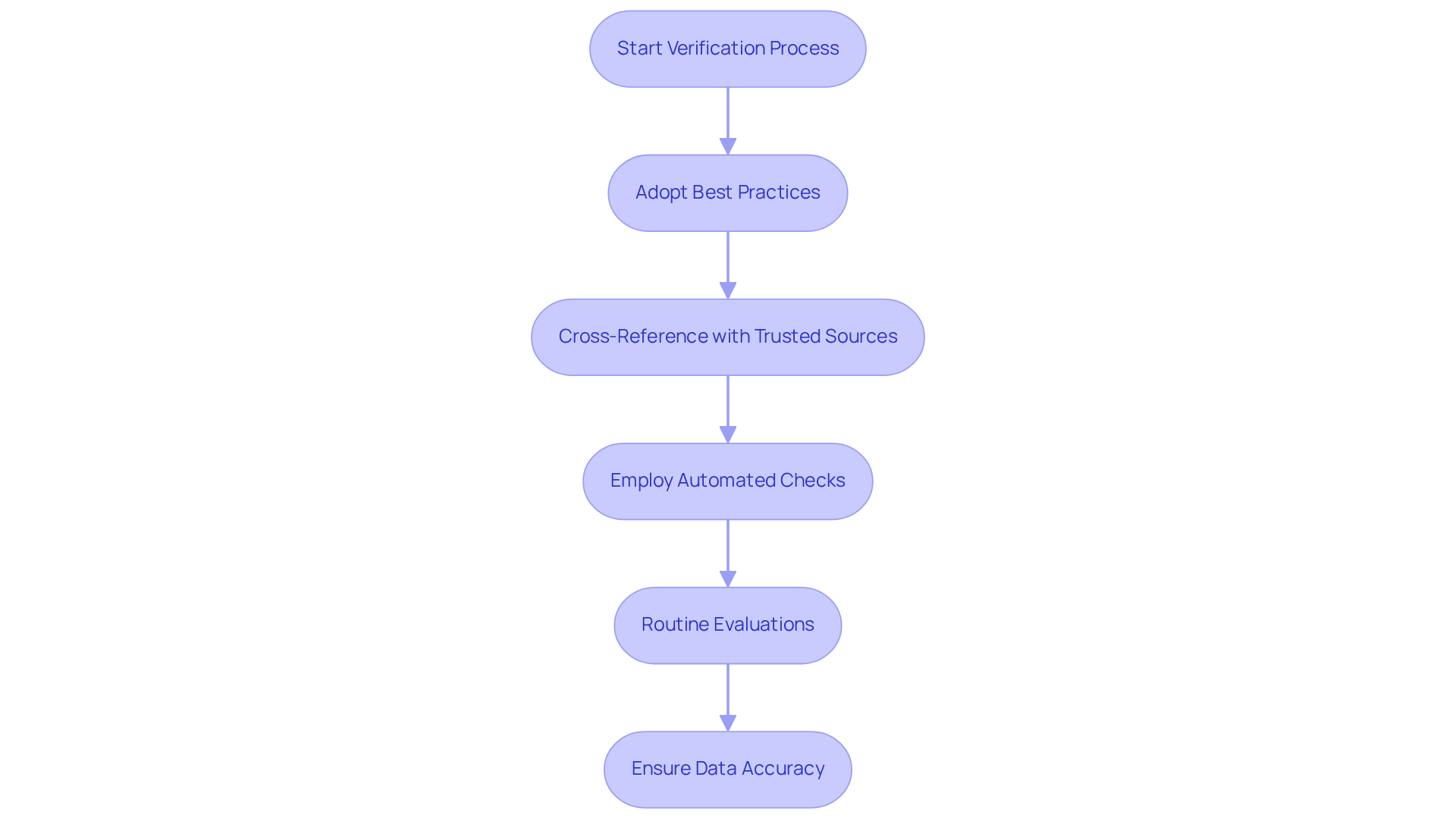

Continuously Verify Scraped Data for Accuracy

To ensure the reliability of insights obtained from web scraping, continuous verification of scraped information is essential. To identify anomalies, marketers should adopt web scraping best practices that include validation techniques such as cross-referencing with trusted sources and employing automated checks. Routine evaluations of the information can assist in preserving its precision and significance, enabling companies to make knowledgeable choices based on reliable facts.
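The sketch below illustrates one possible automated check: each scraped price is sanity-checked and then cross-referenced against a trusted source. The `PriceRecord` type, the 10% tolerance, and the sample values are assumptions made for illustration only.

```python
from dataclasses import dataclass


@dataclass
class PriceRecord:
    sku: str
    price: float


def validate(scraped, trusted, tolerance=0.10):
    """Return (sku, reason) pairs for records that fail a check."""
    issues = []
    for record in scraped:
        # Automated sanity check: a price must be positive.
        if record.price <= 0:
            issues.append((record.sku, "non-positive price"))
            continue
        # Cross-reference against the trusted source where one exists.
        reference = trusted.get(record.sku)
        if reference is None:
            issues.append((record.sku, "no trusted reference"))
        elif abs(record.price - reference) / reference > tolerance:
            issues.append((record.sku, "deviates from trusted source"))
    return issues


# Hypothetical sample data.
scraped = [
    PriceRecord("A", 19.99),
    PriceRecord("B", -1.0),
    PriceRecord("C", 50.0),
    PriceRecord("D", 10.0),
]
trusted = {"A": 20.0, "C": 30.0}

issues = validate(scraped, trusted)
for sku, reason in issues:
    print(sku, reason)
```

Flagged records can then be re-scraped or reviewed by hand rather than silently passed into reports.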

Appstractor supports this process with web extraction solutions that include rotating proxies and full-service options. These features enable scalable crawlers and ongoing monitoring. With over 3,000 businesses depending on information from more than 100,000 sources, Appstractor's stated aim is to help clients 'Get Clean Web Information, Fast.'

For instance, a financial services company that monitored compensation information observed a 30% decrease in offer rejection rates by ensuring accuracy through systematic validation. Similarly, a retail executive encountered a $500K loss due to obsolete competitor information, highlighting the importance of thorough validation practices.

As the web information strategy landscape evolves, the significance of accuracy continues to grow, making robust verification processes essential for success.

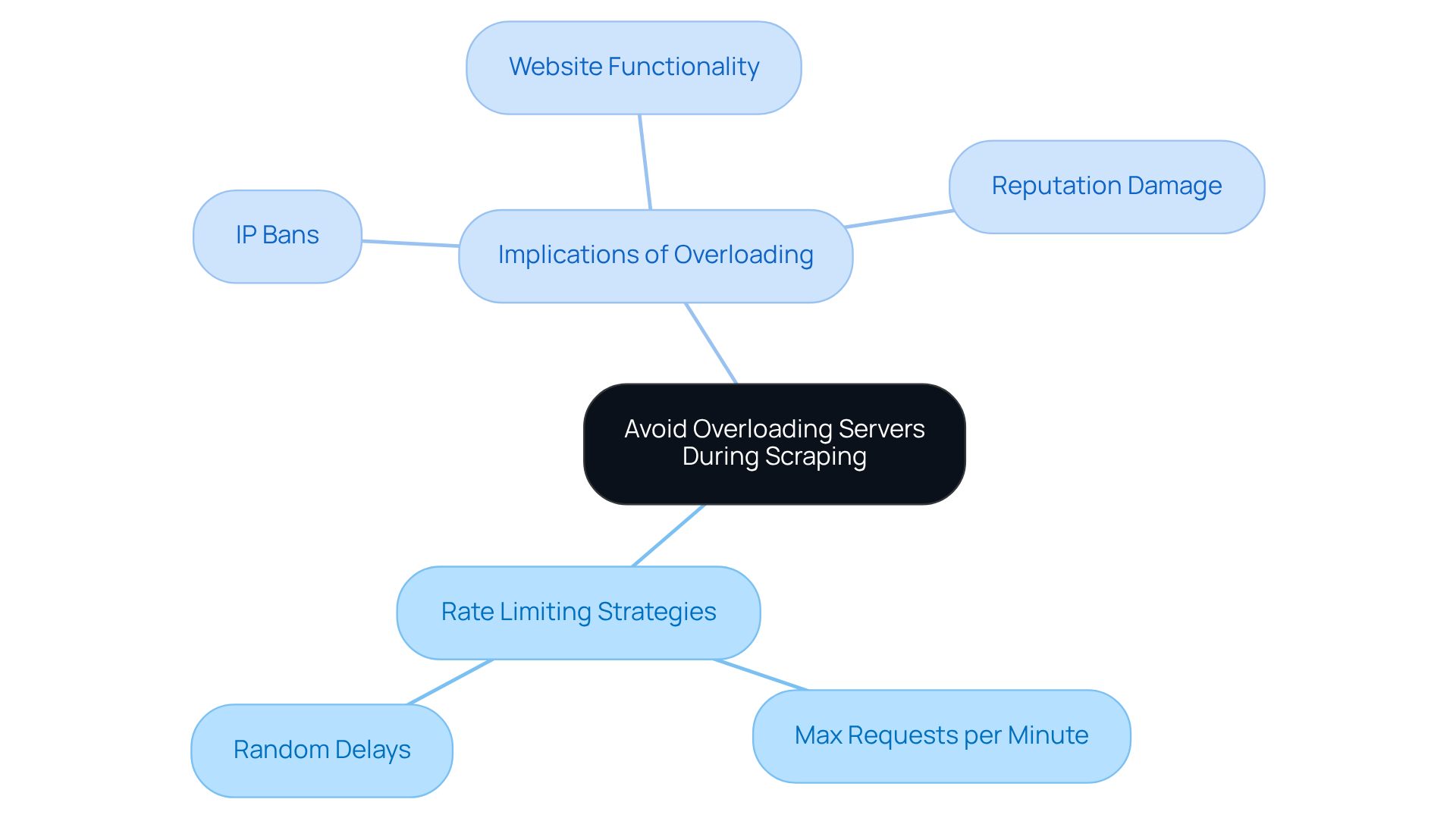

Avoid Overloading Servers During Scraping

To extract data effectively without disrupting target servers, it is crucial to avoid overwhelming them with excessive requests. Two common rate limiting strategies are:

- Setting a maximum number of requests per minute

- Introducing random delays between requests

Together, these strategies mimic human browsing behaviour. This approach helps prevent IP bans and keeps the website responsive for other users.
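The two strategies above can be combined in a small throttle, sketched here under stated assumptions: the `PoliteThrottle` class, the limit of 30 requests per minute, and the 0 to 2 second random pause are illustrative choices, not recommended values for any particular site.

```python
import random
import time


class PoliteThrottle:
    """Caps the request rate and adds a random pause between requests."""

    def __init__(self, max_per_minute, extra_delay=(0.0, 2.0),
                 sleep=time.sleep, clock=time.monotonic):
        self.min_interval = 60.0 / max_per_minute  # seconds between requests
        self.extra_delay = extra_delay             # range of the random extra pause
        self._sleep = sleep
        self._clock = clock
        self._last_request = None

    def wait(self):
        """Pause as needed before the next request; return the pause length."""
        pause = 0.0
        if self._last_request is not None:
            # Minimum gap enforces the per-minute cap; the random part
            # makes the timing less mechanical.
            target_gap = self.min_interval + random.uniform(*self.extra_delay)
            elapsed = self._clock() - self._last_request
            pause = max(0.0, target_gap - elapsed)
            if pause > 0:
                self._sleep(pause)
        self._last_request = self._clock()
        return pause


# Dry run: record the pauses instead of actually sleeping.
recorded_pauses = []
throttle = PoliteThrottle(max_per_minute=30, sleep=recorded_pauses.append)
for _ in range(4):
    throttle.wait()  # a real scraper would send one request after each wait()

print(recorded_pauses)
```

Because the random pause is only ever added on top of the minimum interval, the per-minute cap is never exceeded. In real use, the default `sleep=time.sleep` would be left in place.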

By being mindful of server load, marketers can foster positive relationships with information sources, which is essential for sustainable extraction efforts. In fact, 73% of companies utilise web extraction for market insights, emphasising the need for responsible practices to safeguard these valuable information channels.

Furthermore, excessive requests can overload a server, causing performance problems that may discourage genuine users and harm the reputation of the organisation collecting the data. Embracing web scraping best practices for rate limiting is not merely a technical requirement; it is a strategic necessity for digital marketers seeking to improve their information gathering processes.

Utilize Canonical URLs for Effective Scraping

In web harvesting, the use of canonical URLs significantly enhances the effectiveness of information extraction. Canonical URLs indicate the preferred version of a webpage, helping scrapers avoid duplicate content and focus on the most relevant information. By utilising web scraping best practices that prioritise canonical URLs, professionals can optimise their information collection processes, ensuring they acquire the most accurate and pertinent insights available.
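As an illustration, the following sketch reads the `<link rel="canonical">` element from each fetched page using Python's standard `html.parser` module and uses it to collapse duplicate URLs. The page contents and `example.com` addresses are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values and attributes.get("href"):
            self.canonical = attributes["href"]


def canonical_url(html, fetched_url):
    """Return the page's canonical URL, or the fetched URL if none is declared."""
    finder = CanonicalFinder()
    finder.feed(html)
    if finder.canonical is None:
        return fetched_url
    # The href may be relative, so resolve it against the fetched URL.
    return urljoin(fetched_url, finder.canonical)


# Hypothetical pages: two URL variants of the same product listing.
PAGES = {
    "https://example.com/shoes?utm_source=ad":
        '<html><head><link rel="canonical" href="/shoes"></head></html>',
    "https://example.com/shoes?sort=price":
        '<head><link rel="canonical" href="https://example.com/shoes"/></head>',
    "https://example.com/about":
        "<html><head><title>About</title></head></html>",
}

unique_urls = sorted({canonical_url(html, url) for url, html in PAGES.items()})
print(unique_urls)
```

Here the two tracking and sorting variants resolve to a single canonical page, so the scraper would store the listing only once.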

Moreover, leveraging Appstractor's MobileHorizons API allows marketers to gain hyper-local insights from native mobile applications. This approach not only enhances the relevance of information but also provides a comprehensive understanding of user intent and location-sensitive details, which are crucial for effective digital marketing strategies.

Handle Multiple Pages and Lists Efficiently

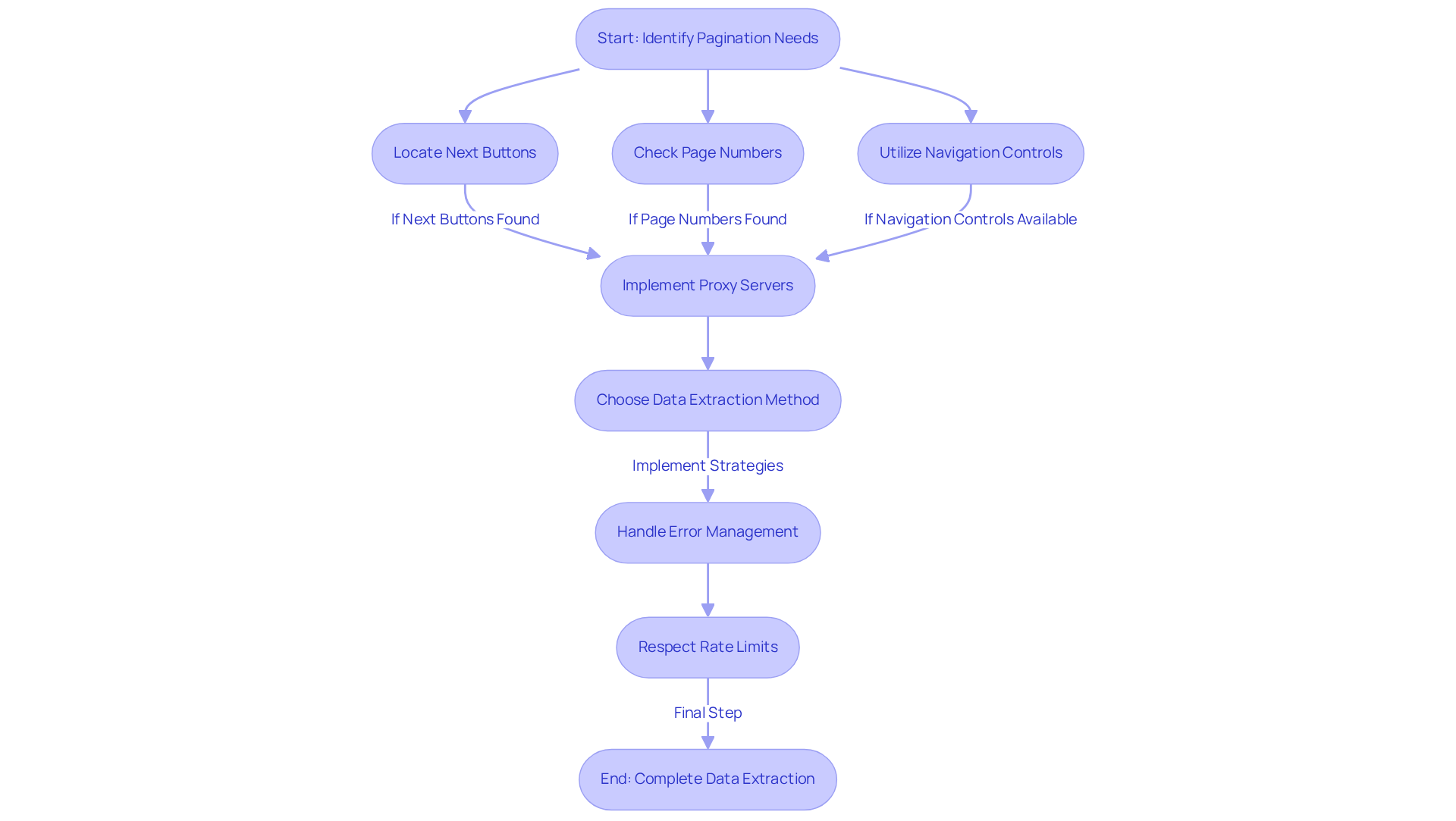

Efficient information extraction from websites with multiple pages or lists necessitates a strategic approach to pagination techniques. Marketers must prioritise the identification of 'next' buttons, page numbers, and other navigation controls to ensure smooth content traversal. Research shows that 30-70% of business-critical information is located beyond the first page, highlighting the significance of effective pagination strategies.
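A minimal sketch of following 'next' links is shown below. It assumes the site marks its pagination link with `rel="next"`, which not every site does; the `crawl` function, the in-memory `SITE` dictionary standing in for real HTTP responses, and the URLs are all hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class NextLinkFinder(HTMLParser):
    """Records the href of the first <a rel="next"> link on a page."""

    def __init__(self):
        super().__init__()
        self.next_href = None

    def handle_starttag(self, tag, attrs):
        if tag != "a" or self.next_href is not None:
            return
        attributes = dict(attrs)
        if "next" in (attributes.get("rel") or "").lower().split():
            self.next_href = attributes.get("href")


def crawl(start_url, fetch, max_pages=50):
    """Follow 'next' links from start_url; return the URLs visited in order."""
    url, seen, visited = start_url, set(), []
    # Stop on a missing link, a repeated URL (loop), or the page limit.
    while url and url not in seen and len(visited) < max_pages:
        seen.add(url)
        html = fetch(url)
        visited.append(url)
        finder = NextLinkFinder()
        finder.feed(html)
        url = urljoin(url, finder.next_href) if finder.next_href else None
    return visited


# Hypothetical three-page listing held in memory instead of fetched over HTTP.
SITE = {
    "https://example.com/list?page=1": '<a rel="next" href="?page=2">Next</a>',
    "https://example.com/list?page=2": '<a rel="next" href="/list?page=3">Next</a>',
    "https://example.com/list?page=3": "<p>End of results</p>",
}

visited = crawl("https://example.com/list?page=1", lambda url: SITE.get(url, ""))
print(visited)
```

The `seen` set and `max_pages` limit guard against pagination loops, and passing `fetch` in as a parameter leaves room for a rate-limited HTTP client in real use.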

Utilising contemporary tools, such as rotating proxy servers, can greatly enhance performance, enabling the collection of large datasets without sacrificing speed. Appstractor offers flexible data extraction methods tailored to business needs, with options for Full Service and Hybrid solutions. Additionally, user manuals and FAQs are available to assist professionals in effectively leveraging these services.

As Kevin Sahin, co-founder of ScrapingBee, notes, "Mastering web data extraction pagination is the difference between gathering just a handful of records and obtaining complete datasets that generate real business value." By mastering these pagination techniques, professionals can capture essential information across complex web structures, ensuring comprehensive insights that facilitate informed decision-making.

Addressing challenges such as error management and rate limiting is crucial for resilient extraction, allowing professionals to navigate the intricacies of web content retrieval efficiently.

Adopt Scraping APIs for Modern Solutions

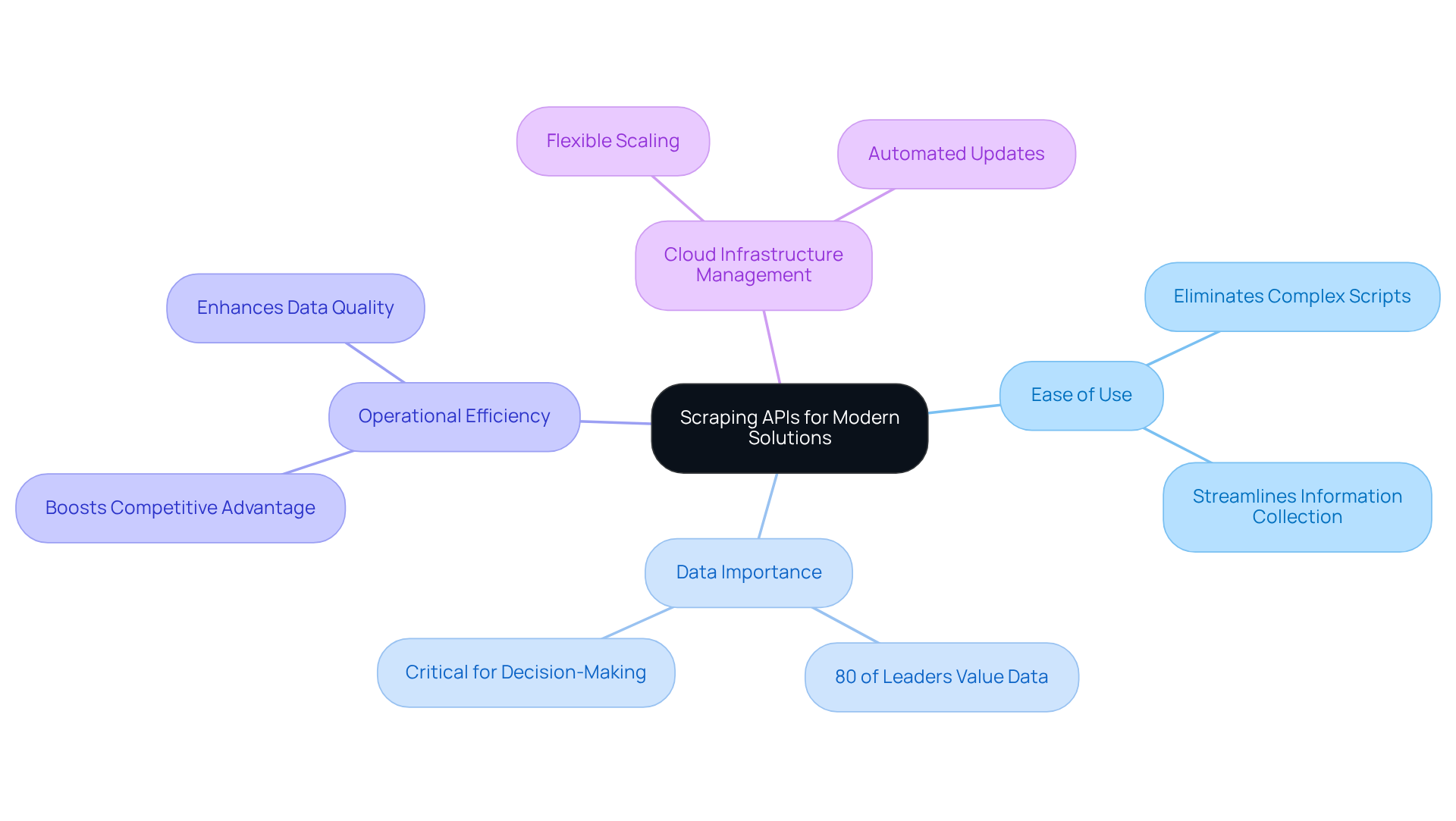

Retrieving data through APIs is a modern alternative to traditional extraction techniques and spares marketers much of their complexity. By using APIs, businesses can access structured information directly, without maintaining intricate extraction scripts. This approach streamlines collection, improves data quality, and makes it easier to stay within a website's terms of use.
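To illustrate the difference, the sketch below builds a request URL and parses a structured JSON response rather than scraping HTML. The endpoint `https://api.example.com/v1/products`, its query parameters, and the response shape are hypothetical and do not describe any real provider's API.

```python
import json
from urllib.parse import urlencode

API_BASE = "https://api.example.com/v1/products"  # hypothetical endpoint


def build_request_url(category, page=1, page_size=100):
    """Compose the query string for one page of results."""
    query = urlencode({"category": category, "page": page, "page_size": page_size})
    return f"{API_BASE}?{query}"


def parse_products(body):
    """Extract (sku, price) pairs from a JSON response body."""
    payload = json.loads(body)
    return [(item["sku"], item["price"]) for item in payload["items"]]


# Hypothetical response body, as such an API might return it.
SAMPLE_RESPONSE = (
    '{"items": [{"sku": "A1", "price": 19.99}, {"sku": "B2", "price": 5.0}],'
    ' "next_page": null}'
)

request_url = build_request_url("running shoes")
products = parse_products(SAMPLE_RESPONSE)
print(request_url)
print(products)
```

Because the fields arrive already named and typed, there are no HTML selectors to maintain when the site's layout changes.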

As of 2026, a notable 80% of business leaders acknowledge data as essential for decision-making, highlighting the critical role of integrating scraping APIs into data strategies. By embracing these modern solutions, marketers can boost operational efficiency and secure a competitive advantage in their data-driven initiatives.

Furthermore, cloud infrastructure management, such as that offered by Appstractor, can enhance these processes. With features like flexible scaling, cost management, and automated updates, Appstractor's information extraction solutions help businesses manage pricing strategies and monitor competitor activities, illustrating the practical applications of these technologies.

Implement Data Cleaning Techniques Post-Scraping

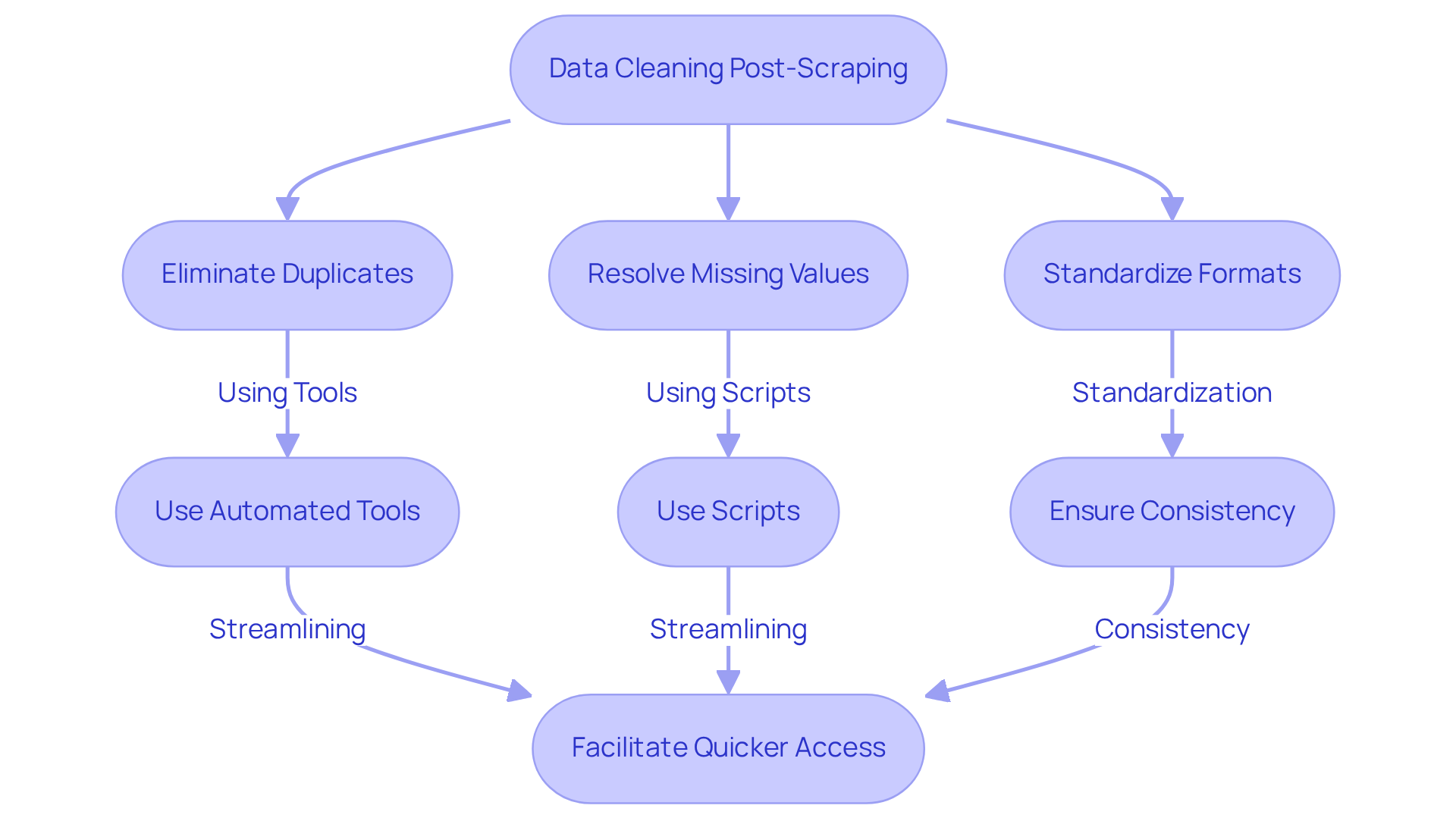

Effective cleaning techniques following data scraping are essential for transforming raw information into actionable insights. Marketers must prioritise the elimination of duplicates, the resolution of missing values, and the standardisation of formats to ensure consistency. By employing automated tools and scripts, businesses can streamline their cleaning processes, facilitating quicker access to reliable insights.
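The three cleaning steps named above can be sketched in a few lines of standard-library Python. The sample records, the field names, and the assumption that slash-separated dates are day/month/year are illustrative only.

```python
from datetime import datetime

# Assumed input date formats; slash-separated dates are treated as day/month/year.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def clean_price(text):
    """Strip currency symbols and separators; return None for a missing value."""
    digits = text.replace("$", "").replace(",", "").strip()
    return float(digits) if digits else None


def clean_date(text):
    """Standardise a date to ISO format; return None if no format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean(records):
    """Standardise formats, mark missing values as None, and drop duplicates."""
    seen, cleaned = set(), []
    for record in records:
        row = {
            "name": " ".join(record["name"].split()).title(),
            "price": clean_price(record["price"]),
            "date": clean_date(record["date"]),
        }
        key = (row["name"].lower(), row["date"])  # identity used for duplicates
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(row)
    return cleaned


# Hypothetical raw scrape output.
RAW = [
    {"name": " Acme Widget ", "price": "$1,299.00", "date": "2024-03-05"},
    {"name": "acme widget", "price": "1299", "date": "05/03/2024"},
    {"name": "Beta Gadget", "price": "", "date": "2024-03-06"},
]

cleaned = clean(RAW)
for row in cleaned:
    print(row)
```

Missing prices are kept as `None` rather than guessed, so downstream analysis can decide whether to exclude or re-scrape those records.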

Utilising Appstractor's rotating proxies and full-service options enhances information extraction capabilities, ensuring that the cleaned data is both reliable and comprehensive. To maximise these benefits, advertisers should regularly evaluate their data cleansing strategies and adjust them based on the insights gained.

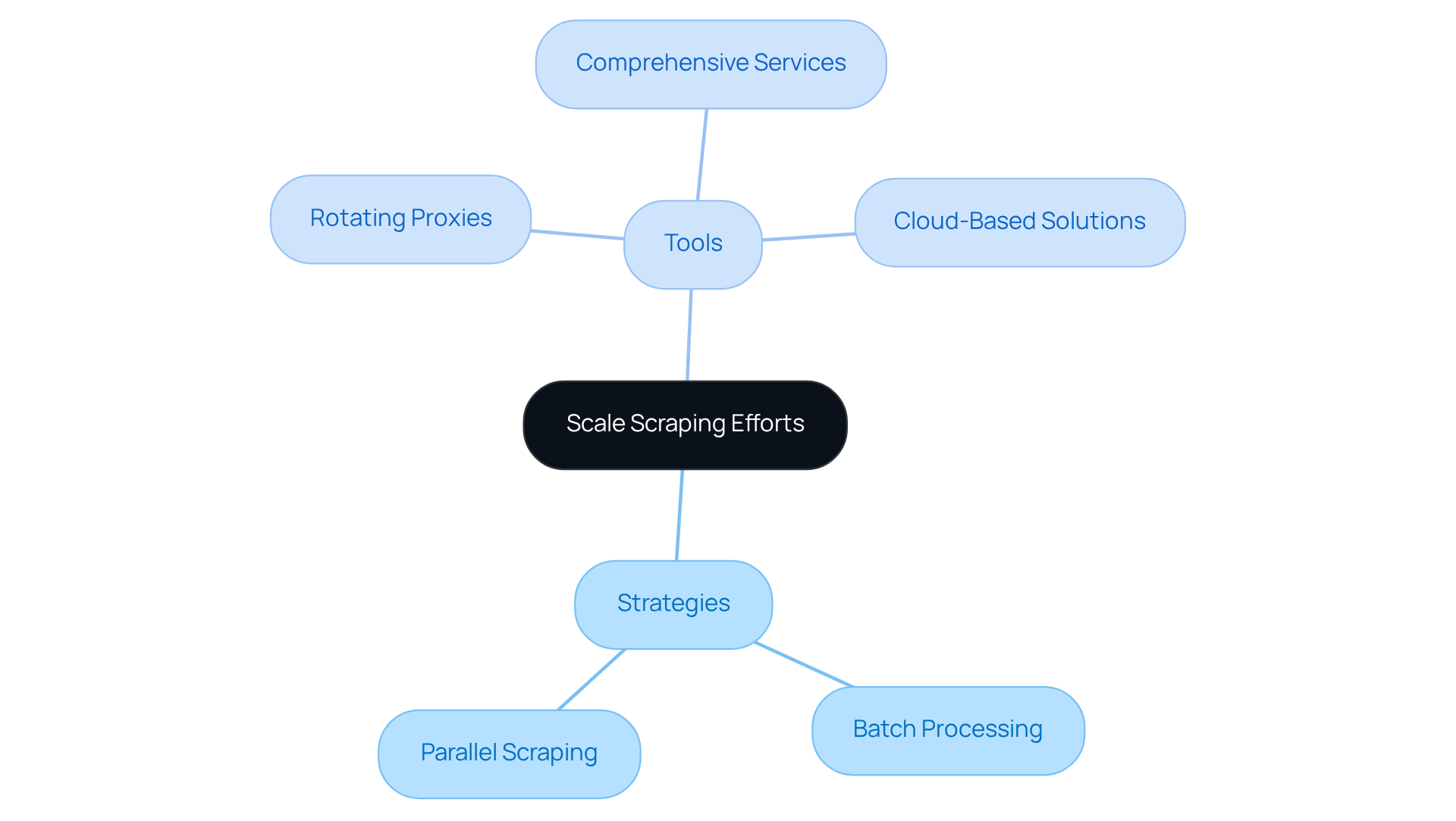

Scale Scraping Efforts Beyond Single Pages

To enhance information gathering, marketers should expand their extraction efforts beyond individual pages. Two strategies help collect information from multiple sources simultaneously:

- Batch processing

- Parallel scraping
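Batch processing and parallel scraping can be combined as in the sketch below, which uses Python's standard `concurrent.futures` thread pool. The `scrape_all` helper, the batch and worker sizes, and the stand-in `fake_fetch` function are assumptions for illustration; a real scraper would pass in a rate-limited HTTP fetch function.

```python
from concurrent.futures import ThreadPoolExecutor


def batched(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def scrape_all(urls, fetch, batch_size=4, workers=4):
    """Fetch URLs batch by batch, running each batch's requests in parallel."""
    results = {}
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for batch in batched(urls, batch_size):
            # pool.map returns results in the same order as the input batch.
            for url, body in zip(batch, pool.map(fetch, batch)):
                results[url] = body
    return results


def fake_fetch(url):
    """Stand-in for a real HTTP request, so the sketch runs offline."""
    return f"<html>{url}</html>"


urls = [f"https://example.com/page/{n}" for n in range(10)]
results = scrape_all(urls, fake_fetch)
print(len(results))
```

Processing one batch at a time keeps the number of in-flight requests bounded, which makes it easier to stay within the server-load limits discussed earlier.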

Employing rotating proxies and comprehensive services can significantly improve these initiatives, offering dependable and swift access to clean online information. Additionally, cloud-based solutions can further improve scalability, allowing businesses to manage larger datasets without compromising performance.

Appstractor's MobileHorizons API reveals hyper-local insights from native mobile applications, enabling professionals to gather and examine tailored information that aligns with their business goals.

By adopting these strategies, marketers can ensure they capture extensive information that drives effective decision-making.

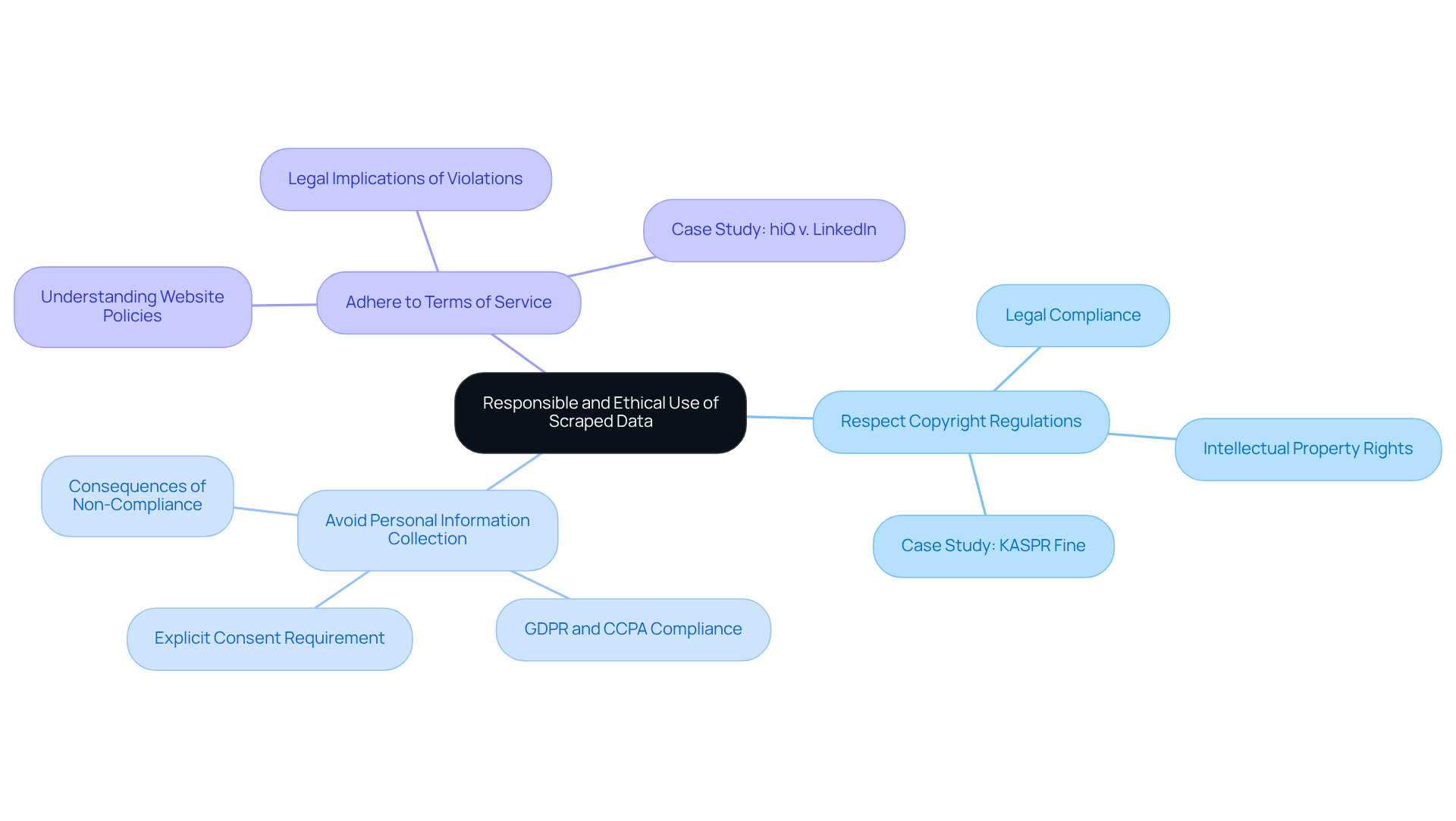

Use Scraped Data Responsibly and Ethically

Marketers must prioritise the responsible and ethical use of gathered information to comply with legal standards and protect their brand's reputation. In practice, this involves:

- Respecting copyright regulations

- Avoiding the collection of personal information without explicit consent

- Adhering to the terms of service of the websites being scraped

By adopting ethical data practices, businesses can foster trust with their audience and ensure that their data collection efforts strengthen their marketing strategies. As the legal landscape evolves, particularly with the upcoming enforcement of the EU AI Act in 2026, maintaining compliance will be crucial for marketers. Non-compliance could lead to significant penalties, potentially reaching €35 million or 7% of global revenue, thereby jeopardising brand integrity.

The recent fine of €200,000 imposed by the French CNIL on KASPR for collecting publicly available LinkedIn profiles underscores the importance of adhering to these standards. With the global web scraping industry projected to reach $5 billion by 2025, adhering to web scraping best practices will be essential for businesses to thrive in this growing market.

Conclusion

By implementing the essential web scraping best practices outlined in this article, digital marketers can significantly enhance their data extraction strategies. These practices emphasise ethical scraping, accurate data verification, and efficient information gathering, streamlining operations while ensuring compliance with legal standards. The integration of advanced tools like Appstractor empowers marketers to extract valuable insights while maintaining the integrity of their data collection methods.

Key insights discussed include:

- The necessity of respecting robots.txt for ethical scraping.

- The critical role of continuous data verification.

- The importance of employing canonical URLs to optimise information retrieval.

Additionally, strategies for managing server load and utilising scraping APIs are crucial for effective web scraping. By adopting these practices, marketers can navigate the complexities of data extraction while fostering trust and maintaining strong relationships with information sources.

As the landscape of digital marketing evolves, the significance of responsible data use cannot be overstated. Embracing these web scraping best practices will enhance marketing strategies and safeguard brands against potential legal repercussions. Marketers are encouraged to stay informed about the latest developments in web scraping and continuously refine their approaches to remain at the forefront of data-driven decision-making.

Frequently Asked Questions

What services does Appstractor provide for data mining?

Appstractor offers advanced information mining solutions that help digital marketers extract actionable insights from extensive datasets. The platform automates information extraction, ensuring efficient collection of clean and relevant details.

How does Appstractor enhance the quality of gathered content?

The platform utilises rotating proxies and robust data cleansing features to significantly improve the quality of the collected content, making it essential for market research and competitive analysis.

What is the MobileHorizons API, and what does it offer?

The MobileHorizons API provides hyper-local insights from native mobile applications, allowing advertisers to access customised information feeds that refine their strategies. It manages semantic search and user intent for more relevant and context-aware information.

Why is data-driven decision-making important for businesses?

Data-driven decision-making is projected to be a critical factor for 58% of businesses by 2026, making solutions like Appstractor vital for maintaining competitiveness in a rapidly changing digital landscape.

Which web scraping best practices are mentioned in the article?

Best practices include reviewing the robots.txt file of the target website, adhering to ethical scraping guidelines, and continuously verifying scraped data for accuracy to minimise legal risks and maintain stable relationships with information sources.

Why is it important to respect the robots.txt file during web scraping?

The robots.txt file indicates which pages are accessible to web crawlers. Ignoring it can lead to IP bans and legal repercussions. Compliance promotes a healthier information ecosystem and builds trust with website owners.

How can businesses ensure the accuracy of scraped data?

Businesses can ensure accuracy by continuously verifying scraped information through validation techniques such as cross-referencing with trusted sources and employing automated checks.

What are the consequences of not verifying scraped data?

Failing to verify scraped data can lead to significant issues, such as outdated or incorrect information, which can result in financial losses and poor decision-making.

How does Appstractor support businesses in data extraction?

Appstractor provides efficient web extraction solutions, including rotating proxies and full-service options, enabling scalable crawlers and ongoing monitoring for over 3,000 businesses relying on information from more than 100,000 sources.

List of Sources

- Leverage Appstractor for Advanced Data Mining Solutions

- 2026 Data Management Trends and What They Mean For You | Alation (https://alation.com/blog/data-management-trends)

- Data-Driven Marketing Statistics 2025: 93+ Stats & Insights [Expert Analysis] - Marketing LTB (https://marketingltb.com/blog/statistics/data-driven-marketing-statistics)

- Data Mining Tools Market is Likely to Increase At a Significantly High CAGR During Forecast Period 2030 | IBM, SAS Institute, Oracle, Microsoft, Teradata, MathWorks (https://openpr.com/news/3792112/data-mining-tools-market-is-likely-to-increase-at-a-significantly)

- 180+ Powerful Digital Marketing Statistics for 2026 - WordStream (https://wordstream.com/blog/ws/2022/04/19/digital-marketing-statistics)

- 10 Eye Opening AI Marketing Stats to Take Into 2026 | Digital Marketing Institute (https://digitalmarketinginstitute.com/blog/10-eye-opening-ai-marketing-stats-in-2025)

- Respect Robots.txt for Ethical Scraping

- Robots.txt Is Having a Moment: Here's Why We Should Care (https://techpolicy.press/robotstxt-is-having-a-moment-heres-why-we-should-care)

- Scrapers selectively respect robots.txt directives: evidence from a large-scale empirical study (https://arxiv.org/html/2505.21733v1)

- Artificial intelligence web crawlers are running amok (https://npr.org/2024/07/05/nx-s1-5026932/artificial-intelligence-web-crawlers-are-running-amok)

- Ethical Web Scraping: Principles and Practices (https://datacamp.com/blog/ethical-web-scraping)

- News companies are doubling down to fight against AI Web scrapers (https://inma.org/blogs/Product-and-Tech/post.cfm/news-companies-are-doubling-down-to-fight-against-ai-web-scrapers)

- Continuously Verify Scraped Data for Accuracy

- Web Scraping Use Cases: The C-Suite Strategic Guide For 2026 (https://tezeract.ai/web-scraping-use-cases)

- Data Quality Checklist for Web Scraping: 15-Point Framework (https://tendem.ai/blog/data-quality-checklist-web-scraping)

- How to Verify Scraped Web Data in 2026: The Complete Expert Guide (https://apexverify.com/blog/guides-and-tutorials/how-to-verify-scraped-web-data-in-2026-the-complete-expert-guide)

- How to Fix Inaccurate Web Scraping Data: 3 Proven Methods (2026) (https://ficstar.com/how-to-fix-inaccurate-web-scraping-data)

- Why Data Validation Techniques in Web Scraping Crucial (https://actowizsolutions.com/why-data-validation-techniques-in-web-scraping-crucial.php)

- Avoid Overloading Servers During Scraping

- News publishers limit Internet Archive access due to AI scraping concerns (https://niemanlab.org/2026/01/news-publishers-limit-internet-archive-access-due-to-ai-scraping-concerns)

- Understanding Web Scraping Legality: Global Insights & Stats (https://browsercat.com/post/web-scraping-legality-global-statistics)

- Rise of AI crawlers and bots causing web traffic havoc (https://computerworld.com/article/4044127/rise-of-ai-crawlers-and-bots-causing-web-traffic-havoc.html)

- Content Scraping: Causes 6% loss in over half of companies (https://kasada.io/use-cases/content-scraping)

- How can excessive or malicious web scraping harm the interests of content providers? - Tencent Cloud (https://tencentcloud.com/techpedia/132537)

- Handle Multiple Pages and Lists Efficiently

- Handling Pagination While Web Scraping in 2026 (https://brightdata.com/blog/web-data/pagination-web-scraping)

- How to handle pagination in web scraping? (https://datahen.com/blog/how-to-handle-pagination-in-web-scraping)

- How to Use Web Scraper Pagination for Efficient Extraction (https://thunderbit.com/blog/web-scraper-pagination-efficient-extraction)

- How to Master Web Scraping Pagination: Hidden Techniques Experts Use (https://scrapingbee.com/blog/web-scraping-pagination)

- How to Handle Pagination in Web Scraping (2026 Guide) (https://dev.to/n3x1s/how-to-handle-pagination-in-web-scraping-2026-guide-2fhp)

- Adopt Scraping APIs for Modern Solutions

- API vs Scraping: What’s Better for Your Business Data Needs? (https://linkedin.com/pulse/api-vs-scraping-whats-better-your-business-data-needs-tagx-pfobc)

- The 8 Best Web Scraping APIs in 2026: Ranked & Tested (https://brightdata.com/blog/web-data/best-web-scraping-apis)

- Web Scraping Report 2026: Market Trends, Growth & Key Insights (https://promptcloud.com/blog/state-of-web-scraping-2026-report)

- E-Commerce Data Scraping Trends: Insights for 2024–2030 (https://realdataapi.com/e-commerce-data-scraping-trends.php)

- Web data for scraping developers in 2026: AI fuels the agentic future (https://zyte.com/blog/web-data-for-scraping-developers)

- Implement Data Cleaning Techniques Post-Scraping

- Essential Data Cleaning Techniques for Improved Data Quality (https://acceldata.io/blog/data-cleaning-made-easy-with-tools-techniques-and-best-practices)

- 10 Essential Data Cleaning Techniques for Accurate Analysis (Best Practices) - Numerous.ai (https://numerous.ai/blog/data-cleaning-techniques)

- 19 Inspirational Quotes About Data: Wisdom for a Data-Driven World (https://medium.com/@meghrajp008/19-inspirational-quotes-about-data-wisdom-for-a-data-driven-world-fcfbe44c496a)

- Cleaning Scraped Data: From Raw to Ready-to-Use [2026 Guide] (https://tendem.ai/blog/cleaning-scraped-data-guide)

- Scale Scraping Efforts Beyond Single Pages

- 2026 Web Scraping Industry Report - PDF (https://zyte.com/whitepaper-ebook/2026-web-scraping-industry-report)

- State of Web Scraping 2026: Trends, Challenges & What’s Next (https://browserless.io/blog/state-of-web-scraping-2026)

- Large-Scale Web Scraping: A Complete 2026 Guide (https://crawlbase.com/blog/large-scale-web-scraping)

- Web Scraping Statistics & Trends You Need to Know in 2025 (https://kanhasoft.com/blog/web-scraping-statistics-trends-you-need-to-know-in-2025)

- Use Scraped Data Responsibly and Ethically

- Legal & Ethical Web Scraping- Compliance Guide 2025 (https://forage.ai/blog/legal-and-ethical-issues-in-web-scraping-what-you-need-to-know)

- Web Scraping Compliance in 2026: Legal Frameworks, Ethical Proxy Use, and What Enterprises Must Know (https://illusory.io/blog/web-scraping-compliance-2026-legal-ethical-proxy)

- Ethical AI Scraping in 2026: Navigating the Legal Landscape | Use Apify (https://use-apify.com/blog/web-scraping-legal-landscape-2026)

- Data Privacy And Ownership To Remain Key Concerns In Web Scraping Industry Next Year (https://forbes.com/sites/garydrenik/2023/12/18/data-privacy-and-ownership-to-remain-key-concerns-in-web-scraping-industry-next-year)